\n

## Diagram: Large Language Model Interaction

### Overview

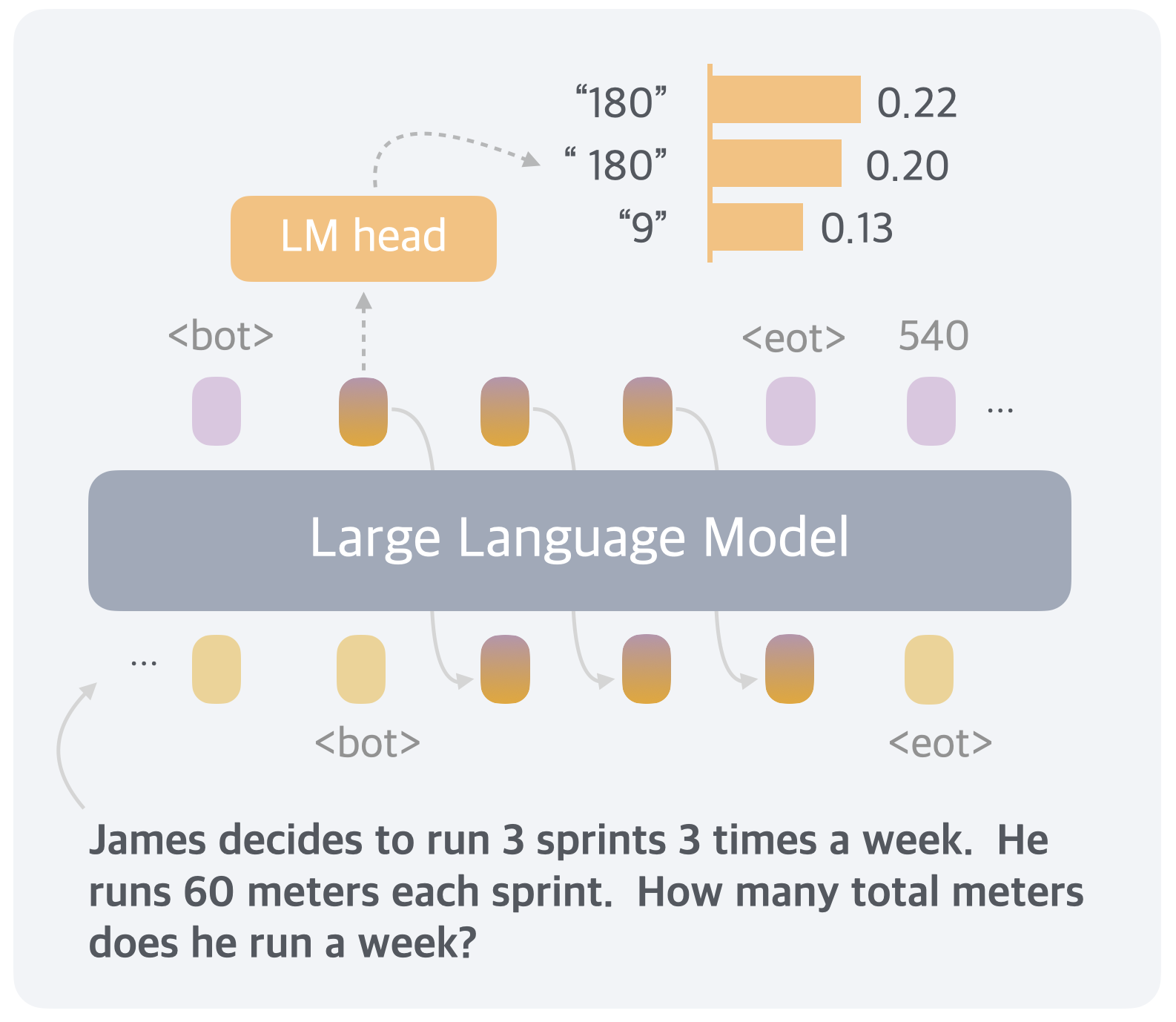

This diagram illustrates the interaction between a Large Language Model (LLM) and an "LM head," showing token processing and associated probabilities. The diagram depicts a simplified flow of information, with input text being processed by the LLM and output generated via the LM head. A specific example question is provided at the bottom to contextualize the model's potential application.

### Components/Axes

The diagram consists of the following key components:

* **Large Language Model (LLM):** A large, light-grey rectangular area representing the core of the model.

* **LM head:** A yellow rectangular area positioned above the LLM, representing a processing unit.

* **Tokens:** Represented as colored circles within the LLM and connected to the LM head via a dashed arrow. Tokens include `<bot>`, `<eot>`, "180", and "9".

* **Probabilities:** A vertical bar chart on the right side of the diagram, displaying probabilities associated with specific tokens. The axis is not explicitly labeled, but it represents probability values.

* **Input Text:** "James decides to run 3 sprints 3 times a week. He runs 60 meters each sprint. How many total meters does he run a week?" positioned below the LLM.

* **Ellipsis (...):** Used to indicate continuation of token sequences.

### Detailed Analysis or Content Details

The diagram shows the following specific data points:

* **Token "180":** Associated with a probability of 0.22 (represented by a light-orange bar).

* **Token "180":** Associated with a probability of 0.20 (represented by a darker-orange bar).

* **Token "9":** Associated with a probability of 0.13 (represented by a brown bar).

* **Token `<eot>`:** Associated with a probability of 540 (represented by a purple bar).

* **Tokens within LLM:** The LLM contains a sequence of tokens, including `<bot>`, several intermediate tokens (represented by orange circles), and `<eot>`. The sequence is truncated with ellipsis on both sides.

* **LM Head Connection:** A dashed arrow originates from the `<bot>` token within the LLM and points towards the LM head.

### Key Observations

* The probabilities associated with the tokens vary significantly, with `<eot>` having a much higher value (540) than the others. This could indicate the end-of-transmission token is highly probable in this context.

* The presence of two "180" tokens with different probabilities suggests potential ambiguity or multiple possible interpretations.

* The example question at the bottom is a simple arithmetic problem, suggesting the LLM could be used for question answering.

### Interpretation

The diagram illustrates a simplified view of how a Large Language Model processes input and generates output. The LLM breaks down the input text into tokens, and the LM head assigns probabilities to these tokens. The higher the probability, the more likely the token is to be selected as part of the output. The example question demonstrates a potential application of the LLM in solving simple reasoning tasks. The large probability value associated with `<eot>` suggests the model is likely to signal the end of its response. The differing probabilities for the same token ("180") could indicate the model is considering multiple possible interpretations or continuations of the input sequence. The diagram doesn't provide enough information to determine the exact nature of the LLM or the specific task it is performing, but it offers a glimpse into the token-based processing that underlies its functionality.