## System Diagram: Privacy-Preserving Robust Model Aggregation

### Overview

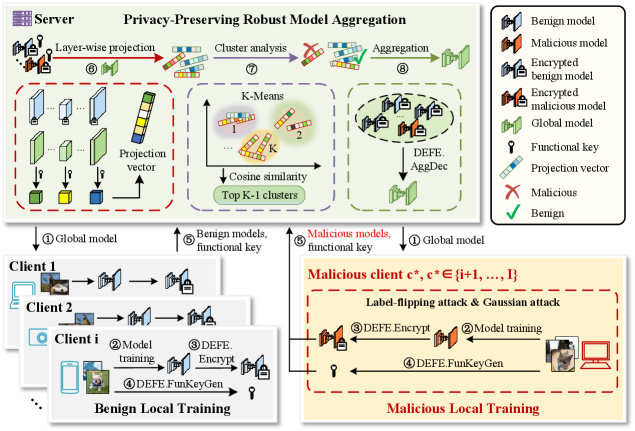

This system diagram illustrates a privacy-preserving robust model aggregation scheme for federated learning. It shows a server interacting with multiple clients, both benign and malicious, through numbered steps (1) to (8): global model distribution, local training, encryption, functional key generation, upload to the server, layer-wise projection, cluster analysis, and aggregation.

### Components/Axes

* **Title:** Privacy-Preserving Robust Model Aggregation

* **Server:** Represents the central server in the federated learning system.

* **Clients:** Represent individual clients participating in the training process. There are benign clients and malicious clients.

* **Benign Local Training:** Represents the process of training models on benign clients.

* **Malicious Local Training:** Represents the process of training models on malicious clients, including attack mechanisms.

* **Legend (Top-Right):**

    * Benign model (Blue house icon)

    * Malicious model (Red house icon)

    * Encrypted benign model (Blue house icon with lock)

    * Encrypted malicious model (Red house icon with lock)

    * Global model (Green house icon)

    * Functional key (Key icon)

    * Projection vector (Blue/White bars)

    * Malicious (Red X)

    * Benign (Green Checkmark)

### Detailed Analysis

**Server-Side Processing (Top):**

1. **Layer-wise projection (6):** The server applies layer-wise projection to the model updates it receives from the clients.

    * Input: Model updates from clients.

    * Output: Projected model updates.

2. **Cluster analysis (7):** The server clusters the projected model updates with K-Means.

    * Input: Projected model updates.

    * Output: Clusters of model updates, labeled 1, 2, ..., K.

    * Cosine similarity is used to determine cluster membership.

    * The top K-1 clusters are selected.

3. **Aggregation (8):** The server aggregates the selected clusters using DEFE.AggDec.

    * Input: Selected clusters.

    * Output: Updated global model.
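The server-side steps (6) to (8) can be sketched in plaintext as follows. This is a minimal illustration, not the scheme's implementation: it omits DEFE encryption entirely, and the function names (`project_layerwise`, `kmeans_cosine`, `robust_aggregate`), the inner-product form of the projection, the farthest-first centroid initialization, the ranking of clusters by size, and plain averaging are all assumptions that the diagram does not specify.

```python
import math
from collections import Counter


def project_layerwise(update, projection):
    """Reduce each layer to one scalar via an inner product (assumed form)."""
    return [sum(w * p for w, p in zip(layer, proj))
            for layer, proj in zip(update, projection)]


def normalize(vec):
    """Scale a vector to unit length so dot products equal cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def cosine(a, b):
    """Cosine similarity of two unit vectors."""
    return sum(x * y for x, y in zip(a, b))


def kmeans_cosine(points, k, iters=20):
    """K-Means on unit vectors, assigning by highest cosine similarity."""
    # Deterministic farthest-first initialization (an assumption of this sketch).
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(
            min(points, key=lambda p: max(cosine(p, c) for c in centroids)))
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: pick the centroid with the highest cosine similarity.
        labels = [max(range(k), key=lambda c: cosine(p, centroids[c]))
                  for p in points]
        # Update step: re-center each non-empty cluster and renormalize.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                mean = [sum(col) / len(members) for col in zip(*members)]
                centroids[c] = normalize(mean)
    return labels


def robust_aggregate(updates, projection, k):
    """Project, cluster, keep the K-1 largest clusters, and average them."""
    points = [normalize(project_layerwise(u, projection)) for u in updates]
    labels = kmeans_cosine(points, k)
    # Assumption: "top K-1" means the K-1 clusters with the most members.
    kept_clusters = {c for c, _ in Counter(labels).most_common(k - 1)}
    kept = [i for i, lab in enumerate(labels) if lab in kept_clusters]
    chosen = [updates[i] for i in kept]
    # Element-wise mean over the retained updates, layer by layer.
    global_update = [[sum(ws) / len(chosen) for ws in zip(*layers)]
                     for layers in zip(*chosen)]
    return kept, global_update
```

Here each update is a list of layers and each layer a list of floats. Dropping the smallest cluster only helps if malicious clients are a minority whose updates point in a clearly different direction from the benign ones; the diagram does not state how the scheme ranks clusters.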

**Client-Side Processing (Bottom):**

1. **Benign Local Training (Left):**

    * **Client 1, Client 2, Client i:** Represent individual benign clients.

    * **Global model (1):** The server sends the global model to the clients.

    * **Model training (2):** Clients train models locally using their data.

        * Input: Local data.

        * Output: Trained model.

    * **DEFE.Encrypt (3):** Clients encrypt their trained models using DEFE.Encrypt.

        * Input: Trained model.

        * Output: Encrypted model.

    * **DEFE.FunKeyGen (4):** Clients generate functional keys using DEFE.FunKeyGen.

        * Input: N/A

        * Output: Functional key.

    * **Benign models, functional key (5):** Clients send their encrypted models and functional keys to the server.

2. **Malicious Local Training (Right):**

    * **Malicious client c*, c* ∈ {i+1, ..., I}:** Represents a malicious client.

    * **Label-flipping attack & Gaussian attack:** The malicious client performs label-flipping and Gaussian attacks.

    * **Global model (1):** The server sends the global model to the malicious client.

    * **Model training (2):** The malicious client trains a model locally.

        * Input: Local data.

        * Output: Trained model.

    * **DEFE.Encrypt (3):** The malicious client encrypts the trained model using DEFE.Encrypt.

        * Input: Trained model.

        * Output: Encrypted model.

    * **DEFE.FunKeyGen (4):** The malicious client generates a functional key using DEFE.FunKeyGen.

        * Input: N/A

        * Output: Functional key.

    * **Malicious models, functional key (5):** The malicious client sends the encrypted model and functional key to the server.
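The two attacks named for the malicious client can be illustrated with a short sketch. The diagram gives no parameters for either attack, so the conventions here are assumptions: label flipping maps class `y` to `num_classes - 1 - y`, and the Gaussian attack replaces the trained update with zero-mean noise of standard deviation `sigma`. The function names `flip_labels` and `gaussian_update` are hypothetical.

```python
import random


def flip_labels(labels, num_classes):
    """Label-flipping (assumed convention): map class y to num_classes - 1 - y."""
    return [num_classes - 1 - y for y in labels]


def gaussian_update(shape_like, sigma=1.0, seed=0):
    """Gaussian attack (assumed form): return N(0, sigma^2) noise
    with the same layer shapes as a real update."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, sigma) for _ in layer] for layer in shape_like]
```

In this sketch, a label-flipping client would train on `flip_labels(labels, num_classes)` instead of its true labels, while a Gaussian attacker would skip training and submit `gaussian_update(...)`; in both cases the result is still encrypted and uploaded in steps (3) to (5) like a benign model.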

### Key Observations

* The diagram illustrates a federated learning system with privacy-preserving mechanisms.

* Benign clients train models and encrypt them before sending them to the server.

* Malicious clients can perform attacks such as label-flipping and Gaussian attacks.

* The server applies layer-wise projection and K-Means clustering to choose which model updates to keep, then aggregates the selected clusters with DEFE.AggDec to mitigate the impact of malicious updates.

### Interpretation

The diagram presents a system that addresses both privacy and robustness in federated learning. Clients encrypt their model updates before upload, so the server does not receive them in plaintext, which protects client data privacy. On the server, layer-wise projection followed by K-Means clustering with cosine similarity groups the updates, and selecting only the top K-1 clusters is intended to exclude updates from malicious clients, such as those produced by label-flipping or Gaussian attacks. DEFE.AggDec then aggregates the selected clusters into the updated global model.