
## Diagram: Privacy-Preserving Robust Model Aggregation

### Overview

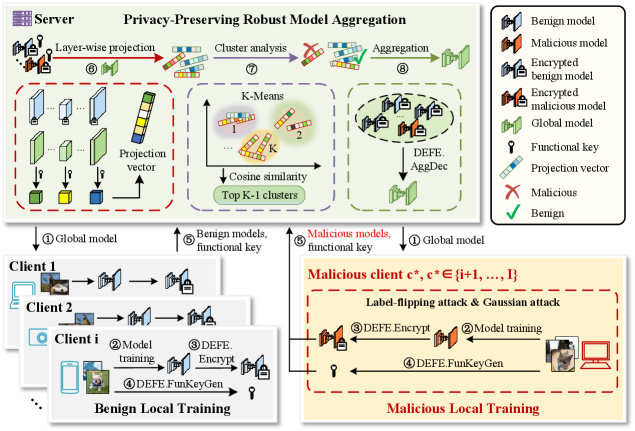

This diagram illustrates a system for privacy-preserving robust model aggregation, involving a server and multiple clients. It details the process of local model training on clients, secure aggregation on the server, and the handling of potentially malicious clients. The diagram is divided into three main sections: Server-side processing (top), Benign Local Training (bottom-left), and Malicious Local Training (bottom-right). The entire process is framed under the title "Privacy-Preserving Robust Model Aggregation".

### Components

The diagram uses several visual components to represent data and processes:

* **Models:** Represented as rectangular prisms. Color indicates status (green for benign, red for malicious, blue for global), and a lock marks an encrypted model.

* **Functional Keys:** Represented as key icons.

* **Projection Vectors:** Represented as arrows.

* **Clusters:** Represented as numbered groups (1, 2, K).

* **Arrows:** Indicate the flow of data and processes.

* **Boxes:** Delineate different stages or sections of the process.

* **Labels:** Numbered labels (1-8) guide the flow of the process.

The legend (top-right) defines the color coding:

* **Green:** Benign model

* **Red:** Malicious model

* **Green with a lock:** Encrypted benign model

* **Red with a lock:** Encrypted malicious model

* **Blue:** Global model

* **Key Icon:** Functional key

* **Arrow:** Projection vector

* **Red X:** Malicious

* **Green Circle:** Benign

### Detailed Analysis

**Server-Side Processing (Top Section):**

1. **Global Model (①):** A blue rectangular prism representing the initial global model.

2. **Layer-wise Projection (⑥):** The global model is projected into multiple projection vectors (arrows). Each projection vector is associated with a rectangular prism, colored to indicate whether it's a benign or malicious model.

3. **Cluster Analysis (⑦):** The projection vectors are fed into a K-Means clustering algorithm. The diagram shows two clusters labeled "1" and "2", with "Top K-1 clusters" indicated below. Cosine similarity is used for clustering.

4. **Aggregation (⑧):** The clusters are aggregated into a new set of models, which are then decrypted using "DEFE\_AggDec". The decrypted models are drawn in blue, marking them as the global model.
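The clustering and aggregation steps above can be sketched in plaintext as follows. This is a minimal illustration, not the diagram's actual protocol: it assumes the projected updates are available as plain vectors (the real server works on encrypted models), it reads "Top K-1 clusters" as "keep the K-1 most populated clusters", and the function names `spherical_kmeans` and `aggregate_largest_clusters` are hypothetical.

```python
import math


def normalize(v):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


def cosine(a, b):
    """For unit-length vectors, cosine similarity is just the dot product."""
    return sum(x * y for x, y in zip(a, b))


def spherical_kmeans(vectors, k, iters=20):
    """K-Means with cosine similarity; returns one cluster index per vector."""
    unit = [normalize(v) for v in vectors]
    # Deterministic farthest-first initialization (an illustrative choice).
    centroids = [unit[0]]
    while len(centroids) < k:
        farthest = min(unit, key=lambda u: max(cosine(u, c) for c in centroids))
        centroids.append(farthest)
    labels = [0] * len(unit)
    for _ in range(iters):
        # Assignment step: pick the centroid with the highest cosine similarity.
        labels = [max(range(k), key=lambda c: cosine(u, centroids[c])) for u in unit]
        # Update step: each centroid becomes the normalized mean of its members.
        for c in range(k):
            members = [u for u, lab in zip(unit, labels) if lab == c]
            if members:
                mean = [sum(col) / len(members) for col in zip(*members)]
                centroids[c] = normalize(mean)
    return labels


def aggregate_largest_clusters(updates, labels, k, keep):
    """Average the updates that fall in the `keep` most populated clusters."""
    sizes = {c: labels.count(c) for c in range(k)}
    kept = sorted(sizes, key=sizes.get, reverse=True)[:keep]
    chosen = [u for u, lab in zip(updates, labels) if lab in kept]
    return [sum(col) / len(chosen) for col in zip(*chosen)]
```

With four updates pointing roughly along `[1, 1]` and two pointing roughly along `[-1, -1]`, calling `spherical_kmeans(updates, 2)` separates the two groups, and `aggregate_largest_clusters(updates, labels, 2, 1)` averages only the majority group.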

**Benign Local Training (Bottom-Left Section):**

1. **Client 1, Client 2, Client i (①):** Represent multiple clients participating in the training process.

2. **Model Training (②):** Each client trains a local model.

3. **DEFE\_Encrypt (③):** The trained model is encrypted using "DEFE\_Encrypt".

4. **DEFE\_FunKeyGen (④):** A functional key is generated.

**Malicious Local Training (Bottom-Right Section):**

1. **Malicious client c\*, c\* ∈ {i+1, ..., I} (⑤):** Represents the malicious clients, indexed i+1 through I.

2. **Model Training (⑥):** Malicious clients train models, potentially using "Label-flipping attack & Gaussian attack".

3. **DEFE\_Encrypt (⑦):** The trained model is encrypted using "DEFE\_Encrypt".

4. **DEFE\_FunKeyGen (⑧):** A functional key is generated.
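The two attacks named in this section can be illustrated with a short sketch. The diagram does not say how the attacks are parameterized, so the code below uses common formulations as assumptions: label flipping maps class `c` to `num_classes - 1 - c`, and the Gaussian attack adds zero-mean Gaussian noise to a model update. The function names and the `sigma` parameter are hypothetical.

```python
import random


def flip_labels(labels, num_classes):
    """Label-flipping attack (one common variant): class c becomes num_classes - 1 - c."""
    return [num_classes - 1 - y for y in labels]


def gaussian_attack(update, sigma, rng):
    """Gaussian attack (one common variant): add zero-mean noise to every weight."""
    return [w + rng.gauss(0.0, sigma) for w in update]


rng = random.Random(0)
poisoned_labels = flip_labels([0, 1, 9], num_classes=10)      # [9, 8, 0]
poisoned_update = gaussian_attack([0.5, -0.2, 0.1], 1.0, rng)  # noisy copy of the update
```

A malicious client would train on `poisoned_labels` or submit `poisoned_update` in place of its honest result before the encryption step.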

### Key Observations

* The system distinguishes between benign and malicious clients.

* Encryption is used to protect the privacy of the local models.

* The server aggregates the models from multiple clients.

* K-Means clustering is used to identify and potentially mitigate the impact of malicious models.

* The "DEFE" modules (DEFE\_Encrypt, DEFE\_AggDec, DEFE\_FunKeyGen) play a crucial role in the privacy-preserving and robust aggregation process.

* The malicious client section explicitly mentions "Label-flipping attack & Gaussian attack", indicating the types of attacks the system is designed to handle.

### Interpretation

This diagram depicts a federated learning system designed to be robust against malicious participants. Clients train models locally, encrypt them, and send them to a central server for aggregation. The server uses K-Means clustering together with functional keys to identify and mitigate the influence of malicious models. The diagram does not expand the "DEFE" acronym, but the module names (Encrypt, FunKeyGen, AggDec) and the functional-key icons point to a functional-encryption scheme that protects the local models during aggregation.

The use of K-Means suggests that the server attempts to group similar models together, potentially identifying outliers (malicious models) that deviate significantly from the norm. The functional keys likely provide a mechanism for secure aggregation, ensuring that the server can combine the models without learning the individual client's data.

The explicit mention of "Label-flipping attack & Gaussian attack" indicates which adversarial behaviors the system targets: poisoned training labels and noise-corrupted updates. The diagram suggests a layered defense that combines encryption, clustering, and functional keys, so that clients can train collaboratively while their local models stay private and the aggregated model keeps its integrity.