## Diagram: Privacy-Preserving Robust Model Aggregation System

### Overview

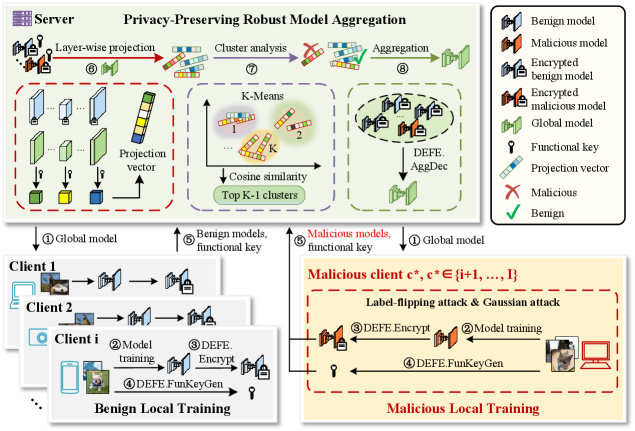

The diagram illustrates a federated learning system in which multiple clients, both benign and malicious, train local models that a central server aggregates into a global model. The system preserves privacy through encryption and functional keys, and uses robust aggregation to limit the influence of malicious clients.

### Components

1. **Server Section**:

- **Title**: "Privacy-Preserving Robust Model Aggregation"

- **Steps**:

- **6**: Layer-wise projection → Projection vectors (colored bars: green, yellow, blue).

- **7**: Cluster analysis using K-Means → Cosine similarity metric → Top K-1 clusters.

- **8**: Aggregation → DEFE.AggDec (decryption/aggregation process).

- **Legend**:

- **Benign model** (blue), **Malicious model** (orange), **Encrypted benign/malicious models** (locked icons), **Functional key** (key icon), **Projection vector** (bar chart), **Malicious** (red X), **Benign** (green check).

2. **Client Sections**:

- **Benign Clients (1, 2, i)**:

- **Steps**:

- **2**: Model training (images → screens → locked models).

- **3**: DEFE.Encrypt (encryption process).

- **4**: DEFE.FunKeyGen (functional key generation).

- **Flow**: Local training → Encryption → Functional key generation → Server.

- **Malicious Client (c*)**:

- **Label**: "Malicious client c*, c* ∈ {i+1, ..., I}"

- **Attacks**: Label-flipping attack & Gaussian attack.

- **Steps**:

- **2**: Model training (with attacks).

- **3**: DEFE.Encrypt (encryption of the malicious model).

- **4**: DEFE.FunKeyGen (functional key generation for the malicious model).

- **Flow**: Malicious training → Encryption → Functional key generation → Server.
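
The two attacks named for the malicious client can be sketched in a few lines. This is a minimal illustration, not the diagram's implementation: the function names `flip_labels` and `gaussian_attack` are hypothetical, the flip rule `y -> num_classes - 1 - y` is one common convention, and the Gaussian attack is assumed here to add zero-mean noise to the trained parameters (other variants replace the parameters with noise entirely).

```python
import random


def flip_labels(labels, num_classes):
    """Label-flipping (assumed convention): map label y to num_classes - 1 - y."""
    return [num_classes - 1 - y for y in labels]


def gaussian_attack(weights, sigma=1.0, seed=0):
    """Gaussian attack (assumed variant): add zero-mean noise to each parameter."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    return [w + rng.gauss(0.0, sigma) for w in weights]


# Poison the training labels before local training (Step 2).
labels = [0, 1, 2, 9]
flipped = flip_labels(labels, num_classes=10)

# Or perturb the trained parameters before encryption (Step 3).
weights = [0.1, -0.2, 0.3]
noisy = gaussian_attack(weights, sigma=1.0)
```

Either way, the malicious client then follows the same encryption and key-generation steps as a benign client, so the server cannot tell the two apart from the ciphertext format alone.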

### Detailed Analysis

- **Server Workflow**:

1. **Layer-wise projection** (Step 6): Each submitted model is projected layer by layer into a projection vector (colored bars). The diagram places this step in the server section.

2. **Cluster analysis** (Step 7): The server clusters the projection vectors with K-Means under a cosine similarity metric and keeps the top K-1 clusters.

3. **Aggregation** (Step 8): DEFE.AggDec decrypts and aggregates the models from the retained clusters, which limits the influence of malicious inputs.

- **Client Workflow**:

- **Benign Clients**: Train models locally, encrypt them with DEFE.Encrypt, and generate functional keys (DEFE.FunKeyGen) to enable secure aggregation.

- **Malicious Clients**: Perform label-flipping/Gaussian attacks during training, encrypt models, and generate functional keys. Their malicious models are flagged in the legend (red X).

- **Security Mechanisms**:

- **Encryption**: All models (benign/malicious) are encrypted before transmission (locked icons).

- **Functional Keys**: Generated via DEFE.FunKeyGen, used to decrypt models during aggregation.

- **Robust Aggregation**: Server focuses on top K-1 clusters to minimize the impact of malicious models.
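
Steps 6 and 7 can be sketched on plaintext toy models to show the idea. Everything below is an assumption made for illustration: the diagram does not specify how the projection is computed, so `project_layers` takes one inner product per layer against a fixed direction, and `cosine_kmeans` is a small spherical K-Means written from scratch (unit-normalized vectors, assignment by highest cosine similarity). In the actual system the projection would operate on encrypted models via functional keys, which this sketch does not attempt.

```python
import math


def project_layers(model, directions):
    """One inner product per layer; yields a vector with one entry per layer."""
    return [sum(w * d for w, d in zip(layer, direction))
            for layer, direction in zip(model, directions)]


def normalize(v):
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def cosine(a, b):
    # Inputs are unit-length, so the dot product equals the cosine similarity.
    return sum(x * y for x, y in zip(a, b))


def cosine_kmeans(vectors, k, iters=20):
    """Spherical K-Means: assign each vector to its most cosine-similar centroid."""
    points = [normalize(v) for v in vectors]
    # Deterministic farthest-point initialisation.
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(
            min(points, key=lambda p: max(cosine(p, c) for c in centroids)))
    labels = [0] * len(points)
    for _ in range(iters):
        labels = [max(range(k), key=lambda j: cosine(p, centroids[j]))
                  for p in points]
        for j in range(k):
            members = [p for p, l in zip(points, labels) if l == j]
            if members:
                mean = [sum(col) / len(members) for col in zip(*members)]
                centroids[j] = normalize(mean)
    return labels


# Toy models: three layers with two parameters each (hypothetical values).
directions = [[1.0, 0.5], [0.5, -1.0], [1.0, 1.0]]
benign_1 = [[0.20, 0.40], [0.10, -0.30], [0.50, 0.50]]
benign_2 = [[0.21, 0.39], [0.11, -0.29], [0.49, 0.51]]
benign_3 = [[0.19, 0.41], [0.09, -0.31], [0.51, 0.50]]
malicious = [[-0.20, -0.40], [-0.10, 0.30], [-0.50, -0.50]]

vectors = [project_layers(m, directions)
           for m in (benign_1, benign_2, benign_3, malicious)]
labels = cosine_kmeans(vectors, k=2)
```

In this toy setup the three benign projection vectors point in nearly the same direction, while the sign-flipped malicious model points the opposite way, so the two groups land in different clusters.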

### Key Observations

1. **Malicious Client Isolation**: Malicious clients are visually separated (orange box) and labeled with attacks (label-flipping/Gaussian).

2. **Encryption Consistency**: All models (benign/malicious) use DEFE.Encrypt, so encryption alone does not distinguish them. Malicious models are flagged during cluster analysis (Step 7), before aggregation (Step 8).

3. **Key Generation**: Functional keys (DEFE.FunKeyGen) are required for decryption in DEFE.AggDec. Malicious clients generate keys too, so filtering out their models depends on the clustering step rather than on the keys.

4. **Cluster Prioritization**: Server discards outliers (bottom clusters) via K-Means, focusing on top K-1 clusters for aggregation.
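
Cluster prioritization followed by aggregation can be sketched as below. This is an illustration under stated assumptions: the diagram does not say how clusters are ranked, so `keep_top_clusters` ranks them by size, and `average_models` is a plaintext coordinate-wise mean standing in for DEFE.AggDec, whose internals are not shown. Both function names are hypothetical.

```python
from collections import Counter


def keep_top_clusters(labels, k):
    """Return client indices in the k-1 largest clusters (size ranking is assumed)."""
    sizes = Counter(labels)
    kept = {cluster for cluster, _ in sizes.most_common(k - 1)}
    return [i for i, label in enumerate(labels) if label in kept]


def average_models(models):
    """Coordinate-wise mean of flat parameter lists (plaintext stand-in)."""
    n = len(models)
    return [sum(col) / n for col in zip(*models)]


# Cluster labels from Step 7 and flattened client models (toy values).
labels = [0, 0, 0, 1]
models = [[1.0, 2.0], [1.2, 1.8], [0.8, 2.2], [-9.0, 9.0]]

kept = keep_top_clusters(labels, k=2)          # the outlier's cluster is dropped
global_model = average_models([models[i] for i in kept])
```

With the outlier excluded, the aggregate stays close to the benign models (approximately `[1.0, 2.0]` here), whereas averaging all four clients would pull it toward the malicious update.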

### Interpretation

The system balances decentralization and security by:

- **Privacy Preservation**: Encrypting models and using functional keys to prevent exposure of raw data.

- **Robustness**: Aggregating only top clusters to mitigate malicious inputs, ensuring the global model remains accurate despite attacks.

- **Decentralization**: Clients retain control over local training; only encrypted models and functional keys are sent to the server.

The design implies a trade-off: encryption and key generation add computational overhead on every client in exchange for security. It also presents robust aggregation (clustering followed by decryption) as essential for trustworthy federated learning in adversarial environments.