## Textual Rule Set: Decision Rules for Binary Classification

### Overview

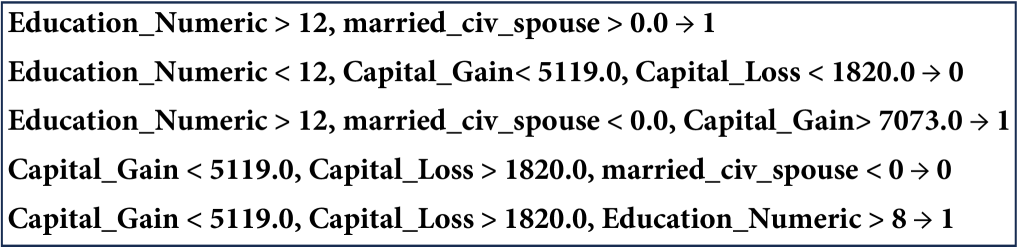

The image displays a set of five logical rules, likely extracted from a decision tree or a rule-based machine learning model. Each rule is presented as a line of text within a light gray rectangular box. The rules define conditions based on numerical features that lead to a binary outcome, indicated by an arrow (→) pointing to either `0` or `1`. The text is in a monospaced font.

### Components/Axes

This is not a chart or diagram with axes. It is a textual list of conditional statements.

- **Format**: Each line follows the pattern `[Condition 1], [Condition 2], ... → [Outcome]`, where the comma-separated conditions are joined by logical AND.

- **Variables Used**: `Education_Numeric`, `married_civ_spouse`, `Capital_Gain`, `Capital_Loss`.

- **Outcomes**: Binary values `0` and `1`.

- **Spatial Layout**: The five rules are listed vertically, left-aligned, with consistent spacing. The entire block is contained within a simple bordered box.
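To work with the rules programmatically, the textual format can be parsed into structured conditions. The following is a minimal Python sketch. It assumes every condition is a single strict `<` or `>` comparison against a number and that conditions are separated by commas. The names `Condition`, `Rule`, and `parse_rule` are hypothetical, chosen only for illustration.

```python
import re
from typing import NamedTuple


class Condition(NamedTuple):
    feature: str
    op: str  # "<" or ">"
    threshold: float


class Rule(NamedTuple):
    conditions: tuple  # tuple of Condition, joined by logical AND
    outcome: int


# "feature op number"; \s* tolerates missing spaces such as "Capital_Gain< 5119.0".
_CONDITION = re.compile(r"^\s*(\w+)\s*([<>])\s*(-?\d+(?:\.\d+)?)\s*$")


def parse_rule(line: str) -> Rule:
    """Parse one line of the form 'cond1, cond2, ... → outcome'."""
    lhs, rhs = line.split("→")
    conditions = []
    for part in lhs.split(","):
        match = _CONDITION.match(part)
        if match is None:
            raise ValueError(f"unrecognized condition: {part!r}")
        feature, op, value = match.groups()
        conditions.append(Condition(feature, op, float(value)))
    return Rule(tuple(conditions), int(rhs.strip()))


rule = parse_rule("Education_Numeric < 12, Capital_Gain< 5119.0, Capital_Loss < 1820.0 → 0")
print(rule.outcome)        # 0
print(rule.conditions[1])  # Condition(feature='Capital_Gain', op='<', threshold=5119.0)
```

The regular expression tolerates the missing space in `Capital_Gain< 5119.0`, which appears in the transcription of Rule 2, and rejects anything other than a strict `<` or `>` comparison.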

### Detailed Analysis / Content Details

Here is the precise transcription of each rule, from top to bottom:

1. **Rule 1**: `Education_Numeric > 12, married_civ_spouse > 0.0 → 1`

   * **Conditions**: `Education_Numeric` is greater than 12, AND `married_civ_spouse` is greater than 0.0.

   * **Outcome**: `1`.

2. **Rule 2**: `Education_Numeric < 12, Capital_Gain< 5119.0, Capital_Loss < 1820.0 → 0`

   * **Conditions**: `Education_Numeric` is less than 12, AND `Capital_Gain` is less than 5119.0, AND `Capital_Loss` is less than 1820.0.

   * **Outcome**: `0`.

3. **Rule 3**: `Education_Numeric > 12, married_civ_spouse < 0.0, Capital_Gain> 7073.0 → 1`

   * **Conditions**: `Education_Numeric` is greater than 12, AND `married_civ_spouse` is less than 0.0, AND `Capital_Gain` is greater than 7073.0.

   * **Outcome**: `1`.

4. **Rule 4**: `Capital_Gain < 5119.0, Capital_Loss > 1820.0, married_civ_spouse < 0 → 0`

   * **Conditions**: `Capital_Gain` is less than 5119.0, AND `Capital_Loss` is greater than 1820.0, AND `married_civ_spouse` is less than 0.

   * **Outcome**: `0`.

5. **Rule 5**: `Capital_Gain < 5119.0, Capital_Loss > 1820.0, Education_Numeric > 8 → 1`

   * **Conditions**: `Capital_Gain` is less than 5119.0, AND `Capital_Loss` is greater than 1820.0, AND `Education_Numeric` is greater than 8.

   * **Outcome**: `1`.
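The five rules can be encoded as data and checked against a single record. This minimal sketch assumes each record is a plain dictionary keyed by the feature names from the image and applies the thresholds exactly as transcribed, with strict inequalities. `RULES`, `fires`, and `matching_rules` are hypothetical names.

```python
import operator

# The five rules as transcribed: (conditions, outcome), each condition (feature, op, threshold).
RULES = [
    ([("Education_Numeric", ">", 12), ("married_civ_spouse", ">", 0.0)], 1),
    ([("Education_Numeric", "<", 12), ("Capital_Gain", "<", 5119.0),
      ("Capital_Loss", "<", 1820.0)], 0),
    ([("Education_Numeric", ">", 12), ("married_civ_spouse", "<", 0.0),
      ("Capital_Gain", ">", 7073.0)], 1),
    ([("Capital_Gain", "<", 5119.0), ("Capital_Loss", ">", 1820.0),
      ("married_civ_spouse", "<", 0)], 0),
    ([("Capital_Gain", "<", 5119.0), ("Capital_Loss", ">", 1820.0),
      ("Education_Numeric", ">", 8)], 1),
]

OPS = {"<": operator.lt, ">": operator.gt}


def fires(conditions, x):
    """A rule fires only if every one of its conditions holds (logical AND)."""
    return all(OPS[op](x[feature], threshold) for feature, op, threshold in conditions)


def matching_rules(x):
    """Return (1-based rule number, outcome) for every rule that fires on record x."""
    return [(i, outcome) for i, (conds, outcome) in enumerate(RULES, start=1)
            if fires(conds, x)]


person = {"Education_Numeric": 13, "married_civ_spouse": 1.0,
          "Capital_Gain": 0.0, "Capital_Loss": 0.0}
print(matching_rules(person))                                # [(1, 1)]
print(matching_rules({**person, "Education_Numeric": 12}))   # []
```

Because Rules 1 and 2 use strict `> 12` and `< 12`, a record with `Education_Numeric` equal to 12 falls through both; the second call shows such a record matching none of the five rules.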

### Key Observations

* **Feature Frequency**: `Capital_Gain` and `Education_Numeric` each appear in 4 rules; `married_civ_spouse` and `Capital_Loss` each appear in 3.

* **Threshold Values**: Specific numerical thresholds are used: `12` and `8` for education, `0.0` for marital status, `5119.0` and `7073.0` for capital gain, and `1820.0` for capital loss.

* **Outcome Distribution**: Three rules lead to outcome `1`, and two rules lead to outcome `0`.

* **Potential Overlap/Conflict**: Rules 4 and 5 share their first two conditions (`Capital_Gain < 5119.0, Capital_Loss > 1820.0`) but differ in their third condition and, crucially, have **different outcomes (`0` vs. `1`)**. The rules are therefore not mutually exclusive: a record that meets both shared conditions and also has `married_civ_spouse < 0` and `Education_Numeric > 8` matches both rules and receives conflicting predictions, unless some ordering or context not shown in the image resolves the conflict.
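The frequency counts and the overlap between rules can be checked mechanically. In this sketch each rule is treated as an axis-aligned box of open intervals in feature space, assuming all features are real-valued and unconstrained beyond the listed thresholds; two rules can both fire for some record exactly when their intervals intersect on every feature. The helper names (`to_box`, `boxes_overlap`, `feature_frequency`, `overlapping_pairs`) are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations

# The five rules as transcribed: (conditions, outcome), each condition (feature, op, threshold).
RULES = [
    ([("Education_Numeric", ">", 12), ("married_civ_spouse", ">", 0.0)], 1),
    ([("Education_Numeric", "<", 12), ("Capital_Gain", "<", 5119.0),
      ("Capital_Loss", "<", 1820.0)], 0),
    ([("Education_Numeric", ">", 12), ("married_civ_spouse", "<", 0.0),
      ("Capital_Gain", ">", 7073.0)], 1),
    ([("Capital_Gain", "<", 5119.0), ("Capital_Loss", ">", 1820.0),
      ("married_civ_spouse", "<", 0)], 0),
    ([("Capital_Gain", "<", 5119.0), ("Capital_Loss", ">", 1820.0),
      ("Education_Numeric", ">", 8)], 1),
]

UNBOUNDED = (-math.inf, math.inf)


def to_box(conditions):
    """Turn a conjunction of strict inequalities into open intervals (lo, hi) per feature."""
    box = {}
    for feature, op, threshold in conditions:
        lo, hi = box.get(feature, UNBOUNDED)
        if op == ">":
            lo = max(lo, threshold)
        else:  # op == "<"
            hi = min(hi, threshold)
        box[feature] = (lo, hi)
    return box


def boxes_overlap(a, b):
    """Open boxes intersect iff their intervals intersect on every feature."""
    for feature in a.keys() | b.keys():
        lo_a, hi_a = a.get(feature, UNBOUNDED)
        lo_b, hi_b = b.get(feature, UNBOUNDED)
        if max(lo_a, lo_b) >= min(hi_a, hi_b):
            return False
    return True


def feature_frequency(rules):
    """Count each feature once per rule in which it appears."""
    return Counter(f for conds, _ in rules for f in {c[0] for c in conds})


def overlapping_pairs(rules):
    """Return (i, j, same_outcome) for every pair of rules that can fire together."""
    boxes = [to_box(conds) for conds, _ in rules]
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(boxes, start=1), 2):
        if boxes_overlap(a, b):
            pairs.append((i, j, rules[i - 1][1] == rules[j - 1][1]))
    return pairs


print(sorted(feature_frequency(RULES).items()))
# [('Capital_Gain', 4), ('Capital_Loss', 3), ('Education_Numeric', 4), ('married_civ_spouse', 3)]
print(sorted(Counter(outcome for _, outcome in RULES).items()))  # [(0, 2), (1, 3)]
print(overlapping_pairs(RULES))  # [(1, 5, True), (4, 5, False)]
```

Under these assumptions the check reports two overlapping pairs: Rules 1 and 5, which agree on outcome `1`, and Rules 4 and 5, the conflicting pair noted above. Every other pair is separated on at least one feature by disjoint intervals. If `married_civ_spouse` is in fact a 0/1 indicator, no real record would fall in the Rule 4/5 overlap, since it requires `married_civ_spouse < 0`.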

### Interpretation

This image presents a **rule-based classifier**, likely for a binary prediction task such as income level (e.g., >50K vs. <=50K); the feature names match those commonly derived from the UCI Adult (Census Income) dataset.

* **What the Data Suggests**: The rules segment a population by education level, marital status, and capital gains and losses. Higher education (`> 12`) leads to outcome `1` when combined with `married_civ_spouse > 0.0` (Rule 1) or, when `married_civ_spouse < 0.0`, with very high capital gains (`> 7073.0`, Rule 3). Education below 12 combined with low capital gains and low capital losses leads to outcome `0` (Rule 2). The conflicting Rules 4 and 5 indicate that the decision boundary is not simple; the model likely combines these features hierarchically or through interactions.

* **Relationship Between Elements**: Each rule is a conjunction (AND) of conditions. Each condition corresponds to a split at a decision node, and the outcome (`0` or `1`) is the prediction at a leaf. If the rules come from a single decision tree, each one is a path from the root to a leaf.

* **Notable Anomalies**: The direct contradiction between Rules 4 and 5 is the most significant observation. In a standard decision tree, paths are mutually exclusive. This could imply:

    1. These rules are extracted from different parts of a model (e.g., different trees in a forest) and are not meant to be applied simultaneously.

    2. There is an error in the rule extraction or presentation.

    3. The model contains logic (like feature interactions or context from prior splits) that makes these rules valid in their specific, non-overlapping contexts, even though they appear conflicting in isolation.
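One way such a conflict could be resolved, a convention the image does not confirm, is to treat the rules as an ordered decision list, where the first rule whose conditions all hold determines the prediction. The sketch below assumes that convention, with rules tried in their transcribed order by default. `predict_first_match`, its `order` and `default` parameters, and the sample record are hypothetical; the record uses a negative `married_civ_spouse` value only so that Rule 4's `< 0` condition, as written, is satisfied.

```python
import operator

# The five rules as transcribed: (conditions, outcome), each condition (feature, op, threshold).
RULES = [
    ([("Education_Numeric", ">", 12), ("married_civ_spouse", ">", 0.0)], 1),
    ([("Education_Numeric", "<", 12), ("Capital_Gain", "<", 5119.0),
      ("Capital_Loss", "<", 1820.0)], 0),
    ([("Education_Numeric", ">", 12), ("married_civ_spouse", "<", 0.0),
      ("Capital_Gain", ">", 7073.0)], 1),
    ([("Capital_Gain", "<", 5119.0), ("Capital_Loss", ">", 1820.0),
      ("married_civ_spouse", "<", 0)], 0),
    ([("Capital_Gain", "<", 5119.0), ("Capital_Loss", ">", 1820.0),
      ("Education_Numeric", ">", 8)], 1),
]

OPS = {"<": operator.lt, ">": operator.gt}


def predict_first_match(x, order=(1, 2, 3, 4, 5), default=None):
    """Decision-list semantics: try rules in `order` (1-based rule numbers) and
    return (rule number, outcome) for the first rule whose conditions all hold;
    return (None, default) if no rule fires."""
    for n in order:
        conditions, outcome = RULES[n - 1]
        if all(OPS[op](x[f], t) for f, op, t in conditions):
            return n, outcome
    return None, default


# Hypothetical record that satisfies both Rule 4 and Rule 5 as written.
record = {"Education_Numeric": 10, "married_civ_spouse": -1.0,
          "Capital_Gain": 0.0, "Capital_Loss": 2000.0}
print(predict_first_match(record))                         # (4, 0)
print(predict_first_match(record, order=(5, 4, 3, 2, 1)))  # (5, 1)
```

Under first-match semantics the record receives Rule 4's outcome `0`; trying Rule 5 first flips it to `1`. If the rules do form an ordered list, their order is therefore part of the model.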

**Language Declaration**: The text in the image is entirely in English.