TECHNICAL ASSET FINGERPRINT

e4c1603464ba6e24f2aa1411

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

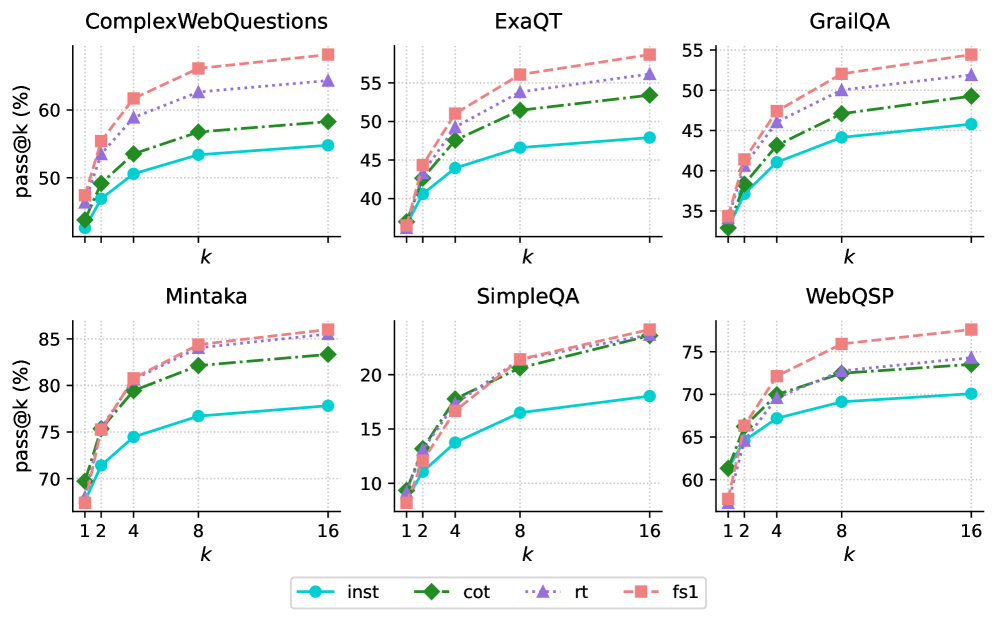

## Line Charts: Pass@k Performance on Question Answering Datasets

### Overview

The image presents a series of line charts comparing the performance of four methods (inst, cot, rt, fs1) on six question answering datasets (ComplexWebQuestions, ExaQT, GrailQA, Mintaka, SimpleQA, WebQSP). The charts display the "pass@k" metric (the percentage of questions for which at least one of k predictions is correct) as a function of k.
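
The figure does not say how pass@k was computed. As a minimal sketch, the estimator below is the unbiased one commonly used in code-generation evaluation: draw n samples per question, count the c correct ones, and estimate the chance that a random subset of k contains at least one correct answer. The function names `pass_at_k` and `mean_pass_at_k` are hypothetical and chosen only for illustration.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one question.

    n: number of sampled answers, c: how many of them are correct,
    k: sampling budget being evaluated (1 <= k <= n).
    """
    if not 0 <= c <= n:
        raise ValueError("c must satisfy 0 <= c <= n")
    if not 1 <= k <= n:
        raise ValueError("k must satisfy 1 <= k <= n")
    # Fewer than k incorrect samples: every size-k subset has a correct one.
    if n - c < k:
        return 1.0
    # 1 minus the probability that all k chosen samples are incorrect.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results, k: int) -> float:
    """Average pass@k over questions, in percent.

    results: list of (n, c) pairs, one pair per question.
    """
    return 100.0 * sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

With 16 samples per question, the same `(n, c)` counts can be reused to evaluate k = 1, 2, 4, 8, and 16, which matches the x-axis values of the charts.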

### Components/Axes

* **Titles (Top Row, Left to Right):** ComplexWebQuestions, ExaQT, GrailQA

* **Titles (Bottom Row, Left to Right):** Mintaka, SimpleQA, WebQSP

* **Y-axis Label (All Charts):** pass@k (%)

* **X-axis Label (All Charts):** k

* **X-axis Scale (All Charts):** 1, 2, 4, 8, 16

* **Y-axis Scale (Varies by Chart):**

* ComplexWebQuestions: 40 to 60

* ExaQT: 35 to 55

* GrailQA: 35 to 55

* Mintaka: 70 to 85

* SimpleQA: 10 to 25

* WebQSP: 60 to 75

* **Legend (Bottom Center):**

* `inst`: Cyan line with circle markers

* `cot`: Green dash-dot line with diamond markers

* `rt`: Purple dotted line with triangle markers

* `fs1`: Pink dashed line with square markers

### Detailed Analysis

**ComplexWebQuestions**

* `inst` (Cyan): Starts at approximately 43%, increases to about 53% at k=16.

* `cot` (Green): Starts at approximately 44%, increases to about 54% at k=16.

* `rt` (Purple): Starts at approximately 47%, increases to about 58% at k=16.

* `fs1` (Pink): Starts at approximately 48%, increases to about 62% at k=16.

**ExaQT**

* `inst` (Cyan): Starts at approximately 37%, increases to about 48% at k=16.

* `cot` (Green): Starts at approximately 37%, increases to about 53% at k=16.

* `rt` (Purple): Starts at approximately 41%, increases to about 54% at k=16.

* `fs1` (Pink): Starts at approximately 42%, increases to about 57% at k=16.

**GrailQA**

* `inst` (Cyan): Starts at approximately 35%, increases to about 47% at k=16.

* `cot` (Green): Starts at approximately 35%, increases to about 52% at k=16.

* `rt` (Purple): Starts at approximately 40%, increases to about 53% at k=16.

* `fs1` (Pink): Starts at approximately 41%, increases to about 55% at k=16.

**Mintaka**

* `inst` (Cyan): Starts at approximately 69%, increases to about 78% at k=16.

* `cot` (Green): Starts at approximately 70%, increases to about 83% at k=16.

* `rt` (Purple): Starts at approximately 75%, increases to about 84% at k=16.

* `fs1` (Pink): Starts at approximately 68%, increases to about 86% at k=16.

**SimpleQA**

* `inst` (Cyan): Starts at approximately 9%, increases to about 18% at k=16.

* `cot` (Green): Starts at approximately 9%, increases to about 22% at k=16.

* `rt` (Purple): Starts at approximately 12%, increases to about 23% at k=16.

* `fs1` (Pink): Starts at approximately 9%, increases to about 24% at k=16.

**WebQSP**

* `inst` (Cyan): Starts at approximately 61%, increases to about 70% at k=16.

* `cot` (Green): Starts at approximately 61%, increases to about 74% at k=16.

* `rt` (Purple): Starts at approximately 66%, increases to about 73% at k=16.

* `fs1` (Pink): Starts at approximately 60%, increases to about 78% at k=16.

### Key Observations

* **General Trend:** All methods show an increase in `pass@k` as `k` increases across all datasets.

* **Relative Performance:** The `fs1` method generally achieves the highest `pass@k` values across most datasets, followed by `rt` and `cot`. The `inst` method typically has the lowest `pass@k` values.

* **Dataset Difficulty:** The `pass@k` values vary significantly across datasets, indicating varying levels of difficulty. SimpleQA has the lowest overall performance, while Mintaka has the highest.

* **Performance Saturation:** The performance gain diminishes as k increases, suggesting that after a certain point, increasing k provides diminishing returns.

### Interpretation

The data suggests that the `fs1` method is generally the most effective among the four methods evaluated for question answering across these datasets. The performance differences between the methods highlight the impact of different approaches on question answering accuracy. The varying performance across datasets indicates that the difficulty of question answering is highly dependent on the specific dataset characteristics. The diminishing returns observed as k increases suggest that optimizing the ranking of the top few predictions is crucial for maximizing performance.
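
One way to see why the gains shrink is a simple idealized model. Assume (this is not stated in the figure) that each sampled answer to a question is independently correct with probability $p$. Then

$$
\text{pass@}k = 1 - (1-p)^k, \qquad \text{pass@}(k+1) - \text{pass@}k = p\,(1-p)^k .
$$

The gain from one additional sample decays geometrically in $k$. Averaging over questions with different values of $p$ keeps this property, because every per-question term decreases as $k$ grows.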

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

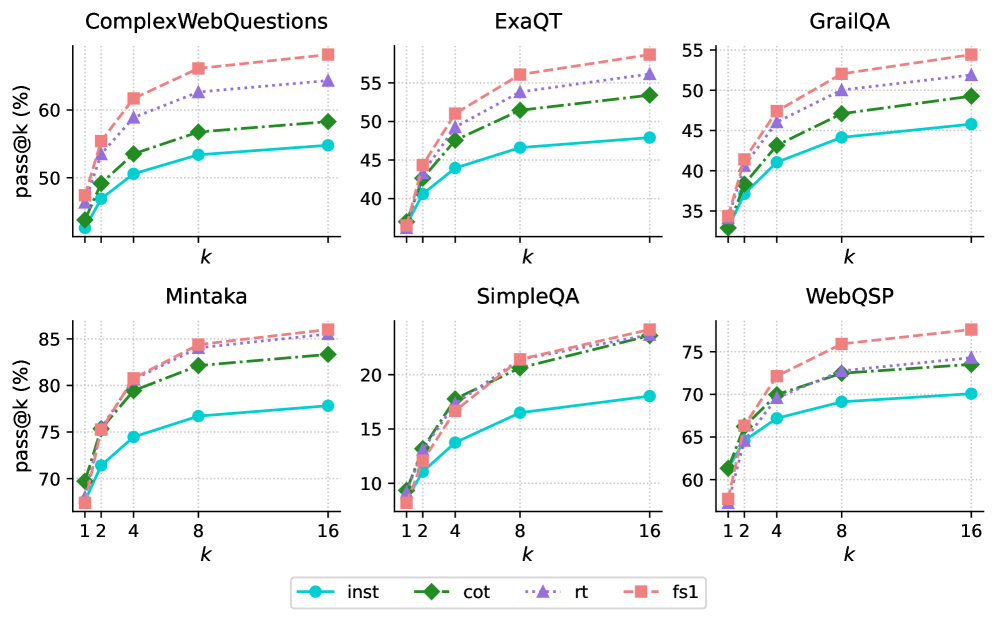

## Charts: Pass@k Performance Across Datasets

### Overview

The image presents six separate line charts, each displaying the pass@k performance (in percentage) for different models (inst, cot, rt, fs1) across six different question answering datasets: ComplexWebQuestions, ExaQT, GrailQA, Mintaka, SimpleQA, and WebQSP. The x-axis represents 'k', likely indicating the number of retrieved answers considered, and the y-axis represents the pass@k score (percentage).

### Components/Axes

* **X-axis Label (all charts):** k

* **Y-axis Label (all charts):** pass@k (%)

* **Legend (bottom-center):**

* inst (cyan solid line)

* cot (green solid line with triangle markers)

* rt (red dotted line)

* fs1 (red dashed line with square markers)

* **Chart Titles (top-center of each chart):**

* ComplexWebQuestions

* ExaQT

* GrailQA

* Mintaka

* SimpleQA

* WebQSP

* **X-axis Scale:** Identical across all six charts: 1, 2, 4, 8, 16

* **Y-axis Scale:** Varies per chart; across all six charts the values range from approximately 8% (SimpleQA) to 85% (Mintaka).

### Detailed Analysis

Here's a breakdown of the data for each chart:

**1. ComplexWebQuestions:**

* **inst:** Starts at ~40%, increases to ~60% at k=16. The line slopes upward, but the increase slows down after k=8.

* **cot:** Starts at ~45%, increases to ~62% at k=16. Similar upward slope to 'inst', with a slowing increase after k=8.

* **rt:** Starts at ~50%, increases to ~62% at k=16. Relatively flat slope.

* **fs1:** Starts at ~52%, increases to ~63% at k=16. Slope comparable to 'rt'.

**2. ExaQT:**

* **inst:** Starts at ~38%, increases to ~52% at k=16. Upward slope, with a more pronounced slowing after k=8.

* **cot:** Starts at ~42%, increases to ~55% at k=16. Similar trend to 'inst'.

* **rt:** Starts at ~45%, increases to ~55% at k=16. Relatively flat slope.

* **fs1:** Starts at ~48%, increases to ~56% at k=16. Slope comparable to 'rt'.

**3. GrailQA:**

* **inst:** Starts at ~35%, increases to ~48% at k=16. Upward slope, slowing after k=8.

* **cot:** Starts at ~38%, increases to ~50% at k=16. Similar trend to 'inst'.

* **rt:** Starts at ~42%, increases to ~52% at k=16. Relatively flat slope.

* **fs1:** Starts at ~45%, increases to ~53% at k=16. Slope comparable to 'rt'.

**4. Mintaka:**

* **inst:** Starts at ~72%, increases to ~82% at k=16. Steep upward slope, with a slight slowing after k=8.

* **cot:** Starts at ~75%, increases to ~84% at k=16. Similar trend to 'inst'.

* **rt:** Starts at ~78%, increases to ~84% at k=16. Relatively flat slope.

* **fs1:** Starts at ~80%, increases to ~85% at k=16. Slope comparable to 'rt'.

**5. SimpleQA:**

* **inst:** Starts at ~8%, increases to ~20% at k=16. Steep upward slope, with a slight slowing after k=8.

* **cot:** Starts at ~10%, increases to ~22% at k=16. Similar trend to 'inst'.

* **rt:** Starts at ~12%, increases to ~22% at k=16. Relatively flat slope.

* **fs1:** Starts at ~14%, increases to ~23% at k=16. Slope comparable to 'rt'.

**6. WebQSP:**

* **inst:** Starts at ~64%, increases to ~72% at k=16. Upward slope, slowing after k=8.

* **cot:** Starts at ~67%, increases to ~74% at k=16. Similar trend to 'inst'.

* **rt:** Starts at ~70%, increases to ~74% at k=16. Relatively flat slope.

* **fs1:** Starts at ~72%, increases to ~75% at k=16. Slope comparable to 'rt'.

### Key Observations

* 'fs1' consistently performs better than 'rt' across all datasets.

* 'inst' and 'cot' generally show similar performance trends.

* The performance gains from increasing 'k' diminish as 'k' increases, indicating diminishing returns.

* Mintaka has the highest overall pass@k scores, while SimpleQA has the lowest.

* The gaps between methods vary across datasets. For example, at k=1 'fs1' leads 'inst' by about 12 points on ComplexWebQuestions but by about 6 points on SimpleQA.

### Interpretation

The charts show the pass@k performance of four methods on various question answering datasets. The 'k' parameter likely represents the number of retrieved answers considered, in which case the pass@k score indicates the percentage of questions answered correctly when the top 'k' are taken into account.

The consistent outperformance of 'fs1' over 'rt' suggests that the 'fs1' method is more effective at leveraging retrieved information. The similar performance of 'inst' and 'cot' indicates that the chain-of-thought prompting strategy ('cot') doesn't significantly improve performance in this setting.

The varying performance across datasets highlights the importance of dataset characteristics. Mintaka, with its high pass@k scores, likely presents questions that are easier to answer with retrieved information, while SimpleQA, with its low scores, may involve more complex reasoning or require information not readily available in the retrieved documents.

The diminishing returns observed as 'k' increases suggest that there's a limit to the benefit of considering more retrieved documents. Beyond a certain point, the additional documents may introduce noise or irrelevant information, although no curve declines within the plotted range (k up to 16). This suggests that the most cost-effective 'k' may differ for each dataset and model combination.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## [Multi-Panel Line Chart]: Performance of Different Methods on Six Question Answering Datasets

### Overview

The image displays six separate line charts arranged in a 2x3 grid. Each chart plots the performance of four different methods on a specific question answering (QA) dataset. Performance is measured by the "pass@k (%)" metric as a function of the parameter "k". All charts show a consistent pattern where performance improves with increasing "k", but the absolute performance levels and the relative ranking of methods vary across datasets.

### Components/Axes

* **Titles:** Six dataset names, one above each chart: `ComplexWebQuestions`, `ExaQT`, `GrailQA`, `Mintaka`, `SimpleQA`, `WebQSP`.

* **Y-Axis:** Labeled `pass@k (%)` for all charts. The scale varies:

* ComplexWebQuestions: ~45% to ~65%

* ExaQT: ~35% to ~58%

* GrailQA: ~33% to ~55%

* Mintaka: ~68% to ~86%

* SimpleQA: ~8% to ~24%

* WebQSP: ~58% to ~78%

* **X-Axis:** Labeled `k` for all charts. The markers are at values 1, 2, 4, 8, and 16.

* **Legend:** Positioned at the bottom center of the entire figure. It defines four data series:

* `inst`: Cyan solid line with circle markers.

* `cot`: Green dashed line with diamond markers.

* `rt`: Purple dotted line with upward-pointing triangle markers.

* `fs1`: Salmon (light red) dashed line with square markers.

### Detailed Analysis

**1. ComplexWebQuestions (Top-Left)**

* **Trend:** All four methods show a steep initial increase from k=1 to k=4, followed by a more gradual rise to k=16.

* **Data Points (Approximate):**

* `fs1` (Salmon, Square): Starts ~48%, ends ~64%.

* `rt` (Purple, Triangle): Starts ~47%, ends ~63%.

* `cot` (Green, Diamond): Starts ~45%, ends ~59%.

* `inst` (Cyan, Circle): Starts ~44%, ends ~55%.

* **Ranking (at k=16):** `fs1` > `rt` > `cot` > `inst`.

**2. ExaQT (Top-Center)**

* **Trend:** Similar logarithmic growth pattern. The gap between `fs1`/`rt` and `cot`/`inst` widens as k increases.

* **Data Points (Approximate):**

* `fs1`: Starts ~37%, ends ~58%.

* `rt`: Starts ~36%, ends ~56%.

* `cot`: Starts ~36%, ends ~53%.

* `inst`: Starts ~36%, ends ~48%.

* **Ranking (at k=16):** `fs1` > `rt` > `cot` > `inst`.

**3. GrailQA (Top-Right)**

* **Trend:** Consistent upward trend. The performance hierarchy is clear and maintained across all k.

* **Data Points (Approximate):**

* `fs1`: Starts ~34%, ends ~54%.

* `rt`: Starts ~34%, ends ~52%.

* `cot`: Starts ~33%, ends ~49%.

* `inst`: Starts ~33%, ends ~46%.

* **Ranking (at k=16):** `fs1` > `rt` > `cot` > `inst`.

**4. Mintaka (Bottom-Left)**

* **Trend:** Strong upward trend. The top three methods (`fs1`, `rt`, `cot`) are tightly clustered, while `inst` lags significantly.

* **Data Points (Approximate):**

* `fs1`: Starts ~68%, ends ~86%.

* `rt`: Starts ~69%, ends ~85%.

* `cot`: Starts ~70%, ends ~83%.

* `inst`: Starts ~69%, ends ~78%.

* **Ranking (at k=16):** `fs1` ≈ `rt` > `cot` > `inst`.

**5. SimpleQA (Bottom-Center)**

* **Trend:** All methods show improvement. The `rt` and `fs1` lines nearly overlap at the top, while `cot` and `inst` are distinctly lower.

* **Data Points (Approximate):**

* `fs1`: Starts ~9%, ends ~24%.

* `rt`: Starts ~9%, ends ~24%.

* `cot`: Starts ~9%, ends ~24% (appears to converge with top two at k=16).

* `inst`: Starts ~9%, ends ~18%.

* **Ranking (at k=16):** `fs1` ≈ `rt` ≈ `cot` > `inst`.

**6. WebQSP (Bottom-Right)**

* **Trend:** Clear logarithmic growth. A distinct separation exists between the top method (`fs1`) and the others.

* **Data Points (Approximate):**

* `fs1`: Starts ~58%, ends ~78%.

* `rt`: Starts ~61%, ends ~74%.

* `cot`: Starts ~61%, ends ~73%.

* `inst`: Starts ~61%, ends ~70%.

* **Ranking (at k=16):** `fs1` > `rt` ≈ `cot` > `inst`.
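
The rankings and gains in this analysis can be recomputed from the approximate endpoints. The sketch below transcribes the (k=1, k=16) values listed above; the names `ENDPOINTS`, `ranking_at`, and `gain` are hypothetical, and the numbers are visual estimates rather than exact data.

```python
# Approximate (k=1, k=16) pass@k values in percent, transcribed from the
# data points listed in this analysis. They are visual estimates.
ENDPOINTS = {
    "ComplexWebQuestions": {"fs1": (48, 64), "rt": (47, 63), "cot": (45, 59), "inst": (44, 55)},
    "ExaQT": {"fs1": (37, 58), "rt": (36, 56), "cot": (36, 53), "inst": (36, 48)},
    "GrailQA": {"fs1": (34, 54), "rt": (34, 52), "cot": (33, 49), "inst": (33, 46)},
    "Mintaka": {"fs1": (68, 86), "rt": (69, 85), "cot": (70, 83), "inst": (69, 78)},
    "SimpleQA": {"fs1": (9, 24), "rt": (9, 24), "cot": (9, 24), "inst": (9, 18)},
    "WebQSP": {"fs1": (58, 78), "rt": (61, 74), "cot": (61, 73), "inst": (61, 70)},
}


def ranking_at(dataset: str, index: int) -> list:
    """Methods ordered from highest to lowest value.

    index 0 selects k=1 and index 1 selects k=16. Ties keep the order in
    which the methods appear in ENDPOINTS, because sorted() is stable.
    """
    methods = ENDPOINTS[dataset]
    return sorted(methods, key=lambda m: methods[m][index], reverse=True)


def gain(dataset: str, method: str) -> int:
    """Percentage-point increase from k=1 to k=16."""
    start, end = ENDPOINTS[dataset][method]
    return end - start


if __name__ == "__main__":
    for name in ENDPOINTS:
        gains = {m: gain(name, m) for m in ENDPOINTS[name]}
        print(name, ranking_at(name, 1), gains)
```

Because the inputs are rounded readings from a chart, differences of one or two points between methods should be treated as ties.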

### Key Observations

1. **Universal Trend:** Across all six datasets, the `pass@k` metric increases with `k` for every method, demonstrating the benefit of generating more candidate answers.

2. **Method Hierarchy at k=16:** At `k`=16, the `fs1` method (salmon squares) is the top or tied-for-top performer on every dataset, and the `inst` method (cyan circles) is the lowest. At `k`=1 the order differs: `fs1` starts lowest on `Mintaka` (~68%) and `WebQSP` (~58%).

3. **Dataset Difficulty:** The absolute `pass@k` values vary greatly, indicating differing dataset difficulty. `SimpleQA` appears the most challenging (max ~24%), while `Mintaka` appears the easiest (max ~86%).

4. **Clustering at High k:** On `SimpleQA` and `Mintaka`, the top methods (`fs1`, `rt`, `cot`) finish within about three points of one another at `k`=16.

### Interpretation

This visualization compares four methods for large language models on knowledge-intensive QA tasks. The legend gives only short labels, so the following expansions are inferred rather than confirmed: `inst`: instruction-only, `cot`: chain-of-thought, `rt`: self-refinement or similar, `fs1`: few-shot with one example.

The data suggests that **`fs1`, `rt`, and `cot` outperform simple instruction (`inst`) at k=16 on every dataset**, across diverse QA formats and difficulty levels. The rise of `pass@k` with `k` shows that generating multiple candidate answers raises the chance that at least one is correct. Because `pass@k` counts a question as solved whenever any candidate is correct, it is an upper bound on what a verifier or voting mechanism could achieve when selecting a single answer, not a measurement of that selection step. The close clustering of the top methods at k=16 on some datasets implies that, with enough candidate generations, the specific method may matter less, though `fs1` maintains a slight edge. The stark difference in absolute performance between `SimpleQA` and `Mintaka` highlights the importance of benchmarking across a varied suite of tasks to get a complete picture of model capability.
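
The relationship between `pass@k` and answer selection by voting can be illustrated with a small sketch. It assumes exact string matching against a single gold answer, which is a simplification; the function names and the example answers are hypothetical.

```python
from collections import Counter


def pass_at_k_hit(candidates, gold) -> bool:
    """pass@k criterion: the question counts as solved if ANY candidate matches."""
    return gold in candidates


def majority_vote_hit(candidates, gold) -> bool:
    """Voting criterion: solved only if the most frequent candidate matches.

    On a tie, Counter.most_common returns the candidate seen first.
    """
    winner, _count = Counter(candidates).most_common(1)[0]
    return winner == gold


# Five sampled answers to one question; the gold answer is "Paris".
samples = ["Paris", "Lyon", "Lyon", "Paris", "Lyon"]
print(pass_at_k_hit(samples, "Paris"))      # True: a correct answer is present
print(majority_vote_hit(samples, "Paris"))  # False: "Lyon" wins the vote
```

A question can therefore count toward `pass@k` while a voting-based selector still returns the wrong answer, which is why `pass@k` bounds selection accuracy from above.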

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Performance Comparison Across Datasets

### Overview

The image contains six line graphs arranged in two rows and three columns, comparing the performance of four methods (`inst`, `cot`, `rt`, `fs1`) across six datasets (`ComplexWebQuestions`, `ExaQT`, `GrailQA`, `Mintaka`, `SimpleQA`, `WebQSP`). Each graph plots `pass@k (%)` on the y-axis against `k` (1–16) on the x-axis. The legend at the bottom center identifies the methods with distinct colors and markers: `inst` (blue line), `cot` (green dashed line), `rt` (purple dotted line), and `fs1` (red square markers).

---

### Components/Axes

- **Y-axis**: `pass@k (%)` (performance metric, ranging from ~10% to ~85% depending on the dataset).

- **X-axis**: `k` (integer values from 1 to 16, likely representing a parameter like sample size or iteration count).

- **Legend**: Located at the bottom center, with four entries:

- `inst`: Solid blue line.

- `cot`: Green dashed line.

- `rt`: Purple dotted line.

- `fs1`: Red square markers.

- **Graph Titles**: Each subplot is labeled with a dataset name (e.g., `ComplexWebQuestions`, `Mintaka`).

---

### Detailed Analysis

#### ComplexWebQuestions

- **Trends**: All methods show upward trends. `fs1` (red) starts highest (~55% at k=1) and increases steeply to ~68% at k=16. `inst` (blue) starts lowest (~45% at k=1) and rises to ~53% at k=16. `cot` (green) and `rt` (purple) follow intermediate trajectories (~48–63%).

- **Key Data Points**:

- k=1: `fs1` ~55%, `inst` ~45%, `cot` ~48%, `rt` ~52%.

- k=16: `fs1` ~68%, `inst` ~53%, `cot` ~58%, `rt` ~63%.

#### ExaQT

- **Trends**: Similar upward trajectories. `fs1` leads (~45% at k=1 → ~55% at k=16). `inst` (~38% → ~47%), `cot` (~42% → ~52%), and `rt` (~40% → ~53%) lag slightly behind.

- **Key Data Points**:

- k=1: `fs1` ~45%, `inst` ~38%, `cot` ~42%, `rt` ~40%.

- k=16: `fs1` ~55%, `inst` ~47%, `cot` ~52%, `rt` ~53%.

#### GrailQA

- **Trends**: `fs1` dominates (~35% → ~55%). `inst` (~30% → ~45%), `cot` (~32% → ~48%), and `rt` (~34% → ~51%) show moderate gains.

- **Key Data Points**:

- k=1: `fs1` ~35%, `inst` ~30%, `cot` ~32%, `rt` ~34%.

- k=16: `fs1` ~55%, `inst` ~45%, `cot` ~48%, `rt` ~51%.

#### Mintaka

- **Trends**: Highest performance overall. `fs1` (~70% → ~85%), `inst` (~65% → ~78%), `cot` (~72% → ~82%), and `rt` (~70% → ~83%) all improve significantly.

- **Key Data Points**:

- k=1: `fs1` ~70%, `inst` ~65%, `cot` ~72%, `rt` ~70%.

- k=16: `fs1` ~85%, `inst` ~78%, `cot` ~82%, `rt` ~83%.

#### SimpleQA

- **Trends**: `fs1` (~10% → ~22%), `inst` (~12% → ~18%), `cot` (~15% → ~21%), and `rt` (~13% → ~20%) show steep gains.

- **Key Data Points**:

- k=1: `fs1` ~10%, `inst` ~12%, `cot` ~15%, `rt` ~13%.

- k=16: `fs1` ~22%, `inst` ~18%, `cot` ~21%, `rt` ~20%.

#### WebQSP

- **Trends**: `fs1` (~60% → ~78%), `inst` (~55% → ~70%), `cot` (~62% → ~74%), and `rt` (~60% → ~75%) improve consistently.

- **Key Data Points**:

- k=1: `fs1` ~60%, `inst` ~55%, `cot` ~62%, `rt` ~60%.

- k=16: `fs1` ~78%, `inst` ~70%, `cot` ~74%, `rt` ~75%.

---

### Key Observations

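1. **Ranking at k=16**: `fs1` (red) has the highest value on every dataset at k=16, generally followed by `rt` (purple), `cot` (green), and `inst` (blue); only on `SimpleQA` does `cot` (~21%) edge out `rt` (~20%). At k=1 the order is less stable: `cot` leads on `Mintaka`, `SimpleQA`, and `WebQSP`.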

2. **Dataset Variability**: Performance levels vary by dataset (e.g., `Mintaka` has the highest baseline performance, while `SimpleQA` starts lowest).

3. **Scaling with k**: All methods improve as `k` increases, suggesting `k` represents a parameter (e.g., sample size) that enhances performance when increased.

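4. **Spread between methods**: The gap between the best and worst method does not narrow consistently at higher `k`. Based on the key data points above, it stays at about 7 points on `Mintaka`, shrinks slightly on `SimpleQA` (~5 to ~4 points), and widens on `GrailQA` (~5 to ~10 points).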
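
How the gap between methods changes with `k` can be checked from the key data points listed in the detailed analysis. This is a minimal sketch; the names `KEY_POINTS` and `spread` are hypothetical, and the values are the approximate chart readings given above.

```python
# Approximate (k=1, k=16) pass@k values in percent, taken from the
# "Key Data Points" entries of each dataset above.
KEY_POINTS = {
    "ComplexWebQuestions": {"fs1": (55, 68), "inst": (45, 53), "cot": (48, 58), "rt": (52, 63)},
    "ExaQT": {"fs1": (45, 55), "inst": (38, 47), "cot": (42, 52), "rt": (40, 53)},
    "GrailQA": {"fs1": (35, 55), "inst": (30, 45), "cot": (32, 48), "rt": (34, 51)},
    "Mintaka": {"fs1": (70, 85), "inst": (65, 78), "cot": (72, 82), "rt": (70, 83)},
    "SimpleQA": {"fs1": (10, 22), "inst": (12, 18), "cot": (15, 21), "rt": (13, 20)},
    "WebQSP": {"fs1": (60, 78), "inst": (55, 70), "cot": (62, 74), "rt": (60, 75)},
}


def spread(dataset: str, index: int) -> int:
    """Best-minus-worst method gap in percentage points.

    index 0 selects the k=1 values and index 1 selects the k=16 values.
    """
    values = [pair[index] for pair in KEY_POINTS[dataset].values()]
    return max(values) - min(values)


if __name__ == "__main__":
    for name in KEY_POINTS:
        print(f"{name}: spread at k=1 = {spread(name, 0)}, at k=16 = {spread(name, 1)}")
```

Since the inputs are rounded estimates, a change of one point in the spread is within reading error.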

---

### Interpretation

The data shows that `fs1` reaches the highest `pass@k` at k=16 on all six datasets; the figure itself does not indicate why. The consistent upward trend for all methods with increasing `k` implies that larger values of `k` (e.g., more sampled answers per question) improve `pass@k` universally. Dataset-specific differences (e.g., `Mintaka` vs. `SimpleQA`) may reflect variations in complexity or task difficulty. The legend's placement at the bottom center keeps it clear of the plots, and the shared x-axis across subplots allows trends to be compared directly, although the y-axis range differs per dataset. No outliers or anomalies are observed.
