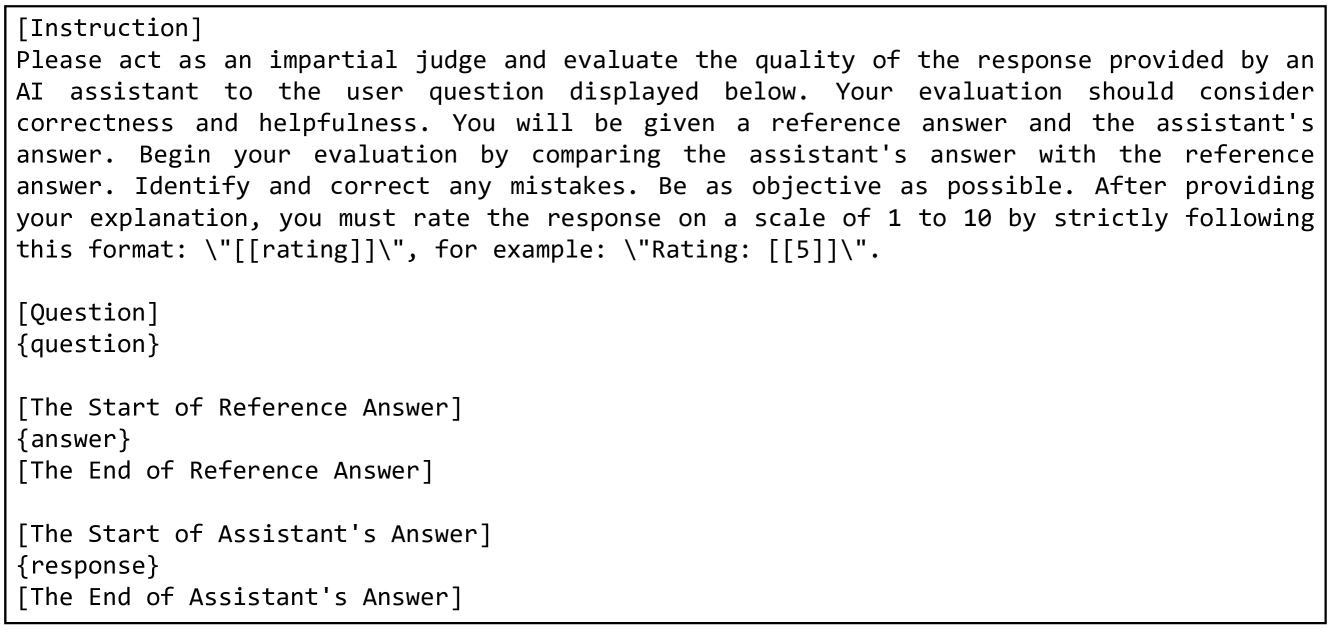

## Screenshot: AI Response Evaluation Template

### Overview

The image shows a text prompt template for evaluating the quality of an AI assistant's response. It is set in a monospaced font, with bracketed section headers and placeholders for dynamic content. The template guides a human or automated evaluator through a standardized comparison between a reference answer and the assistant's answer.

### Components/Axes

The document is organized into distinct, bordered sections with the following labels and content:

1. **[Instruction]**: The top section containing the core evaluation directive.

* **Text**: "Please act as an impartial judge and evaluate the quality of the response provided by an AI assistant to the user question displayed below. Your evaluation should consider correctness and helpfulness. You will be given a reference answer and the assistant's answer. Begin your evaluation by comparing the assistant's answer with the reference answer. Identify and correct any mistakes. Be as objective as possible. After providing your explanation, you must rate the response on a scale of 1 to 10 by strictly following this format: "[[rating]]", for example: "Rating: [[5]]"."

2. **[Question]**: A section header for the user's original query.

* **Placeholder**: `{question}`

3. **[The Start of Reference Answer]**: A section header marking the beginning of the correct or expected answer.

* **Placeholder**: `{answer}`

* **Closing Header**: `[The End of Reference Answer]`

4. **[The Start of Assistant's Answer]**: A section header marking the beginning of the AI-generated response to be evaluated.

* **Placeholder**: `{response}`

* **Closing Header**: `[The End of Assistant's Answer]`
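The sections listed above can be assembled into a single prompt string. The following is a minimal sketch, assuming the sections are separated by blank lines (the exact whitespace is not recoverable from the screenshot); `JUDGE_TEMPLATE` and `build_judge_prompt` are hypothetical names introduced here for illustration.

```python
# Template text transcribed from the screenshot description.
# Assumption: blank lines separate the sections; the image may differ.
JUDGE_TEMPLATE = """[Instruction]
Please act as an impartial judge and evaluate the quality of the response provided by an AI assistant to the user question displayed below. Your evaluation should consider correctness and helpfulness. You will be given a reference answer and the assistant's answer. Begin your evaluation by comparing the assistant's answer with the reference answer. Identify and correct any mistakes. Be as objective as possible. After providing your explanation, you must rate the response on a scale of 1 to 10 by strictly following this format: "[[rating]]", for example: "Rating: [[5]]".

[Question]
{question}

[The Start of Reference Answer]
{answer}
[The End of Reference Answer]

[The Start of Assistant's Answer]
{response}
[The End of Assistant's Answer]"""


def build_judge_prompt(question: str, answer: str, response: str) -> str:
    """Fill the three placeholders and return the complete judge prompt."""
    # str.format only parses the template itself, so braces inside the
    # inserted values are copied through unchanged.
    return JUDGE_TEMPLATE.format(question=question, answer=answer, response=response)


if __name__ == "__main__":
    print(build_judge_prompt("What is 2 + 2?", "4", "2 + 2 equals 5."))
```

Keeping the template as one constant means every evaluation uses the same wording, and only the three placeholder values change between question-answer pairs.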

### Detailed Analysis

* **Structure**: The template uses a clear, hierarchical structure with square-bracketed headers to define sections. Placeholders in curly braces (`{question}`, `{answer}`, `{response}`) indicate where variable text should be inserted.

* **Language**: The entire document is in English.

* **Formatting**: The text is presented in a monospaced font, resembling code or a formal template. Sections are visually separated by horizontal lines and blank lines.

* **Key Instruction**: The evaluator must explain the comparison before giving a final numerical rating. The rating must appear in double square brackets, `[[rating]]`, as in the template's example `Rating: [[5]]`, with a value from 1 to 10.
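Because the rating format is fixed, the score can be recovered from an evaluator's output with a regular expression. This is a sketch under a few assumptions not stated in the template: the last bracketed number is treated as the final rating, decimal values are tolerated, and anything outside 1 to 10 is rejected. The names `RATING_PATTERN` and `extract_rating` are hypothetical.

```python
import re
from typing import Optional

# Matches a number wrapped in double square brackets, e.g. [[5]] or [[7.5]].
RATING_PATTERN = re.compile(r"\[\[(\d+(?:\.\d+)?)\]\]")


def extract_rating(judge_output: str) -> Optional[float]:
    """Return the rating found in the judge's output, or None if absent or invalid."""
    matches = RATING_PATTERN.findall(judge_output)
    if not matches:
        return None
    # Assumption: the rating follows the explanation, so take the last match.
    rating = float(matches[-1])
    # The template defines the scale as 1 to 10; reject anything else.
    return rating if 1 <= rating <= 10 else None


if __name__ == "__main__":
    print(extract_rating("The answer contains an arithmetic error. Rating: [[3]]"))
```

Returning `None` rather than raising lets a caller decide whether to retry, skip, or flag an output that ignored the format.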

### Key Observations

* The template is designed for **objective, comparative evaluation**, focusing on "correctness and helpfulness."

* It enforces a **structured workflow**: compare, explain, then rate.

* The use of **placeholders** makes this a reusable framework for any question-answer pair.

* The **rating scale** (1-10) and its specific formatting are critical components of the output.
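The compare, explain, then rate ordering can also be checked mechanically on an evaluator's output. The sketch below is one possible heuristic, not part of the template: it requires some explanatory text before the first bracketed rating. The function name `follows_workflow` and the `min_explanation_chars` threshold are hypothetical.

```python
import re

# A bracketed rating such as [[9]] or [[7.5]].
RATING_RE = re.compile(r"\[\[\d+(?:\.\d+)?\]\]")


def follows_workflow(judge_output: str, min_explanation_chars: int = 20) -> bool:
    """Heuristically check that an explanation precedes the rating."""
    match = RATING_RE.search(judge_output)
    if match is None:
        return False  # no rating at all
    # Everything before the rating is treated as the explanation.
    explanation = judge_output[: match.start()]
    # Drop a trailing "Rating:" label so it does not count as explanation.
    explanation = re.sub(r"Rating:\s*$", "", explanation).strip()
    return len(explanation) >= min_explanation_chars


if __name__ == "__main__":
    print(follows_workflow("The assistant's answer matches the reference. Rating: [[9]]"))
```

A length threshold is a crude proxy for "an explanation was given"; it catches outputs that jump straight to a score but says nothing about the explanation's quality.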

### Interpretation

This image is not a chart or diagram containing data. It is a **meta-document**: a prompt template for producing consistent evaluations. Its purpose is to standardize the assessment of AI outputs; requiring a direct comparison to a reference and a written justification before scoring is intended to reduce subjectivity. The design prioritizes clarity and repeatability, making it suitable for benchmarking, quality assurance, or research contexts where consistent evaluation of AI assistants is necessary.