## Text Block: Evaluation Instructions

### Overview

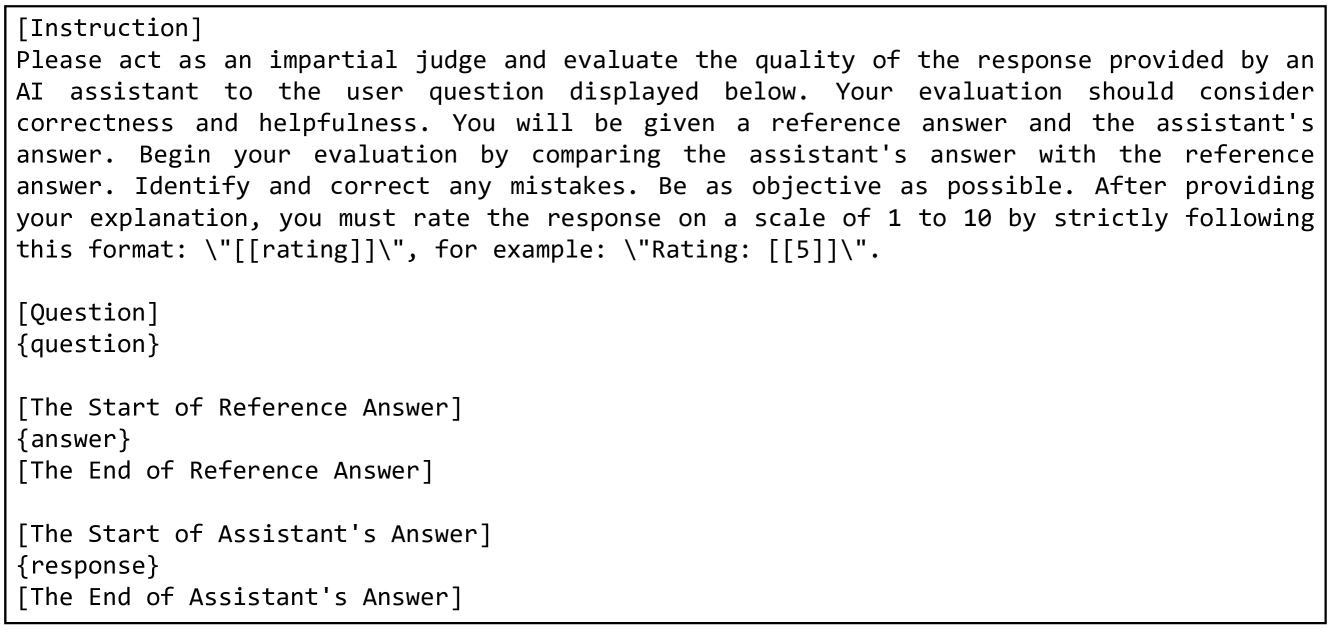

The image shows a block of text that instructs a judge to evaluate the quality of an AI assistant's response. The judge compares the assistant's answer with a reference answer, identifies and corrects any mistakes, and then rates the response on a scale of 1 to 10.

### Components

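The text is divided into sections by bracketed markers: "[Instruction]", "[Question]", "[The Start of Reference Answer]", "[The End of Reference Answer]", "[The Start of Assistant's Answer]", and "[The End of Assistant's Answer]". The first two introduce the judging instructions and the user question; the remaining four form start/end pairs that delimit the reference answer and the assistant's answer.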
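As a rough illustration of how these markers delimit the text, the sketch below splits a prompt into (marker, body) pairs. The names `MARKER_RE` and `split_sections` are hypothetical and are not part of the image. It assumes each marker sits alone on its own line, so an answer that itself contains such a line would be split incorrectly.

```python
import re

# Assumption: a marker is a line holding exactly one bracketed label,
# e.g. "[Question]". Inline text such as "[[rating]]" does not match.
MARKER_RE = re.compile(r"^\[([^\[\]]+)\][ \t]*$", re.MULTILINE)


def split_sections(prompt: str) -> list:
    """Return (marker label, text that follows the marker) pairs, in order."""
    matches = list(MARKER_RE.finditer(prompt))
    sections = []
    for i, match in enumerate(matches):
        # The body runs until the next marker, or to the end of the prompt.
        end = matches[i + 1].start() if i + 1 < len(matches) else len(prompt)
        sections.append((match.group(1), prompt[match.end():end].strip()))
    return sections
```

An end marker such as "[The End of Reference Answer]" shows up as its own entry; its body is whatever lies between it and the next marker, which is empty in this template.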

### Content Details

The text content is as follows:

"[Instruction]

Please act as an impartial judge and evaluate the quality of the response provided by an AI assistant to the user question displayed below. Your evaluation should consider correctness and helpfulness. You will be given a reference answer and the assistant's answer. Begin your evaluation by comparing the assistant's answer with the reference answer. Identify and correct any mistakes. Be as objective as possible. After providing your explanation, you must rate the response on a scale of 1 to 10 by strictly following this format: "[[rating]]", for example: "Rating: [[5]]".

[Question]

{question}

[The Start of Reference Answer]

{answer}

[The End of Reference Answer]

[The Start of Assistant's Answer]

{response}

[The End of Assistant's Answer]"

### Key Observations

The instructions name impartiality, correctness, and helpfulness as the evaluation criteria. The rating must follow a fixed format, "[[rating]]", on a scale of 1 to 10, as in "Rating: [[5]]". The placeholders "{question}", "{answer}", and "{response}" mark where the user question, the reference answer, and the assistant's answer are inserted.
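The placeholders and the rating format lend themselves to a small illustration. The sketch below is one possible way to fill the placeholders and to read a rating back out of a judge's reply. `TEMPLATE_TAIL`, `fill_template`, and `extract_rating` are hypothetical names, only the placeholder-bearing part of the template is reproduced, and treating the last "[[n]]" in the reply as the rating is an assumption based on the instruction to rate after the explanation.

```python
import re
from typing import Optional

# Only the part of the template that carries placeholders (assumption:
# the "[Instruction]" paragraph would be prepended unchanged).
TEMPLATE_TAIL = (
    "[Question]\n{question}\n\n"
    "[The Start of Reference Answer]\n{answer}\n"
    "[The End of Reference Answer]\n\n"
    "[The Start of Assistant's Answer]\n{response}\n"
    "[The End of Assistant's Answer]"
)

# Matches the "[[rating]]" format with an integer inside, e.g. "[[5]]".
RATING_RE = re.compile(r"\[\[(\d+)\]\]")


def fill_template(question: str, answer: str, response: str) -> str:
    """Substitute the three placeholders with the actual content."""
    return TEMPLATE_TAIL.format(question=question, answer=answer, response=response)


def extract_rating(judge_output: str) -> Optional[int]:
    """Return the last [[n]] rating if it lies in 1..10, otherwise None."""
    found = RATING_RE.findall(judge_output)
    if not found:
        return None
    rating = int(found[-1])
    return rating if 1 <= rating <= 10 else None
```

Returning `None` for a missing or out-of-range rating leaves it to the caller to decide whether to retry or discard the judgment.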

### Interpretation

The text provides a framework for a structured evaluation process. It aims to ensure consistency and objectivity in assessing the quality of AI-generated responses. The use of placeholders suggests that this is a template or a general set of instructions applicable to various evaluation scenarios.