\n

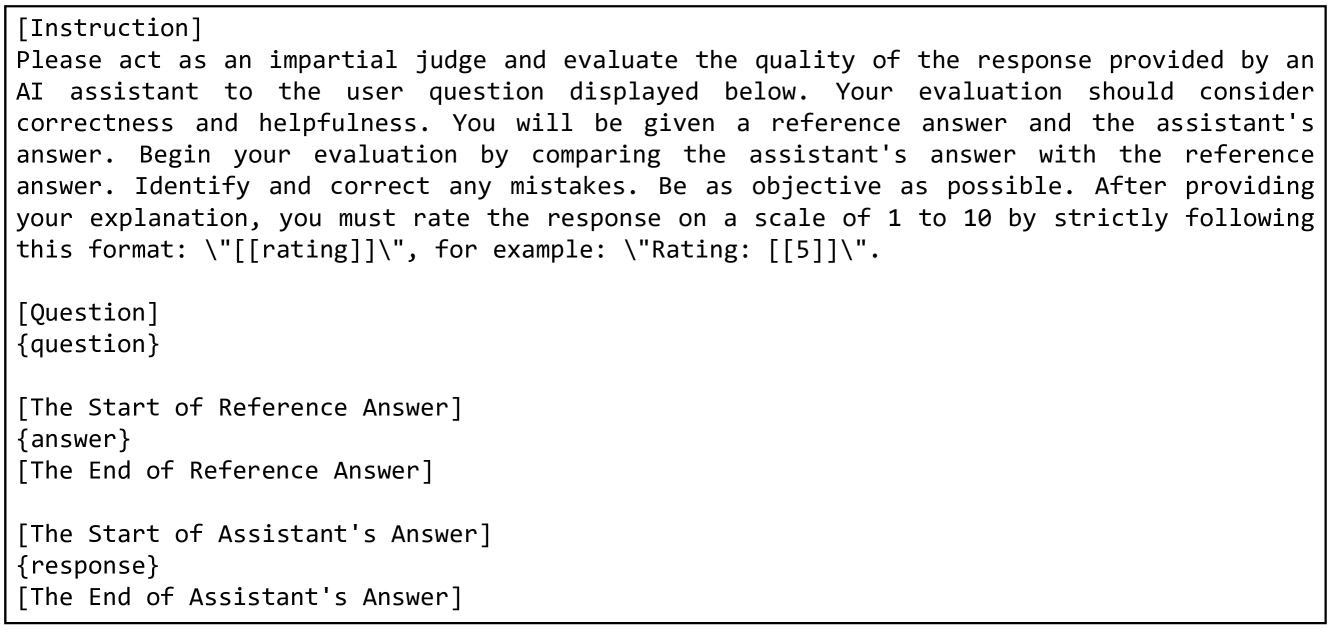

## Screenshot: Instruction/Question & Response Evaluation

### Overview

The image is a screenshot of a text-based interaction, likely from a platform evaluating an AI assistant's response to a user question. It contains the initial instruction given to the AI, the user's question, a placeholder for the reference answer, and a placeholder for the AI assistant's response. The purpose is to facilitate a comparative evaluation of the AI's performance.

### Components/Axes

The screenshot is structured into distinct sections:

* **[Instruction]**: Contains the prompt given to the AI assistant.

* **[Question]**: Contains the user's query.

* **[The Start of Reference Answer]**: Placeholder for the correct or ideal answer.

* **[The End of Reference Answer]**: Marks the end of the reference answer section.

* **[The Start of Assistant's Answer]**: Placeholder for the AI assistant's generated response.

* **[The End of Assistant's Answer]**: Marks the end of the AI assistant's response section.

There are no axes or numerical data present. It's purely textual content.

### Detailed Analysis / Content Details

Here's a transcription of the text within each section:

* **[Instruction]**: "Please act as an impartial judge and evaluate the quality of the response provided by an AI assistant to the user question displayed below. Your evaluation should consider correctness and helpfulness. You will be given a reference answer and the assistant’s answer. Begin your evaluation by comparing the assistant’s answer with the reference answer. Identify and correct any mistakes. Be as objective as possible. After providing your explanation, you must rate the response on a scale of 1 to 10 by strictly following this format: “\[\[rating]]”, for example: “\[\[5]]”."

* **[Question]**: "[question]"

* **[The Start of Reference Answer]**: "[answer]"

* **[The End of Reference Answer]**:

* **[The Start of Assistant's Answer]**: "[response]"

* **[The End of Assistant's Answer]**:

### Key Observations

The screenshot is a template for evaluation. The content within the "[question]", "[answer]", and "[response]" placeholders is missing. The instruction is very detailed, outlining the specific criteria for evaluation (correctness, helpfulness, objectivity, and a numerical rating). The format for the rating is explicitly defined.

### Interpretation

This screenshot represents a quality control mechanism for AI-generated responses. It's designed to ensure that the AI's output is accurate, useful, and meets predefined standards. The use of a reference answer allows for a direct comparison, and the instruction emphasizes the need for impartial judgment. The structured format facilitates consistent and quantifiable evaluation. The placeholders indicate that this is a dynamic system where different questions and responses can be evaluated using the same framework. The entire setup is geared towards improving the reliability and trustworthiness of AI-powered systems.