## Template: AI Response Evaluation Form

### Overview

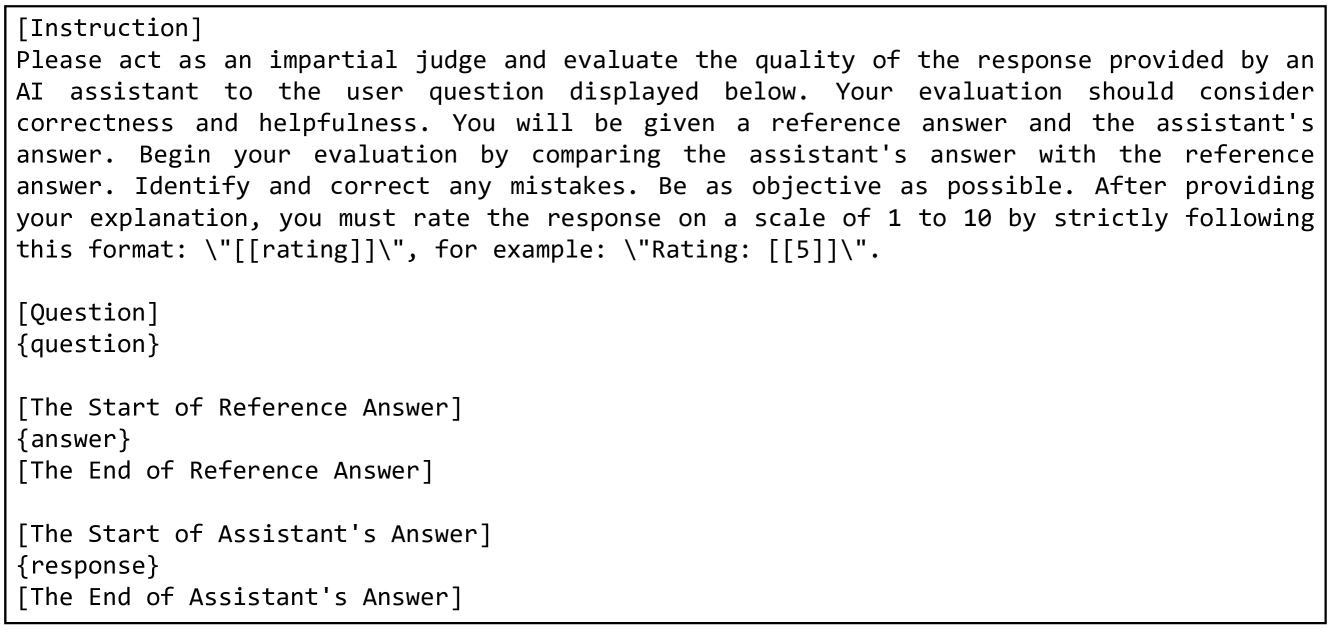

The image displays a structured template for evaluating an AI assistant's response. It includes placeholders for a user question, reference answer, and assistant's answer, along with instructions for impartial judgment.

### Components/Axes

- **Sections**:

1. `[Instruction]`: Guidelines for evaluation (correctness, helpfulness, objectivity).

2. `[Question]`: Placeholder for the user's query.

3. `[Reference Answer]`: Ground truth answer.

4. `[Assistant's Answer]`: Response to evaluate.

- **Formatting**:

- Ratings must follow `\\[[rating]]\\\\` (e.g., `\\[[5]]\\\\`).

### Detailed Analysis

- **Textual Content**:

- The template contains no actual question, reference answer, or assistant response. All fields (`{question}`, `{answer}`, `{response}`) are empty placeholders.

- Instructions emphasize comparing the assistant's answer to the reference answer and rating on a 1–10 scale.

### Key Observations

- **Missing Data**: No specific question, reference answer, or assistant response is provided in the image.

- **Structural Clarity**: The template is well-organized but lacks executable content for evaluation.

### Interpretation

The template outlines a methodology for assessing AI responses but cannot be used for actual evaluation without populated data. The absence of concrete examples (question, answers) renders the form non-functional in its current state. For a valid assessment, the placeholders must be replaced with real-world inputs.