## Scatter Plot: LiveCodeBench v5 Performance vs. Total Parameters

### Overview

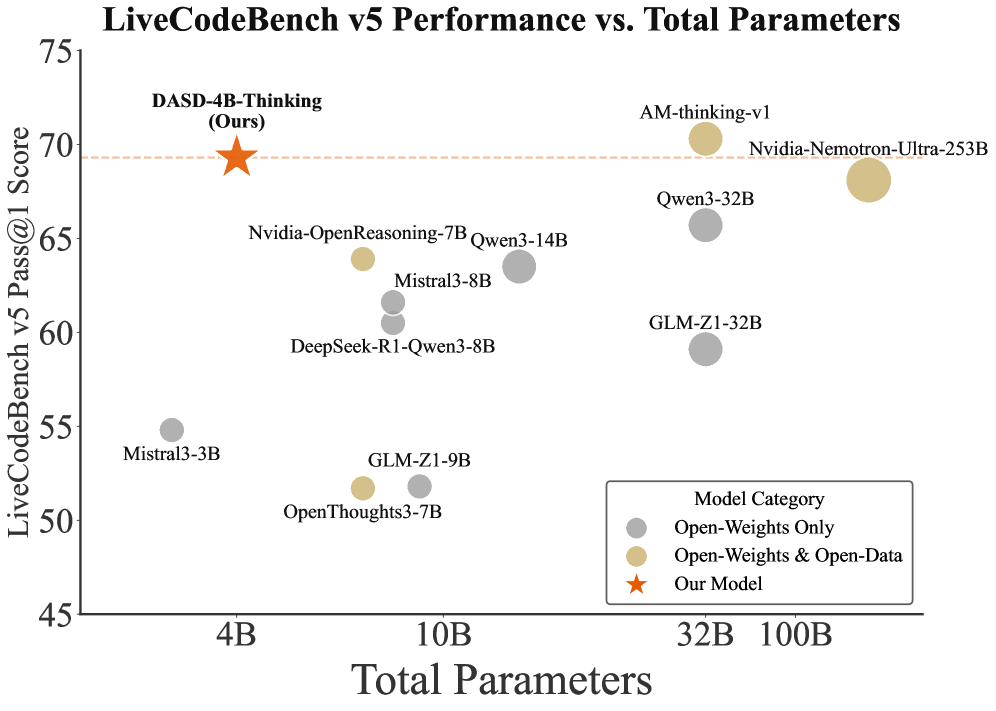

This scatter plot compares the performance of various large language models on the LiveCodeBench v5 coding benchmark against their total parameter count. It highlights one model, "DASD-4B-Thinking (Ours)," against a set of other open-weight models, framing the comparison as one of parameter efficiency: benchmark score (y-axis) versus model size (x-axis).

### Components/Axes

* **Chart Title:** "LiveCodeBench v5 Performance vs. Total Parameters"

* **Y-Axis:** "LiveCodeBench v5 Pass@1 Score". The scale runs from 45 to 75, with major tick marks at 45, 50, 55, 60, 65, 70, and 75.

* **X-Axis:** "Total Parameters". The scale is logarithmic, with labeled tick marks at 4B, 10B, 32B, and 100B (B = Billion).
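Because the x-axis is logarithmic, horizontal distance reflects the *ratio* between parameter counts rather than their difference. The short Python sketch below illustrates this; the helper name `decades_between` is hypothetical and not taken from the source. It shows that 4B to 32B and 32B to 256B occupy the same width, and that the ~253B Nemotron point sits roughly as far right of the 100B tick as the 10B tick sits right of 4B.

```python
import math


def decades_between(lo_b: float, hi_b: float) -> float:
    """Horizontal gap between two parameter counts on a log10 axis, in decades."""
    return math.log10(hi_b / lo_b)


# Equal ratios give equal horizontal gaps: 4B -> 32B and 32B -> 256B are both 8x.
print(f"4B -> 32B:    {decades_between(4, 32):.3f} decades")
print(f"32B -> 256B:  {decades_between(32, 256):.3f} decades")

# The 4B -> 10B gap is close to the 100B -> 253B gap (about 0.4 decades each),
# so a ~253B model lands noticeably to the right of the 100B tick.
print(f"4B -> 10B:    {decades_between(4, 10):.3f} decades")
print(f"100B -> 253B: {decades_between(100, 253):.3f} decades")
```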

* **Legend (Bottom-Right Corner):** A box titled "Model Category" defines three data series:

  * **Gray Circle:** "Open-Weights Only"

  * **Tan/Gold Circle:** "Open-Weights & Open-Data"

  * **Orange Star:** "Our Model"

* **Highlighted Element:** A dashed orange horizontal line extends from the "Our Model" data point (score ~70) across the chart, serving as a visual reference for its performance level.

### Detailed Analysis

**Data Points (Approximate Coordinates & Category):**

The following lists all labeled models, their approximate position on the chart, and their category based on color.

* **Top-Left Quadrant (High Score, Low Parameters):**

  * **DASD-4B-Thinking (Ours):** Orange Star. Position: X ≈ 4B, Y ≈ 70. This is the highlighted model.

* **Top-Right Quadrant (High Score, High Parameters):**

  * **AM-thinking-v1:** Tan Circle. Position: X ≈ 32B, Y ≈ 70.

  * **Nvidia-Nemotron-Ultra-253B:** Tan Circle. Position: X ≈ 253B (from the model name; plotted at the far right, beyond the 100B tick), Y ≈ 68.

  * **Qwen3-32B:** Gray Circle. Position: X ≈ 32B, Y ≈ 66.

* **Middle Region (Moderate Score, Moderate Parameters):**

  * **Nvidia-OpenReasoning-7B:** Tan Circle. Position: X ≈ 7B, Y ≈ 64.

  * **Qwen3-14B:** Gray Circle. Position: X ≈ 14B, Y ≈ 63.

  * **Mistral3-8B:** Gray Circle. Position: X ≈ 8B, Y ≈ 62.

  * **DeepSeek-R1-Qwen3-8B:** Gray Circle. Position: X ≈ 8B, Y ≈ 61.

  * **GLM-Z1-32B:** Gray Circle. Position: X ≈ 32B, Y ≈ 59.

* **Bottom Region (Lower Score, Varying Parameters):**

  * **Mistral3-3B:** Gray Circle. Position: X ≈ 3B, Y ≈ 55.

  * **GLM-Z1-9B:** Gray Circle. Position: X ≈ 9B, Y ≈ 52.

  * **OpenThoughts3-7B:** Tan Circle. Position: X ≈ 7B, Y ≈ 52.

**Trend Verification:**

* **General Trend:** There is a loose positive correlation; models with higher parameter counts (right side) tend to have higher scores (top). However, there is significant variance, especially in the 7B-32B range.

* **"Our Model" Trend:** The orange star (DASD-4B-Thinking) is a clear outlier. It achieves a score (~70) comparable to the top-performing models, which have roughly 8x to 63x more parameters (AM-thinking-v1 at 32B; Nemotron at ~253B).
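Both trend claims can be checked roughly by fitting a straight line of score against log10(parameters) to the eleven comparison models and measuring how far each point sits from that line. The Python sketch below does this with plain least squares. The coordinates are the eyeballed approximations listed above (Nemotron placed at 253B from its name), not published numbers, so the results are only indicative; the helper names are hypothetical.

```python
import math

# Approximate (total params in billions, LiveCodeBench v5 Pass@1) read-offs from the
# chart description; these are eyeballed estimates, not published numbers.
BASELINES = {
    "AM-thinking-v1": (32, 70),
    "Nvidia-Nemotron-Ultra-253B": (253, 68),  # x taken from the model name
    "Qwen3-32B": (32, 66),
    "Nvidia-OpenReasoning-7B": (7, 64),
    "Qwen3-14B": (14, 63),
    "Mistral3-8B": (8, 62),
    "DeepSeek-R1-Qwen3-8B": (8, 61),
    "GLM-Z1-32B": (32, 59),
    "Mistral3-3B": (3, 55),
    "GLM-Z1-9B": (9, 52),
    "OpenThoughts3-7B": (7, 52),
}
OURS_NAME, OURS_PARAMS, OURS_SCORE = "DASD-4B-Thinking", 4, 70


def fit_log_linear(points):
    """Least-squares fit of score = a + b * log10(params); also returns Pearson r."""
    xs = [math.log10(params) for params, _ in points]
    ys = [score for _, score in points]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b, sxy / math.sqrt(sxx * syy)


# Fit the trend on the comparison models only, so "Our Model" cannot pull the line up.
a, b, r = fit_log_linear(list(BASELINES.values()))


def residual(params_b, score):
    """Points above (+) or below (-) the fitted trend line."""
    return score - (a + b * math.log10(params_b))


print(f"trend: {b:.1f} points per 10x parameters, Pearson r = {r:.2f}")
print(f"{OURS_NAME}: {residual(OURS_PARAMS, OURS_SCORE):+.1f} vs. trend")
for name, (params, score) in sorted(BASELINES.items(), key=lambda kv: -residual(*kv[1])):
    ratio = params / OURS_PARAMS  # parameter multiple relative to the 4B model
    print(f"  {name}: {residual(params, score):+.1f} vs. trend, {ratio:.1f}x the parameters")
```

On these approximate values the fit shows a moderate positive correlation (r of roughly 0.64) and places DASD-4B-Thinking about 13 points above the trend, further than any comparison model. Fitting against log10(parameters) rather than raw parameters matches the chart's logarithmic x-axis.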

### Key Observations

1. **Efficiency Outlier:** The model labeled "Our Model" (DASD-4B-Thinking) demonstrates exceptional parameter efficiency, matching the performance of much larger models.

2. **Performance Clustering:** Models cluster into rough performance tiers:

   * **Top Tier (Score ~68-70):** Includes "Our Model," AM-thinking-v1, and Nvidia-Nemotron-Ultra-253B.

   * **Upper-Mid Tier (Score ~61-66):** Includes Qwen3-32B, Nvidia-OpenReasoning-7B, Qwen3-14B, Mistral3-8B, and DeepSeek-R1-Qwen3-8B.

   * **Lower-Mid Tier (Score ~52-59):** Includes GLM-Z1-32B, Mistral3-3B, GLM-Z1-9B, and OpenThoughts3-7B.

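3. **Category Distribution:** "Open-Weights & Open-Data" (tan) models appear in every performance tier, from AM-thinking-v1 and Nvidia-Nemotron-Ultra-253B at the top to OpenThoughts3-7B at the bottom. "Open-Weights Only" (gray) models appear only in the two middle tiers; the best of them, Qwen3-32B (~66), falls just short of the top tier. The top-performing group therefore consists of tan models plus the highlighted "Our Model."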
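Tabulating the approximate scores by tier and legend category makes the category distribution easy to check. In this Python sketch the tier cut points (67 and 60) are hypothetical thresholds placed in the gaps between the score ranges listed above, and the category strings are shorthand for the legend entries, not labels from the source.

```python
from collections import defaultdict

# Approximate (Pass@1, legend category) read-offs from the chart description.
# "open-weights" = gray, "open-weights+data" = tan, "ours" = orange star.
POINTS = {
    "DASD-4B-Thinking": (70, "ours"),
    "AM-thinking-v1": (70, "open-weights+data"),
    "Nvidia-Nemotron-Ultra-253B": (68, "open-weights+data"),
    "Qwen3-32B": (66, "open-weights"),
    "Nvidia-OpenReasoning-7B": (64, "open-weights+data"),
    "Qwen3-14B": (63, "open-weights"),
    "Mistral3-8B": (62, "open-weights"),
    "DeepSeek-R1-Qwen3-8B": (61, "open-weights"),
    "GLM-Z1-32B": (59, "open-weights"),
    "Mistral3-3B": (55, "open-weights"),
    "GLM-Z1-9B": (52, "open-weights"),
    "OpenThoughts3-7B": (52, "open-weights+data"),
}


def tier(score: float) -> str:
    """Assign a tier using hypothetical cut points between the listed score ranges."""
    if score >= 67:
        return "top"  # ~68-70
    if score >= 60:
        return "upper-mid"  # ~61-66
    return "lower-mid"  # ~52-59


def cross_tab(points):
    """Group model names by tier, then by legend category."""
    table = defaultdict(lambda: defaultdict(list))
    for name, (score, category) in points.items():
        table[tier(score)][category].append(name)
    return table


TABLE = cross_tab(POINTS)
for t in ("top", "upper-mid", "lower-mid"):
    counts = {category: len(names) for category, names in TABLE[t].items()}
    print(f"{t:>9}: {counts}")
```

With these values the tan category appears in all three tiers, while gray models appear only in the upper-mid and lower-mid tiers.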

### Interpretation

This chart is likely from a research paper or technical report introducing the "DASD-4B-Thinking" model. Its primary argument is that this new model achieves state-of-the-art or competitive performance on a coding benchmark while using significantly fewer parameters than existing models.

* **What the data suggests:** The plot challenges a simple "bigger is better" reading of LLM scaling: on this benchmark, total parameter count alone does not determine performance. The chart itself does not show what drives the gap; candidate factors include architecture, training-data quality, and training methodology (the "Thinking" in the model name suggests a reasoning-oriented training recipe).

* **How elements relate:** The dashed reference line from the orange star visually reinforces the central claim: this 4B model performs at the level of 32B+ models. The inclusion of various other models provides context, showing the competitive landscape and where this new model fits.

* **Notable Anomalies:** The most significant anomaly is the position of "Our Model." Another point of interest is that `Nvidia-OpenReasoning-7B` (tan) outperforms several larger gray models, which may hint that open-data training contributes to efficiency; however, `OpenThoughts3-7B` (also tan, at a similar size) scores only ~52, so category alone does not explain the difference. Conversely, `GLM-Z1-32B` (gray) underperforms relative to its size, scoring lower than several smaller models.