TECHNICAL ASSET FINGERPRINT

e5556bfd89812710ac4c8a20



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Bar Chart: Persuader's Success Rate with Different Helpers

### Overview

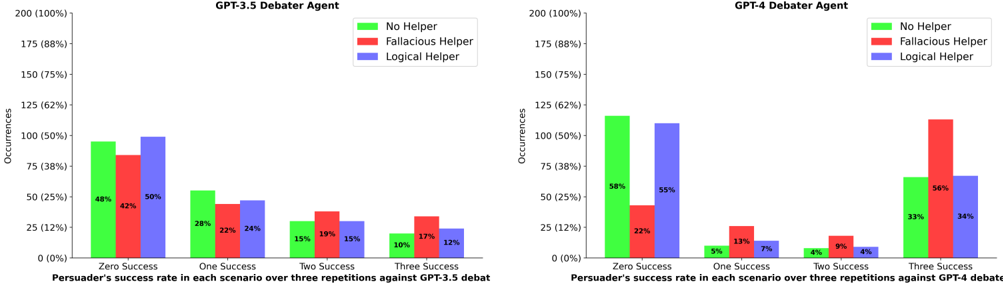

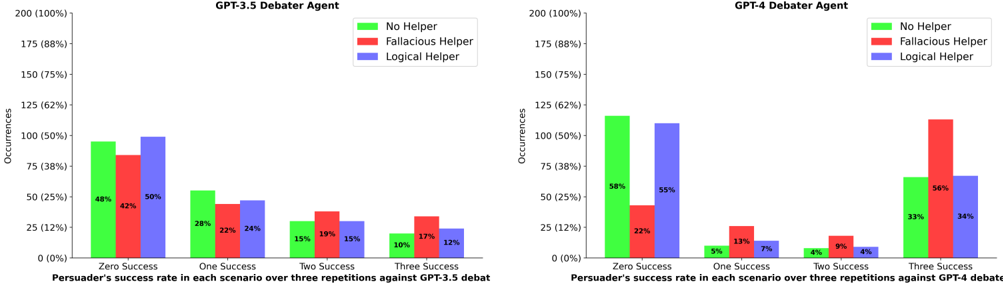

The image presents two bar charts comparing the persuader's success rate in debates against GPT-3.5 and GPT-4, respectively. The success rate is evaluated across scenarios with varying numbers of successful repetitions (Zero, One, Two, Three), and the charts compare the impact of using "No Helper," a "Fallacious Helper," and a "Logical Helper."

### Components/Axes

**Left Chart (GPT-3.5 Debater Agent):**

* **Title:** GPT-3.5 Debater Agent

* **Y-axis:** Occurrences, with scale markers at 0 (0%), 25 (12%), 50 (25%), 75 (38%), 100 (50%), 125 (62%), 150 (75%), 175 (88%), and 200 (100%).

* **X-axis:** Persuader's success rate in each scenario over three repetitions against GPT-3.5 debate. Categories are "Zero Success", "One Success", "Two Success", and "Three Success".

* **Legend:** Located at the top-right of the chart.
  * Green: No Helper
  * Red: Fallacious Helper
  * Blue: Logical Helper

**Right Chart (GPT-4 Debater Agent):**

* **Title:** GPT-4 Debater Agent

* **Y-axis:** Occurrences, with scale markers at 0 (0%), 25 (12%), 50 (25%), 75 (38%), 100 (50%), 125 (62%), 150 (75%), 175 (88%), and 200 (100%).

* **X-axis:** Persuader's success rate in each scenario over three repetitions against GPT-4 debate. Categories are "Zero Success", "One Success", "Two Success", and "Three Success".

* **Legend:** Located at the top-right of the chart.
  * Green: No Helper
  * Red: Fallacious Helper
  * Blue: Logical Helper
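The paired tick labels follow from mapping 200 occurrences to 100%, i.e. 200 scenarios per helper condition (an inference from the axis, not something the chart states). The sketch below regenerates the labels under that assumption; the names `TOTAL_SCENARIOS` and `tick_label` are illustrative. Python's built-in `round()` rounds halves to the nearest even integer, which happens to reproduce the printed values (12.5% appears as 12%, 37.5% as 38%); whether the original plotting code rounded this way is not known.

```python
# Assumption: the y-axis maximum of 200 occurrences corresponds to 100%,
# i.e. 200 scenarios per helper condition.
TOTAL_SCENARIOS = 200


def tick_label(occurrences: int, total: int = TOTAL_SCENARIOS) -> str:
    """Format a tick as 'count (pct%)'; round() uses round-half-to-even."""
    pct = 100 * occurrences / total
    return f"{occurrences} ({round(pct)}%)"


# Ticks every 25 occurrences, from 0 up to the axis maximum.
ticks = [tick_label(t) for t in range(0, TOTAL_SCENARIOS + 1, 25)]
print(", ".join(ticks))
```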

### Detailed Analysis

**GPT-3.5 Debater Agent Chart:**

* **Zero Success:**
  * No Helper (Green): Approximately 48%
  * Fallacious Helper (Red): Approximately 42%
  * Logical Helper (Blue): Approximately 50%

* **One Success:**
  * No Helper (Green): Approximately 28%
  * Fallacious Helper (Red): Approximately 22%
  * Logical Helper (Blue): Approximately 24%

* **Two Success:**
  * No Helper (Green): Approximately 15%
  * Fallacious Helper (Red): Approximately 19%
  * Logical Helper (Blue): Approximately 15%

* **Three Success:**
  * No Helper (Green): Approximately 10%
  * Fallacious Helper (Red): Approximately 17%
  * Logical Helper (Blue): Approximately 12%

**GPT-4 Debater Agent Chart:**

* **Zero Success:**
  * No Helper (Green): Approximately 58%
  * Fallacious Helper (Red): Approximately 22%
  * Logical Helper (Blue): Approximately 55%

* **One Success:**
  * No Helper (Green): Approximately 5%
  * Fallacious Helper (Red): Approximately 13%
  * Logical Helper (Blue): Approximately 7%

* **Two Success:**
  * No Helper (Green): Approximately 4%
  * Fallacious Helper (Red): Approximately 9%
  * Logical Helper (Blue): Approximately 4%

* **Three Success:**
  * No Helper (Green): Approximately 33%
  * Fallacious Helper (Red): Approximately 56%
  * Logical Helper (Blue): Approximately 34%

### Key Observations

* **GPT-3.5:** The "Logical Helper" has a slightly larger share of scenarios in the "Zero Success" category (50%) than "No Helper" (48%) and "Fallacious Helper" (42%); a taller "Zero Success" bar means more scenarios in which the persuader never succeeded. For all helper types, the share of scenarios decreases as the number of successful repetitions increases.

* **GPT-4:** The "Fallacious Helper" has a much larger share of scenarios in the "Three Success" category (56%) than "No Helper" (33%) and "Logical Helper" (34%), and a much smaller share in "Zero Success" (22% versus 58% and 55%). The "No Helper" condition has the largest share of "Zero Success" outcomes.
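The four categories are easier to compare when collapsed into one number per condition. The sketch below uses the approximate percentages listed above to compute the mean number of persuader successes per scenario; the names `DATA` and `mean_successes` are illustrative, and the results inherit the reading error of the chart values.

```python
# Approximate bar heights (% of scenarios) read from the charts; the list index
# is the number of persuader successes (0-3) over the three repetitions.
DATA = {
    "GPT-3.5": {
        "No Helper": [48, 28, 15, 10],
        "Fallacious Helper": [42, 22, 19, 17],
        "Logical Helper": [50, 24, 15, 12],
    },
    "GPT-4": {
        "No Helper": [58, 5, 4, 33],
        "Fallacious Helper": [22, 13, 9, 56],
        "Logical Helper": [55, 7, 4, 34],
    },
}


def mean_successes(shares):
    """Expected successes per scenario; renormalizes because rounded shares
    may not sum to exactly 100."""
    total = sum(shares)
    return sum(k * s for k, s in enumerate(shares)) / total


for model, helpers in DATA.items():
    for helper, shares in helpers.items():
        print(f"{model:8} {helper:18} {mean_successes(shares):.2f}")
```

With these values, the fallacious helper raises the mean from about 0.87 to 1.11 successes per scenario against GPT-3.5, and from about 1.12 to 1.99 against GPT-4; the logical helper changes either baseline by less than 0.06.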

### Interpretation

The charts illustrate the impact of different types of helpers on the persuader's success rate against GPT-3.5 and GPT-4 debater agents. The data suggests that the effectiveness of a helper depends on the model being debated against: helpers shift the GPT-3.5 distribution by only a few percentage points, while the fallacious helper reshapes the GPT-4 distribution substantially.

* **GPT-3.5:** The logical helper provides no clear advantage; its distribution is nearly identical to the no-helper baseline, with slightly more "Zero Success" scenarios (50% versus 48%). The fallacious helper modestly shifts outcomes toward more successes, lowering "Zero Success" to 42% and raising "Three Success" to 17%.

* **GPT-4:** A fallacious helper is surprisingly effective: it raises the share of scenarios in which the persuader succeeds in all three repetitions from 33% to 56%. This could indicate that GPT-4 is more susceptible to flawed reasoning, or that fallacious arguments are more persuasive in certain contexts. Without a helper, the persuader most often fails in all three repetitions (58%).

The data highlights the nuanced relationship between AI models, argumentation strategies, and the role of helpers in debates. It suggests that the optimal strategy for persuasion may vary depending on the specific AI model being targeted.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Bar Chart: Persuader Success Rate vs. Helper Type

### Overview

The image presents two side-by-side bar charts comparing the success rate of a "Persuader" against GPT-3.5 and GPT-4 in a debate scenario. The charts show the distribution of "Occurrences" across different levels of success (Zero, One, Two, Three) for each type of "Helper" (No Helper, Fallacious Helper, Logical Helper). The x-axis represents the success levels, and the y-axis represents the number of occurrences, expressed as both a count and a percentage.

### Components/Axes

* **Title (Left Chart):** "GPT-3.5 Debater Agent"

* **Title (Right Chart):** "GPT-4 Debater Agent"

* **X-axis Label:** "Persuader's success rate in each scenario over three repetitions against GPT-3.5 debate" (Left Chart) / "Persuader's success rate in each scenario over three repetitions against GPT-4 debate" (Right Chart)

* **Y-axis Label:** "Occurrences" (with scale from 0 to 200, also showing percentage equivalents: 0% to 100%)

* **Legend:**
  * Green: "No Helper"
  * Red: "Fallacious Helper"
  * Blue: "Logical Helper"

* **X-axis Markers:** "Zero Success", "One Success", "Two Success", "Three Success"

### Detailed Analysis or Content Details

**Left Chart (GPT-3.5):**

* **No Helper (Green):**
  * Zero Success: Approximately 48% (96 occurrences)
  * One Success: Approximately 28% (56 occurrences)
  * Two Success: Approximately 15% (30 occurrences)
  * Three Success: Approximately 10% (20 occurrences)

* **Fallacious Helper (Red):**
  * Zero Success: Approximately 42% (84 occurrences)
  * One Success: Approximately 22% (44 occurrences)
  * Two Success: Approximately 19% (38 occurrences)
  * Three Success: Approximately 17% (34 occurrences)

* **Logical Helper (Blue):**
  * Zero Success: Approximately 50% (100 occurrences)
  * One Success: Approximately 24% (48 occurrences)
  * Two Success: Approximately 15% (30 occurrences)
  * Three Success: Approximately 12% (24 occurrences)

**Right Chart (GPT-4):**

* **No Helper (Green):**
  * Zero Success: Approximately 58% (116 occurrences)
  * One Success: Approximately 5% (10 occurrences)
  * Two Success: Approximately 4% (8 occurrences)
  * Three Success: Approximately 33% (66 occurrences)

* **Fallacious Helper (Red):**
  * Zero Success: Approximately 22% (44 occurrences)
  * One Success: Approximately 13% (26 occurrences)
  * Two Success: Approximately 9% (18 occurrences)
  * Three Success: Approximately 56% (112 occurrences)

* **Logical Helper (Blue):**
  * Zero Success: Approximately 55% (110 occurrences)
  * One Success: Approximately 7% (14 occurrences)
  * Two Success: Approximately 4% (8 occurrences)
  * Three Success: Approximately 34% (68 occurrences)

### Key Observations

* **GPT-3.5:** The "No Helper" and "Logical Helper" scenarios show similar distributions, peaking at "Zero Success" and declining steadily toward "Three Success". The "Fallacious Helper" has slightly fewer occurrences at "Zero Success" and "One Success" and slightly more at "Two Success" and "Three Success".

* **GPT-4:** The "No Helper" scenario has a high occurrence at "Zero Success". The "Fallacious Helper" shows a significant peak at "Three Success", indicating it may be surprisingly effective in some cases. The "Logical Helper" closely tracks "No Helper", with most scenarios at either "Zero Success" or "Three Success".

* **Comparison:** Without a helper, outcomes against GPT-4 are more polarized than against GPT-3.5: both "Zero Success" (58% vs. 48%) and "Three Success" (33% vs. 10%) are more common. The "Fallacious Helper" has a more pronounced effect on GPT-4, leading to a much higher share of "Three Success" outcomes.

### Interpretation

The data suggests that the type of "Helper" affects the Persuader's success rate against both models, modestly for GPT-3.5 and strongly for GPT-4. The "Fallacious Helper" appears to be counterintuitively effective against GPT-4, potentially because GPT-4 attempts to address the fallacies directly, giving the persuader an opening. The "Logical Helper" does not consistently improve the Persuader's success rate against either model, suggesting that simply providing logical arguments is not enough to change the debater's position. Without a helper, GPT-4 resists persuasion completely in more scenarios than GPT-3.5 (58% vs. 48%) but is also fully persuaded more often (33% vs. 10%), so its outcomes are more consistent across repetitions of the same scenario. The differences in the distributions highlight that the two models respond differently to the same persuasion strategies. The data also suggests that the nature of the debate and the specific fallacies employed could play a crucial role in determining the outcome.
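Because the axis maps 200 occurrences to 100%, each helper condition should account for about 200 scenarios across its four categories. The sketch below, assuming that 200-scenario total, converts the percentages shared by the descriptions of this figure into counts and flags any condition whose total drifts from 200 by more than rounding allows (four categories, each off by at most half a percentage point, i.e. one occurrence). `SHARES` and `to_counts` are illustrative names.

```python
# Assumption: 200 occurrences = 100% on the y-axis, so each helper
# condition covers 200 scenarios in total.
TOTAL_SCENARIOS = 200

# Approximate percentages per helper, ordered Zero, One, Two, Three Success.
SHARES = {
    "GPT-3.5": {
        "No Helper": [48, 28, 15, 10],
        "Fallacious Helper": [42, 22, 19, 17],
        "Logical Helper": [50, 24, 15, 12],
    },
    "GPT-4": {
        "No Helper": [58, 5, 4, 33],
        "Fallacious Helper": [22, 13, 9, 56],
        "Logical Helper": [55, 7, 4, 34],
    },
}


def to_counts(percentages, total=TOTAL_SCENARIOS):
    """Convert percentages of the scenario total into occurrence counts."""
    return [round(total * p / 100) for p in percentages]


for model, helpers in SHARES.items():
    for helper, percentages in helpers.items():
        counts = to_counts(percentages)
        # Each count may be off by one occurrence from rounding, so allow +/-4.
        status = "ok" if abs(sum(counts) - TOTAL_SCENARIOS) <= 4 else "CHECK"
        print(f"{model:8} {helper:18} {counts} sum={sum(counts)} {status}")
```

Applied to these values, the GPT-3.5 No Helper and Logical Helper totals come to 202 and the other four conditions to exactly 200.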


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Grouped Bar Charts: Debater Agent Success Rates

### Overview

The image displays two side-by-side grouped bar charts comparing the performance of two AI models, GPT-3.5 and GPT-4, acting as "Debater Agents." The charts measure the "Persuader's success rate" against these agents under three different helper conditions over three repetitions. The left chart is titled "GPT-3.5 Debater Agent" and the right chart is titled "GPT-4 Debater Agent."

### Components/Axes

* **Chart Titles:** "GPT-3.5 Debater Agent" (left), "GPT-4 Debater Agent" (right).

* **Y-Axis (Both Charts):** Labeled "Occurrences." The scale runs from 0 to 200, with major tick marks at 0, 25, 50, 75, 100, 125, 150, 175, 200. Corresponding percentages are shown in parentheses: (0%), (12%), (25%), (38%), (50%), (62%), (75%), (88%), (100%).

* **X-Axis (Both Charts):** Labeled "Persuader's success rate in each scenario over three repetitions against GPT-3.5 debate" (left) and "...against GPT-4 debate" (right). The categories are:
  * Zero Success
  * One Success
  * Two Success
  * Three Success

* **Legend (Top-Right of each chart):**
  * **Green Bar:** "No Helper"
  * **Red Bar:** "Fallacious Helper"
  * **Blue Bar:** "Logical Helper"

### Detailed Analysis

**GPT-3.5 Debater Agent (Left Chart):**

* **Trend Verification:** The overall trend shows a decline in occurrences as the number of successes increases. The "No Helper" (green) and "Logical Helper" (blue) conditions show a steady downward slope. The "Fallacious Helper" (red) condition peaks at "Zero Success" and then declines, but remains the highest bar in the "Two Success" and "Three Success" categories.

* **Data Points (Approximate Occurrences & Labeled Percentages):**
  * **Zero Success:**
    * No Helper (Green): ~95 occurrences (48%)
    * Fallacious Helper (Red): ~85 occurrences (42%)
    * Logical Helper (Blue): ~100 occurrences (50%)
  * **One Success:**
    * No Helper (Green): ~55 occurrences (28%)
    * Fallacious Helper (Red): ~45 occurrences (22%)
    * Logical Helper (Blue): ~48 occurrences (24%)
  * **Two Success:**
    * No Helper (Green): ~30 occurrences (15%)
    * Fallacious Helper (Red): ~38 occurrences (19%)
    * Logical Helper (Blue): ~30 occurrences (15%)
  * **Three Success:**
    * No Helper (Green): ~20 occurrences (10%)
    * Fallacious Helper (Red): ~34 occurrences (17%)
    * Logical Helper (Blue): ~24 occurrences (12%)

**GPT-4 Debater Agent (Right Chart):**

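* **Trend Verification:** The trend is more complex: every condition is U-shaped, with most occurrences at "Zero Success" or "Three Success" and few at "One Success" or "Two Success." For "No Helper" and "Logical Helper," occurrences are highest at "Zero Success" (58% and 55%), drop sharply for the middle categories, and rise again at "Three Success" (33% and 34%). For the "Fallacious Helper," the pattern is inverted: "Zero Success" falls to 22% and occurrences peak at "Three Success" (56%).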

* **Data Points (Approximate Occurrences & Labeled Percentages):**
  * **Zero Success:**
    * No Helper (Green): ~115 occurrences (58%)
    * Fallacious Helper (Red): ~45 occurrences (22%)
    * Logical Helper (Blue): ~110 occurrences (55%)
  * **One Success:**
    * No Helper (Green): ~10 occurrences (5%)
    * Fallacious Helper (Red): ~26 occurrences (13%)
    * Logical Helper (Blue): ~14 occurrences (7%)
  * **Two Success:**
    * No Helper (Green): ~8 occurrences (4%)
    * Fallacious Helper (Red): ~18 occurrences (9%)
    * Logical Helper (Blue): ~8 occurrences (4%)
  * **Three Success:**
    * No Helper (Green): ~66 occurrences (33%)
    * Fallacious Helper (Red): ~112 occurrences (56%)
    * Logical Helper (Blue): ~68 occurrences (34%)

### Key Observations

1. **Different Outcome Patterns:** The two models produce differently shaped distributions. Against GPT-3.5, the persuader's most frequent outcome is Zero Success under every helper condition, and occurrences decline steadily with more successes. Against GPT-4, Zero Success is most frequent only with No Helper or the Logical Helper; with the Fallacious Helper, Three Success is most frequent.

2. **Fallacious Helper Impact:** The "Fallacious Helper" has a dramatically different effect on each model. For GPT-3.5, it slightly reduces Zero Success (42% vs. 48%) and gives the highest shares at Two and Three Success (19% and 17%). For GPT-4, it drastically reduces Zero Success (22% vs. 58%) and is the dominant condition for achieving Three Success (56%).

3. **Logical Helper Ineffectiveness:** The "Logical Helper" performs very similarly to the "No Helper" condition for both models, suggesting it provided little to no advantage in these debate scenarios.

4. **Polarized Results Against GPT-4:** Against GPT-4, the persuader tends to either fail in all three repetitions (Zero Success) or succeed in all three (Three Success) under every condition. The middle outcomes (One/Two Success) are rare, together accounting for at most 22% of scenarios.
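The polarization can be quantified against a reference model that is an assumption of this sketch, not something the charts state: if each of the three repetitions were an independent trial with one shared success probability p, the success count would follow a binomial distribution, with p matched to the observed mean. The hypothetical helper `binomial_reference` below computes that reference distribution for the approximate GPT-4 shares.

```python
from math import comb

# Approximate GPT-4 shares (%) for Zero, One, Two, Three persuader successes.
GPT4 = {
    "No Helper": [58, 5, 4, 33],
    "Fallacious Helper": [22, 13, 9, 56],
    "Logical Helper": [55, 7, 4, 34],
}


def binomial_reference(shares, n=3):
    """Percent of scenarios expected at 0..n successes if every repetition
    succeeded independently with one probability p matched to the observed mean."""
    total = sum(shares)
    p = sum(k * s for k, s in enumerate(shares)) / (n * total)
    return [100 * comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]


for helper, shares in GPT4.items():
    expected = [round(e) for e in binomial_reference(shares)]
    print(f"{helper:18} observed {shares}  independent-trials {expected}")
```

For No Helper, independent repetitions would place only about 25% of scenarios at Zero Success and 5% at Three Success, against the observed 58% and 33%, so outcomes within a scenario are far more correlated than independent trials would produce.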

### Interpretation

The data suggests a fundamental difference in how GPT-3.5 and GPT-4, as debaters, respond to the persuader and to the arguments its helpers supply.

* **GPT-3.5** yields less consistent outcomes across repetitions: the persuader's success count is spread across all four categories and declines steadily from Zero to Three Success. The helpers produce only modest, incremental changes; the fallacious helper moves a few percentage points of scenarios toward Two and Three Success.

* **GPT-4** yields highly consistent outcomes per scenario: the persuader usually either fails or succeeds in all three repetitions. Its strong baseline resistance (58% Zero Success with no helper) is largely overturned by the fallacious helper, which raises the persuader's Three Success share to 56%. This could indicate that GPT-4 is more susceptible to being misled by fallacious arguments than by sound ones. The lack of impact from the logical helper is surprising and may indicate that the helper's logical arguments added little beyond what the persuader already produced unaided.

**In essence, the charts suggest that GPT-4 reacts to a given debate scenario more deterministically than GPT-3.5, and that fallacious argumentation shifts GPT-4's outcomes far more than GPT-3.5's.** The "Fallacious Helper" acts as a disruptive catalyst against GPT-4, while being a minor perturbation against GPT-3.5.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Bar Charts: Persuader Success Rates with Different Helper Agents

### Overview

The image contains two side-by-side bar charts comparing the success rates of persuaders against GPT-3.5 and GPT-4 debater agents across three helper scenarios: No Helper, Fallacious Helper, and Logical Helper. Each chart shows occurrences (in percentages) for persuader success rates categorized as Zero, One, Two, or Three Successes over three debate repetitions.

---

### Components/Axes

**Left Chart (GPT-3.5 Debater Agent):**

- **X-Axis**: Persuader's success rate categories:
  - Zero Success
  - One Success
  - Two Success
  - Three Success

- **Y-Axis**: Occurrences (0 to 200, with percentage equivalents from 0% to 100%)

- **Legend**:
  - Green: No Helper
  - Red: Fallacious Helper
  - Blue: Logical Helper

- **Title**: "GPT-3.5 Debater Agent" (top-center)

**Right Chart (GPT-4 Debater Agent):**

- Identical structure to the left chart but with different numerical values.

- **Title**: "GPT-4 Debater Agent" (top-center)

**Spatial Grounding**:

- Legends are positioned in the top-right corner of each chart.

- Bars are clustered by helper type (green/red/blue) within each success category.

---

### Detailed Analysis

**GPT-3.5 Debater Agent (Left Chart):**

- **Zero Success**:
  - No Helper: 48%
  - Fallacious Helper: 42%
  - Logical Helper: 50%

- **One Success**:
  - No Helper: 28%
  - Fallacious Helper: 22%
  - Logical Helper: 24%

- **Two Success**:
  - No Helper: 15%
  - Fallacious Helper: 19%
  - Logical Helper: 15%

- **Three Success**:
  - No Helper: 10%
  - Fallacious Helper: 17%
  - Logical Helper: 12%

**GPT-4 Debater Agent (Right Chart):**

- **Zero Success**:
  - No Helper: 58%
  - Fallacious Helper: 22%
  - Logical Helper: 55%

- **One Success**:
  - No Helper: 5%
  - Fallacious Helper: 13%
  - Logical Helper: 7%

- **Two Success**:
  - No Helper: 4%
  - Fallacious Helper: 9%
  - Logical Helper: 4%

- **Three Success**:
  - No Helper: 33%
  - Fallacious Helper: 56%
  - Logical Helper: 34%

---

### Key Observations

1. **GPT-3.5 Trends**:
   - Logical Helpers have the largest share at Zero Success (50%), i.e. slightly more scenarios in which the persuader never succeeded, and their share declines in higher success categories.
   - Fallacious Helpers have the largest share at Three Success (17%, versus 10% for No Helper and 12% for Logical Helpers).
   - No Helper has the largest share at One Success (28%) and the smallest at Three Success (10%).

2. **GPT-4 Trends**:
   - Logical Helpers nearly match No Helper at Zero Success (55% vs. 58%) and at Three Success (34% vs. 33%).
   - Fallacious Helpers achieve the highest Three Success rate (56%), significantly outperforming the others.
   - No Helper is bimodal: it peaks at Zero Success (58%), has a second peak at Three Success (33%), and drops to 5% and 4% at One and Two Success.

3. **Cross-Model Comparison**:
   - With Fallacious Helpers, the persuader reaches Three Success far more often against GPT-4 (56%) than against GPT-3.5 (17%), a larger gap than the No Helper baseline shows (33% vs. 10%).
   - Logical Helpers underperform in GPT-4's Three Success category (34% vs. Fallacious 56%).
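The cross-model comparison can also be expressed as percentage-point changes relative to the No Helper baseline. The sketch below computes them from the approximate values listed above; `SHARES` and `shift_vs_baseline` are illustrative names, and the inputs carry the reading error of the chart values.

```python
# Approximate shares (%) for Zero, One, Two, Three persuader successes.
SHARES = {
    "GPT-3.5": {
        "No Helper": [48, 28, 15, 10],
        "Fallacious Helper": [42, 22, 19, 17],
        "Logical Helper": [50, 24, 15, 12],
    },
    "GPT-4": {
        "No Helper": [58, 5, 4, 33],
        "Fallacious Helper": [22, 13, 9, 56],
        "Logical Helper": [55, 7, 4, 34],
    },
}
CATEGORIES = ("Zero", "One", "Two", "Three")


def shift_vs_baseline(model, helper, baseline="No Helper"):
    """Percentage-point change of a helper condition relative to the baseline."""
    return {
        cat: h - b
        for cat, h, b in zip(CATEGORIES, SHARES[model][helper], SHARES[model][baseline])
    }


for model in SHARES:
    for helper in ("Fallacious Helper", "Logical Helper"):
        print(model, helper, shift_vs_baseline(model, helper))
```

With these values, the fallacious helper adds about 7 points to Three Success against GPT-3.5 and about 23 points against GPT-4, while the logical helper moves no category by more than 4 points for either model.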

---

### Interpretation

The data suggests that **Fallacious Helper agents** are disproportionately effective at producing high persuader success (e.g., Three Success), particularly against GPT-4. This could indicate that fallacious reasoning exploits weaknesses in GPT-4's debate strategy. Conversely, **Logical Helpers** produce distributions nearly identical to the **No Helper** baseline for both models, suggesting that adding logical arguments gives the persuader little advantage; whether a helper improves persuader performance depends strongly on the helper type and the target model.

Notably, the stark contrast in GPT-4's Three Success rates (Fallacious 56% vs. Logical 34%) raises questions about the robustness of LLM debaters against fallacious argumentation, a concern for deploying language models in settings where they must evaluate persuasive claims.
