## Flowchart: Text Generation Process with Draft and Target Models

### Overview

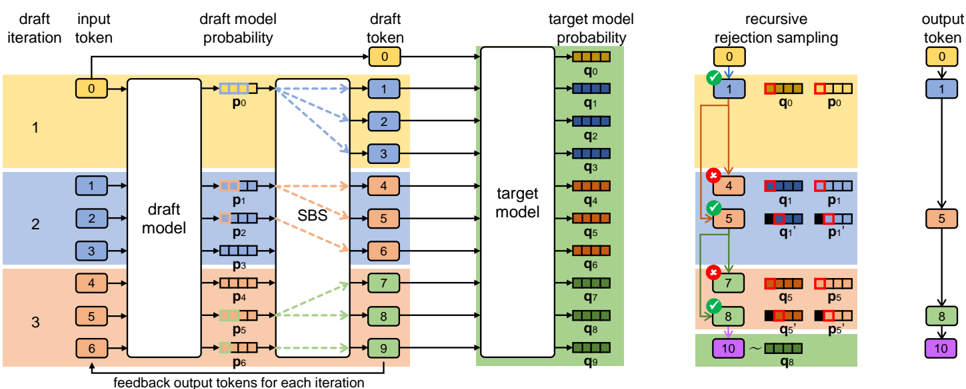

The image depicts a multi-stage text generation pipeline involving draft models, target models, and recursive rejection sampling. The process includes iterative refinement through feedback loops and probabilistic token selection.

### Components

1. **Left Section (Draft Iterations)**

- **Draft Iteration Blocks**: Three horizontal bands (yellow, blue, orange) labeled 1, 2, 3

- **Input Tokens**: Labeled 0-6 with color-coded blocks (yellow: 0, blue: 1-3, orange: 4-6)

- **Draft Model Probabilities**: p₀-p₆ with dashed arrows connecting to draft model

- **Feedback Output Tokens**: p₀-p₆ with green arrows pointing to target model

2. **Center Section (Target Model)**

- **Target Model Probabilities**: q₀-q₉ with colored blocks (yellow: q₀, blue: q₁-q₃, green: q₄-q₉)

- **Recursive Rejection Sampling**:

  - Checkmarks (✓) and X marks (✗) indicating token acceptance/rejection

  - Color-coded blocks matching draft/target model probabilities

3. **Right Section (Output)**

- **Output Tokens**: Labeled 0, 1, 5, 8, 10 with color progression (yellow → blue → green → purple)

- **Flow Arrows**: Connect rejection sampling to final output

### Detailed Analysis

1. **Draft Model Process**

- Input tokens (0-6) are processed through three draft iterations

- Each iteration generates draft model probabilities (p₀-p₆)

- The drafted tokens and their probabilities are passed on to the target model for verification

2. **Target Model Evaluation**

- Target model generates probabilities q₀-q₉ for each token

- Recursive rejection sampling compares draft (p) and target (q) probabilities

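- Acceptance (✓) depends on the ratio of target to draft probability (e.g., q₁/p₁): in standard speculative sampling, a drafted token is accepted with probability min(1, q/p), so a token the target model rates at least as likely as the draft model does is always kept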

3. **Output Generation**

- Selected tokens (0,1,5,8,10) show progressive color change from yellow to purple

- The output sequence consists of the tokens that survive rejection sampling, plus any replacement tokens drawn where a draft token was rejected
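The comparison of draft (p) and target (q) probabilities described above can be illustrated with the accept/reject rule from standard speculative sampling. This is a minimal sketch under that assumption: the diagram does not spell out the exact rule, and the function name `accept_or_resample` and the list-based distributions are hypothetical.

```python
import random


def accept_or_resample(token, p, q, rng):
    """Check one drafted token against the target distribution.

    p, q: probability lists over the same vocabulary (draft, target).
    token: index drawn from p, so p[token] > 0 is assumed.
    Returns (chosen_token, accepted_flag).
    """
    # Accept the drafted token with probability min(1, q/p).
    accept_prob = min(1.0, q[token] / p[token])
    if rng.random() < accept_prob:
        return token, True

    # On rejection, draw a replacement from the renormalised
    # residual distribution max(q - p, 0).
    residual = [max(qi - pi, 0.0) for pi, qi in zip(p, q)]
    total = sum(residual)
    weights = [r / total for r in residual]
    replacement = rng.choices(range(len(q)), weights=weights, k=1)[0]
    return replacement, False


if __name__ == "__main__":
    rng = random.Random(0)
    draft = [0.6, 0.3, 0.1]   # hypothetical draft probabilities
    target = [0.2, 0.5, 0.3]  # hypothetical target probabilities
    print(accept_or_resample(0, draft, target, rng))
```

A rejection can only happen when the target assigns the token less probability than the draft did, which guarantees that the residual has positive mass somewhere else in the vocabulary.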

### Key Observations

1. **Iterative Refinement**: Three draft iterations suggest progressive text generation improvement

2. **Probabilistic Gating**: Rejection sampling uses both draft and target model probabilities for token selection

3. **Color Coding**:

- Yellow (0-3) → Blue (4-6) → Green (7-9) → Purple (10) indicates increasing confidence

- Red X marks show rejected tokens (e.g., token 4 rejected in iteration 2)

4. **Feedback Mechanism**: Green arrows show continuous feedback from draft to target model
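The rejected-token pattern noted above (a ✗ followed by a further attempt) is what a recursive form of rejection sampling over several draft candidates would produce. The sketch below shows one common formulation, assuming the candidates are drawn independently from a single draft distribution; the scheme in the figure may differ (for example, by sampling candidates without replacement), and `recursive_rejection_sample` is a hypothetical name.

```python
import random


def recursive_rejection_sample(candidates, p, q, rng):
    """Try draft candidates in order; return (token, accepted_flag).

    candidates: token indices assumed to be drawn i.i.d. from p.
    p, q: probability lists over the same vocabulary (draft, target).
    """
    q = list(q)  # working copy of the target distribution
    for token in candidates:
        # Accept with probability min(1, q/p) under the current target.
        if rng.random() < min(1.0, q[token] / p[token]):
            return token, True
        # Rejected: replace the target by the renormalised residual
        # max(q - p, 0) before testing the next candidate.
        residual = [max(qi - pi, 0.0) for pi, qi in zip(p, q)]
        total = sum(residual)
        q = [r / total for r in residual]
    # Every candidate was rejected: sample from the final residual.
    fallback = rng.choices(range(len(q)), weights=q, k=1)[0]
    return fallback, False


if __name__ == "__main__":
    rng = random.Random(0)
    draft = [0.5, 0.3, 0.2]   # hypothetical draft probabilities
    target = [0.1, 0.3, 0.6]  # hypothetical target probabilities
    print(recursive_rejection_sample([0, 1], draft, target, rng))
```

Each rejection shifts the working target distribution away from tokens the draft over-proposes, so later candidates are judged against what the target still "owes" rather than against the original q.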

### Interpretation

This diagram represents a sophisticated text generation system combining:

1. **Draft Models**: For initial token generation with iterative refinement

2. **Target Models**: As quality benchmarks for token evaluation

3. **Rejection Sampling**: To ensure output quality through probabilistic comparison

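The system appears to implement speculative decoding, an inference-time scheme, rather than a training procedure such as reinforcement learning from human feedback (RLHF):

- Draft models generate candidate tokens

- The target model scores those candidates

- Rejection sampling decides which candidates enter the final output sequence

- Accepted tokens are fed back as context for the next draft iteration; no model weights are updated in the process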

The color progression and token selection pattern suggest a mechanism in which draft models propose tokens cheaply and the target model filters them, so that only tokens consistent with the target model's probabilities reach the output.
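The overall draft-then-verify flow can be sketched end to end with toy models. This is an illustrative sketch only: `toy_draft`, `toy_target`, `VOCAB`, and `speculative_step` are hypothetical stand-ins, the acceptance rule is the standard speculative-sampling one rather than anything confirmed by the figure, and the target model is called position by position here instead of in one parallel pass.

```python
import random

VOCAB = 4  # hypothetical toy vocabulary size


def toy_draft(context):
    # Hypothetical draft model: uniform over the vocabulary.
    return [1.0 / VOCAB] * VOCAB


def toy_target(context):
    # Hypothetical target model: prefers the successor of the last token.
    nxt = (context[-1] + 1) % VOCAB
    return [0.7 if t == nxt else 0.1 for t in range(VOCAB)]


def sample(dist, rng):
    return rng.choices(range(len(dist)), weights=dist, k=1)[0]


def speculative_step(context, draft, target, k, rng):
    """Draft k tokens, then verify them left to right against the target."""
    # 1. Draft k tokens autoregressively, remembering each distribution.
    drafted, p_dists, ctx = [], [], list(context)
    for _ in range(k):
        p = draft(ctx)
        tok = sample(p, rng)
        drafted.append(tok)
        p_dists.append(p)
        ctx.append(tok)

    # 2. Verify: keep accepted tokens, stop at the first rejection.
    out = list(context)
    for tok, p in zip(drafted, p_dists):
        q = target(out)
        if rng.random() < min(1.0, q[tok] / p[tok]):
            out.append(tok)
            continue
        residual = [max(qi - pi, 0.0) for pi, qi in zip(p, q)]
        total = sum(residual)
        out.append(sample([r / total for r in residual], rng))
        return out  # later drafted tokens are discarded

    # 3. All k tokens accepted: take one extra token from the target.
    out.append(sample(target(out), rng))
    return out


if __name__ == "__main__":
    print(speculative_step([0], toy_draft, toy_target, k=3, rng=random.Random(0)))
```

Each call extends the context by between one and k + 1 tokens, which is where the speed-up of this kind of pipeline comes from when the draft model is much cheaper than the target.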