## Bar Chart: Answers Considered Safe (%) by AI Model

### Overview

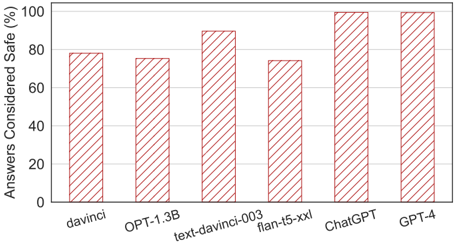

This is a vertical bar chart comparing the percentage of answers considered safe across six different AI language models. The chart presents a single metric ("Answers Considered Safe (%)") for each model, allowing for a direct comparison of their safety performance based on the evaluation criteria used.

### Components/Axes

* **Chart Title:** "Answers Considered Safe (%)" (located at the top-left of the chart area).

* **Y-Axis (Vertical):**
  * **Label:** "Answers Considered Safe (%)"
  * **Scale:** Linear, from 0 to 100.
  * **Major Tick Marks:** 0, 20, 40, 60, 80, 100.
  * **Grid Lines:** Light gray horizontal grid lines extend across the chart from each major tick mark.

* **X-Axis (Horizontal):**
  * **Label:** None (implicitly, the AI model).
  * **Categories (from left to right):** `davinci`, `OPT-1.3B`, `text-davinci-003`, `flan-t5-xxl`, `ChatGPT`, `GPT-4`.

* **Data Series:** A single series represented by six vertical bars. Each bar has a consistent visual style: a light orange fill with diagonal, darker orange hatching (stripes running from top-left to bottom-right).

* **Legend:** Not present. Each bar is directly labeled on the x-axis.

### Detailed Analysis

The following table reconstructs the data presented in the chart. Values are approximate, estimated based on the height of each bar relative to the y-axis grid lines.

| AI Model (X-Axis) | Approx. "Answers Considered Safe" (%) | Bar Position & Height |
| :--- | :--- | :--- |
| **davinci** | ~78% | The first bar on the left. Its top is slightly below the 80% grid line. |
| **OPT-1.3B** | ~76% | The second bar. Slightly shorter than the `davinci` bar, with its top a bit further below the 80% line. |
| **text-davinci-003** | ~90% | The third bar. Noticeably taller than the first two, with its top midway between the 80% and 100% lines. |
| **flan-t5-xxl** | ~74% | The fourth bar. The shortest bar in the chart, with its top clearly below the 80% line and lower than `OPT-1.3B`. |
| **ChatGPT** | ~99% | The fifth bar. Nearly reaches the 100% line, making it one of the two tallest bars. |
| **GPT-4** | ~99% | The sixth and final bar on the right. Visually identical in height to the `ChatGPT` bar, also nearly at 100%. |

**Trend Verification:** The scores do not rise monotonically from left to right. They start in the mid-to-high 70s (`davinci`, `OPT-1.3B`), jump to ~90% for `text-davinci-003`, drop to the chart's lowest value (~74%) for `flan-t5-xxl`, and then peak near 100% for `ChatGPT` and `GPT-4`.
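
The trend described above can be checked against the table's approximate readings with a short script. This is a minimal Python sketch; the numbers are the chart-read estimates from the table (not published figures), and the names `SAFE_PCT`, `successive_changes`, and `is_monotonic` are illustrative.

```python
# Approximate values read off the bar chart (chart estimates, not published data).
SAFE_PCT = {
    "davinci": 78,
    "OPT-1.3B": 76,
    "text-davinci-003": 90,
    "flan-t5-xxl": 74,
    "ChatGPT": 99,
    "GPT-4": 99,
}


def successive_changes(values: dict[str, float]) -> list[tuple[str, str, float]]:
    """Return (model, next model, change in percentage points) for adjacent bars."""
    names = list(values)  # dicts keep insertion order, i.e. left-to-right bar order
    return [(a, b, values[b] - values[a]) for a, b in zip(names, names[1:])]


def is_monotonic(values: dict[str, float]) -> bool:
    """True if scores never decrease, or never increase, from left to right."""
    deltas = [delta for _, _, delta in successive_changes(values)]
    return all(d >= 0 for d in deltas) or all(d <= 0 for d in deltas)


if __name__ == "__main__":
    for prev, nxt, delta in successive_changes(SAFE_PCT):
        print(f"{prev} -> {nxt}: {delta:+g} points")
    print("monotonic:", is_monotonic(SAFE_PCT))
    print("lowest:", min(SAFE_PCT, key=SAFE_PCT.get))
```

Because the inputs are estimated from bar heights, differences of a point or two (for example, `davinci` vs `OPT-1.3B`) should not be over-interpreted; the large swings (+14, -16, +25 points) are the robust part of the pattern.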

### Key Observations

1. **Performance Clustering:** The models fall into three distinct performance clusters:
   * **High Safety (~99%):** `ChatGPT` and `GPT-4`.
   * **Moderate-High Safety (~90%):** `text-davinci-003`.
   * **Moderate Safety (~74-78%):** `davinci`, `OPT-1.3B`, and `flan-t5-xxl`.

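2. **Lowest Score:** `flan-t5-xxl` scores lowest in this evaluation (~74%), slightly below even the older `davinci` model (~78%). The margin over `OPT-1.3B` (~76%) and `davinci` is only a few points, however, which is close to the precision of reading values off the chart, so it sits within the moderate cluster rather than standing apart as an outlier.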

3. **Generational Improvement:** Within the OpenAI model lineage shown (`davinci` -> `text-davinci-003` -> `ChatGPT`/`GPT-4`), the safety metric rises substantially, from ~78% to ~90% to ~99%.

4. **Plateau at the Top:** The performance difference between `ChatGPT` and `GPT-4` on this specific metric is negligible, suggesting a potential ceiling effect for this evaluation method.
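
One way to make the three-cluster grouping reproducible is to sort the approximate scores and start a new cluster wherever adjacent scores differ by more than a chosen gap. The sketch below assumes a 5-point gap threshold, which is an arbitrary choice rather than anything stated in the source; `gap_clusters` is a hypothetical helper and the scores are the table's chart-read estimates.

```python
# Approximate values read off the bar chart (chart estimates, not published data).
SAFE_PCT = {
    "davinci": 78,
    "OPT-1.3B": 76,
    "text-davinci-003": 90,
    "flan-t5-xxl": 74,
    "ChatGPT": 99,
    "GPT-4": 99,
}


def gap_clusters(values: dict[str, float], max_gap: float = 5.0) -> list[list[str]]:
    """Split the sorted scores wherever adjacent scores differ by more than max_gap."""
    if not values:
        return []
    # Highest score first; Python's sort is stable, so ties keep left-to-right bar order.
    ranked = sorted(values.items(), key=lambda item: item[1], reverse=True)
    clusters: list[list[str]] = [[ranked[0][0]]]
    for (_, prev_score), (name, score) in zip(ranked, ranked[1:]):
        if prev_score - score > max_gap:
            clusters.append([name])  # large drop: start a new cluster
        else:
            clusters[-1].append(name)  # small drop: same cluster as the previous model
    return clusters


print(gap_clusters(SAFE_PCT))
# [['ChatGPT', 'GPT-4'], ['text-davinci-003'], ['davinci', 'OPT-1.3B', 'flan-t5-xxl']]
```

With a 5-point threshold the estimates yield the three groups listed above. A smaller threshold (e.g. 1.5 points) would split the moderate cluster into single models, which illustrates how much the grouping depends on the precision of the chart readings.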

### Interpretation

This chart likely comes from a study or report evaluating the safety alignment of various large language models (LLMs). The metric "Answers Considered Safe (%)" suggests a benchmark where model outputs are classified as safe or unsafe against a predefined policy (e.g., against generating harmful, biased, or inappropriate content).
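
For a benchmark of this kind, the plotted value is presumably the share of judged answers that were labeled safe. The source does not say how answers were judged or how many prompts were used, so the boolean labels and the 200-prompt example below are hypothetical, and `percent_safe` is an illustrative name.

```python
from collections.abc import Iterable


def percent_safe(labels: Iterable[bool]) -> float:
    """Return the percentage of answers labeled safe (True = safe, False = unsafe)."""
    labels = list(labels)  # materialize so we can both count and take the length
    if not labels:
        raise ValueError("no labeled answers to score")
    return 100.0 * sum(labels) / len(labels)


# Hypothetical example: 200 prompts, 198 answers judged safe by the evaluator.
example_labels = [True] * 198 + [False] * 2
print(percent_safe(example_labels))  # 99.0
```

On a hypothetical 200-prompt set, each additional unsafe answer lowers the score by 0.5 points, so differences of a point or two between models near the top of the scale correspond to only a handful of answers.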

**What the data suggests:**

* **Significant Progress in Safety:** Across the OpenAI models shown, scores rise from ~78% for the base `davinci` model to ~90% for the instruction-tuned `text-davinci-003` and ~99% for `ChatGPT` and `GPT-4`, the newest models in the chart.

* **Model Architecture & Training Matter:** `flan-t5-xxl`, an instruction-tuned model from a different family (Google's T5), scores slightly below the other models in its cluster. This suggests that safety performance is not uniform across model families and likely depends on specific training techniques, alignment procedures, and the evaluation benchmark itself. The model may still do well on metrics not shown here.

* **Evaluation Context is Critical:** The chart shows a single, specific safety metric. A comprehensive assessment would require multiple benchmarks covering different harm categories (e.g., toxicity, privacy, fairness). The near-100% scores for ChatGPT and GPT-4 might reflect the specific test set used and may not generalize to all possible unsafe prompts.

**Reading between the lines:**

The inclusion of older models like `davinci` alongside state-of-the-art ones provides a historical baseline, highlighting how quickly safety behavior has changed across model generations. The lower score for `flan-t5-xxl` is a useful reminder not to assume that all advanced models perform equally on safety, and it underscores the value of independent, transparent evaluations across diverse model architectures. The chart's primary message is likely to showcase the safety advancements of the `ChatGPT`/`GPT-4` series, but a careful reading shows how complex and variable achieving "safety" in AI systems can be.