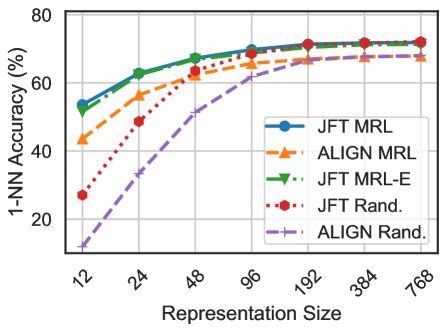

## Line Chart: 1-NN Accuracy vs. Representation Size for Different Pre-training Methods

### Overview

The image is a line chart comparing five series, each pairing a dataset (JFT or ALIGN) with a method label (MRL, MRL-E, or Rand.). It plots 1-Nearest Neighbor (1-NN) classification accuracy (as a percentage) against representation size. Accuracy rises with representation size for every series before leveling off, and the chart shows where the gaps between series widen and narrow.
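To make the plotted quantity concrete, here is a minimal sketch of how 1-NN accuracy could be measured at several representation sizes. It assumes that a smaller representation is obtained by keeping the first `m` coordinates of a full embedding and that neighbors are ranked by cosine similarity; the chart itself specifies neither, and the function names and toy vectors are hypothetical.

```python
import math

def truncate_and_normalize(vec, m):
    """Keep the first m coordinates (an assumption) and scale to unit length."""
    head = vec[:m]
    norm = math.sqrt(sum(x * x for x in head)) or 1.0
    return [x / norm for x in head]

def one_nn_accuracy(db_vecs, db_labels, query_vecs, query_labels, m):
    """Fraction of queries whose nearest database item shares their label."""
    db = [truncate_and_normalize(v, m) for v in db_vecs]
    correct = 0
    for q_vec, q_label in zip(query_vecs, query_labels):
        q = truncate_and_normalize(q_vec, m)
        # Cosine similarity reduces to a dot product on unit vectors.
        sims = [sum(a * b for a, b in zip(q, d)) for d in db]
        nearest = max(range(len(db)), key=sims.__getitem__)
        correct += db_labels[nearest] == q_label
    return correct / len(query_vecs)

# Toy 4-dimensional embeddings. The first two coordinates do not separate
# the classes, so accuracy rises once all four coordinates are kept.
db_vecs = [[1.0, 0.0, 1.0, 0.0], [1.0, 0.0, -1.0, 0.0]]
db_labels = ["cat", "dog"]
query_vecs = [[0.9, 0.1, 0.8, 0.0], [0.9, 0.1, -0.8, 0.0]]
query_labels = ["cat", "dog"]

for m in (2, 4):
    acc = one_nn_accuracy(db_vecs, db_labels, query_vecs, query_labels, m)
    print(f"size {m}: 1-NN accuracy = {100 * acc:.0f}%")
```

Sweeping `m` over 12, 24, ..., 768 on real embeddings would yield one curve of the kind shown in the chart.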

### Components/Axes

* **Chart Type:** Multi-series line chart with markers.

* **Y-Axis (Vertical):**

    * **Label:** `1-NN Accuracy (%)`

    * **Scale:** Linear, ranging from 0 to 80.

    * **Major Ticks:** 0, 20, 40, 60, 80.

* **X-Axis (Horizontal):**

    * **Label:** `Representation Size`

    * **Scale:** Logarithmic (base 2), with discrete, labeled points.

    * **Data Points (Markers):** 12, 24, 48, 96, 192, 384, 768.

* **Legend:** Located in the bottom-right quadrant of the chart area. It contains five entries, each with a unique color, line style, and marker symbol.

    1. **JFT MRL:** Blue solid line with circle markers (●).

    2. **ALIGN MRL:** Orange dashed line with upward-pointing triangle markers (▲).

    3. **JFT MRL-E:** Green dash-dot line with downward-pointing triangle markers (▼).

    4. **JFT Rand.:** Red dotted line with circle markers (●).

    5. **ALIGN Rand.:** Purple dashed line with plus sign markers (+).

### Detailed Analysis

**Trend Verification & Data Point Extraction:**

In all five series, accuracy never decreases as representation size grows, and the gain from each doubling shrinks as size increases. The curves flatten even on the logarithmic x-axis, so the growth is saturating rather than logarithmic.

1. **JFT MRL (Blue, ●):**

    * **Trend:** Starts near the top (about 2 points below JFT MRL-E at size 12), increases steadily, and plateaus at the top from size 192.

    * **Approximate Values:** Size 12: ~53%, Size 24: ~63%, Size 48: ~68%, Size 96: ~70%, Size 192: ~72%, Size 384: ~72%, Size 768: ~72%.

2. **ALIGN MRL (Orange, ▲):**

    * **Trend:** Follows a similar curve to JFT MRL but consistently below it. Plateaus slightly lower.

    * **Approximate Values:** Size 12: ~43%, Size 24: ~56%, Size 48: ~63%, Size 96: ~68%, Size 192: ~69%, Size 384: ~69%, Size 768: ~69%.

3. **JFT MRL-E (Green, ▼):**

    * **Trend:** Nearly identical to JFT MRL, overlapping closely throughout. Ends at the same peak.

    * **Approximate Values:** Size 12: ~55%, Size 24: ~64%, Size 48: ~68%, Size 96: ~70%, Size 192: ~72%, Size 384: ~72%, Size 768: ~72%.

4. **JFT Rand. (Red, ●):**

    * **Trend:** Starts much lower than the MRL methods but improves very steeply, drawing level with the ALIGN MRL line at sizes 48 and 96 and finishing 1 to 2 points above it from size 192 onward.

    * **Approximate Values:** Size 12: ~27%, Size 24: ~49%, Size 48: ~63%, Size 96: ~68%, Size 192: ~70%, Size 384: ~71%, Size 768: ~71%.

5. **ALIGN Rand. (Purple, +):**

    * **Trend:** Starts the lowest of all methods. Shows steady, significant improvement but remains the lowest-performing series at every data point.

    * **Approximate Values:** Size 12: ~10%, Size 24: ~32%, Size 48: ~51%, Size 96: ~62%, Size 192: ~66%, Size 384: ~67%, Size 768: ~67%.
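The approximate values above can be collected into a small data structure and re-plotted. The following is a sketch only: the numbers are the visual estimates listed in this section, not exact results, and the styling (colors, line styles, markers) and the `plot_chart` helper name are assumptions based on the legend description. The plotting helper imports matplotlib lazily so the data can be used without it.

```python
import math

SIZES = [12, 24, 48, 96, 192, 384, 768]

# Approximate 1-NN accuracy (%) per series, read off the chart by eye.
SERIES = {
    "JFT MRL":     [53, 63, 68, 70, 72, 72, 72],
    "ALIGN MRL":   [43, 56, 63, 68, 69, 69, 69],
    "JFT MRL-E":   [55, 64, 68, 70, 72, 72, 72],
    "JFT Rand.":   [27, 49, 63, 68, 70, 71, 71],
    "ALIGN Rand.": [10, 32, 51, 62, 66, 67, 67],
}

# (color, line style, marker) per series, following the legend description.
STYLES = {
    "JFT MRL":     ("tab:blue", "-", "o"),
    "ALIGN MRL":   ("tab:orange", "--", "^"),
    "JFT MRL-E":   ("tab:green", "-.", "v"),
    "JFT Rand.":   ("tab:red", ":", "o"),
    "ALIGN Rand.": ("tab:purple", "--", "+"),
}

def log2_positions(sizes):
    """Positions of the sizes on a base-2 logarithmic axis."""
    return [math.log2(s) for s in sizes]

def plot_chart(path="one_nn_accuracy.png"):
    """Redraw the chart; requires matplotlib (imported only when called)."""
    import matplotlib
    matplotlib.use("Agg")  # render to a file, no display needed
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots()
    for name, values in SERIES.items():
        color, linestyle, marker = STYLES[name]
        ax.plot(SIZES, values, color=color, linestyle=linestyle,
                marker=marker, label=name)
    ax.set_xscale("log", base=2)  # `base` keyword as in matplotlib 3.3+
    ax.set_xticks(SIZES)
    ax.set_xticklabels([str(s) for s in SIZES])
    ax.minorticks_off()
    ax.set_ylim(0, 80)
    ax.set_yticks([0, 20, 40, 60, 80])
    ax.set_xlabel("Representation Size")
    ax.set_ylabel("1-NN Accuracy (%)")
    ax.legend(loc="lower right")
    fig.savefig(path)
    plt.close(fig)
    return path

# Each size doubles the previous one, so the points are evenly spaced
# on the base-2 logarithmic axis.
positions = log2_positions(SIZES)
steps = [b - a for a, b in zip(positions, positions[1:])]
print([round(s, 6) for s in steps])
```

Calling `plot_chart()` writes a PNG that should resemble the original figure, up to the reading error in the transcribed values.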

### Key Observations

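1. **Performance Hierarchy:** JFT MRL ≈ JFT MRL-E lead at every representation size, and ALIGN Rand. trails at every size. The middle of the ordering is not fixed: ALIGN MRL is ahead of JFT Rand. at sizes 12 and 24, the two are tied at sizes 48 and 96 (~63% and ~68%), and JFT Rand. is ahead by 1 to 2 points from size 192 onward.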

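2. **Pre-training vs. Random:** Taking the MRL variants as the pre-trained methods and Rand. as their randomly initialized counterparts (the legend does not expand either abbreviation), the MRL variants far outperform the Rand. series on the same dataset at small representation sizes. At size 12 the gap is about 26 points for JFT (~53% vs. ~27%) and about 33 points for ALIGN (~43% vs. ~10%). The gap narrows to 1 to 2 points by size 768 but does not close.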

3. **Diminishing Returns:** All curves show strong diminishing returns. The most substantial accuracy gains occur between sizes 12 and 96 (for example, about +17 points for JFT MRL and about +52 points for ALIGN Rand.). Beyond size 192, no series gains more than about 1 percentage point.

4. **Convergence of Random Initialization:** The `JFT Rand.` method shows remarkable catch-up: it matches `ALIGN MRL` at sizes 48 and 96 and edges ahead by 1 to 2 points from size 192 onward. This suggests that, with a sufficiently large representation, random initialization on the JFT dataset can match or slightly exceed the performance of pre-training on ALIGN.

5. **Dataset Impact:** For both MRL and Rand. methods, models associated with the "JFT" dataset consistently outperform those associated with the "ALIGN" dataset at equivalent sizes and training regimes.
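The approximate values from the Detailed Analysis make it possible to check these observations numerically. The sketch below recomputes the per-size ranking, the gain from each doubling of representation size, and the point gap between pairs of series. The inputs are visual estimates, so differences of a point or two are within reading error, and the helper names are illustrative.

```python
SIZES = [12, 24, 48, 96, 192, 384, 768]

# Approximate 1-NN accuracy (%) per series, as listed in the Detailed Analysis.
ACC = {
    "JFT MRL":     [53, 63, 68, 70, 72, 72, 72],
    "ALIGN MRL":   [43, 56, 63, 68, 69, 69, 69],
    "JFT MRL-E":   [55, 64, 68, 70, 72, 72, 72],
    "JFT Rand.":   [27, 49, 63, 68, 70, 71, 71],
    "ALIGN Rand.": [10, 32, 51, 62, 66, 67, 67],
}

def ranking(size):
    """Series names ordered from highest to lowest accuracy at one size.

    Ties keep the order in which the series appear in ACC.
    """
    i = SIZES.index(size)
    return sorted(ACC, key=lambda name: -ACC[name][i])

def doubling_gains(name):
    """Accuracy gained at each doubling of representation size."""
    values = ACC[name]
    return [b - a for a, b in zip(values, values[1:])]

def gap(first, second):
    """Per-size accuracy difference (first minus second), in points."""
    return [x - y for x, y in zip(ACC[first], ACC[second])]

for size in SIZES:
    print(f"size {size:>3}: " + ", ".join(ranking(size)))

for name in ACC:
    print(f"{name:<12} gains per doubling: {doubling_gains(name)}")

print("JFT MRL   - JFT Rand.  :", gap("JFT MRL", "JFT Rand."))
print("ALIGN MRL - ALIGN Rand.:", gap("ALIGN MRL", "ALIGN Rand."))
print("JFT Rand. - ALIGN MRL  :", gap("JFT Rand.", "ALIGN MRL"))
```

A sign change in the last line marks the size at which `JFT Rand.` stops trailing `ALIGN MRL`.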

### Interpretation

This chart shows how representation size and training methodology (pre-training dataset and technique) interact to determine performance on a 1-NN evaluation task.

* **The Value of Pre-training:** The data strongly suggests that pre-training (MRL methods) provides a crucial "head start," especially for smaller models. This is evident in the large accuracy gap at size 12. Pre-training likely instills useful, generalizable features that are immediately effective.

* **Scale Can Compensate for Methodology:** The steep ascent of the `JFT Rand.` line shows that a larger representation can, to a significant degree, compensate for the lack of sophisticated pre-training. However, it stays about 1 point below the pre-trained JFT models even at size 768, indicating an enduring advantage to the pre-training process itself.

* **Dataset Quality/Scale Matters:** The consistent superiority of JFT-based models over ALIGN-based models, regardless of training method, implies that the JFT dataset may be larger, more diverse, or more relevant to the evaluation task than the ALIGN dataset.

* **Practical Implication:** For resource-constrained applications that need small representations, investing in pre-training is critical. At large representation sizes, the choice of pre-training dataset (JFT vs. ALIGN, a gap of about 3 points between the MRL series) remains a more significant performance factor than the specific MRL technique (JFT MRL and JFT MRL-E overlap). The plateau after size 192 suggests a practical upper bound for this specific task and evaluation metric, beyond which further scaling is inefficient.
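One way to turn the plateau into a concrete choice is to pick the smallest representation size whose accuracy is within a chosen tolerance of the best value. The sketch below applies that rule to the approximate JFT MRL values from the Detailed Analysis. The helper name and the tolerance values are illustrative assumptions, not something the chart prescribes.

```python
def smallest_size_within(sizes, accuracies, tolerance):
    """Smallest size whose accuracy is within `tolerance` points of the best."""
    best = max(accuracies)
    for size, acc in zip(sizes, accuracies):
        if best - acc <= tolerance:
            return size
    raise ValueError("sizes and accuracies must be non-empty and aligned")

sizes = [12, 24, 48, 96, 192, 384, 768]
jft_mrl = [53, 63, 68, 70, 72, 72, 72]  # approximate values read off the chart

for tolerance in (0, 2, 5):
    chosen = smallest_size_within(sizes, jft_mrl, tolerance)
    print(f"tolerance {tolerance} points: size {chosen}")
```

With these estimates, accepting a loss of a few points allows a representation several times smaller than the size at which the curve peaks.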