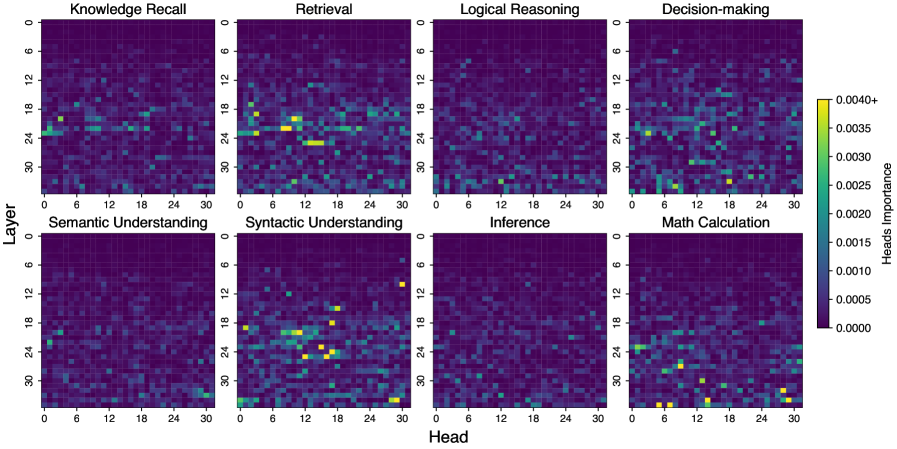

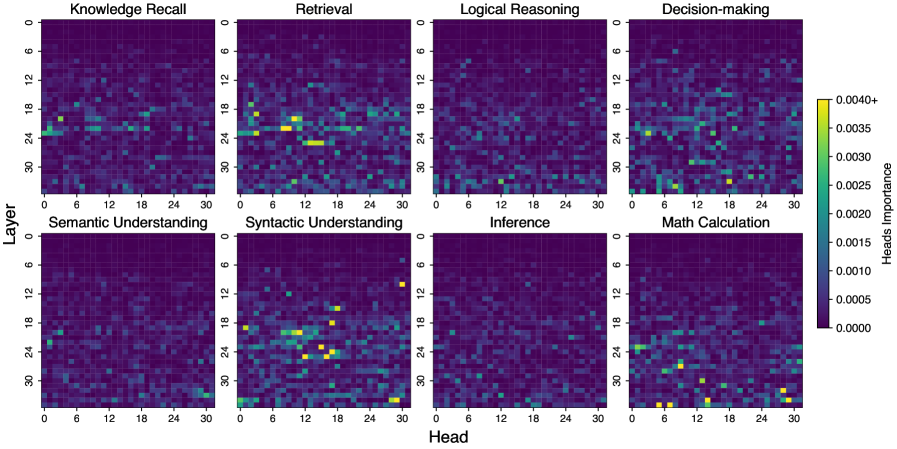

## Heatmap Grid: Head Importance Across Cognitive Tasks

### Overview

The image displays a grid of eight heatmaps arranged in a 2x4 layout. Each heatmap visualizes the "Heads Importance" (likely attention head importance scores) across different layers and heads of a neural network model for a specific cognitive task. The overall purpose is to show which attention heads in which layers are most important for different types of reasoning and understanding tasks.

### Components/Axes

* **Grid Structure:** 8 individual heatmaps in 2 rows and 4 columns.

* **Task Titles (Top of each heatmap):**

    * Top Row (Left to Right): `Knowledge Recall`, `Retrieval`, `Logical Reasoning`, `Decision-making`

    * Bottom Row (Left to Right): `Semantic Understanding`, `Syntactic Understanding`, `Inference`, `Math Calculation`

* **Axes (Common to all heatmaps):**

    * **Y-axis (Vertical):** Labeled `Layer`. Scale runs from 0 at the top to 30 at the bottom, with major ticks at 0, 6, 12, 18, 24, 30.

    * **X-axis (Horizontal):** Labeled `Head`. Scale runs from 0 on the left to 30 on the right, with major ticks at 0, 6, 12, 18, 24, 30.

* **Color Bar (Right side of the grid):**

    * **Label:** `Heads Importance`

    * **Scale:** A vertical gradient from dark purple (bottom) to bright yellow (top).

    * **Tick Values (Approximate):** 0.0000, 0.0005, 0.0010, 0.0015, 0.0020, 0.0025, 0.0030, 0.0035, 0.0040+.

    * **Interpretation:** Darker colors (purple/blue) indicate low importance. Brighter colors (green/yellow) indicate higher importance. The highest value is marked as `0.0040+`.
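The `0.0040+` tick suggests that every score above the top tick shares the brightest color. A minimal sketch of such a mapping follows, assuming a linear scale that saturates at 0.0040. The figure does not state how its color scale was built, and `color_position` is a hypothetical helper introduced here for illustration only.

```python
def color_position(score, vmin=0.0, vmax=0.0040):
    """Map an importance score to a position in [0, 1] on the color bar.

    Assumption: the scale is linear and values beyond vmax saturate,
    which is one plausible reading of the "0.0040+" tick label.
    """
    clipped = min(max(score, vmin), vmax)
    return (clipped - vmin) / (vmax - vmin)


# 0.0 maps to the dark-purple end; 0.0040 and anything above it map to bright yellow.
positions = [color_position(s) for s in (0.0, 0.0020, 0.0040, 0.0100)]
```

Under this reading, two heads with scores of 0.0040 and 0.0100 would be indistinguishable in the figure, so the brightest cells give only a lower bound on importance.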

### Detailed Analysis

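Each heatmap is a square grid with one cell per (layer, head) pair. The tick labels run from 0 to 30 on both axes, which implies at least 31 layers and 31 heads; the exact grid size cannot be confirmed from the tick labels alone. The color of each cell represents the importance score for that specific head in that specific layer for the given task.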
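Locations such as "Layer 24, Head 6" in the descriptions that follow are read off the image by eye. If the underlying scores were available, the same lookup could be sketched as below, assuming each panel is stored as a nested list indexed `scores[layer][head]`. The helper `top_heads` and the toy values are hypothetical and are not taken from the figure.

```python
def top_heads(scores, k=3):
    """Return the k (layer, head, score) triples with the highest score."""
    cells = [
        (layer, head, value)
        for layer, row in enumerate(scores)
        for head, value in enumerate(row)
    ]
    return sorted(cells, key=lambda cell: cell[2], reverse=True)[:k]


# Hypothetical 4x4 toy panel; values are illustrative, not read from the figure.
toy = [
    [0.0001, 0.0002, 0.0001, 0.0000],
    [0.0003, 0.0036, 0.0002, 0.0001],
    [0.0002, 0.0001, 0.0029, 0.0004],
    [0.0000, 0.0002, 0.0001, 0.0011],
]
best = top_heads(toy, k=2)  # the two brightest cells as (layer, head, score)
```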

**1. Knowledge Recall:**

* **Trend:** Importance is relatively diffuse and low across most of the grid. There is a faint, scattered pattern of slightly higher importance (teal/green) in the middle layers (approx. layers 12-24) across various heads. No single head or layer shows very high (yellow) importance.

**2. Retrieval:**

* **Trend:** Shows more distinct clusters of high importance compared to Knowledge Recall.

* **Key Data Points:** Several bright yellow/green spots are visible, indicating high importance.

    * A notable cluster exists around **Layer 18-24, Head 6-12**.

    * Another cluster appears around **Layer 24-27, Head 12-18**.

    * Scattered high-importance points are also present in earlier layers (e.g., near Layer 6, Head 18).

**3. Logical Reasoning:**

* **Trend:** Very sparse and low importance overall. The heatmap is predominantly dark purple/blue. A few isolated, faintly brighter (teal) points are scattered, but no strong clusters or high-importance (yellow) heads are evident.

**4. Decision-making:**

* **Trend:** Similar sparsity to Logical Reasoning, but with a few more noticeable points of medium importance.

* **Key Data Points:** Isolated brighter spots (green) appear, for example, near **Layer 24, Head 6** and **Layer 27, Head 18**. No strong yellow clusters.

**5. Semantic Understanding:**

* **Trend:** Very low and diffuse importance, closely resembling the pattern of Logical Reasoning. The grid is almost uniformly dark with minimal variation.

**6. Syntactic Understanding:**

* **Trend:** This heatmap shows the most pronounced and structured pattern of high importance.

* **Key Data Points:** A clear, dense cluster of high-importance (yellow) heads is located in the **lower half of the plot**, which corresponds to the higher layer indices.

    * The core of the cluster spans approximately **Layers 18-27** and **Heads 6-18**.

    * The brightest yellow points (importance >0.0035) are concentrated within this region.

    * A few high-importance points also appear at the last labeled layer (Layer 30), at the bottom edge of the plot.

**7. Inference:**

* **Trend:** Extremely sparse and low importance. This is the darkest heatmap, with almost no visible variation from the baseline dark purple. It suggests very few heads are specifically important for this task as measured.

**8. Math Calculation:**

* **Trend:** Shows a scattered, non-clustered pattern of medium to high importance.

* **Key Data Points:** High-importance (yellow/green) points are distributed across various layers and heads without forming a single dense cluster.

    * Notable points include: **Layer 24, Head 0-3**; **Layer 27, Head 12**; **Layer 30, Head 6 and 24**.

    * The distribution appears more dispersed than the focused cluster in Syntactic Understanding.

### Key Observations

1. **Task-Specific Specialization:** The importance of attention heads is highly task-dependent. Patterns vary dramatically between tasks like Syntactic Understanding (dense cluster) and Inference (almost no signal).

2. **Layer Preference:** For tasks showing clear patterns (Retrieval, Syntactic Understanding, Math Calculation), important heads tend to sit in the **middle to deeper layers** (approx. layer indices 12-30, plotted in the lower half of each panel). The earliest layers (0-6, at the top of each panel) show very low importance across all tasks.

3. **Cluster vs. Scatter:** Syntactic Understanding and Retrieval show localized clusters of important heads. Math Calculation shows a more scattered distribution. Knowledge Recall, Logical Reasoning, Decision-making, Semantic Understanding, and Inference show diffuse or negligible patterns.

4. **Relative Importance:** The highest absolute importance scores (brightest yellow) are observed in the **Syntactic Understanding** and **Retrieval** heatmaps.
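The observations above are qualitative. Given the raw scores, two simple summaries could make them checkable: the mean importance per layer (for layer preference) and the share of total importance carried by the top `k` heads (for concentrated versus diffuse panels). The sketch below assumes the same `scores[layer][head]` nested-list layout; both helpers and the toy panels are hypothetical, and the values are in arbitrary units rather than real importance scores.

```python
def layer_profile(scores):
    """Mean importance per layer (one value per row of the matrix)."""
    return [sum(row) / len(row) for row in scores]


def top_k_share(scores, k):
    """Fraction of total importance carried by the k highest-scoring heads."""
    flat = sorted((v for row in scores for v in row), reverse=True)
    total = sum(flat)
    return sum(flat[:k]) / total if total > 0 else 0.0


# Hypothetical toy panels in arbitrary units, not values from the figure.
clustered = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 4.0, 4.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
diffuse = [[0.5] * 4 for _ in range(4)]

profile = layer_profile(clustered)             # peaks at layer index 2
share_clustered = top_k_share(clustered, k=2)  # all importance in two heads
share_diffuse = top_k_share(diffuse, k=2)      # importance spread evenly
```

Note that the top-`k` share measures how concentrated importance is in a few heads, not whether those heads are adjacent. Separating a spatial cluster (as in Syntactic Understanding) from scattered bright points (as in Math Calculation) would need an additional spatial measure.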

### Interpretation

This visualization provides a map of the model's internal "division of labor." It suggests that different cognitive tasks rely on distinct subsets of the model's attention heads, localized in specific layers.

* **Syntactic Understanding** appears to be a highly specialized function, relying on a concentrated group of heads in roughly layers 18-27 (the lower-middle part of the plot). This is consistent with linguistic accounts in which syntax is a foundational, rule-based processing stage.

* **Retrieval** also shows specialization, but the cluster is in a slightly different location, indicating a separate but possibly related circuit for accessing stored information.

* The **scattered pattern for Math Calculation** might indicate that mathematical reasoning is a more distributed process, engaging various capabilities across the network rather than a single dedicated "math module."

* The **near-absence of signal for Inference and Semantic Understanding** is the most notable anomaly. This could mean: a) these tasks are so complex they don't rely on specific heads but on diffuse, global interactions not captured by this metric; b) the importance metric used is not sensitive to the type of processing these tasks require; or c) these capabilities are not well-developed or are represented differently in this model.

* The **concentration of importance in the middle and deeper layers** (approx. indices 12-30) across active tasks suggests these layers handle more task-specific processing, such as syntax and retrieval. The earliest layers (0-6, drawn at the top of each panel) show little task-specific signal; assuming layer 0 is the layer closest to the input, they may perform more generic processing that is shared across tasks.

In summary, the image reveals a model with clear, task-dependent specialization in its attention heads, particularly for syntactic and retrieval tasks, while highlighting potential gaps or different representational strategies for higher-order reasoning like inference and deep semantic understanding.
