## Diagram: Neural Network Architecture Comparison (AnalogNAS T500 vs. ResNet32)

### Overview

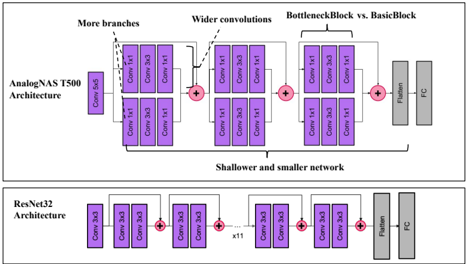

The image is a technical diagram comparing the architectural structures of two convolutional neural networks: the **AnalogNAS T500 Architecture** (top) and the **ResNet32 Architecture** (bottom). The diagram uses a block-based schematic to illustrate the sequence and connectivity of layers, highlighting key design differences between the two models.

### Components and Flow

The diagram is divided into two horizontal panels, each representing one architecture. The flow in both is from left (input) to right (output).

**1. AnalogNAS T500 Architecture (Top Panel):**

* **Input:** A single purple block labeled "Conv 3x3".

* **Main Body:** Consists of three sequential stages, each containing multiple convolutional blocks.

    * **Stage 1:** Contains two parallel branches. Each branch has two purple blocks labeled "Conv 3x3". An annotation arrow points to this section with the text **"More branches"**.

    * **Stage 2:** Contains two parallel branches. Each branch has two purple blocks labeled "Conv 3x3". An annotation arrow points to this section with the text **"Wider convolutions"**.

    * **Stage 3:** Contains two parallel branches. Each branch has two purple blocks labeled "Conv 3x3". An annotation arrow points to this section with the text **"BottleneckBlock vs. BasicBlock"**.

* **Connections:** The output of each stage is combined at a red circle with a plus sign, which denotes element-wise addition (the merge point of a skip connection), before feeding into the next stage.

* **Output Head:** After the final stage, the flow goes to a gray block labeled **"Flatten"**, followed by a final gray block labeled **"FC"** (Fully Connected layer).

* **Overall Annotation:** A bracket underneath the three stages is labeled **"Shallower and smaller network"**.

**2. ResNet32 Architecture (Bottom Panel):**

* **Input:** A single purple block labeled "Conv 3x3".

* **Main Body:** Consists of a sequence of blocks with residual connections.

    * The first block after input contains two purple "Conv 3x3" blocks. Its output is added (via a red plus circle) to the original input (a skip connection).

    * This pattern repeats. A label **"x15"** is placed below the connection line between the second and third visible block groups, indicating this basic residual block structure is repeated 15 times.

    * The final visible block group also contains two purple "Conv 3x3" blocks with a residual addition.

* **Output Head:** Similar to the top architecture, the flow ends with a gray **"Flatten"** block and a gray **"FC"** block.

**Spatial Grounding & Element Identification:**

* **Legend/Color Key:** Implicit. Purple blocks represent convolutional layers ("Conv 3x3"). Gray blocks represent the final processing layers ("Flatten", "FC"). Red circles with "+" represent element-wise addition nodes.

* **Positioning:** The architecture names are on the far left of each panel. The descriptive annotations ("More branches", etc.) are placed above the specific stages they describe in the AnalogNAS T500 panel. The "x15" repetition label is centered below the main body of the ResNet32 diagram.

### Detailed Analysis

**AnalogNAS T500 Layer Sequence (Approximate):**

1. Initial Conv 3x3.

2. Stage 1: Two parallel branches, each with [Conv 3x3 -> Conv 3x3]. Outputs are summed.

3. Stage 2: Two parallel branches, each with [Conv 3x3 -> Conv 3x3]. Outputs are summed.

4. Stage 3: Two parallel branches, each with [Conv 3x3 -> Conv 3x3]. Outputs are summed.

5. Flatten -> FC.
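The sequence above can be written down as a small data structure, which makes it easy to count what the schematic actually draws. This is a minimal sketch that transcribes the diagram only; the names `make_stage`, `ANALOGNAS_T500_SCHEMATIC`, `count_convs`, and `convs_on_one_path` are hypothetical, and the real model's layer counts and channel widths are not given in the image.

```python
# Transcription of the AnalogNAS T500 panel as drawn in the schematic.
# This mirrors the diagram only; it is NOT the real model definition.
CONV = "Conv 3x3"


def make_stage(annotation, num_branches=2, convs_per_branch=2):
    """One stage: parallel branches whose outputs are summed at a '+' node."""
    return {
        "annotation": annotation,
        "branches": [[CONV] * convs_per_branch for _ in range(num_branches)],
        "merge": "add",
    }


ANALOGNAS_T500_SCHEMATIC = {
    "stem": [CONV],
    "stages": [
        make_stage("More branches"),
        make_stage("Wider convolutions"),
        make_stage("BottleneckBlock vs. BasicBlock"),
    ],
    "head": ["Flatten", "FC"],
}


def count_convs(net):
    """Total number of drawn conv blocks, summed over all branches."""
    in_stages = sum(len(b) for s in net["stages"] for b in s["branches"])
    return len(net["stem"]) + in_stages


def convs_on_one_path(net):
    """Conv blocks crossed on one input-to-output path (longest branch per stage)."""
    per_stage = (max(len(b) for b in s["branches"]) for s in net["stages"])
    return len(net["stem"]) + sum(per_stage)


print(count_convs(ANALOGNAS_T500_SCHEMATIC))        # 13 conv blocks drawn
print(convs_on_one_path(ANALOGNAS_T500_SCHEMATIC))  # 7 conv blocks on any one path
```

Separating the total count from the per-path count reflects the point of the diagram: parallel branches add convolutions without adding sequential depth.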

**ResNet32 Layer Sequence (Approximate):**

1. Initial Conv 3x3.

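2. A basic residual block (two Conv 3x3 layers with a skip connection) is repeated 15 times in sequence, per the "x15" label. That gives 2 × 15 = 30 block convolutions, which together with the initial convolution and the FC layer account for the 32 weighted layers in the model's name.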

3. Flatten -> FC.
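The "x15" label can be checked against the "32" in the model's name with a little arithmetic. The sketch below assumes the usual CIFAR-style ResNet convention (depth = 6n + 2, with three stages of n basic blocks each); the three-stage split is not visible in the diagram, and the function names are hypothetical.

```python
def weighted_layers(num_blocks, convs_per_block=2):
    """Initial conv + convs inside the residual blocks + final FC layer."""
    return 1 + num_blocks * convs_per_block + 1


def blocks_for_depth(depth):
    """Number of basic blocks in a CIFAR-style ResNet of the given depth.

    Assumes depth = 6n + 2 with three stages of n blocks each
    (a convention not shown in the diagram).
    """
    if (depth - 2) % 6 != 0:
        raise ValueError("depth must have the form 6n + 2")
    n = (depth - 2) // 6
    return 3 * n


print(weighted_layers(15))   # 32
print(blocks_for_depth(32))  # 15
```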

**Key Structural Differences Highlighted by Annotations:**

* **Branching:** AnalogNAS uses parallel branches within stages ("More branches"), while ResNet32 uses a single path with skip connections.

* **Width:** AnalogNAS is noted to have "Wider convolutions," though the block labels ("Conv 3x3") do not specify channel width.

* **Block Type:** The "BottleneckBlock vs. BasicBlock" annotation on the AnalogNAS panel indicates that the two models differ in internal block design, though the diagram draws the blocks of both identically (two "Conv 3x3" blocks).

* **Depth/Size:** The overall label states AnalogNAS is a "Shallower and smaller network" compared to ResNet32, which is implied to be deeper due to the "x15" repetition.
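To make the "BottleneckBlock vs. BasicBlock" annotation concrete, the sketch below compares convolution weight counts for the two block types as they are defined in standard ResNets: a basic block stacks two 3x3 convolutions, while a bottleneck block uses 1x1 reduce, 3x3, then 1x1 expand. These definitions, the reduction factor of 4, and the channel count of 64 are assumptions for illustration; the diagram gives none of these numbers, and the actual AnalogNAS blocks may differ.

```python
def conv_weights(kernel, c_in, c_out):
    """Weights in a kernel x kernel convolution (bias and batch norm ignored)."""
    return kernel * kernel * c_in * c_out


def basic_block_weights(channels):
    """Standard ResNet BasicBlock: two 3x3 convs at constant width."""
    return 2 * conv_weights(3, channels, channels)


def bottleneck_block_weights(channels, reduction=4):
    """Standard ResNet bottleneck: 1x1 reduce -> 3x3 -> 1x1 expand."""
    mid = channels // reduction
    return (
        conv_weights(1, channels, mid)
        + conv_weights(3, mid, mid)
        + conv_weights(1, mid, channels)
    )


# Hypothetical width of 64 channels, chosen only for illustration.
print(basic_block_weights(64))       # 73728
print(bottleneck_block_weights(64))  # 4352
```

At equal input and output width, the bottleneck form needs far fewer weights, which is one way a block-type change could contribute to a "smaller network".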

### Key Observations

1. **Visual Similarity vs. Annotated Difference:** The graphical representation of the convolutional blocks is identical across both architectures (purple "Conv 3x3"). The critical differences are conveyed entirely through the textual annotations and the macro-structure (parallel branches vs. sequential repetition).

2. **Repetition vs. Parallelism:** ResNet32 gains its depth through sequential repetition of a simple block (x15). AnalogNAS T500 instead adds capacity through parallel branching within each of its three stages.

3. **Ambiguity in "Conv 3x3":** The label "Conv 3x3" specifies the kernel size but omits other critical parameters like the number of filters (channels), stride, and padding, which are essential for a full technical understanding.

4. **Identical Output Heads:** Both architectures conclude with an identical Flatten -> FC sequence, indicating the comparison is focused solely on the feature extraction (convolutional) backbone.
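The ambiguity noted in observation 3 is easy to quantify: two blocks that both read "Conv 3x3" can differ widely in parameter count and output size once channels, stride, and padding are fixed. The following is a minimal sketch using the standard convolution formulas; the channel counts and the 32-pixel input size are hypothetical values, not read from the diagram.

```python
def conv3x3_params(c_in, c_out, bias=True):
    """Parameters of a 3x3 convolution: 9 * c_in * c_out weights, plus optional biases."""
    return 3 * 3 * c_in * c_out + (c_out if bias else 0)


def conv_output_size(size, kernel=3, stride=1, padding=1):
    """Spatial output size along one dimension: floor((size + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


# Same "Conv 3x3" label, hypothetical channel widths:
print(conv3x3_params(16, 16))  # 2320
print(conv3x3_params(64, 64))  # 36928

# Same label, different stride, on a hypothetical 32-pixel-wide input:
print(conv_output_size(32, stride=1))  # 32
print(conv_output_size(32, stride=2))  # 16
```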

### Interpretation

This diagram is a high-level schematic intended to contrast the **design philosophy** of a neural architecture search (NAS) discovered model (AnalogNAS T500) against a standard, hand-designed residual network (ResNet32).

* **What the Data Suggests:** The annotations propose that the AnalogNAS T500 achieves its goal (presumably efficient inference on analog hardware) through a different structural strategy: using fewer, but wider and more parallelized, stages compared to the deep, sequential, and uniform structure of ResNet32. The claim of being "shallower and smaller" implies potential advantages in latency or parameter efficiency.

* **Relationship Between Elements:** The diagram positions the two architectures as direct alternatives. The annotations on the AnalogNAS side mark its points of differentiation from the ResNet32 baseline. The red addition nodes show that both architectures rely on residual learning principles but apply them in different topological patterns (across parallel branches vs. along a deep sequence).

* **Notable Anomalies/Outliers:** The most significant gap is the **lack of quantitative data**. The diagram makes qualitative claims ("wider," "shallower") without the corresponding numbers (e.g., channel counts, total layers, FLOPs, parameter count). Its primary purpose is therefore **conceptual illustration** rather than technical specification. A viewer cannot reconstruct the exact models from this image alone; the accompanying research paper is needed for the precise architectural hyperparameters. What the diagram does communicate is a *structural narrative* about efficiency through parallelism versus depth.