## Neural Network Architectures: AnalogNAS T500 vs. ResNet32

### Overview

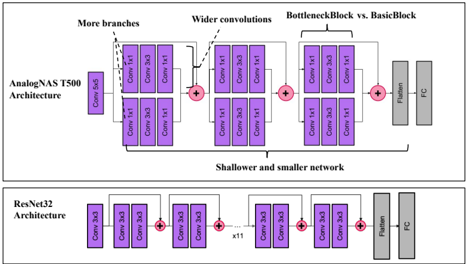

The image presents a comparison of two neural network architectures: AnalogNAS T500 and ResNet32. It illustrates the structural differences between the two, highlighting aspects such as the number of branches, convolution widths, and overall network depth.

### Components/Axes

* **AnalogNAS T500 Architecture:** Depicted in the upper portion of the image.

    * Components: Conv 5x5, Conv 1x1, Conv 3x3, Addition nodes (+), Flatten, FC (Fully Connected Layer).

    * Annotations: "More branches", "Wider convolutions", "BottleneckBlock vs. BasicBlock", "Shallower and smaller network".

* **ResNet32 Architecture:** Depicted in the lower portion of the image.

    * Components: Conv 3x3, Addition nodes (+), Flatten, FC (Fully Connected Layer).

    * Annotation: "x11" indicating a repeating block.

### Detailed Analysis

**AnalogNAS T500 Architecture:**

* Starts with a Conv 5x5 block.

* Splits into two branches, each containing Conv 1x1 and Conv 3x3 blocks. These branches are then added together.

* A section annotated "Wider convolutions" follows, consisting of Conv 1x1 and Conv 3x3 blocks and ending in an addition node.

* A section annotated "BottleneckBlock vs. BasicBlock" comes next, again containing Conv 1x1 and Conv 3x3 blocks and ending in an addition node.

* Finally, the network includes a Flatten layer and a Fully Connected (FC) layer.

**ResNet32 Architecture:**

* Begins with a Conv 3x3 block.

* Repeats a block consisting of a Conv 3x3 layer and an addition node (+) eleven times, as indicated by the "x11" label.

* Concludes with a Flatten layer and a Fully Connected (FC) layer.
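The two structures described above can be written down as plain data to make the comparison concrete. This is a minimal sketch of the diagram as described in this section, not of the real networks: the `Op` and `Residual` types and the `count_convs` helper are hypothetical names introduced here, and each addition node is assumed to close a residual unit whose skip connection is implicit.

```python
from dataclasses import dataclass


@dataclass
class Op:
    """A single box in the diagram, e.g. "Conv 3x3", "Flatten", "FC"."""
    name: str


@dataclass
class Residual:
    """Branches of ops whose outputs meet at an addition node (+).

    The identity skip connection is assumed and not listed as a branch.
    """
    branches: list  # list of lists of Op
    repeat: int = 1


def count_convs(network):
    """Count convolution boxes, expanding repeated blocks."""
    total = 0
    for node in network:
        if isinstance(node, Op):
            total += int(node.name.startswith("Conv"))
        else:
            per_block = sum(
                int(op.name.startswith("Conv"))
                for branch in node.branches
                for op in branch
            )
            total += per_block * node.repeat
    return total


# AnalogNAS T500 as described: stem, a two-branch unit, then two
# single-branch units ("Wider convolutions", "BottleneckBlock vs. BasicBlock").
analognas_t500 = [
    Op("Conv 5x5"),
    Residual(branches=[
        [Op("Conv 1x1"), Op("Conv 3x3")],
        [Op("Conv 1x1"), Op("Conv 3x3")],
    ]),
    Residual(branches=[[Op("Conv 1x1"), Op("Conv 3x3")]]),
    Residual(branches=[[Op("Conv 1x1"), Op("Conv 3x3")]]),
    Op("Flatten"),
    Op("FC"),
]

# ResNet32 as described: stem, one Conv 3x3 + addition block repeated x11.
resnet32 = [
    Op("Conv 3x3"),
    Residual(branches=[[Op("Conv 3x3")]], repeat=11),
    Op("Flatten"),
    Op("FC"),
]

print(count_convs(analognas_t500), count_convs(resnet32))
```

Under these assumptions the counts are 9 convolution boxes for AnalogNAS T500 and 12 for ResNet32. They reflect only what the diagram is described as showing, not the true layer counts of either network.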

### Key Observations

* AnalogNAS T500 has more branching and wider convolutions compared to ResNet32.

* AnalogNAS T500 is annotated as the "shallower and smaller network"; ResNet32 is the deeper of the two, characterized by repeated blocks of Conv 3x3 and addition nodes.

* Both architectures end with Flatten and FC layers.

### Interpretation

The diagram illustrates the architectural differences between AnalogNAS T500 and ResNet32. AnalogNAS T500 uses a more varied structure, with multiple branches and three convolution sizes (5x5, 1x1, 3x3), which may allow it to capture more intricate features. ResNet32, by contrast, uses a simpler structure built from a single Conv 3x3 block repeated eleven times (x11), the stacked residual pattern typical of ResNet architectures. The "BottleneckBlock vs. BasicBlock" annotation suggests that AnalogNAS T500 uses bottleneck blocks, which are commonly used to reduce computational cost in deep networks, where ResNet32 uses basic blocks. The "Shallower and smaller network" annotation appears among the AnalogNAS T500 annotations, so it indicates that AnalogNAS T500 has fewer layers and parameters than ResNet32.
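To illustrate why the "BottleneckBlock vs. BasicBlock" annotation matters for network size, the sketch below compares convolution weight counts for the two standard ResNet block types. It is a rough calculation under stated assumptions: the channel width of 64 and the reduction factor of 4 are illustrative values, not taken from the diagram, and the function names are hypothetical. The standard bottleneck has three convolutions (1x1, 3x3, 1x1), whereas the diagram shows only Conv 1x1 and Conv 3x3 boxes, so the AnalogNAS T500 block may differ.

```python
def conv_params(kernel, c_in, c_out):
    """Weights in a kernel x kernel convolution (bias and batch norm ignored)."""
    return kernel * kernel * c_in * c_out


def basic_block_params(channels):
    """Standard ResNet BasicBlock: two 3x3 convolutions at full width."""
    return 2 * conv_params(3, channels, channels)


def bottleneck_block_params(channels, reduction=4):
    """Standard ResNet bottleneck: 1x1 reduce, 3x3 at reduced width, 1x1 expand."""
    mid = channels // reduction
    return (
        conv_params(1, channels, mid)
        + conv_params(3, mid, mid)
        + conv_params(1, mid, channels)
    )


channels = 64  # assumed width, for illustration only
print(basic_block_params(channels))       # 73728
print(bottleneck_block_params(channels))  # 4352
```

At the same input and output width, the bottleneck needs far fewer weights because the expensive 3x3 convolution runs on a reduced number of channels.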