## Sequence Model Diagram: Unidirectional Recurrent Neural Network (RNN) for Language Modeling

### Overview

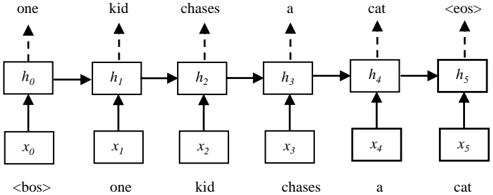

The image is a technical diagram illustrating the architecture and data flow of a unidirectional Recurrent Neural Network (RNN) processing a sentence. The input sequence is the start token `<bos>` followed by "one kid chases a cat"; the output sequence is the same sentence shifted one position to the left and terminated by the end token `<eos>`, so each step's output is the next token of the sentence. The diagram is drawn in a black-and-white schematic style.

### Components

The diagram is organized into three horizontal layers, connected by arrows indicating data flow:

1. **Bottom Layer (Input Sequence):** A series of boxes labeled `x₀` through `x₅`. Below each box is a corresponding text token.

2. **Middle Layer (Hidden States):** A series of boxes labeled `h₀` through `h₅`. These are connected sequentially by solid horizontal arrows pointing from left to right.

3. **Top Layer (Output Sequence):** A series of text tokens positioned above each hidden state box, connected by dashed vertical arrows pointing upward.

**Legend/Labels:**

* **Input Tokens (Bottom):** `<bos>`, `one`, `kid`, `chases`, `a`, `cat`

* **Hidden State Variables (Middle):** `h₀`, `h₁`, `h₂`, `h₃`, `h₄`, `h₅`

* **Output Tokens (Top):** `one`, `kid`, `chases`, `a`, `cat`, `<eos>`

* **Input Variable Labels (Bottom):** `x₀`, `x₁`, `x₂`, `x₃`, `x₄`, `x₅`
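The input and output token rows listed above differ only by a one-position shift. A minimal sketch of how such a pair could be built from the raw sentence is shown here; the helper name `make_lm_pairs` and the whitespace tokenization are assumptions made for illustration and are not taken from the diagram.

```python
# Build the bottom (input) and top (output) token rows of the diagram.
BOS, EOS = "<bos>", "<eos>"

def make_lm_pairs(sentence):
    """Return (inputs, targets); targets is inputs shifted left by one token."""
    tokens = sentence.split()      # naive whitespace tokenization (assumption)
    inputs = [BOS] + tokens        # fed in as x_0 ... x_5
    targets = tokens + [EOS]       # tokens shown above h_0 ... h_5
    return inputs, targets

inputs, targets = make_lm_pairs("one kid chases a cat")
for t, (x, y) in enumerate(zip(inputs, targets)):
    print(f"t={t}: input={x!r:10} target={y!r}")
```

Every target at step `t` is the input at step `t+1`, which is the alignment the diagram draws.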

### Detailed Analysis

The diagram depicts a sequence of 6 time steps (t=0 to t=5). The flow is strictly left-to-right (unidirectional).

**Step-by-Step Breakdown:**

* **Time Step 0:**
    * Input (`x₀`): `<bos>` (Begin-of-Sequence token)
    * Hidden State (`h₀`): Computed from `x₀` and an initial state (not shown).
    * Output: The word `one` is generated from `h₀`.

* **Time Step 1:**
    * Input (`x₁`): `one`
    * Hidden State (`h₁`): Computed from `x₁` and the previous hidden state `h₀`.
    * Output: The word `kid` is generated from `h₁`.

* **Time Step 2:**
    * Input (`x₂`): `kid`
    * Hidden State (`h₂`): Computed from `x₂` and `h₁`.
    * Output: The word `chases` is generated from `h₂`.

* **Time Step 3:**
    * Input (`x₃`): `chases`
    * Hidden State (`h₃`): Computed from `x₃` and `h₂`.
    * Output: The word `a` is generated from `h₃`.

* **Time Step 4:**
    * Input (`x₄`): `a`
    * Hidden State (`h₄`): Computed from `x₄` and `h₃`.
    * Output: The word `cat` is generated from `h₄`.

* **Time Step 5:**
    * Input (`x₅`): `cat`
    * Hidden State (`h₅`): Computed from `x₅` and `h₄`.
    * Output: The special token `<eos>` (End-of-Sequence) is generated from `h₅`.

**Key Relationships:**

* **Solid Horizontal Arrows:** Represent the recurrent connection: the hidden state from the previous time step, `hₜ₋₁`, is passed forward as an input to the computation of `hₜ`.

* **Solid Vertical Arrows (Bottom to Middle):** Show that each input `xₜ` is used to compute the corresponding hidden state `hₜ`.

* **Dashed Vertical Arrows (Middle to Top):** Indicate that each output token is generated (predicted) based on the current hidden state `hₜ`.
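The three arrow types can be sketched as a small forward pass. This is an illustrative sketch only: it assumes an Elman-style cell with a `tanh` activation, one-hot inputs, no bias terms, a hidden size of 4, and random untrained weights. None of these details appear in the diagram, and names such as `rnn_forward`, `W_xh`, `W_hh`, and `W_hy` are hypothetical.

```python
import math
import random

VOCAB = ["<bos>", "one", "kid", "chases", "a", "cat", "<eos>"]
HIDDEN = 4  # hypothetical hidden size, chosen only for illustration

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def one_hot(token):
    return [1.0 if token == w else 0.0 for w in VOCAB]

def softmax(z):
    peak = max(z)                          # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def rnn_forward(tokens, W_xh, W_hh, W_hy):
    """Run the unrolled RNN; return hidden states and next-token distributions."""
    h = [0.0] * HIDDEN                     # initial state (not drawn in the diagram)
    hiddens, probs = [], []
    for token in tokens:
        a = matvec(W_xh, one_hot(token))   # solid vertical arrow: x_t -> h_t
        b = matvec(W_hh, h)                # solid horizontal arrow: h_{t-1} -> h_t
        h = [math.tanh(ai + bi) for ai, bi in zip(a, b)]
        hiddens.append(h)
        probs.append(softmax(matvec(W_hy, h)))  # dashed arrow: h_t -> output
    return hiddens, probs

rng = random.Random(0)
W_xh = rand_matrix(HIDDEN, len(VOCAB), rng)
W_hh = rand_matrix(HIDDEN, HIDDEN, rng)
W_hy = rand_matrix(len(VOCAB), HIDDEN, rng)

inputs = ["<bos>", "one", "kid", "chases", "a", "cat"]
hiddens, probs = rnn_forward(inputs, W_xh, W_hh, W_hy)
for t, p in enumerate(probs):
    guess = VOCAB[p.index(max(p))]
    print(f"t={t}: input={inputs[t]!r}, untrained guess={guess!r}")
```

Because the weights are random, the printed guesses are arbitrary; only a trained model would reproduce the output row of the diagram. The sketch does preserve the structural property that `hₜ` depends only on inputs up to step `t`.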

### Key Observations

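1. **Teacher Forcing / Sequence Alignment:** The input and output rows are the same sentence offset by one position: the token shown above `hₜ` is the token fed in as `xₜ₊₁`. For example, `one` appears as the output at `t=0` and as the input at `t=1`. In training, this alignment corresponds to teacher forcing, where the ground-truth next token (rather than the model's own prediction) is supplied as the next input. At generation time, the same picture describes feeding each predicted token back in as the next input. The diagram is consistent with either reading.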

2. **Special Tokens:** The use of `<bos>` and `<eos>` tokens is a standard practice to explicitly mark the beginning and end of a sequence for the model.

3. **Unidirectional Flow:** Information flows only from left to right. The hidden state `h₃`, computed when "chases" is read, depends on `<bos>`, "one", "kid", and "chases", but not on the later words "a" and "cat". This is characteristic of a standard (unidirectional) RNN, as opposed to a bidirectional RNN.

4. **Sentence Structure:** The processed sentence is grammatically correct: "one kid chases a cat".
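The difference between teacher forcing and feeding predictions back in (the first observation above) can be illustrated with a toy example. The sketch below replaces the RNN with a hypothetical lookup table, `TOY_MODEL`, that is deliberately wrong after `kid`; it is not a trained model, and the extra tokens `sees`, `the`, and `dog` are invented purely to make the two modes diverge.

```python
# Toy next-token predictor standing in for a trained model (hypothetical).
# It is deliberately wrong after "kid" so the two decoding modes diverge.
TOY_MODEL = {
    "<bos>": "one", "one": "kid", "kid": "sees",
    "chases": "a", "a": "cat", "cat": "<eos>",
    "sees": "the", "the": "dog", "dog": "<eos>",
}

def predict_next(token):
    return TOY_MODEL[token]

def teacher_forced(reference):
    """Feed ground-truth tokens as inputs, regardless of what was predicted."""
    inputs = ["<bos>"] + reference
    return [predict_next(x) for x in inputs]

def free_running(max_steps=10):
    """Feed each prediction back in as the next input until <eos>."""
    token, outputs = "<bos>", []
    for _ in range(max_steps):
        token = predict_next(token)
        outputs.append(token)
        if token == "<eos>":
            break
    return outputs

reference = ["one", "kid", "chases", "a", "cat"]
print(teacher_forced(reference))  # one wrong token, then back on track
print(free_running())             # the error propagates to later steps
```

Under teacher forcing the mistake at `t=2` stays isolated because the next input is still the reference token `chases`. In free-running mode the wrong token becomes the next input, so every later step is affected.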

### Interpretation

This diagram is a canonical representation of a **Recurrent Neural Network (RNN) unrolled through time** for a **next-word prediction** or **language modeling** task.

* **What it demonstrates:** It visually explains how an RNN processes sequential data step-by-step, maintaining a "memory" (the hidden state `h`) that gets updated at each time step. The model's goal at each step is to use the current input and its memory of the past to predict the next element in the sequence.

* **How elements relate:** The core relationship is the **temporal dependency**. The hidden state `hₜ` is a function of both the current input `xₜ` and the previous hidden state `hₜ₋₁`. This allows the network to carry forward contextual information. The output at each step is a function of the current hidden state `hₜ`.

* **Underlying Process:** The diagram abstracts away the internal neural network layers (e.g., weight matrices, activation functions like tanh or ReLU) that perform the actual computation within each box (`hₜ = f(xₜ, hₜ₋₁)`). It focuses on the high-level data flow and sequence alignment.

* **Purpose:** This type of visualization is fundamental for teaching and understanding sequence modeling architectures. It clarifies concepts like recurrence, hidden state propagation, and the difference between input, hidden, and output sequences. The specific example sentence makes the abstract concept concrete.
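For reference, the generic recurrence `hₜ = f(xₜ, hₜ₋₁)` is often instantiated as follows. This is one common (Elman-style) choice, assumed here for illustration; the diagram does not specify the cell type, and the weight names $W_{xh}$, $W_{hh}$, $W_{hy}$ and biases $b_h$, $b_y$ are notation introduced for this sketch.

$$
\begin{aligned}
h_t &= \tanh\left(W_{xh}\,x_t + W_{hh}\,h_{t-1} + b_h\right), \\
\hat{y}_t &= \operatorname{softmax}\left(W_{hy}\,h_t + b_y\right), \\
\mathcal{L} &= -\sum_{t=0}^{5} \log \hat{y}_t[w_{t+1}]
\end{aligned}
$$

Here $h_{-1}$ is the initial state that the diagram does not draw, $\hat{y}_t$ is the predicted distribution over the vocabulary at step $t$, and $w_{t+1}$ is the target token shown above $h_t$ (so $w_1$ is `one` and $w_6$ is `<eos>`). The loss $\mathcal{L}$ is the usual cross-entropy summed over the six time steps.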