
## Diagram: Recurrent Neural Network (RNN) Unfolding

### Overview

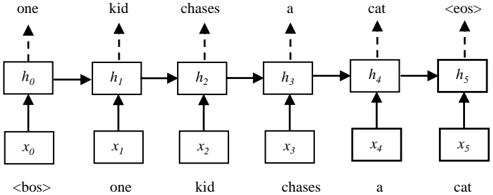

The image depicts a recurrent neural network (RNN) unfolded over time as it processes the sentence "one kid chases a cat". It shows the input sequence, the hidden states, and the connections between them, illustrating how the RNN handles sequential data by updating a hidden state at each time step.

### Components/Axes

The diagram consists of the following components:

* **Input Sequence:** The sentence "one kid chases a cat" appears both as the words themselves and as input tokens x1 to x5. It is preceded by a beginning-of-sequence token `<bos>` (x0) and followed by an end-of-sequence token `<eos>`.

* **Hidden States:** Represented by rectangles labeled h0 to h5. These states capture information about the sequence up to a given time step.

* **Input Tokens:** Represented by rectangles labeled x0 to x5. These are the individual words or tokens fed into the network.

* **Connections:** Arrows indicate the flow of information. Solid arrows represent the flow of the hidden state from one time step to the next. Dashed arrows represent the input of the current token into the hidden state.
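The two arrow types described above can be written out as a small edge list. This is only a sketch of the structure as described here: the helper name `unfolded_edges` is hypothetical, and the node labels simply follow the h0 to h5 and x0 to x5 naming used in the diagram.

```python
# Hypothetical helper: list the arrows of an RNN unfolded over `steps` time steps.
def unfolded_edges(steps):
    edges = []
    for t in range(steps):
        # Dashed arrow: the current token feeds the hidden state of the same step.
        edges.append((f"x{t}", f"h{t}", "dashed"))
        if t > 0:
            # Solid arrow: the previous hidden state feeds the current one.
            edges.append((f"h{t - 1}", f"h{t}", "solid"))
    return edges

edges = unfolded_edges(6)
for src, dst, style in edges:
    print(f"{src} -> {dst} ({style})")
```

For six time steps this gives six dashed input arrows and five solid recurrent arrows.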

### Detailed Analysis / Content Details

The diagram shows a sequence of six time steps (0 to 5): one for the beginning-of-sequence token `<bos>` and one for each of the words "one", "kid", "chases", "a", and "cat". The end-of-sequence token `<eos>` follows the last word.

* **Step 0:** The initial hidden state h0 receives the beginning-of-sequence token `<bos>` (x0) as input.

* **Step 1:** The hidden state h1 receives the word "one" (x1) and the previous hidden state h0.

* **Step 2:** The hidden state h2 receives the word "kid" (x2) and the previous hidden state h1.

* **Step 3:** The hidden state h3 receives the word "chases" (x3) and the previous hidden state h2.

* **Step 4:** The hidden state h4 receives the word "a" (x4) and the previous hidden state h3.

* **Step 5:** The hidden state h5 receives the word "cat" (x5) and the previous hidden state h4. The final hidden state h5 also receives the end-of-sequence token `<eos>`.
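The steps above can be sketched as a loop that carries one hidden state forward. The diagram does not give an update rule, so everything numeric here is an assumption made for illustration: the `tanh` activation, the random weights `W_xh` and `W_hh`, the hidden size of 4, and the all-zero state used before step 0.

```python
import math
import random

tokens = ["<bos>", "one", "kid", "chases", "a", "cat"]  # x0 .. x5
vocab = {tok: i for i, tok in enumerate(tokens)}

hidden_size = 4  # assumed; the diagram gives no dimensions
rng = random.Random(0)

def rand_matrix(rows, cols):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

W_xh = rand_matrix(hidden_size, len(vocab))   # input-to-hidden weights (assumed)
W_hh = rand_matrix(hidden_size, hidden_size)  # hidden-to-hidden weights (assumed)
b_h = [0.0] * hidden_size                     # bias (assumed)

def rnn_step(token, h_prev):
    """One update: combine the current token with the previous hidden state."""
    col = vocab[token]  # a one-hot input selects a single column of W_xh
    h_new = []
    for i in range(hidden_size):
        recurrent = sum(W_hh[i][j] * h_prev[j] for j in range(hidden_size))
        h_new.append(math.tanh(W_xh[i][col] + recurrent + b_h[i]))
    return h_new

h = [0.0] * hidden_size  # assumed zero state before step 0
states = []
for t, token in enumerate(tokens):
    h = rnn_step(token, h)  # h_t depends on x_t and h_{t-1}
    states.append(h)
    print(f"h{t} <- x{t}={token!r}")
```

The same `rnn_step` function and the same weights are reused at every step; only the token and the incoming hidden state change, which is what the unfolded view makes visible.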

The tokens are:

* x0: `<bos>`

* x1: "one"

* x2: "kid"

* x3: "chases"

* x4: "a"

* x5: "cat"
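As a rough illustration of how this labeling arises, the sentence can be prefixed with `<bos>` and each token numbered by position. The helper `label_inputs` is hypothetical, and the `targets` list reflects one common next-token reading that the figure itself does not confirm.

```python
BOS, EOS = "<bos>", "<eos>"

def label_inputs(sentence):
    """Prefix the sentence with <bos> and label each token x0, x1, ..."""
    tokens = [BOS] + sentence.split()
    return {f"x{t}": tok for t, tok in enumerate(tokens)}

labels = label_inputs("one kid chases a cat")
for name, tok in labels.items():
    print(f"{name}: {tok}")

# Assumption: in a next-token setup, the target at each step is the following
# token, so <eos> would be the target after "cat". The figure does not confirm this.
targets = list(labels.values())[1:] + [EOS]
```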

### Key Observations

The diagram illustrates the recurrent nature of the network: the hidden state at each time step depends on both the current input and the previous hidden state. This lets the network keep a memory of the sequence so far and use it when processing later inputs. The `<bos>` and `<eos>` tokens mark where a sequence starts and ends, which suggests the network is meant to handle variable-length sequences.
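The dependency described here is often written as a single recurrence. The form below is one common choice, not something the diagram specifies: the `tanh` activation and the symbols $W_{xh}$, $W_{hh}$, and $b_h$ are assumptions introduced for illustration.

$$
h_t = \tanh\left(W_{xh}\, x_t + W_{hh}\, h_{t-1} + b_h\right), \qquad t = 0, 1, \dots, 5
$$

Here $x_t$ is the input token at step $t$ and $h_{t-1}$ is the previous hidden state. For $t = 0$ there is no earlier step, so $h_{-1}$ is typically taken to be a fixed initial vector such as zeros.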

### Interpretation

This diagram demonstrates the fundamental principle of RNNs: processing sequential data by maintaining a hidden state that captures information about the past. Unfolding the RNN into a series of time steps makes it easier to see how the network consumes the input one element at a time, and how the hidden state carries the context needed to make predictions or perform other tasks.

The diagram is a high-level, conceptual illustration of a kind commonly used to explain RNNs. It does not specify the network's internal workings, such as the activation functions or the weight matrices.