## Diagram: Sequential Hidden State and Input Token Flow

### Overview

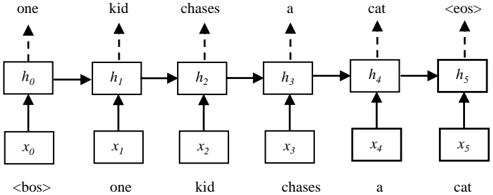

The diagram illustrates a sequential processing flow between hidden states (`h0` to `h5`) and input tokens (`x0` to `x5`). Arrows indicate directional relationships, with vertical connections between `h_i` and `x_i` boxes and horizontal progression from `h0` to `h5`.

### Components/Axes

- **Hidden States (`h0`–`h5`)**: Six sequentially connected boxes on the top row, each labeled with a word above:
  - `h0`: "one"
  - `h1`: "kid"
  - `h2`: "chases"
  - `h3`: "a"
  - `h4`: "cat"
  - `h5`: "<eos>"

- **Input Tokens (`x0`–`x5`)**: Six boxes on the bottom row, each labeled with a token below:
  - `x0`: "<bos>"
  - `x1`: "one"
  - `x2`: "kid"
  - `x3`: "chases"
  - `x4`: "a"
  - `x5`: "cat"

- **Arrows**:
  - Vertical arrows connect each `h_i` to `x_i` (e.g., `h0` ↔ `x0`).
  - Horizontal arrows link `h0` → `h1` → `h2` → `h3` → `h4` → `h5`.

### Detailed Analysis

- **Token-Hidden State Mapping**:
  - The label above each `h_i` matches the next input token `x_{i+1}` (e.g., the label above `h1`, "kid", is the same token as `x2`).
  - The first hidden state (`h0`) is linked to the `<bos>` token (`x0`). The final state (`h5`) is labeled `<eos>`, while its input `x5` is "cat".

- **Flow Direction**:
  - Input tokens (`x0`–`x5`) feed into hidden states (`h0`–`h5`), one per position.
  - Hidden states propagate forward from `h0` to `h5` without feedback loops.
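The one-position offset between the bottom-row inputs and the top-row labels can be sketched in a few lines of Python. This is an illustrative assumption about how such input/label pairs are commonly built for next-token prediction, not something the diagram itself specifies; the helper name `make_pairs` is hypothetical.

```python
def make_pairs(words, bos="<bos>", eos="<eos>"):
    """Return (inputs, targets), where targets are the inputs shifted left by one."""
    sequence = [bos] + list(words) + [eos]
    inputs = sequence[:-1]   # x0..x5: every token except <eos>
    targets = sequence[1:]   # labels above h0..h5: every token except <bos>
    return inputs, targets


inputs, targets = make_pairs(["one", "kid", "chases", "a", "cat"])

# Print each position as: input token -> hidden state -> label above it.
for i, (x, y) in enumerate(zip(inputs, targets)):
    print(f"x{i}={x!r} -> h{i} -> {y!r}")
```

Under this reading, the label above `h_i` is always `inputs[i + 1]`, except at the last position, where it is `<eos>`.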

### Key Observations

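1. **Sequential Dependency**: The flow suggests a left-to-right processing order, typical of autoregressive models. The explicit `h_i` → `h_{i+1}` chain most closely resembles an unrolled RNN, although causally masked Transformers are often drawn in a similar way.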

2. **Special Tokens**: `<bos>` and `<eos>` mark the start and end of the sequence, respectively.

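3. **Token Alignment**: Each `h_i` sits directly above `x_i`, giving a 1:1 correspondence between input tokens and hidden states. The label above each `h_i` is offset by one position (e.g., the "cat" label above `h4` equals `x5`), which is consistent with next-token prediction.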
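The left-to-right dependency described in these observations can be illustrated with a toy recurrence. The sketch below assumes a scalar RNN-style update, `h_i = tanh(w_x * x_i + w_h * h_{i-1})`. The weights, the integer token ids, and the function name `unroll` are all hypothetical and chosen only for illustration; the diagram does not state how the hidden states are computed.

```python
import math


def unroll(token_ids, w_x=0.5, w_h=0.8, h_init=0.0):
    """Toy scalar recurrence: h_i = tanh(w_x * x_i + w_h * h_{i-1})."""
    states = []
    h_prev = h_init
    for x in token_ids:
        # Each state depends only on the current input and the previous state.
        h = math.tanh(w_x * x + w_h * h_prev)
        states.append(h)
        h_prev = h
    return states


tokens = ["<bos>", "one", "kid", "chases", "a", "cat"]
vocab = {tok: idx for idx, tok in enumerate(tokens)}  # hypothetical ids 0..5
token_ids = [vocab[t] for t in tokens]
states = unroll(token_ids)  # one hidden state per input position
```

Because each state only looks backward, running the recurrence on a prefix of the inputs reproduces the same leading states, which is the code-level counterpart of having no feedback loops in the diagram.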

### Interpretation

This diagram most plausibly represents an **autoregressive language model** performing **next-token prediction**, for example a recurrent network unrolled over time. Only a single chain of hidden states is shown, so it is less likely to depict a full encoder-decoder or sequence-to-sequence pipeline. Each hidden state `h_i` encodes contextual information from the input tokens up to `x_i` and is labeled with the token that follows. The inclusion of `<bos>` and `<eos>` indicates that the model processes a complete sentence, and the absence of backward arrows suggests unidirectional (left-to-right) rather than bidirectional processing. The diagram alone does not show whether attention is used. The one-position offset between each label and its input, drawn without any explicit output layer, suggests a simplified depiction, possibly for educational or illustrative purposes.