## Bar Chart: Prediction Flip Rates for Llama-3.2 Models

### Overview

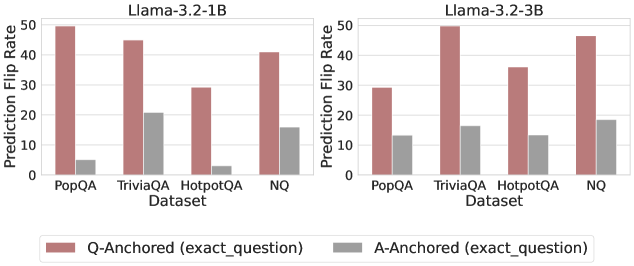

The image displays two grouped bar charts side-by-side, comparing the "Prediction Flip Rate" of two language models (Llama-3.2-1B and Llama-3.2-3B) across four question-answering datasets. Each chart compares two anchoring methods: "Q-Anchored (exact_question)" and "A-Anchored (exact_question)".

### Components/Axes

* **Main Titles (Top of each subplot):**

    * Left Chart: `Llama-3.2-1B`

    * Right Chart: `Llama-3.2-3B`

* **Y-Axis (Vertical, both charts):**

    * **Label:** `Prediction Flip Rate`

    * **Scale:** Linear, from 0 to 50, with major tick marks at 0, 10, 20, 30, 40, 50.

* **X-Axis (Horizontal, both charts):**

    * **Label:** `Dataset`

    * **Categories (from left to right):** `PopQA`, `TriviaQA`, `HotpotQA`, `NQ`.

* **Legend (Bottom center, spanning both charts):**

    * **Reddish-brown square:** `Q-Anchored (exact_question)`

    * **Gray square:** `A-Anchored (exact_question)`

* **Spatial Layout:** The two charts are positioned horizontally adjacent. The legend is placed below both charts, centered.

### Detailed Analysis

**Data Series & Approximate Values:**

The following values are visual estimates based on bar height relative to the y-axis scale.

**Chart 1: Llama-3.2-1B**

* **Trend:** For all four datasets, the Q-Anchored (reddish-brown) bar is significantly taller than the A-Anchored (gray) bar.

* **Data Points:**

    * **PopQA:** Q-Anchored ≈ 50, A-Anchored ≈ 5.

    * **TriviaQA:** Q-Anchored ≈ 45, A-Anchored ≈ 20.

    * **HotpotQA:** Q-Anchored ≈ 28, A-Anchored ≈ 3.

    * **NQ:** Q-Anchored ≈ 40, A-Anchored ≈ 15.

**Chart 2: Llama-3.2-3B**

* **Trend:** As with the 1B model, the Q-Anchored bar is taller than the A-Anchored bar for every dataset. The dataset with the highest Q-Anchored flip rate differs, however: TriviaQA here, versus PopQA for the 1B model.

* **Data Points:**

    * **PopQA:** Q-Anchored ≈ 28, A-Anchored ≈ 13.

    * **TriviaQA:** Q-Anchored ≈ 50, A-Anchored ≈ 16.

    * **HotpotQA:** Q-Anchored ≈ 35, A-Anchored ≈ 13.

    * **NQ:** Q-Anchored ≈ 45, A-Anchored ≈ 18.

### Key Observations

1. **Consistent Disparity:** Across both models and all four datasets, the Prediction Flip Rate is consistently and substantially higher for the Q-Anchored method compared to the A-Anchored method.

2. **Model Size Effect:** The two models rank the datasets differently by Q-Anchored flip rate. For the 1B model, PopQA has the highest rate (~50), while for the 3B model, TriviaQA has the highest (~50). Relative to the 1B model, the 3B model's Q-Anchored rate is notably lower on PopQA (~28 vs. ~50) but higher on HotpotQA (~35 vs. ~28), TriviaQA (~50 vs. ~45), and NQ (~45 vs. ~40).

3. **Dataset Sensitivity:** `HotpotQA` shows the lowest A-Anchored flip rate in the 1B model (≈3) and ties with `PopQA` for the lowest in the 3B model (≈13 each), suggesting predictions anchored to its answers are comparatively stable.

4. **Scale of Difference:** The ratio of Q-Anchored to A-Anchored flip rates is most extreme for `PopQA` (≈50/5, about 10×) and `HotpotQA` (≈28/3, about 9×) in the 1B model; every other model-dataset pair has a ratio of roughly 2× to 3×.
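The comparisons in these observations can be checked with a few lines of arithmetic. The sketch below is illustrative only: the numbers are the visual estimates listed under Detailed Analysis, not exact values from the underlying data, and the names `FLIP_RATES`, `summarize`, and `top_dataset` are hypothetical helpers introduced here.

```python
# Visual estimates read off the chart, in y-axis units: (Q-Anchored, A-Anchored).
# These are approximations, not exact values from the source data.
FLIP_RATES = {
    "Llama-3.2-1B": {
        "PopQA": (50, 5),
        "TriviaQA": (45, 20),
        "HotpotQA": (28, 3),
        "NQ": (40, 15),
    },
    "Llama-3.2-3B": {
        "PopQA": (28, 13),
        "TriviaQA": (50, 16),
        "HotpotQA": (35, 13),
        "NQ": (45, 18),
    },
}


def summarize(rates):
    """Return the Q-minus-A gap and the Q/A ratio for each dataset."""
    return {
        dataset: {"gap": q - a, "ratio": q / a}
        for dataset, (q, a) in rates.items()
    }


def top_dataset(rates):
    """Return the dataset with the highest Q-Anchored estimate."""
    return max(rates, key=lambda dataset: rates[dataset][0])


for model, rates in FLIP_RATES.items():
    print(model, "| highest Q-Anchored:", top_dataset(rates))
    for dataset, stats in summarize(rates).items():
        print(f"  {dataset:9s} gap={stats['gap']:2d} ratio={stats['ratio']:.1f}")
```

Because the inputs are read off bar heights, small differences in the resulting gaps and ratios should not be over-interpreted; only the large contrasts (for example, roughly 10× versus 2× to 3×) are robust to estimation error.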

### Interpretation

This visualization demonstrates a fundamental difference in model sensitivity based on anchoring strategy. The "Prediction Flip Rate" likely measures how often a model's output changes when a specific component (the question or the answer) is held constant ("anchored") while other parts of the input vary.

* **Q-Anchored vs. A-Anchored:** The consistently higher flip rates for the Q-Anchored method suggest that the models' predictions are far more volatile when the exact question is fixed but the answer context changes, compared to when the exact answer is fixed. If that reading is right, the models' outputs are more sensitive to variations in the answer presentation or supporting context than to variations in the question phrasing.

* **Model Scaling:** The change in pattern between the 1B and 3B models indicates that scaling the model size alters its sensitivity profile across different types of QA datasets. The 3B model's lower Q-Anchored flip rate on PopQA (≈28, versus ≈50 for the 1B model) might suggest improved robustness or a different internal representation for that specific knowledge domain, although its Q-Anchored rates on the other three datasets are higher than the 1B model's, so the effect is not uniform.

* **Dataset Characteristics:** The very low A-Anchored flip rate for HotpotQA in the 1B model (≈3) could be due to the nature of the dataset (e.g., multi-hop reasoning), where fixing the answer provides a very strong, unambiguous signal that stabilizes the model's output regardless of other contextual changes. The effect is weaker in the 3B model, where HotpotQA's A-Anchored rate (≈13) matches PopQA's.
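The figure itself does not define "Prediction Flip Rate". One plausible reading, consistent with the interpretation above, is the share of examples whose prediction changes between a baseline run and a perturbed run. The sketch below illustrates that reading only; the function name `flip_rate`, the percentage scale, and the toy predictions are assumptions made for illustration, not the method used to produce the chart.

```python
def flip_rate(baseline, perturbed):
    """Percentage of examples whose prediction differs between two runs.

    Assumed definition: the source figure does not specify how the metric
    is computed, so this is one plausible interpretation.
    """
    if len(baseline) != len(perturbed):
        raise ValueError("prediction lists must be the same length")
    if not baseline:
        raise ValueError("need at least one prediction")
    flips = sum(b != p for b, p in zip(baseline, perturbed))
    return 100.0 * flips / len(baseline)


# Toy predictions, invented for illustration only.
before = ["Paris", "1969", "Einstein", "blue"]
after = ["Paris", "1970", "Einstein", "green"]
print(flip_rate(before, after))  # 2 of 4 predictions changed -> 50.0
```

Under this reading, a Q-Anchored value near 50 would mean that about half of the predictions changed under the corresponding perturbation.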

In summary, the data strongly suggests that for these Llama-3.2 models, anchoring on the question leads to much less stable predictions than anchoring on the answer, and this relationship is modulated by both model scale and the specific knowledge domain of the dataset.