# Technical Data Extraction: Latency Comparison of LLM Inference Frameworks

## 1. Document Overview

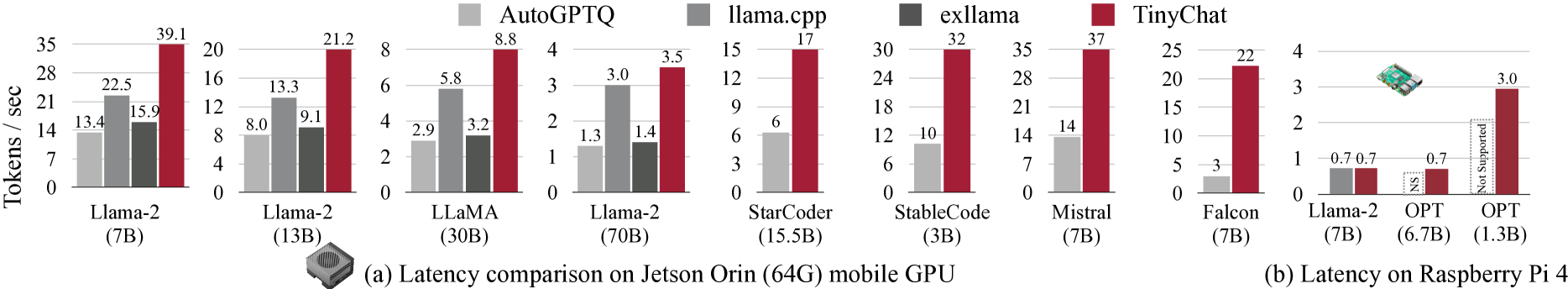

This image contains a series of bar charts comparing the performance (throughput) of four different Large Language Model (LLM) inference frameworks across various models and two hardware platforms: **Jetson Orin (64G) mobile GPU** and **Raspberry Pi 4**.

* **Primary Metric:** Tokens / sec (Higher is better).

* **Language:** English.

---

## 2. Legend and Component Identification

The legend is positioned at the top center of the image.

| Color | Framework | Description |
| :--- | :--- | :--- |
| Light Gray | **AutoGPTQ** | Quantization framework. |
| Medium Gray | **llama.cpp** | C++ based inference engine. |
| Dark Gray | **exllama** | Optimized Llama inference. |
| Dark Red | **TinyChat** | The framework being highlighted for superior performance. |

---

## 3. Section (a): Latency comparison on Jetson Orin (64G) mobile GPU

This section contains eight individual bar charts, one per model. **TinyChat** (Dark Red) has the highest throughput in every chart. Its margin over the fastest competing framework ranges from about 1.2x (Llama-2 70B, 3.5 vs 3.0 tokens/sec) to about 7x (Falcon 7B, 22 vs 3 tokens/sec).

### Data Table: Jetson Orin Performance (Tokens/sec)

| Model (Size) | AutoGPTQ (Light Gray) | llama.cpp (Med Gray) | exllama (Dark Gray) | TinyChat (Red) |
| :--- | :---: | :---: | :---: | :---: |
| **Llama-2 (7B)** | 13.4 | 22.5 | 15.9 | 39.1 |
| **Llama-2 (13B)** | 8.0 | 13.3 | 9.1 | 21.2 |
| **LLaMA (30B)** | 2.9 | 5.8 | 3.2 | 8.8 |
| **Llama-2 (70B)** | 1.3 | 3.0 | 1.4 | 3.5 |
| **StarCoder (15.5B)** | 6 | N/A | N/A | 17 |
| **StableCode (3B)** | 10 | N/A | N/A | 32 |
| **Mistral (7B)** | 14 | N/A | N/A | 37 |
| **Falcon (7B)** | 3 | N/A | N/A | 22 |

*Note: "N/A" indicates the framework was not represented in that specific model's chart.*
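
The speedups implied by the table can be checked with a short script. The following is a minimal sketch, not part of the original figure: the dictionary layout and the helper name `tinychat_speedups` are illustrative assumptions, and "N/A" cells are represented as `None`.

```python
# Throughput in tokens/sec, transcribed from the Jetson Orin table above.
# None marks a framework with no bar in that model's chart ("N/A").
ORIN_TOKENS_PER_SEC = {
    "Llama-2 (7B)": {"AutoGPTQ": 13.4, "llama.cpp": 22.5, "exllama": 15.9, "TinyChat": 39.1},
    "Llama-2 (13B)": {"AutoGPTQ": 8.0, "llama.cpp": 13.3, "exllama": 9.1, "TinyChat": 21.2},
    "LLaMA (30B)": {"AutoGPTQ": 2.9, "llama.cpp": 5.8, "exllama": 3.2, "TinyChat": 8.8},
    "Llama-2 (70B)": {"AutoGPTQ": 1.3, "llama.cpp": 3.0, "exllama": 1.4, "TinyChat": 3.5},
    "StarCoder (15.5B)": {"AutoGPTQ": 6, "llama.cpp": None, "exllama": None, "TinyChat": 17},
    "StableCode (3B)": {"AutoGPTQ": 10, "llama.cpp": None, "exllama": None, "TinyChat": 32},
    "Mistral (7B)": {"AutoGPTQ": 14, "llama.cpp": None, "exllama": None, "TinyChat": 37},
    "Falcon (7B)": {"AutoGPTQ": 3, "llama.cpp": None, "exllama": None, "TinyChat": 22},
}


def tinychat_speedups(table):
    """Return {model: (fastest_baseline, speedup)} for TinyChat vs. the fastest other framework."""
    result = {}
    for model, row in table.items():
        # Keep only competing frameworks that actually have a measurement.
        baselines = {
            name: tps
            for name, tps in row.items()
            if name != "TinyChat" and tps is not None
        }
        fastest = max(baselines, key=baselines.get)
        result[model] = (fastest, row["TinyChat"] / baselines[fastest])
    return result


if __name__ == "__main__":
    for model, (baseline, speedup) in tinychat_speedups(ORIN_TOKENS_PER_SEC).items():
        print(f"{model}: {speedup:.2f}x over {baseline}")
```

Comparing against the fastest available baseline, rather than a fixed one, keeps the comparison meaningful for the four models where only AutoGPTQ has a bar.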

---

## 4. Section (b): Latency on Raspberry Pi 4

This section contains three bar charts. The hardware is represented by a small image of a Raspberry Pi board. Only llama.cpp and TinyChat appear in this section. TinyChat matches llama.cpp on Llama-2 (7B) and also runs the two OPT models, which llama.cpp is marked as not supporting.

### Data Table: Raspberry Pi 4 Performance (Tokens/sec)

| Model (Size) | llama.cpp (Med Gray) | TinyChat (Red) | Notes |
| :--- | :---: | :---: | :--- |
| **Llama-2 (7B)** | 0.7 | 0.7 | Performance is equal. |
| **OPT (6.7B)** | NS | 0.7 | **NS** (Not Supported) for llama.cpp. |
| **OPT (1.3B)** | Not Supported | 3.0 | llama.cpp indicated as "Not Supported". |
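
The section headings refer to latency while the charts report throughput, so a conversion may help when reading these numbers. The sketch below assumes steady-state generation, where the average time per generated token is simply the reciprocal of tokens/sec; it ignores prompt processing and startup cost. The names `PI4_TOKENS_PER_SEC` and `seconds_per_token` are illustrative, and unsupported entries are represented as `None`.

```python
# Throughput in tokens/sec, transcribed from the Raspberry Pi 4 table above.
# None marks a model that the chart labels as not supported.
PI4_TOKENS_PER_SEC = {
    "Llama-2 (7B)": {"llama.cpp": 0.7, "TinyChat": 0.7},
    "OPT (6.7B)": {"llama.cpp": None, "TinyChat": 0.7},
    "OPT (1.3B)": {"llama.cpp": None, "TinyChat": 3.0},
}


def seconds_per_token(tokens_per_sec):
    """Average seconds per generated token, or None when there is no measurement."""
    if tokens_per_sec is None:
        return None
    return 1.0 / tokens_per_sec


if __name__ == "__main__":
    for model, row in PI4_TOKENS_PER_SEC.items():
        for framework, tps in row.items():
            latency = seconds_per_token(tps)
            shown = "not supported" if latency is None else f"{latency:.2f} s/token"
            print(f"{model} on {framework}: {shown}")
```

At 0.7 tokens/sec, each generated token takes roughly 1.4 seconds on average.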

---

## 5. Visual Trend Analysis & Observations

1. **Dominance of TinyChat:** In the Jetson Orin benchmarks, TinyChat consistently achieves the highest throughput. For the **Falcon (7B)** model, TinyChat is over 7 times faster than AutoGPTQ (22 vs 3 tokens/sec).

2. **Scaling Trends:** As model size increases (e.g., Llama-2 7B to 70B), tokens per second decrease across all frameworks, as expected, since larger models require more computation and memory traffic per generated token.

3. **Framework Compatibility:**

   * **llama.cpp** and **exllama** are only shown for the Llama/LLaMA family of models on the Jetson Orin. **AutoGPTQ** appears in all eight Jetson Orin charts.

   * **TinyChat** shows broad compatibility across Llama-2, LLaMA, StarCoder, StableCode, Mistral, Falcon, and OPT.

4. **Edge Hardware Constraints:** On the Raspberry Pi 4, throughput drops sharply (0.7 tokens/sec for the 7B and 6.7B models, compared with 39.1 tokens/sec for TinyChat on Llama-2 7B on the Jetson Orin), highlighting the extreme resource constraints of the hardware. Of the two frameworks shown on this platform, only TinyChat runs the OPT models; llama.cpp is labeled "Not Supported" or "NS" for them.
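
The scaling trend in observation 2 can be quantified from the Jetson Orin table. The following is a minimal sketch that computes, for each framework, how many times slower Llama-2 (70B) runs than Llama-2 (7B); the names `LLAMA2_TOKENS_PER_SEC` and `slowdown_7b_to_70b` are illustrative assumptions, not part of the original figure.

```python
# Llama-2 throughput in tokens/sec on Jetson Orin, from the table in Section 3.
LLAMA2_TOKENS_PER_SEC = {
    "AutoGPTQ": {"7B": 13.4, "70B": 1.3},
    "llama.cpp": {"7B": 22.5, "70B": 3.0},
    "exllama": {"7B": 15.9, "70B": 1.4},
    "TinyChat": {"7B": 39.1, "70B": 3.5},
}


def slowdown_7b_to_70b(table):
    """Return {framework: throughput(7B) / throughput(70B)}."""
    return {name: row["7B"] / row["70B"] for name, row in table.items()}


if __name__ == "__main__":
    for framework, factor in slowdown_7b_to_70b(LLAMA2_TOKENS_PER_SEC).items():
        print(f"{framework}: 70B is {factor:.1f}x slower than 7B")
```

For a tenfold increase in parameter count, every framework in the table slows down by a factor between 7.5 and roughly 11.4.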