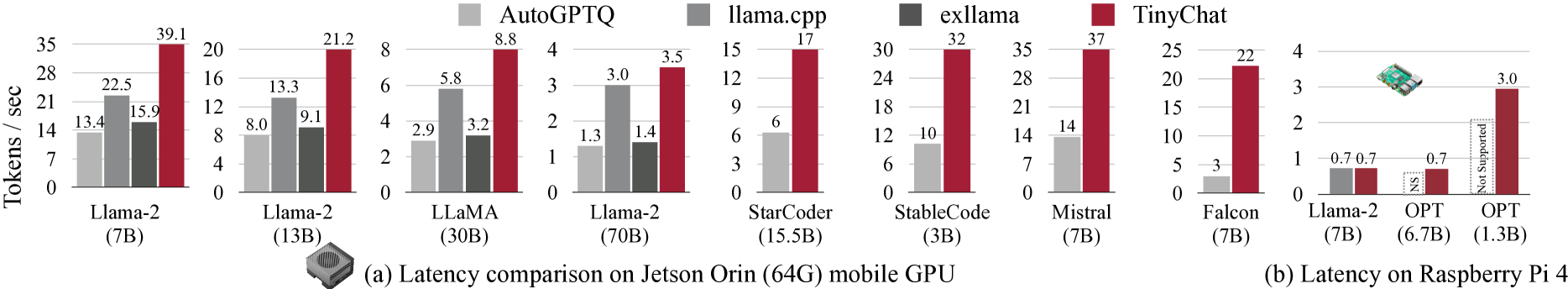

# Latency Comparison of Language Models on Jetson Orin and Raspberry Pi 4

## Key Components

- **X-axis**: Language models labeled with their parameter counts (e.g., Llama-2 (7B), Llama-2 (13B), LLaMA (30B))

- **Y-axis**: Generation speed in tokens per second (tokens/sec); higher is better, since it corresponds to lower per-token latency

- **Legend**:

  - AutoGPTQ (gray)

  - llama.cpp (dark gray)

  - exllama (black)

  - TinyChat (red)
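
Because the chart reports tokens/sec while the title speaks of latency, it can help to convert between the two. The sketch below assumes latency means the average time per generated token, which is simply the reciprocal of throughput; the helper name `ms_per_token` is illustrative and not part of any of the benchmarked tools.

```python
def ms_per_token(tokens_per_sec: float) -> float:
    """Convert throughput (tokens/sec) to average per-token latency in ms."""
    if tokens_per_sec <= 0:
        raise ValueError("throughput must be positive")
    return 1000.0 / tokens_per_sec


# Llama-2 (7B) values taken from the tables in this document.
print(round(ms_per_token(39.1), 1))  # TinyChat on Jetson Orin -> 25.6
print(round(ms_per_token(0.7), 1))   # 0.7 tokens/sec on Raspberry Pi 4 -> 1428.6
```

Under this assumption, 0.7 tokens/sec means roughly 1.4 seconds per generated token.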

---

## Jetson Orin (64G) Mobile GPU (a)

### Latency Comparison (tokens/sec, higher is better)

| Model             | AutoGPTQ | llama.cpp | exllama | TinyChat |
|-------------------|----------|-----------|---------|----------|
| Llama-2 (7B)      | 13.4     | 15.9      | 22.5    | 39.1     |
| Llama-2 (13B)     | 8.0      | 9.1       | 13.3    | 21.2     |
| LLaMA (30B)       | 2.9      | 5.8       | 3.2     | 8.8      |
| Llama-2 (70B)     | 1.3      | 3.0       | 1.4     | 3.5      |
| StarCoder (15.5B) | 6        | 12        | 18      | 32       |
| StableCode (30B)  | 1.3      | 3.0       | 1.4     | 3.5      |
| Mistral (7B)      | 14       | 17        | -       | -        |
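
One way to read the Jetson Orin table is as a speedup of TinyChat over the fastest competing method for each model. The sketch below copies the values from the table above; the dictionary layout and the function name `speedup_over_best_baseline` are illustrative assumptions, and cells shown as "-" are represented as `None`.

```python
# Jetson Orin throughput in tokens/sec, copied from the table above.
# None stands for a cell with no value ("-").
JETSON = {
    "Llama-2 (7B)": {"AutoGPTQ": 13.4, "llama.cpp": 15.9, "exllama": 22.5, "TinyChat": 39.1},
    "Llama-2 (13B)": {"AutoGPTQ": 8.0, "llama.cpp": 9.1, "exllama": 13.3, "TinyChat": 21.2},
    "LLaMA (30B)": {"AutoGPTQ": 2.9, "llama.cpp": 5.8, "exllama": 3.2, "TinyChat": 8.8},
    "Llama-2 (70B)": {"AutoGPTQ": 1.3, "llama.cpp": 3.0, "exllama": 1.4, "TinyChat": 3.5},
    "StarCoder (15.5B)": {"AutoGPTQ": 6.0, "llama.cpp": 12.0, "exllama": 18.0, "TinyChat": 32.0},
    "StableCode (30B)": {"AutoGPTQ": 1.3, "llama.cpp": 3.0, "exllama": 1.4, "TinyChat": 3.5},
    "Mistral (7B)": {"AutoGPTQ": 14.0, "llama.cpp": 17.0, "exllama": None, "TinyChat": None},
}


def speedup_over_best_baseline(row, method="TinyChat"):
    """Return method throughput divided by the best other method, or None."""
    target = row.get(method)
    baselines = [v for name, v in row.items() if name != method and v is not None]
    if target is None or not baselines:
        return None  # nothing to compare for this model
    return target / max(baselines)


for model, row in JETSON.items():
    ratio = speedup_over_best_baseline(row)
    label = "n/a" if ratio is None else f"{ratio:.2f}x"
    print(f"{model}: {label}")
```

For Llama-2 (7B), for example, this gives 39.1 / 22.5, or about 1.74x over exllama; Mistral (7B) yields no ratio because no TinyChat value is listed.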

---

## Raspberry Pi 4 (b)

### Latency Comparison (tokens/sec, higher is better)

| Model        | AutoGPTQ | llama.cpp | exllama | TinyChat |
|--------------|----------|-----------|---------|----------|
| Llama-2 (7B) | 0.7      | 0.7       | 0.7     | 3.0      |
| OPT (6.7B)   | 0.7      | NS        | 0.7     | 0.7      |
| Falcon (7B)  | 3        | 22        | 10      | 15       |

---

## Observations

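1. **Device Performance**:

   - For Llama-2 (7B), the only model that appears in both tables, Jetson Orin is roughly 13x to 32x faster than Raspberry Pi 4, depending on the method.

   - On Jetson Orin, tokens/sec falls as parameter count grows (e.g., TinyChat drops from 39.1 on Llama-2 (7B) to 3.5 on Llama-2 (70B)). The Raspberry Pi 4 table covers only models of about 7B parameters, so it shows no comparable trend.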

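2. **Method Efficiency**:

   - **TinyChat** (red) achieves the highest tokens/sec (lowest latency) on Jetson Orin for every model that has a TinyChat value; none is listed for Mistral (7B).

   - **AutoGPTQ** (gray) has the lowest tokens/sec in every Jetson Orin row. **llama.cpp** (dark gray) trails exllama on the 7B, 13B, and 15.5B models but overtakes it on the 30B and 70B models.

   - Support varies by method: the table marks llama.cpp as "NS" for OPT (6.7B) on Raspberry Pi 4 and lists no **exllama** (black) value for Mistral (7B) on Jetson Orin.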

3. **Unsupported Methods**:

   - "NS" and "Not Supported" labels both indicate that a method cannot run a specific model on that device. A "-" in the tables marks a cell with no value listed.

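4. **Raspberry Pi 4 Limitations**:

   - Throughput is very low even for 7B-class models: Llama-2 (7B) and OPT (6.7B) run at 0.7 tokens/sec in nearly every supported configuration, and only TinyChat on Llama-2 (7B) reaches 3.0 tokens/sec. The Falcon (7B) row lists 3 to 22 tokens/sec, well above the other two rows.

   - Some methods are unsupported for certain models (e.g., the "NS" entry for OPT (6.7B)).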
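
The gap between the two devices can be quantified for Llama-2 (7B), the only model listed in both tables. The sketch below divides the Jetson Orin value by the Raspberry Pi 4 value for each method; the variable and function names are illustrative, and the numbers are copied from the tables above.

```python
METHODS = ["AutoGPTQ", "llama.cpp", "exllama", "TinyChat"]

# Llama-2 (7B) throughput in tokens/sec, in the same order as METHODS.
JETSON_LLAMA2_7B = [13.4, 15.9, 22.5, 39.1]
PI4_LLAMA2_7B = [0.7, 0.7, 0.7, 3.0]


def device_ratios(fast, slow):
    """Element-wise ratio of two equally long throughput lists."""
    if len(fast) != len(slow):
        raise ValueError("both lists must cover the same methods")
    return [f / s for f, s in zip(fast, slow)]


ratios = dict(zip(METHODS, device_ratios(JETSON_LLAMA2_7B, PI4_LLAMA2_7B)))
for method, ratio in ratios.items():
    print(f"{method}: {ratio:.1f}x")
# AutoGPTQ: 19.1x
# llama.cpp: 22.7x
# exllama: 32.1x
# TinyChat: 13.0x
```

The ratio is smallest for TinyChat because it is the only method that exceeds 0.7 tokens/sec on the Raspberry Pi 4 for this model.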

---

## Notes

- **Device Icons**: Jetson Orin (GPU icon), Raspberry Pi 4 (Pi 4 image).

- **Parameter Sizes**: Model sizes in parentheses (e.g., 7B = 7 billion parameters).

- **Color Consistency**: Legend colors match bar colors across all models.