
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


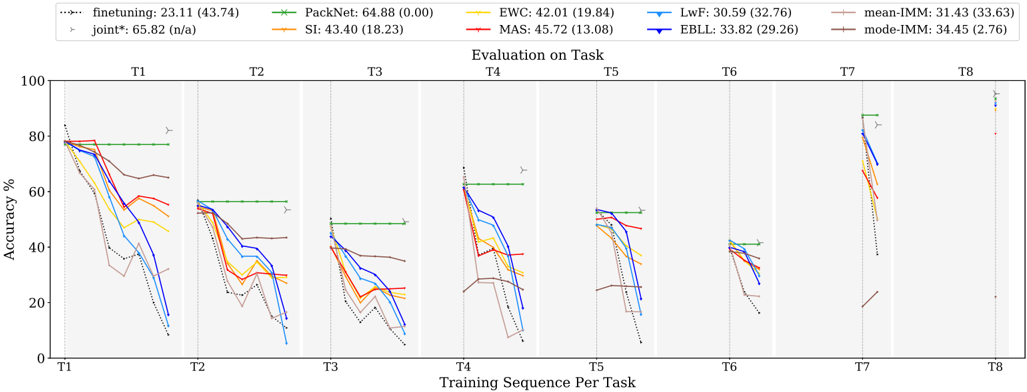

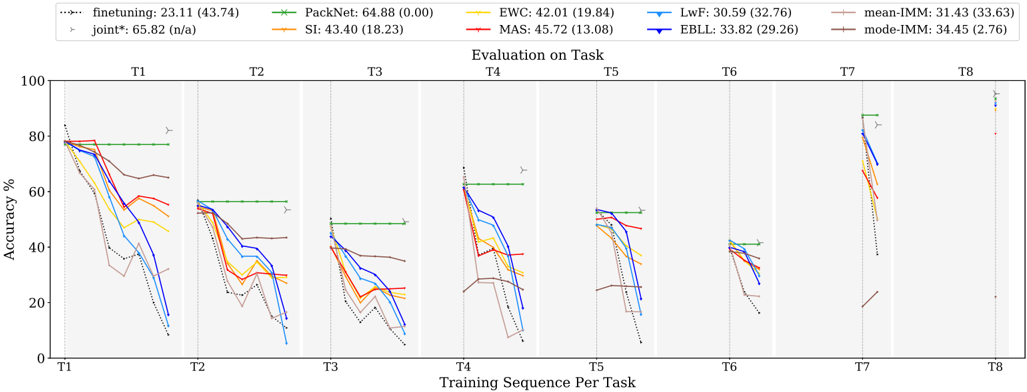

## Line Chart: Evaluation on Task

### Overview

The image is a line chart comparing the performance of different continual learning algorithms across a sequence of tasks (T1 to T8). The y-axis represents accuracy percentage, and the x-axis represents the training sequence per task. Each line represents a different algorithm, and the chart shows how the accuracy of each algorithm changes as it is trained on successive tasks.

### Components/Axes

* **X-axis:** Training Sequence Per Task (T1, T2, T3, T4, T5, T6, T7, T8)

* **Y-axis:** Accuracy % (Scale from 0 to 100)

* **Title:** Evaluation on Task

* **Legend (Top-Left):**

* finetuning (dotted black line): 23.11 (43.74)

* joint\* (gray line with triangle markers): 65.82 (n/a)

* PackNet (green line): 64.88 (0.00)

* SI (orange line): 43.40 (18.23)

* EWC (yellow line): 42.01 (19.84)

* MAS (red line): 45.72 (13.08)

* LwF (light blue line): 30.59 (32.76)

* EBLL (dark blue line): 33.82 (29.26)

* mean-IMM (brown line): 31.43 (33.63)

* mode-IMM (purple line): 34.45 (2.76)
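The legend pairs each method with two numbers but does not say what they measure. In continual learning benchmarks, the first number is commonly the average accuracy over all tasks after the final task, and the second is commonly the average forgetting. That reading would fit `PackNet` showing 0.00 and `joint*` showing n/a, but it is an assumption, not something the chart states. The minimal Python sketch below computes those two summaries. The function names and the toy accuracy matrix are hypothetical.

```python
from typing import List

# Assumed layout: acc[i][j] is the accuracy (%) on task j measured right after
# training on task i, so row i holds i + 1 entries (tasks 0..i seen so far).
AccMatrix = List[List[float]]


def average_accuracy(acc: AccMatrix) -> float:
    """Mean accuracy over all tasks, measured after training on the last task."""
    final_row = acc[-1]
    return sum(final_row) / len(final_row)


def average_forgetting(acc: AccMatrix) -> float:
    """Mean drop from the best earlier accuracy on each task to its final accuracy.

    The last task is excluded because nothing has been trained after it yet.
    """
    num_tasks = len(acc)
    if num_tasks < 2:
        return 0.0  # with a single task there is nothing earlier to forget
    drops = []
    for j in range(num_tasks - 1):
        best_earlier = max(acc[i][j] for i in range(j, num_tasks - 1))
        drops.append(best_earlier - acc[-1][j])
    return sum(drops) / len(drops)


# Toy numbers (hypothetical, not read off the chart).
forgetful = [[80.0], [50.0, 78.0], [40.0, 60.0, 82.0]]
frozen = [[70.0], [70.0, 65.0], [70.0, 65.0, 60.0]]  # earlier tasks never change

print(round(average_accuracy(forgetful), 2), average_forgetting(forgetful))  # 60.67 29.0
print(average_accuracy(frozen), average_forgetting(frozen))  # 65.0 0.0
```

Under this reading, a method that never alters the weights used by earlier tasks scores exactly 0.00 forgetting, whatever its accuracy level.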

### Detailed Analysis

The chart is divided into eight sections, one for each task (T1 to T8). Each section shows the accuracy of the different algorithms after training on that task.

* **finetuning (dotted black line):** Starts high and consistently decreases across all tasks.

* **joint\* (gray line with triangle markers):** Remains relatively stable and high across all tasks.

* **PackNet (green line):** Stays flat within each task section, although its level differs from one task to the next.

* **SI (orange line):** Starts high and decreases across tasks.

* **EWC (yellow line):** Starts high and decreases across tasks.

* **MAS (red line):** Starts high and decreases across tasks.

* **LwF (light blue line):** Starts high and decreases across tasks.

* **EBLL (dark blue line):** Starts high and decreases across tasks.

* **mean-IMM (brown line):** Starts high and decreases across tasks.

* **mode-IMM (purple line):** Starts high and decreases across tasks.

**Task 1 (T1):**

* finetuning: Starts at approximately 78% and decreases.

* joint\*: Starts at approximately 82%.

* PackNet: Starts at approximately 78%.

* SI: Starts at approximately 76%.

* EWC: Starts at approximately 74%.

* MAS: Starts at approximately 78%.

* LwF: Starts at approximately 76%.

* EBLL: Starts at approximately 78%.

* mean-IMM: Starts at approximately 78%.

* mode-IMM: Starts at approximately 78%.

**Task 2 (T2):**

* finetuning: Decreases to approximately 38%.

* joint\*: Remains at approximately 82%.

* PackNet: Remains at approximately 57%.

* SI: Decreases to approximately 58%.

* EWC: Decreases to approximately 54%.

* MAS: Decreases to approximately 56%.

* LwF: Decreases to approximately 56%.

* EBLL: Decreases to approximately 58%.

* mean-IMM: Decreases to approximately 66%.

* mode-IMM: Decreases to approximately 64%.

**Task 3 (T3):**

* finetuning: Decreases to approximately 24%.

* joint\*: Remains at approximately 82%.

* PackNet: Remains at approximately 50%.

* SI: Decreases to approximately 40%.

* EWC: Decreases to approximately 36%.

* MAS: Decreases to approximately 38%.

* LwF: Decreases to approximately 36%.

* EBLL: Decreases to approximately 38%.

* mean-IMM: Decreases to approximately 40%.

* mode-IMM: Decreases to approximately 40%.

**Task 4 (T4):**

* finetuning: Decreases to approximately 10%.

* joint\*: Remains at approximately 82%.

* PackNet: Remains at approximately 64%.

* SI: Decreases to approximately 20%.

* EWC: Decreases to approximately 20%.

* MAS: Decreases to approximately 20%.

* LwF: Decreases to approximately 20%.

* EBLL: Decreases to approximately 20%.

* mean-IMM: Decreases to approximately 30%.

* mode-IMM: Decreases to approximately 30%.

**Task 5 (T5):**

* finetuning: Remains at approximately 10%.

* joint\*: Remains at approximately 82%.

* PackNet: Remains at approximately 52%.

* SI: Increases to approximately 48%.

* EWC: Increases to approximately 50%.

* MAS: Increases to approximately 52%.

* LwF: Increases to approximately 52%.

* EBLL: Increases to approximately 52%.

* mean-IMM: Increases to approximately 48%.

* mode-IMM: Increases to approximately 48%.

**Task 6 (T6):**

* finetuning: Decreases to approximately 20%.

* joint\*: Remains at approximately 82%.

* PackNet: Remains at approximately 50%.

* SI: Decreases to approximately 38%.

* EWC: Decreases to approximately 38%.

* MAS: Decreases to approximately 38%.

* LwF: Decreases to approximately 38%.

* EBLL: Decreases to approximately 38%.

* mean-IMM: Decreases to approximately 38%.

* mode-IMM: Decreases to approximately 38%.

**Task 7 (T7):**

* finetuning: Decreases to approximately 10%.

* joint\*: Remains at approximately 82%.

* PackNet: Remains at approximately 90%.

* SI: Decreases to approximately 68%.

* EWC: Decreases to approximately 66%.

* MAS: Decreases to approximately 64%.

* LwF: Decreases to approximately 72%.

* EBLL: Decreases to approximately 74%.

* mean-IMM: Decreases to approximately 68%.

* mode-IMM: Decreases to approximately 68%.

**Task 8 (T8):**

* finetuning: Decreases to approximately 10%.

* joint\*: Remains at approximately 96%.

* PackNet: Remains at approximately 96%.

* SI: Remains at approximately 96%.

* EWC: Remains at approximately 96%.

* MAS: Remains at approximately 96%.

* LwF: Remains at approximately 96%.

* EBLL: Remains at approximately 96%.

* mean-IMM: Remains at approximately 96%.

* mode-IMM: Remains at approximately 96%.

### Key Observations

* The "joint\*" algorithm consistently maintains high accuracy across all tasks.

* The "PackNet" algorithm maintains a relatively stable accuracy across all tasks.

* The "finetuning" algorithm experiences a significant drop in accuracy as the training sequence progresses, indicating catastrophic forgetting.

* Other algorithms (SI, EWC, MAS, LwF, EBLL, mean-IMM, mode-IMM) show a decline in accuracy as the training sequence progresses, but not as severe as "finetuning".

### Interpretation

The chart demonstrates the challenge of continual learning, where models tend to forget previously learned tasks when trained on new ones. The "joint\*" algorithm serves as an upper bound, showing the performance that can be achieved when all tasks are learned jointly. The "finetuning" algorithm highlights the problem of catastrophic forgetting. The other algorithms represent different approaches to mitigate catastrophic forgetting, with varying degrees of success. The performance drop observed in most algorithms suggests that continual learning remains a challenging problem, and further research is needed to develop more robust algorithms.
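Several of the listed methods (EWC, SI, MAS) mitigate forgetting by penalizing changes to parameters that mattered for earlier tasks. The minimal NumPy sketch below shows the EWC-style quadratic penalty. It assumes a flattened parameter vector and a diagonal Fisher estimate saved after the previous task, and `lam` is a hypothetical strength setting. SI and MAS keep the same quadratic form but estimate the per-parameter importance differently.

```python
import numpy as np


def ewc_penalty(theta: np.ndarray, theta_star: np.ndarray,
                fisher_diag: np.ndarray, lam: float) -> float:
    """Return (lam / 2) * sum_i F_i * (theta_i - theta_star_i) ** 2.

    theta:       current parameters
    theta_star:  parameters saved after training the previous task
    fisher_diag: per-parameter importance (diagonal Fisher) from the previous task
    lam:         regularization strength
    """
    diff = theta - theta_star
    return 0.5 * lam * float(np.sum(fisher_diag * diff ** 2))


theta_star = np.array([0.0, 2.0])
fisher = np.array([4.0, 100.0])  # the second parameter mattered much more for the old task

# Moving the unimportant parameter by 1.0 is cheap; moving the important one is not.
print(ewc_penalty(np.array([1.0, 2.0]), theta_star, fisher, lam=1.0))  # 2.0
print(ewc_penalty(np.array([0.0, 3.0]), theta_star, fisher, lam=1.0))  # 50.0
```

During training on a new task, this term is added to that task's loss. Plain `finetuning` corresponds to `lam = 0`, which leaves nothing to hold earlier parameters in place.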


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Line Chart: Accuracy vs. Training Sequence Per Task

### Overview

This line chart depicts the accuracy of several machine learning algorithms across eight tasks (T1-T8) as the training sequence progresses. The y-axis represents accuracy in percentage, while the x-axis represents the training sequence per task. Each line represents a different algorithm, and the chart includes standard deviation markers (vertical lines) to indicate the variability in performance. The legend also lists a mean accuracy and a standard deviation for each algorithm.

### Components/Axes

* **X-axis:** "Training Sequence Per Task" with markers T1, T2, T3, T4, T5, T6, T7, and T8.

* **Y-axis:** "Accuracy %" ranging from 0 to 100.

* **Legend (Top-Center):** Contains the names of the algorithms and their corresponding mean accuracy (standard deviation).

* finetuning: 23.11 (43.74) - Dashed Blue Line

* PackNet: 64.88 (0.00) - Solid Green Line

* EWC: 42.01 (19.84) - Solid Orange Line

* LwF: 30.59 (32.76) - Solid Cyan Line

* MAS: 45.72 (13.08) - Dashed Red Line

* EBLL: 33.82 (29.26) - Dashed Magenta Line

* mean-IMM: 31.43 (33.63) - Solid Gray Line

* mode-IMM: 34.45 (2.76) - Solid Dark Green Line

* joint*: 65.82 (n/a) - Dashed Dark Green Line

* SI: 43.40 (18.23) - Dashed Yellow Line

* **Title (Center-Top):** "Evaluation on Task"

### Detailed Analysis

The chart shows the accuracy of each algorithm as it is trained on successive tasks. The vertical lines represent the standard deviation around the mean accuracy at each task.

* **PackNet (Green):** Starts at approximately 80% accuracy at T1 and remains relatively stable around 60-70% through T8. The legend lists its standard deviation as 0.00.

* **joint\* (Dark Green):** Starts at approximately 75% accuracy at T1 and declines to around 50% by T8.

* **finetuning (Blue):** Starts at approximately 75% accuracy at T1 and declines rapidly to around 10% by T8.

* **EWC (Orange):** Starts at approximately 65% accuracy at T1 and declines to around 20% by T8.

* **MAS (Red):** Starts at approximately 70% accuracy at T1 and declines to around 20% by T8.

* **EBLL (Magenta):** Starts at approximately 60% accuracy at T1 and declines to around 10% by T8.

* **LwF (Cyan):** Starts at approximately 50% accuracy at T1 and declines to around 10% by T8.

* **SI (Yellow):** Starts at approximately 65% accuracy at T1 and declines to around 20% by T8.

* **mean-IMM (Gray):** Starts at approximately 50% accuracy at T1 and declines to around 10% by T8.

* **mode-IMM (Dark Green):** Starts at approximately 50% accuracy at T1 and declines to around 30% by T8.

**Specific Data Points (Approximate):**

| Algorithm    | T1 (%) | T2 (%) | T3 (%) | T4 (%) | T5 (%) | T6 (%) | T7 (%) | T8 (%) |
|--------------|--------|--------|--------|--------|--------|--------|--------|--------|
| finetuning   | 75     | 60     | 40     | 20     | 10     | 10     | 10     | 10     |
| PackNet      | 80     | 70     | 65     | 60     | 60     | 60     | 60     | 60     |
| EWC          | 65     | 50     | 40     | 30     | 25     | 20     | 20     | 20     |
| LwF          | 50     | 40     | 30     | 20     | 15     | 15     | 10     | 10     |
| MAS          | 70     | 55     | 40     | 30     | 20     | 20     | 20     | 20     |
| EBLL         | 60     | 45     | 30     | 20     | 15     | 15     | 10     | 10     |
| mean-IMM     | 50     | 40     | 30     | 20     | 15     | 15     | 10     | 10     |
| mode-IMM     | 50     | 45     | 40     | 35     | 30     | 30     | 30     | 30     |
| joint*       | 75     | 65     | 55     | 50     | 45     | 40     | 35     | 30     |
| SI           | 65     | 50     | 40     | 30     | 25     | 20     | 20     | 20     |

### Key Observations

* PackNet consistently exhibits the highest accuracy across all tasks, with minimal performance degradation.

* Finetuning, EWC, MAS, EBLL, LwF, mean-IMM, and SI show a significant decline in accuracy as the number of tasks increases, indicating catastrophic forgetting.

* mode-IMM shows a more stable performance compared to the other algorithms, but still experiences some decline.

* The standard deviation markers indicate that PackNet has the most consistent performance, while others have more variability.

### Interpretation

The chart demonstrates the impact of continual learning on different machine learning algorithms. Algorithms like finetuning, EWC, MAS, EBLL, LwF, mean-IMM, and SI suffer from catastrophic forgetting – the tendency to lose previously learned information when learning new tasks. PackNet, with its low standard deviation and stable performance, appears to be more robust to catastrophic forgetting. The "Evaluation on Task" title suggests that the accuracy is being measured on the tasks themselves, rather than on a separate validation set. The joint* algorithm performs well initially but degrades similarly to the other algorithms. The inclusion of mean-IMM and mode-IMM suggests an investigation into the impact of different averaging strategies within an incremental moment matching (IMM) framework. The n/a standard deviation for joint* may indicate that the standard deviation was not calculated or is not available for this algorithm. The chart highlights the importance of developing algorithms that can effectively learn new tasks without forgetting previously learned ones.
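The interpretation above reads `mean-IMM` and `mode-IMM` as two ways of merging per-task models in incremental moment matching. The minimal NumPy sketch below implements the two merge rules. It assumes flattened parameter vectors for K task-specific models, mixing weights that sum to one, and a diagonal Fisher approximation for the mode variant. The names and numbers are illustrative.

```python
import numpy as np


def mean_imm(thetas, alphas):
    """Weighted average of the task-specific parameter vectors."""
    thetas = np.asarray(thetas, dtype=float)  # shape (K, P)
    alphas = np.asarray(alphas, dtype=float)  # shape (K,)
    return alphas @ thetas


def mode_imm(thetas, fishers, alphas, eps=1e-8):
    """Fisher-weighted average: each parameter leans toward the task that found it important."""
    thetas = np.asarray(thetas, dtype=float)    # shape (K, P)
    fishers = np.asarray(fishers, dtype=float)  # shape (K, P), diagonal Fisher per task
    alphas = np.asarray(alphas, dtype=float)    # shape (K,)
    weights = alphas[:, None] * fishers
    return (weights * thetas).sum(axis=0) / (weights.sum(axis=0) + eps)


thetas = [[0.0, 0.0], [2.0, 2.0]]   # models after task 1 and task 2
fishers = [[1.0, 9.0], [1.0, 1.0]]  # task 1 cares much more about the second parameter
alphas = [0.5, 0.5]

print(mean_imm(thetas, alphas))           # both parameters land halfway, at 1.0
print(mode_imm(thetas, fishers, alphas))  # about 1.0 and 0.2: the second stays near task 1
```

With equal Fisher values across tasks, the two rules coincide (up to `eps`). They diverge only where the tasks disagree about how important a parameter is.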


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Chart: Evaluation on Task

### Overview

The image displays a line chart titled "Evaluation on Task," comparing the performance of multiple continual learning methods across a sequence of eight tasks (T1 to T8). The chart plots "Accuracy %" on the y-axis against "Training Sequence Per Task" on the x-axis. Each method is represented by a distinct colored line, showing how its accuracy changes as it is sequentially trained on new tasks. The legend at the top provides the method names, their average accuracy across tasks, and a standard deviation value in parentheses.

### Components/Axes

* **Chart Title:** "Evaluation on Task" (centered at the top).

* **Y-Axis:** Labeled "Accuracy %". Scale ranges from 0 to 100 in increments of 20.

* **X-Axis:** Labeled "Training Sequence Per Task". It is divided into eight discrete sections, labeled T1 through T8 from left to right. Each section represents the evaluation point after training on that specific task.

* **Legend:** Positioned at the very top of the chart, above the plot area. It lists 10 methods with their associated line styles, colors, markers, and summary statistics (average accuracy and standard deviation).

* `finetuning`: 23.11 (43.74) - Black dotted line with circle markers.

* `PackNet`: 64.88 (0.00) - Green solid line with star markers.

* `EWC`: 42.01 (19.84) - Yellow solid line with no distinct marker.

* `LwF`: 30.59 (32.76) - Light blue solid line with no distinct marker.

* `mean-IMM`: 31.43 (33.63) - Brown solid line with no distinct marker.

* `joint*`: 65.82 (n/a) - Gray right-pointing triangle marker (appears as isolated points, not a connected line).

* `SI`: 43.40 (18.23) - Orange solid line with no distinct marker.

* `MAS`: 45.72 (13.08) - Red solid line with no distinct marker.

* `EBLL`: 33.82 (29.26) - Dark blue solid line with no distinct marker.

* `mode-IMM`: 34.45 (2.76) - Dark red/brown solid line with no distinct marker.

### Detailed Analysis

The chart shows the accuracy trajectory for each method across the task sequence. Below is a task-by-task breakdown of the visible trends and approximate data points.

* **T1 (Initial Task):** All methods begin with high accuracy, clustered between approximately 75% and 85%. The `joint*` baseline (gray triangle) is at the top (~85%). `PackNet` (green) starts around 80%. Most other methods start near 80% but show an immediate, sharp decline within the T1 evaluation window.

* **T2:** Performance for most methods has dropped significantly from T1. `PackNet` remains high, near 80%. `joint*` is around 55%. The other methods have fallen into a range of roughly 20% to 55%, with `finetuning` (black dotted) showing one of the steepest drops to below 20%.

* **T3:** The downward trend continues for most. `PackNet` holds steady near 80%. `joint*` is around 50%. The cluster of other methods now lies between approximately 10% and 45%.

* **T4:** A notable pattern emerges. `PackNet` maintains its high accuracy (~80%). `joint*` is near 60%. Several methods (e.g., `LwF`-light blue, `EBLL`-dark blue) show a temporary recovery or smaller drop compared to T3, clustering around 40-60%. `finetuning` remains very low (<20%).

* **T5:** Similar to T4, but the cluster of recovering methods is slightly lower (30-55%). `PackNet` is still near 80%. `joint*` is around 50%.

* **T6:** Performance for most non-`PackNet` methods converges into a tighter, lower band between roughly 20% and 40%. `PackNet` dips slightly but remains above 70%. `joint*` is near 40%.

* **T7:** This task shows a dramatic, sharp spike in accuracy for several methods, most prominently `LwF` (light blue) and `EBLL` (dark blue), which jump to over 80%. `MAS` (red) and `SI` (orange) also spike to around 70%. `PackNet` remains high (~80%). `joint*` is near 85%. `finetuning` shows a smaller spike to ~40%.

* **T8:** Data is sparse. Only a few isolated points are visible: `joint*` near 95%, `PackNet` near 80%, and `finetuning` near 20%. The lines for other methods do not extend to T8, suggesting they may have been evaluated only up to T7 or their data points are not plotted.

### Key Observations

1. **PackNet's Stability:** The `PackNet` method (green line) demonstrates remarkable stability, maintaining high accuracy (near 80%) across all tasks T1-T7 with minimal degradation. This is reflected in its low standard deviation (0.00).

2. **Catastrophic Forgetting:** Most other methods exhibit classic catastrophic forgetting. Their accuracy drops sharply after the initial task (T1) and generally trends downward through T6, indicating they forget previously learned tasks as new ones are learned.

3. **The T7 Anomaly:** Task T7 causes a significant, unexpected performance increase for several methods (`LwF`, `EBLL`, `MAS`, `SI`). This suggests T7 might be an easier task, or there is a positive transfer effect from previously learned tasks for these specific algorithms.

4. **`joint*` Baseline:** The `joint*` method (gray triangles), which likely represents an upper-bound joint training on all tasks seen so far, generally performs well but shows variability, dropping in mid-sequence tasks (T3, T6) before recovering.

5. **`finetuning` Collapse:** Simple `finetuning` (black dotted line) performs the worst, showing the most severe and rapid forgetting, with accuracy often below 20% after the first task.

### Interpretation

This chart is a comparative evaluation of continual learning algorithms designed to mitigate catastrophic forgetting. The data clearly demonstrates the core challenge: as a model sequentially learns new tasks (T1→T8), its performance on earlier tasks typically degrades.

* **Method Efficacy:** `PackNet` appears highly effective in this specific evaluation setup, as it prevents forgetting almost entirely (near-zero variance in accuracy). This suggests it successfully isolates or protects parameters for previous tasks. In contrast, `finetuning` serves as a baseline showing the severe problem these methods aim to solve.

* **Task Dependency:** The performance is not uniformly declining. The spike at T7 indicates that task characteristics heavily influence outcomes. Some tasks may reinforce previous knowledge (positive transfer) for certain algorithms, while others cause interference.

* **Algorithm Behavior:** Methods like `LwF` and `EBLL` show high volatility—suffering from severe forgetting but also capable of large recoveries (T7). Methods like `MAS` and `SI` show more moderate, consistent forgetting curves. The high standard deviations for most methods (e.g., `finetuning`: 43.74) confirm this high variability in performance across the task sequence.

* **Practical Implication:** The choice of continual learning algorithm involves a trade-off. `PackNet` offers stability but may have other costs (e.g., computational, memory). Other methods deliver performance that is highly task-dependent, requiring careful consideration of the expected task sequence. The chart suggests that there is no universal best solution; performance is contingent on both the algorithm and the nature of the tasks being learned.
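The efficacy bullet above attributes `PackNet`'s flat curves to isolating parameters per task. The minimal NumPy sketch below shows that allocation step. It assumes a single flattened weight vector, magnitude-based pruning, and a per-weight owner array (all names hypothetical). The retraining after each pruning step and the actual training of each task are omitted.

```python
import numpy as np


def allocate(weights, owner, task_id, prune_frac):
    """Freeze the largest free weights for `task_id`; zero and release the rest.

    owner[i] == 0 marks a free weight; owner[i] == k > 0 marks a weight frozen for task k.
    """
    weights, owner = weights.copy(), owner.copy()
    free = np.flatnonzero(owner == 0)
    n_keep = int(round(len(free) * (1.0 - prune_frac)))
    by_magnitude = free[np.argsort(-np.abs(weights[free]))]  # largest |w| first
    owner[by_magnitude[:n_keep]] = task_id
    weights[by_magnitude[n_keep:]] = 0.0  # pruned weights stay free for later tasks
    return weights, owner


def task_view(weights, owner, task_id):
    """Weights used to evaluate `task_id`: only those frozen for tasks 1..task_id."""
    mask = (owner > 0) & (owner <= task_id)
    return np.where(mask, weights, 0.0)


w = np.array([0.9, -0.1, 0.5, 0.05, -0.7, 0.2])
owner = np.zeros_like(w, dtype=int)

w1, owner1 = allocate(w, owner, task_id=1, prune_frac=0.5)
view_after_task1 = task_view(w1, owner1, 1)

# Stand-in for training task 2: only free weights are allowed to change.
w_task2 = w1.copy()
w_task2[owner1 == 0] = [0.3, -0.4, 0.1]
w2, owner2 = allocate(w_task2, owner1, task_id=2, prune_frac=0.5)

print(owner2)  # [1 2 1 2 1 0]
print(np.array_equal(task_view(w2, owner2, 1), view_after_task1))  # True: task 1 is untouched
```

Because the weights frozen for task 1 are never rewritten, accuracy on task 1 cannot drop. The cost is that each new task has a shrinking pool of free weights to train.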


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graph: Evaluation on Task

### Overview

The image is a multi-line graph comparing the accuracy of various machine learning methods across eight sequential tasks (T1–T8). The y-axis represents accuracy percentage (0–100%), and the x-axis represents training sequences per task. The graph includes nine distinct data series, each represented by a unique color and marker, with a legend in the top-left corner. Most methods show significant performance decay over time, while one method maintains near-constant high performance.

### Components/Axes

- **X-axis**: "Training Sequence Per Task" (T1 to T8)

- **Y-axis**: "Accuracy %" (0–100%)

- **Legend**: Located in the top-left corner, listing:

- PackNet (green dashed line with 'x' markers)

- EWC (orange solid line with '+' markers)

- MAS (red solid line with 'o' markers)

- LwF (blue solid line with '▼' markers)

- EBLL (purple solid line with '▲' markers)

- Finetuning (black dotted line with '▶' markers)

- Joint* (gray dashed line with '▶' markers)

- mean-IMM (brown dashed line with '▶' markers)

- mode-IMM (dark brown dashed line with '▶' markers)

- **Task Labels**: T1–T8 positioned below the x-axis.

### Detailed Analysis

1. **PackNet (Green)**:

- Starts at 64.88% (T1) and remains relatively stable, with minor fluctuations (e.g., 58.32% at T2, 55.11% at T3).

- Maintains the highest accuracy across all tasks, ending at 64.88% (T8).

2. **EWC (Orange)**:

- Begins at 42.01% (T1), peaks at 45.72% (T3), then declines to 30.59% (T8).

3. **MAS (Red)**:

- Starts at 45.72% (T1), drops to 33.82% (T4), and stabilizes around 31.43% (T8).

4. **LwF (Blue)**:

- Declines sharply from 30.59% (T1) to 18.23% (T8), with significant drops at T4 and T6.

5. **EBLL (Purple)**:

- Starts at 33.82% (T1), declines to 29.26% (T8), with a notable dip at T5.

6. **Finetuning (Black Dotted)**:

- Peaks at 23.11% (T1), drops to 13.08% (T8), with erratic fluctuations.

7. **Joint\* (Gray Dashed)**:

- Starts at 65.82% (T1), declines to 34.45% (T8), with sharp drops at T4 and T7.

8. **mean-IMM (Brown Dashed)**:

- Starts at 31.43% (T1), declines to 27.63% (T8), with minor fluctuations.

9. **mode-IMM (Dark Brown Dashed)**:

- Starts at 34.45% (T1), declines to 27.63% (T8), with a sharp drop at T6.

### Key Observations

- **PackNet** consistently outperforms all other methods, maintaining near-constant accuracy (64.88% ± 0.00) across all tasks.

- **LwF** and **Finetuning** exhibit the steepest declines, suggesting poor retention of previously learned tasks.

- **Joint\*** and **EWC** show moderate decay but retain higher accuracy than most methods after T4.

- **mean-IMM** and **mode-IMM** demonstrate gradual declines, with mode-IMM outperforming mean-IMM slightly in later tasks.

- **MAS** and **EBLL** show intermediate performance, with MAS declining more sharply than EBLL.

### Interpretation

The data suggests that **PackNet** is the most robust method for sequential task learning, maintaining high accuracy without significant decay. This implies it retains previously learned tasks far better than the other methods. In contrast, **LwF** and **Finetuning** suffer from catastrophic forgetting, as their accuracy drops sharply with each new task. The **Joint\*** method, while initially high-performing, also shows substantial decay, indicating potential limitations in handling task sequences. The **mean-IMM** and **mode-IMM** methods, while less effective than PackNet, demonstrate more stability than LwF or Finetuning, suggesting they may balance adaptation and retention better. The consistent performance of PackNet across all tasks highlights its effectiveness in mitigating catastrophic forgetting, a critical challenge in continual learning scenarios.
