TECHNICAL ASSET FINGERPRINT

e70dbff6dd369970884bed97

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

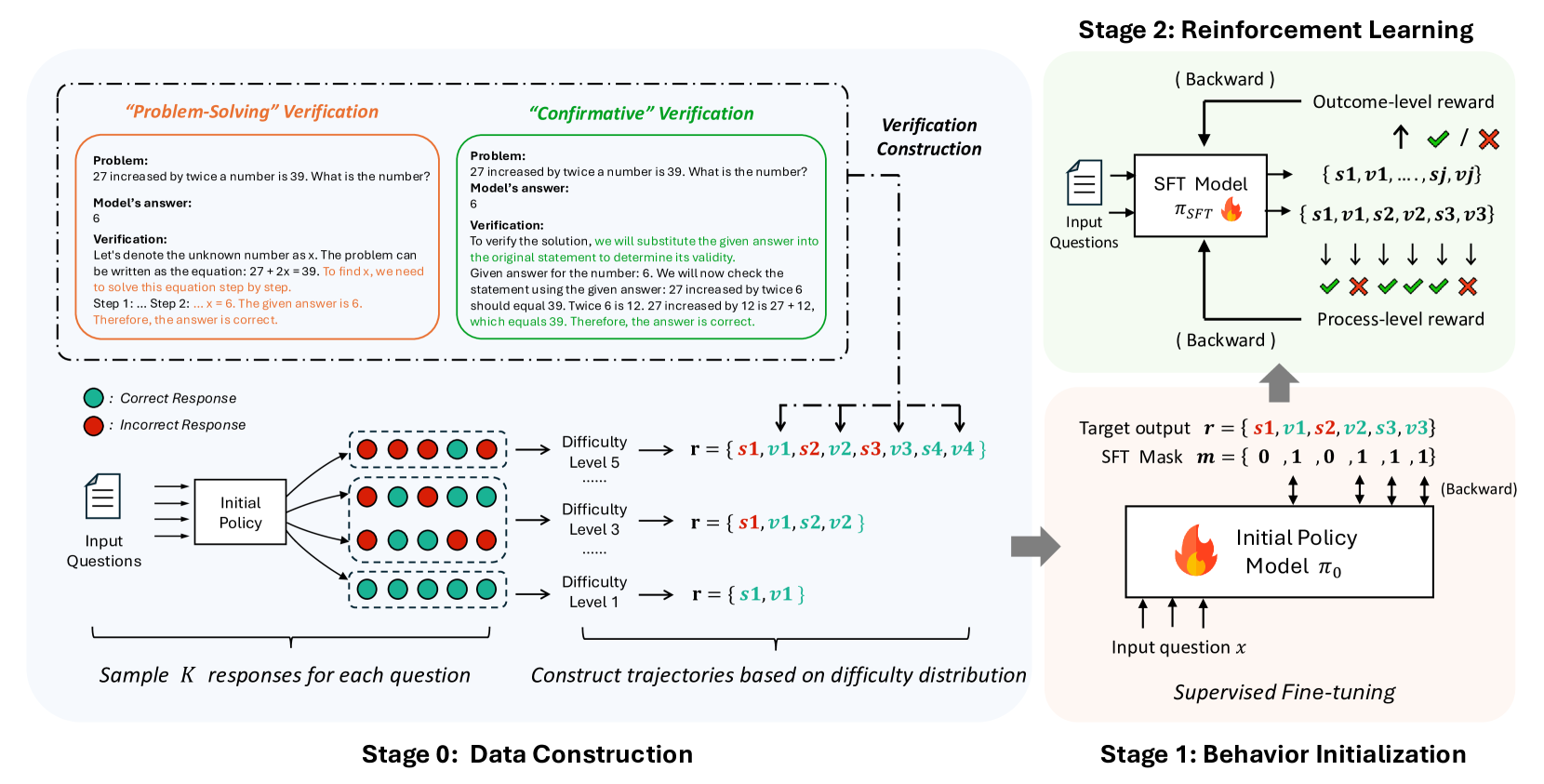

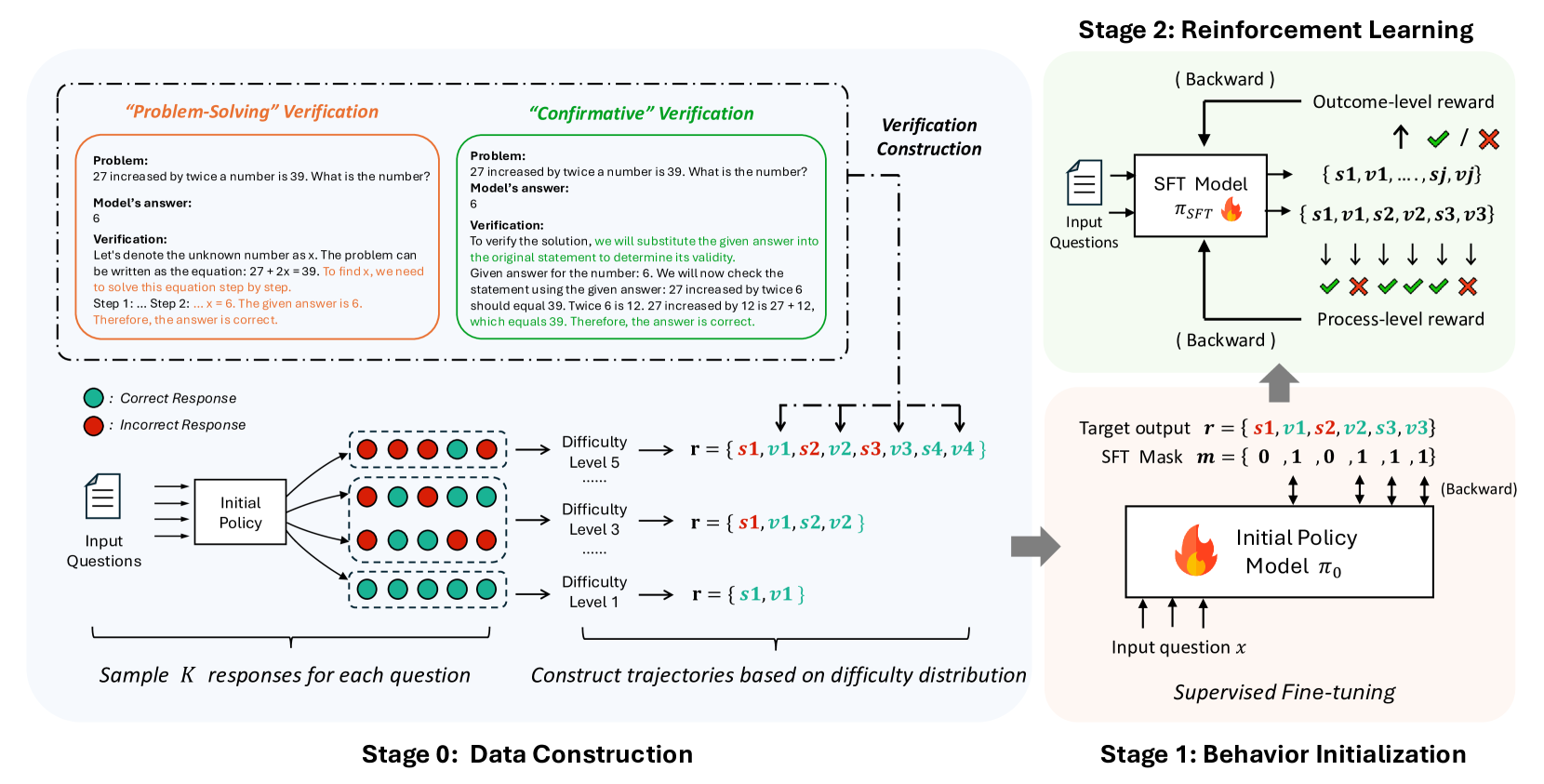

## Data Construction and Reinforcement Learning Diagram

### Overview

The image illustrates a multi-stage process involving data construction, behavior initialization, and reinforcement learning. It details how a model learns to solve problems through verification and trajectory construction based on difficulty levels.

### Components/Axes

* **Stages:** The diagram is divided into three stages:

* Stage 0: Data Construction

* Stage 1: Behavior Initialization

* Stage 2: Reinforcement Learning

* **Verification Methods:** Two verification methods are presented:

* "Problem-Solving" Verification (left side, outlined in orange)

* "Confirmative" Verification (right side, outlined in green)

* **Legend:** Located in the bottom-left corner:

* Green circle: Correct Response

* Red circle: Incorrect Response

* **Difficulty Levels:** Three difficulty levels are shown:

* Difficulty Level 1

* Difficulty Level 3

* Difficulty Level 5

* **Models:**

* Initial Policy Model π₀

* SFT Model π_SFT

### Detailed Analysis

**Stage 0: Data Construction**

* **Input Questions:** Input questions are fed into an "Initial Policy" block.

* **Sample Responses:** The "Initial Policy" block outputs a set of responses, represented by green (correct) and red (incorrect) circles.

* The first row of responses contains 3 red and 3 green circles.

* The second row of responses contains 4 green and 2 red circles.

* The third row of responses contains 5 green and 1 red circles.

* **Sample K responses for each question:** Text below the responses.

* **Difficulty Levels:** Trajectories are constructed based on difficulty distribution.

* Difficulty Level 1: r = {s1, v1}

* Difficulty Level 3: r = {s1, v1, s2, v2}

* Difficulty Level 5: r = {s1, v1, s2, v2, s3, v3, s4, v4}

* The variables s1, s2, s3, s4 are black.

* The variables v1, v2, v3, v4 are teal.

* The incorrect responses are red.
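The trajectory shapes listed above can be sketched as a small lookup. This is only an illustration: the pair counts per level are read off the diagram as transcribed here, and the names `PAIRS_PER_LEVEL` and `trajectory_elements` are hypothetical helpers, not part of the source.

```python
# Number of (s, v) pairs per difficulty level, as transcribed from the diagram.
# Levels 2 and 4 are not shown there, so they are deliberately absent.
PAIRS_PER_LEVEL = {1: 1, 3: 2, 5: 4}


def trajectory_elements(level: int) -> list[str]:
    """Return the element names s1, v1, ..., sk, vk for a difficulty level."""
    pairs = PAIRS_PER_LEVEL[level]
    elements: list[str] = []
    for i in range(1, pairs + 1):
        elements += [f"s{i}", f"v{i}"]
    return elements


for level in sorted(PAIRS_PER_LEVEL):
    print(f"Difficulty Level {level}: r = {trajectory_elements(level)}")
```

The diagram does not state a rule that maps a level to a pair count, so the sketch uses a table rather than a formula.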

**Stage 1: Behavior Initialization**

* **Initial Policy Model:** An "Initial Policy Model π₀" block receives "Input question x".

* **Supervised Fine-tuning:** The model undergoes supervised fine-tuning.

* **Target Output:** The target output is defined as r = {s1, v1, s2, v2, s3, v3}.

* The variables s1, s2, s3 are black.

* The variables v1, v2, v3 are teal.

* The incorrect responses are red.

* **SFT Mask:** An SFT Mask m = {0, 1, 0, 1, 1, 1} is applied.
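To make the mask easier to read, the short sketch below pairs each element of the target output with its mask value. It assumes the mask aligns element by element with `r` and that a value of 1 marks an element that is trained on; the diagram as described does not state either point explicitly.

```python
# Target output and SFT mask as transcribed from Stage 1 of the diagram.
target = ["s1", "v1", "s2", "v2", "s3", "v3"]
mask = [0, 1, 0, 1, 1, 1]

# Assumption: the mask is aligned one-to-one with the target elements.
assert len(target) == len(mask)

trained = [element for element, m in zip(target, mask) if m == 1]
skipped = [element for element, m in zip(target, mask) if m == 0]

print("mask = 1:", trained)
print("mask = 0:", skipped)
```

Under that alignment, `s1` and `s2` are masked out, while `v1`, `v2`, `s3`, and `v3` are kept.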

**Stage 2: Reinforcement Learning**

* **SFT Model:** An "SFT Model π_SFT" block receives "Input Questions".

* **Outcome-level reward:** The model receives outcome-level rewards (checkmark for correct, X for incorrect).

* **Process-level reward:** The model receives process-level rewards (checkmarks and X's).

* The sequence of rewards is: checkmark, X, checkmark, checkmark, checkmark, X.

* **Outputs:** The SFT Model outputs two sets of data:

* {s1, v1, ..., sj, vj}

* {s1, v1, s2, v2, s3, v3}

**Verification Sections**

* **"Problem-Solving" Verification:**

* Problem: "27 increased by twice a number is 39. What is the number?"

* Model's answer: 6

* Verification: "Let's denote the unknown number as x. The problem can be written as the equation: 27 + 2x = 39. To find x, we need to solve this equation step by step. Step 1:... Step 2: ... x = 6. The given answer is 6. Therefore, the answer is correct."

* **"Confirmative" Verification:**

* Problem: "27 increased by twice a number is 39. What is the number?"

* Model's answer: 6

* Verification: "To verify the solution, we will substitute the given answer into the original statement to determine its validity. Given answer for the number: 6. We will now check the statement using the given answer: 27 increased by twice 6 should equal 39. Twice 6 is 12. 27 increased by 12 is 27 + 12, which equals 39. Therefore, the answer is correct."
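The difference between the two verification styles can be shown on the example problem itself. The sketch below is a minimal illustration, not the diagram's implementation; the function names are hypothetical, and the equation `27 + 2x = 39` is hard-coded from the example.

```python
from fractions import Fraction


def problem_solving_verify(given_answer: int) -> bool:
    """Solve 27 + 2x = 39 independently, then compare with the given answer."""
    solved_x = Fraction(39 - 27, 2)
    return solved_x == given_answer


def confirmative_verify(given_answer: int) -> bool:
    """Substitute the given answer into the statement and check that it holds."""
    return 27 + 2 * given_answer == 39


for candidate in (6, 7):
    print(candidate, problem_solving_verify(candidate), confirmative_verify(candidate))
```

Both checks accept 6 and reject 7. The first re-derives the answer, while the second only tests whether the proposed value satisfies the original statement.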

### Key Observations

* The diagram illustrates a learning process that starts with initial data construction, moves to behavior initialization, and culminates in reinforcement learning.

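* Higher difficulty levels correspond to longer trajectories: 2 elements at Level 1, 4 at Level 3, and 8 at Level 5.

* The two verification methods validate an answer differently: one re-solves the problem and compares the result with the given answer, and the other substitutes the given answer back into the problem statement.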

### Interpretation

The diagram presents a structured approach to training a model to solve problems. It highlights the importance of data construction, initial behavior setup, and reinforcement learning. The use of different verification methods and difficulty levels suggests a comprehensive training strategy. The SFT Mask in Stage 1 likely serves to focus the model's learning on specific aspects of the target output. The overall process aims to improve the model's accuracy and problem-solving abilities through iterative learning and feedback.

EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

## Diagram: Stages of Reinforcement Learning for Model Training

### Overview

This diagram illustrates a three-stage process for training a model, likely a language model or a similar AI system, using reinforcement learning. The stages are: Stage 0: Data Construction, Stage 1: Behavior Initialization, and Stage 2: Reinforcement Learning. The diagram details how data is generated, how an initial policy is established, and how reinforcement learning is applied to refine the model's performance.

### Components/Axes

**Stage 0: Data Construction**

* **Input:** "Input Questions" (represented by a document icon).

* **Process:** An "Initial Policy" box receives input questions.

* **Output:** The "Initial Policy" generates "Sample K responses for each question." These responses are visually represented as a grid of colored circles, indicating "Correct Response" (green) and "Incorrect Response" (red).

* **Legend:**

* Green circle: Correct Response

* Red circle: Incorrect Response

* **Further Processing:** These samples are used to "Construct trajectories based on difficulty distribution." This is shown by arrows leading to different difficulty levels:

* Difficulty Level 5

* Difficulty Level 3

* Difficulty Level 1

* **Trajectory Representation:** Each difficulty level is associated with a set of tokens represented as `r = {s1, v1, s2, v2, s3, v3, s4, v4}` for Level 5, `r = {s1, v1, s2, v2}` for Level 3, and `r = {s1, v1}` for Level 1. The colors of the tokens in the example trajectories are:

* Level 5: `s1` (red), `v1` (green), `s2` (red), `v2` (green), `s3` (red), `v3` (green), `s4` (red), `v4` (green)

* Level 3: `s1` (red), `v1` (green), `s2` (red), `v2` (green)

* Level 1: `s1` (red), `v1` (green)

**Verification Construction (Within Stage 0)**

* This section provides two methods of verification for a given problem and model answer.

* **"Problem-Solving" Verification:**

* **Problem:** "27 increased by twice a number is 39. What is the number?"

* **Model's answer:** 6

* **Verification:** A step-by-step explanation of how to solve the equation `27 + 2x = 39` to arrive at `x = 6`.

* **"Confirmative" Verification:**

* **Problem:** "27 increased by twice a number is 39. What is the number?"

* **Model's answer:** 6

* **Verification:** Substituting the answer `6` back into the original statement to confirm its validity. It shows that `27 increased by twice 6` (which is `27 + 12`) equals `39`.

**Stage 1: Behavior Initialization**

* **Process:** "Supervised Fine-tuning" is applied to an "Initial Policy Model π₀".

* **Input:** "Input question x".

* **Output:** The model is trained toward a "Target output r = {s1, v1, s2, v2, s3, v3}" with an "SFT Mask m = {0, 1, 0, 1, 1, 1}". Arrows indicate a "Backward" pass.

**Stage 2: Reinforcement Learning**

* **Process:** A "SFT Model π_SFT" (presumably the model after Stage 1) is trained using reinforcement learning.

* **Input:** "Input Questions".

* **Outputs:**

* **Outcome-level reward:** Indicated by an upward arrow with checkmarks (✓) and crosses (✗), representing success or failure. The rewarded output sequence is written as `{s1, v1, ..., sj, vj}`.

* **Process-level reward:** Indicated by downward arrows with checkmarks (✓) and crosses (✗), representing success or failure at intermediate steps.

* **Feedback Loop:** Both outcome-level and process-level rewards are fed back to the SFT Model via a "Backward" pass.

* **Relationship to Stage 1:** The SFT Model in Stage 2 appears to be an evolution of the Initial Policy Model from Stage 1. The "Target output r" and "SFT Mask m" from Stage 1 are shown feeding into the SFT Model in Stage 2, along with "Input question x".

### Detailed Analysis

**Stage 0: Data Construction**

The diagram shows that input questions are processed by an initial policy to generate multiple responses. These responses are categorized as correct (green) or incorrect (red). The distribution of these responses is then used to construct trajectories based on difficulty levels (Level 5, Level 3, Level 1). The token sets associated with each difficulty level suggest a hierarchical structure or varying complexity of generated sequences. For example, Level 5 has the longest sequence of tokens (`s1` through `v4`), while Level 1 has the shortest (`s1`, `v1`). The coloring of these tokens (red for `s` tokens, green for `v` tokens) might indicate specific types of actions or states within the trajectory.
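As a rough illustration of how sampled responses might inform difficulty, the sketch below tallies the share of correct responses per question. The diagram does not say how difficulty is computed, so treating the pass rate as the signal is an assumption, and the sample data and the name `fraction_correct` are hypothetical.

```python
def fraction_correct(samples: list[bool]) -> float:
    """Share of sampled responses judged correct for one question."""
    if not samples:
        raise ValueError("need at least one sampled response")
    return sum(samples) / len(samples)


# Hypothetical correctness labels for K = 6 sampled responses per question.
samples_by_question = {
    "q1": [True, True, True, False, False, False],
    "q2": [True, True, True, True, False, False],
    "q3": [True, True, True, True, True, False],
}

pass_rates = {q: fraction_correct(s) for q, s in samples_by_question.items()}
for question, rate in pass_rates.items():
    print(f"{question}: {rate:.2f} correct")
```

How such rates would be binned into Difficulty Levels 1, 3, and 5 is left open here because the description gives no thresholds.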

The verification sections provide a concrete example of a mathematical problem and demonstrate two distinct methods for verifying the model's answer:

1. **Problem-Solving:** This method involves solving the problem from scratch to derive the correct answer and comparing it to the model's answer.

2. **Confirmative:** This method involves plugging the model's answer back into the problem statement to check for consistency. Both methods confirm that the model's answer of `6` for the problem "27 increased by twice a number is 39" is correct.

**Stage 1: Behavior Initialization**

This stage focuses on supervised fine-tuning (SFT) of an initial policy model (π₀). The model takes an input question `x` and is trained to produce a target output `r = {s1, v1, s2, v2, s3, v3}`. A corresponding "SFT Mask" `m = {0, 1, 0, 1, 1, 1}` is applied alongside it. The mask likely indicates which elements of the target output contribute to the fine-tuning loss. The "Backward" arrow suggests that gradients are propagated during this supervised learning process.

**Stage 2: Reinforcement Learning**

This stage applies reinforcement learning to a "SFT Model π_SFT". The model receives input questions and generates outputs that are evaluated by both "Outcome-level reward" and "Process-level reward".

* **Outcome-level reward:** This reward is a global evaluation of the final output. The symbols `✓` and `✗` indicate successful or unsuccessful outcomes, respectively. The set `{s1, v1, ..., sj, vj}` likely represents the possible final states or actions.

* **Process-level reward:** This reward provides feedback on intermediate steps of the model's generation process. The visual representation of multiple downward arrows with `✓` and `✗` suggests that each step in the generated sequence is evaluated.

Both reward signals are fed back to the SFT Model via a "Backward" pass, allowing the model to learn and improve its policy to maximize cumulative rewards. The diagram shows that the "Target output r" and "SFT Mask m" from Stage 1 are inputs to the SFT Model in Stage 2, implying that the supervised fine-tuning in Stage 1 initializes the model for the subsequent reinforcement learning phase.

### Key Observations

* The diagram outlines a structured approach to training a model, moving from data generation and initial policy learning to sophisticated reinforcement learning.

* The use of both "Problem-Solving" and "Confirmative" verification methods in Stage 0 highlights a robust approach to ensuring the correctness of generated data or model outputs.

* The concept of "difficulty distribution" in Stage 0 suggests that the training data is curated to cover a range of problem complexities.

* Stage 1 (Supervised Fine-tuning) serves as a crucial initialization step, preparing the model with a baseline behavior before it undergoes reinforcement learning.

* Stage 2 (Reinforcement Learning) utilizes both global (outcome-level) and granular (process-level) rewards, indicating a comprehensive feedback mechanism for model improvement.

* The "Backward" arrows consistently denote the flow of gradients or error signals in supervised fine-tuning and reinforcement learning.

### Interpretation

This diagram depicts a common pipeline in modern AI development, particularly for tasks involving sequential generation or decision-making, such as natural language processing or game playing.

**Stage 0: Data Construction** lays the groundwork by generating a diverse and verified dataset. The inclusion of verification methods suggests a focus on data quality and correctness, which is paramount for effective model training. The segmentation by difficulty level implies a strategy to gradually expose the model to increasingly complex tasks, a common technique in curriculum learning. The token sets `r = {s1, v1, ...}` likely represent sequences of actions or states, where `s` and `v` might denote different types of tokens or decisions. The coloring of these tokens (red/green) could represent specific attributes or classes within the sequence, potentially related to the correctness or type of action taken.

**Stage 1: Behavior Initialization** is critical for providing the reinforcement learning agent with a reasonable starting point. Supervised fine-tuning on a dataset of input-output pairs (questions and target outputs) allows the model to learn basic patterns and generate plausible responses. The "SFT Mask" might be used to guide the fine-tuning process, focusing the model's attention on specific parts of the input or output that are deemed most important. This stage prevents the reinforcement learning agent from starting with a completely random policy, which would be highly inefficient.

**Stage 2: Reinforcement Learning** is where the model truly learns to optimize its behavior. By receiving rewards based on both the final outcome and the intermediate steps, the model can learn to make better decisions throughout its generation process. The distinction between outcome-level and process-level rewards is significant:

* **Outcome-level reward** is essential for achieving the overall goal.

* **Process-level reward** is crucial for learning efficient and correct intermediate steps, which can lead to better outcomes and prevent common errors. This is particularly important in complex tasks where a single error early on can derail the entire process.

The overall flow suggests a progression from supervised learning to reinforcement learning, a paradigm often referred to as "pre-training and fine-tuning" or "behavior cloning followed by reinforcement learning." The diagram effectively visualizes how an initial, supervised policy is refined through trial and error guided by rewards, leading to a more robust and optimized model. The "Backward" arrows are a consistent visual cue for the backpropagation of errors or gradients, a fundamental mechanism in training deep learning models. The entire process aims to build a model that not only generates correct answers but does so through a sound and efficient reasoning process.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Reinforcement Learning for Verification

### Overview

This diagram illustrates a multi-stage process for training a model to verify mathematical problem solutions using reinforcement learning. The process has three numbered stages (Data Construction, Behavior Initialization, and Reinforcement Learning) plus a Verification Construction panel. It details the flow of information, the types of rewards used, and the components involved in each stage.

### Components/Axes

The diagram is structured into four main stages, labeled "Stage 0: Data Construction", "Stage 1: Behavior Initialization", "Stage 2: Reinforcement Learning", and "Verification Construction". Each stage contains several components and arrows indicating the flow of data.

* **Stage 0: Data Construction:** Includes "Input Questions", "Initial Policy", "Difficulty Level 1", "Difficulty Level 3", "Difficulty Level 5", and "Sample k responses for each question". Green circles represent "Correct Response" and red circles represent "Incorrect Response".

* **Stage 1: Behavior Initialization:** Includes "Input question x", "Initial Policy Model π₀", and "Supervised Fine-tuning".

* **Stage 2: Reinforcement Learning:** Includes "SFT Model", "Input Questions", "Outcome-level reward", "Process-level reward", and the set of states {s1, v1, s2, v2, s3, v3}.

* **Verification Construction:** Contains two worked examples: "Problem-Solving" Verification and "Confirmative" Verification.

### Detailed Analysis

**Stage 0: Data Construction**

* Input Questions are fed into an Initial Policy.

* The Initial Policy generates responses, which are categorized as either Correct (green circle) or Incorrect (red circle).

* Responses are constructed into trajectories based on difficulty distribution, with three difficulty levels: Level 1, Level 3, and Level 5.

* Difficulty Level 1: r = {s1, v1}

* Difficulty Level 3: r = {s1, v1, s2, v2}

* Difficulty Level 5: r = {s1, v1, s2, v2, s3, v3, s4, v4}

* The caption "Sample k responses for each question" indicates that the Initial Policy produces k responses per question.

**Stage 1: Behavior Initialization**

* An Input question x is fed into the Initial Policy Model π₀.

* The Initial Policy Model π₀ undergoes Supervised Fine-tuning.

**Stage 2: Reinforcement Learning**

* Input Questions are fed into an SFT Model.

* The SFT Model generates a sequence of states {s1, v1, s2, v2, s3, v3}.

* Two types of rewards are used: Outcome-level reward (represented by a checkmark or cross) and Process-level reward.

* A "Backward" arrow returns the reward signal to the SFT Model.

**Verification Construction**

* **"Problem-Solving" Verification:**

* Problem: "27 increased by twice a number is 39. What is the number?"

* Model's answer: 6

* Verification: "Let's denote the unknown number as x. The problem can be written as the equation: 27 + 2x = 39. To find x, we need to solve this equation step by step. Step 1: ... Step 2: ... x = 6. The given answer is 6. Therefore, the answer is correct."

* **"Confirmative" Verification:**

* Problem: "27 increased by twice a number is 39. What is the number?"

* Model's answer: 6

* Verification: "To verify the solution, we will substitute the given answer into the original statement to determine its validity. Given answer for the number: 6. We will now check the statement using the given answer: 27 increased by twice 6 should equal 39. Twice 6 is 12. 27 increased by 12 is 27 + 12, which equals 39. Therefore, the answer is correct."

**Target Output**

* Target output r = {s1, v1, s2, v2, s3, v3}

* SFT Mask m = {0, 1, 0, 1, 1, 1}

### Key Observations

* The diagram emphasizes a backward flow of information in the Reinforcement Learning stage, indicated by the "Backward" labels.

* The verification process provides both a problem-solving approach and a confirmative approach.

* The SFT Mask suggests that supervised fine-tuning is applied selectively to certain elements of the target output.

* The use of both Outcome-level and Process-level rewards indicates a nuanced reward structure.

### Interpretation

The diagram outlines an approach to training a verification model. The data construction stage generates a dataset with varying difficulty levels. The reinforcement learning stage uses both outcome and process rewards to guide learning. The "Backward" labels suggest gradient-based updates driven by those rewards, possibly a policy-gradient method. The SFT Mask suggests a way to restrict the fine-tuning loss to specific parts of the target output. Including both "Problem-Solving" and "Confirmative" verification suggests a focus on both the correctness of the answer and the validity of the reasoning process.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Multi-Stage Training Pipeline for Reasoning Models

### Overview

The image is a technical flowchart illustrating a three-stage pipeline for training a machine learning model to solve reasoning problems. The process begins with **Stage 0: Data Construction**, where training data is generated and verified. This feeds into **Stage 1: Behavior Initialization**, which uses Supervised Fine-Tuning (SFT) to create an initial policy model. Finally, **Stage 2: Reinforcement Learning** refines this model using outcome and process-level rewards. The diagram uses color-coding (green for correct, red for incorrect), flow arrows, and text boxes to detail each step.

### Components/Axes

The diagram is divided into three primary regions, each representing a stage:

1. **Stage 0: Data Construction (Left, light blue background)**

* **Input:** "Input Questions" (document icon).

* **Process:** "Initial Policy" generates multiple responses.

* **Legend:** A green circle denotes ": Correct Response". A red circle denotes ": Incorrect Response".

* **Output:** Responses are grouped by "Difficulty Level" (Level 1, 3, 5) to form reasoning trajectories `r`.

* **Verification Methods:** Two dashed boxes at the top detail verification approaches:

* **"Problem-Solving" Verification (Orange box):** Shows an algebraic problem, a model's answer, and a step-by-step verification solving the equation.

* **"Confirmative" Verification (Green box):** Shows the same problem and answer, but verification substitutes the answer back into the original statement to check its validity.

* **Key Text:** "Sample K responses for each question", "Construct trajectories based on difficulty distribution".

2. **Stage 1: Behavior Initialization (Bottom Right, light pink background)**

* **Title:** "Supervised Fine-tuning".

* **Input:** "Input question x".

* **Model:** "Initial Policy Model π₀" (with a flame icon).

* **Target:** "Target output r = {s1, v1, s2, v2, s3, v3}" and "SFT Mask m = {0, 1, 0, 1, 1, 1}".

* **Flow:** An arrow points from Stage 0's constructed trajectories to this stage. A "(Backward)" arrow indicates gradient flow.

3. **Stage 2: Reinforcement Learning (Top Right, light green background)**

* **Input:** "Input Questions" (document icon) fed into an "SFT Model π_SFT" (with a flame icon).

* **Outputs:** The model generates sequences like `{s1, v1, ..., sj, vj}` and `{s1, v1, s2, v2, s3, v3}`.

* **Rewards:**

* "Outcome-level reward": Indicated by a checkmark (✓) or cross (✗) next to the final answer.

* "Process-level reward": Indicated by a sequence of checkmarks and crosses (✓ ✗ ✓ ✓ ✗) below the model's step-by-step output.

* **Flow:** A "(Backward)" arrow points from the rewards back to the SFT Model. A large grey arrow connects Stage 1 to Stage 2.

### Detailed Analysis

**Stage 0: Data Construction**

* **Verification Example:** The problem is: "27 increased by twice a number is 39. What is the number?" The model's answer is "6".

* *Problem-Solving Verification Text:* "Let's denote the unknown number as x. The problem can be written as the equation: 27 + 2x = 39. To find x, we need to solve this equation step by step. Step 1: ... Step 2: ... x = 6. The given answer is 6. Therefore, the answer is correct."

* *Confirmative Verification Text:* "To verify the solution, we will substitute the given answer into the original statement to determine its validity. Given answer for the number: 6. We will now check the statement using the given answer: 27 increased by twice 6 should equal 39. Twice 6 is 12. 27 increased by 12 is 27 + 12, which equals 39. Therefore, the answer is correct."

* **Trajectory Construction:** For a given question, the Initial Policy samples K responses. These are sorted into difficulty levels.

* Difficulty Level 5: Trajectory `r = {s1, v1, s2, v2, s3, v3, s4, v4}` (8 elements).

* Difficulty Level 3: Trajectory `r = {s1, v1, s2, v2}` (4 elements).

* Difficulty Level 1: Trajectory `r = {s1, v1}` (2 elements).

* The notation `{s, v}` likely represents a "step" and its "verification" or "value".

**Stage 1: Behavior Initialization**

* This stage performs Supervised Fine-Tuning (SFT) on the Initial Policy Model (π₀).

* It uses the constructed trajectories `r` as target outputs.

* The "SFT Mask m = {0, 1, 0, 1, 1, 1}" suggests that only specific parts of the sequence are used for the loss calculation during training. If the mask aligns element by element with `r`, these are the verification steps `v1`, `v2`, `v3` and the final step `s3`.
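A masked loss of the kind described above can be sketched as follows. This is a simplified illustration under stated assumptions: real fine-tuning masks individual tokens, whereas here each trajectory element carries a single hypothetical probability, and `masked_nll` is an illustrative helper rather than the source's loss function.

```python
import math


def masked_nll(probs: list[float], mask: list[int]) -> float:
    """Mean negative log-likelihood over the elements whose mask value is 1."""
    if len(probs) != len(mask):
        raise ValueError("probs and mask must have the same length")
    kept = [-math.log(p) for p, m in zip(probs, mask) if m == 1]
    if not kept:
        raise ValueError("mask selects no elements")
    return sum(kept) / len(kept)


mask = [0, 1, 0, 1, 1, 1]
# Hypothetical model probabilities for s1, v1, s2, v2, s3, v3.
probs = [0.2, 0.9, 0.3, 0.8, 0.7, 0.9]
loss = masked_nll(probs, mask)

# Changing a masked-out element (s1) leaves the loss unchanged.
probs_changed = [0.99] + probs[1:]
print(loss, masked_nll(probs_changed, mask))
```

Because the masked-out elements never enter the sum, the model receives no gradient from them, which is the usual reason for masking part of a target sequence.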

**Stage 2: Reinforcement Learning**

* The SFT Model (π_SFT) is trained further with RL.

* It receives two types of rewards:

1. **Outcome-level reward:** A single binary signal (✓/✗) for the final answer's correctness.

2. **Process-level reward:** A sequence of binary signals (✓/✗) evaluating the correctness of each intermediate reasoning step.

* Both rewards are used in a "(Backward)" pass to update the model.
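One simple way to turn the two reward types into a single training signal is sketched below. The diagram does not specify how the rewards are combined, so the ±1 encoding, the averaging of step rewards, and the `process_weight` parameter are all assumptions made for illustration.

```python
def to_reward(is_correct: bool) -> float:
    """Encode a checkmark as +1.0 and a cross as -1.0 (an assumed encoding)."""
    return 1.0 if is_correct else -1.0


def combined_reward(
    process_marks: list[bool], outcome_mark: bool, process_weight: float = 0.5
) -> float:
    """Outcome reward plus a weighted mean of the per-step process rewards."""
    if not process_marks:
        raise ValueError("need at least one process-level mark")
    step_rewards = [to_reward(mark) for mark in process_marks]
    mean_step_reward = sum(step_rewards) / len(step_rewards)
    return to_reward(outcome_mark) + process_weight * mean_step_reward


# Hypothetical marks: True stands for a checkmark, False for a cross.
process_marks = [True, False, True, True, False]
total = combined_reward(process_marks, outcome_mark=True)
print(total)
```

The outcome term is a single sparse signal per response, while the process term gives denser feedback on each intermediate step.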

### Key Observations

1. **Dual Verification Strategy:** The pipeline employs two distinct methods ("Problem-Solving" and "Confirmative") to verify the correctness of model-generated answers during data construction, ensuring robust labeling.

2. **Difficulty-Aware Data:** Training data is explicitly structured by difficulty level, with more complex problems requiring longer reasoning trajectories (more steps).

3. **Hybrid Reward Structure:** The reinforcement learning stage uses a combination of sparse (outcome) and dense (process-level) rewards, which is a sophisticated approach to guide learning.

4. **Sequential Refinement:** The pipeline shows a clear progression from data generation (Stage 0), to imitation learning (Stage 1), to goal-oriented optimization (Stage 2).

### Interpretation

This diagram outlines a comprehensive methodology for training AI models to perform multi-step reasoning, likely for mathematical or logical problem-solving. The process is designed to overcome key challenges in training such models:

* **Data Scarcity & Quality:** Stage 0 automates the creation of high-quality, verified training data with difficulty annotations, solving the problem of needing large, human-labeled datasets.

* **Learning Stability:** Starting with Supervised Fine-Tuning (Stage 1) on curated trajectories provides a stable behavioral foundation before introducing the more volatile reinforcement learning phase.

* **Credit Assignment:** The use of **process-level rewards** in Stage 2 is particularly significant. It addresses the "credit assignment problem" in long reasoning chains by providing feedback on each step, not just the final answer. This should lead to more reliable and interpretable reasoning patterns.

* **Overall Goal:** The pipeline aims to produce a model that doesn't just arrive at correct answers but does so through a verifiable and correct step-by-step process, enhancing both accuracy and trustworthiness. The "Verification Construction" block linking Stage 0 to the reward signals in Stage 2 indicates that the verification methods developed for data labeling are also used to generate the process-level rewards during RL, creating a cohesive training loop.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Three-Stage Reinforcement Learning Framework for Educational Model Training

### Overview

The diagram illustrates a three-stage process for training an educational model using supervised fine-tuning (SFT) and reinforcement learning (RL). Stage 0 constructs data through verification and trajectory generation. Stage 1 initializes behavior by supervised fine-tuning of the initial policy (`π₀`). Stage 2 refines the resulting SFT model with outcome-level and process-level rewards applied through backward passes.

---

### Components/Axes

#### Stage 0: Data Construction

- **Input Questions**: Textual problems (e.g., "27 increased by twice a number is 39...").

- **Initial Policy**: Generates responses (correct/incorrect) for input questions.

- **Verification Construction**:

- **Problem-Solving Verification**: Checks correctness of answers (e.g., "6" for the math problem).

- **Confirmative Verification**: Validates answers by substitution (e.g., "27 + 12 = 39").

- **Difficulty Levels**: Responses categorized into levels 1, 3, and 5.

- **Trajectory Construction**: Builds sequences of states (`s1, s2, ...`) and values (`v1, v2, ...`) based on difficulty distributions.

#### Stage 1: Behavior Initialization

- **Supervised Fine-Tuning**: Adjusts the initial policy (`π₀`) using labeled data.

- **Target Output**: Sequences of states and values (`r = {s1,v1,s2,v2,s3,v3}`).

- **SFT Mask**: Binary indicators (`m = {0,1,0,1,1,1}`) for selective backpropagation.

#### Stage 2: Reinforcement Learning

- **SFT Model**: Processes input questions and generates outputs (`r = {s1,v1,s2,v2,...}`).

- **Outcome-Level Reward**: Binary feedback (✅/❌) for final answers.

- **Process-Level Reward**: Step-by-step validation (✅/❌ for individual steps).

---

### Detailed Analysis

#### Stage 0: Data Construction

1. **Input Questions** are fed into an **Initial Policy** to generate **K responses** per question.

2. Responses are verified:

- **Correct** (green circles) and **Incorrect** (red circles) responses are labeled.

- Difficulty levels are assigned (e.g., Level 5 for complex problems).

3. **Trajectories** are constructed by grouping responses into sequences (`s1,v1,s2,v2,...`) based on difficulty distributions.

#### Stage 1: Behavior Initialization

1. **Supervised Fine-Tuning** refines the initial policy (`π₀`) using labeled trajectories.

2. **SFT Mask** filters which elements (`s1,v1,s2,v2,...`) are updated during backpropagation.

#### Stage 2: Reinforcement Learning

1. **SFT Model** takes input questions and produces trajectories (`r = {s1,v1,s2,v2,...}`).

2. **Outcome-Level Reward** evaluates final answers (✅/❌).

3. **Process-Level Reward** validates intermediate steps (e.g., "27 + 12 = 39" ✅).

4. **Backward Passes** adjust the SFT model using rewards.

---

### Key Observations

1. **Difficulty Stratification**: Responses are grouped by difficulty (Levels 1, 3, and 5), so training covers a range of problem complexities.

2. **Dual Reward System**: Combines outcome-level (final answer) and process-level (step-by-step) feedback for robust learning.

3. **Selective Backpropagation**: The SFT Mask (`m = {0,1,0,1,1,1}`) restricts updates to specific trajectory elements.

4. **Staged Refinement**: Stage 0 provides verified data, Stage 1 initializes the model with SFT, and Stage 2 improves it with RL.

---

### Interpretation

This framework demonstrates a hybrid approach to educational model training:

- **Stage 0** ensures diverse, difficulty-stratified data, critical for capturing edge cases and foundational knowledge.

- **Stage 1** uses **Supervised Fine-Tuning** as an initialization step, aligning the model with verified trajectories before RL begins.

- The **SFT Mask** suggests a focus on selected trajectory segments during fine-tuning.

- **Stage 2** leverages RL to refine reasoning patterns, with process-level rewards addressing common errors (e.g., arithmetic mistakes).

The integration of verification methods (problem-solving and confirmative) ensures data quality, while the SFT-then-RL pipeline enables scalable, error-aware model improvement. This approach could be applied to tutoring systems, automated grading, or adaptive learning platforms.
