TECHNICAL ASSET FINGERPRINT

e72e773ec14c2dc3ece4600d



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

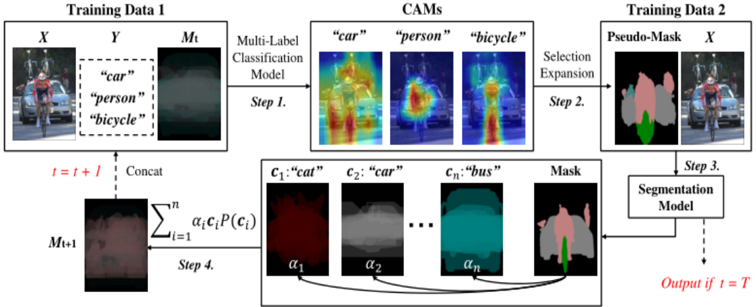

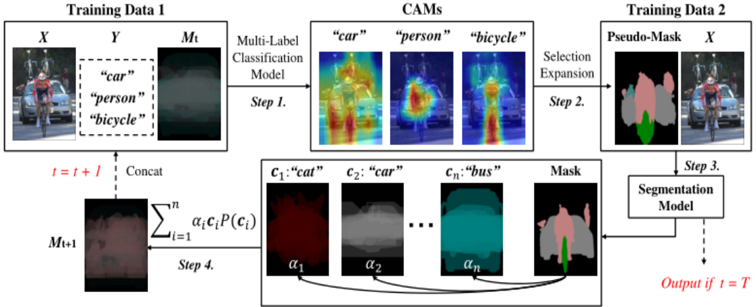

## Diagram: Multi-Label Classification and Segmentation Model Training

### Overview

The image is a diagram illustrating a multi-label classification and segmentation model training process. It shows the flow of data through different steps, including multi-label classification, class activation map (CAM) generation, selection expansion, and segmentation model training. The diagram uses two sets of training data and iteratively refines a mask.

### Components/Axes

* **Training Data 1:** Contains input image (X), labels (Y), and a mask (Mt).

    * **X:** An image showing a person on a bicycle, a car, and other background elements.

    * **Y:** Labels associated with the image: "car", "person", "bicycle".

    * **Mt:** A mask, initially somewhat blurred or undefined.

* **Multi-Label Classification Model:** A model that predicts multiple labels for an input image.

* **Step 1:** The first step involves feeding Training Data 1 into the Multi-Label Classification Model.

* **CAMs (Class Activation Maps):** Visual representations highlighting the regions in the image that contribute most to the classification of specific labels. Three CAMs are shown:

    * "car": Highlights the car in the image.

    * "person": Highlights the person in the image.

    * "bicycle": Highlights the bicycle in the image.

* **Selection Expansion:** A process that uses the CAMs to generate a pseudo-mask.

* **Step 2:** The second step involves Selection Expansion.

* **Training Data 2:** Contains a Pseudo-Mask and the input image (X).

    * **Pseudo-Mask:** A mask generated from the CAMs, showing different regions corresponding to different labels.

    * **X:** The same input image as in Training Data 1.

* **Segmentation Model:** A model that segments the image into different regions based on the labels.

* **Step 3:** The third step involves feeding Training Data 2 into the Segmentation Model.

* **Mask:** The output of the Segmentation Model, representing the segmented image.

* **c1:"cat", c2:"car", cn:"bus":** Class-specific masks, where each mask corresponds to a specific class.

    * **c1:"cat":** A mask for the "cat" class, colored in red.

    * **c2:"car":** A mask for the "car" class, colored in gray.

    * **cn:"bus":** A mask for the "bus" class, colored in teal.

* **α1, α2, αn:** Weights associated with each class-specific mask.

* **$\sum_{i=1}^{n} \alpha_i \, c_i \, P(c_i)$:** A weighted sum of the class-specific masks, where $P(c_i)$ represents the probability of class $c_i$.

* **Mt+1:** The updated mask, which is a combination of the class-specific masks.

* **Step 4:** The fourth step involves combining the class-specific masks to update the mask.

* **t = t + 1:** Indicates an iterative process where the mask is updated in each iteration.

* **Concat:** Indicates that the updated mask (Mt+1) is concatenated with the input data for the next iteration.

* **Output if t = T:** Indicates that the final output is generated when the iteration reaches a termination condition (t = T).

### Detailed Analysis

The diagram illustrates an iterative process for training a segmentation model using multi-label classification.

1. **Initial Training Data:** The process starts with Training Data 1, which includes an image (X) and its corresponding labels (Y).

2. **Multi-Label Classification:** The Multi-Label Classification Model predicts multiple labels for the input image.

3. **CAM Generation:** The CAMs highlight the regions in the image that contribute most to the classification of specific labels.

4. **Selection Expansion:** The CAMs are used to generate a Pseudo-Mask, which provides a rough segmentation of the image.

5. **Segmentation Model Training:** The Segmentation Model is trained using Training Data 2, which includes the Pseudo-Mask and the input image.

6. **Mask Update:** The output of the Segmentation Model is used to update the mask (Mt+1).

7. **Iteration:** The process is repeated iteratively, with the updated mask being used as input for the next iteration.

8. **Final Output:** The final output is generated when the iteration reaches a termination condition (t = T).

The class-specific masks (c1, c2, cn) represent the segmentation of the image into different regions based on the predicted labels. The weights (α1, α2, αn) determine the contribution of each class-specific mask to the final mask.
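The weighted combination described above can be sketched in NumPy. This is a minimal illustration only: it assumes each class-specific mask c_i is an H×W array and that α_i and P(c_i) are one scalar per class, which the figure does not state. The function name `combine_class_masks` and all sample values are hypothetical.

```python
import numpy as np

def combine_class_masks(class_masks, alphas, probs):
    """Compute sum_i alpha_i * c_i * P(c_i) over the class axis.

    class_masks: (n, H, W) array, one mask c_i per class (assumed layout)
    alphas:      (n,) per-class weights alpha_i
    probs:       (n,) class probabilities P(c_i)
    """
    class_masks = np.asarray(class_masks, dtype=np.float64)
    coeffs = np.asarray(alphas, dtype=np.float64) * np.asarray(probs, dtype=np.float64)
    # Contract the class axis: out[h, w] = sum_i coeffs[i] * class_masks[i, h, w]
    return np.tensordot(coeffs, class_masks, axes=1)

# Toy example: three classes on a 2x2 grid (made-up values).
c = np.zeros((3, 2, 2))
c[0, 0, 0] = 1.0   # class 1 covers the top-left pixel
c[1, 0, 1] = 1.0   # class 2 covers the top-right pixel
c[2, 1, :] = 1.0   # class 3 covers the bottom row
m_next = combine_class_masks(c, alphas=[1.0, 0.5, 2.0], probs=[0.5, 0.8, 0.25])
```

Each pixel of `m_next` holds the sum of α_i · P(c_i) over the classes whose mask covers that pixel.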

### Key Observations

* The diagram shows an iterative process for training a segmentation model using multi-label classification.

* The CAMs are used to generate a Pseudo-Mask, which provides a rough segmentation of the image.

* The class-specific masks represent the segmentation of the image into different regions based on the predicted labels.

* The weights determine the contribution of each class-specific mask to the final mask.

### Interpretation

The diagram illustrates a method for training a segmentation model from multi-label classification. The key idea is to use CAMs to generate a Pseudo-Mask, which provides a rough segmentation of the image. This Pseudo-Mask is then used to train a Segmentation Model, which refines the segmentation. Because the updated mask (Mt+1) is fed back as input to the next iteration, each round starts from the previous round's result, and the loop stops when t = T. The class-specific masks and their weights let the model handle multiple labels and assign image regions to the predicted classes.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Multi-Label Learning Pipeline for Semantic Segmentation

### Overview

This diagram illustrates a four-step pipeline for semantic segmentation using a multi-label classification approach. The process begins with training data, progresses through a classification model and CAM generation, then utilizes pseudo-mask creation and expansion, and finally culminates in a segmentation model to produce the final output.

### Components/Axes

The diagram is structured into four main steps, labeled "Step 1" through "Step 4". Key components include:

* **Training Data 1:** Consists of an image (X), labels (Y), and a mask (Mt).

* **Multi-Label Classification Model:** The first processing stage.

* **CAMs (Class Activation Maps):** Visual representations of the model's attention for different classes ("car", "person", "bicycle").

* **Training Data 2:** Consists of a Pseudo-Mask and the original image (X).

* **Selection Expansion:** A process to refine the pseudo-masks.

* **Segmentation Model:** The final stage, producing the output.

* **Mathematical Formula:** A summation formula representing a weighted combination of class probabilities.

* **Labels:** "car", "person", "bicycle", "cat", "bus".

* **Parameters:** α1, α2, … αn.

* **Time step:** t, t+1, T.

### Detailed Analysis or Content Details

**Step 1: Multi-Label Classification Model**

* Input: Training Data 1 (X, Y, Mt). Y contains the labels "car", "person", and "bicycle".

* Output: CAMs for "car", "person", and "bicycle".

    * CAM for "car": Shows high activation in the region corresponding to the car in the input image. The color scheme appears to range from dark blue (low activation) to bright red/yellow (high activation).

    * CAM for "person": Shows high activation in the region corresponding to the person in the input image. Color scheme similar to "car" CAM.

    * CAM for "bicycle": Shows high activation in the region corresponding to the bicycle in the input image. Color scheme similar to "car" CAM.

**Step 2: Selection Expansion**

* Input: CAMs from Step 1.

* Output: Pseudo-Mask. The pseudo-mask is a pink/red overlay on the original image, highlighting the detected objects.

**Step 3: Segmentation Model**

* Input: Pseudo-Mask and original image (X) from Training Data 2.

* Output: Final segmented image. The output is indicated by "Output if t = T".

**Step 4: Concatenation and Weighted Summation**

* Input: Image at time step *t* (It) and the mask at time step *t* (Mt).

* Process: Concatenation ("Concat") of It and Mt.

* Mathematical Formula: $\sum_{i=1}^{n} \alpha_i \, C_i \, P(C_i)$.

    * αi: Represents weights.

    * Ci: Represents classes ("cat", "car", "bus", and potentially others represented by "...").

    * P(Ci): Represents the probability of class Ci.

* Output: An image representing the result of the weighted summation.
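One plausible reading of the "Concat" operation is a channel-wise stack of the image and the current mask. The sketch below assumes an H×W×3 image and an H×W mask; the diagram does not specify shapes or the axis used, and the function name `concat_image_and_mask` is hypothetical.

```python
import numpy as np

def concat_image_and_mask(image, mask):
    """Stack an (H, W, 3) image and an (H, W) mask into an (H, W, 4) array."""
    image = np.asarray(image, dtype=np.float32)
    mask = np.asarray(mask, dtype=np.float32)
    if image.shape[:2] != mask.shape:
        raise ValueError("image and mask must share height and width")
    # Add a trailing channel axis to the mask, then join along the channel axis.
    return np.concatenate([image, mask[..., None]], axis=-1)

# Toy example: a black 4x6 image and an all-ones mask (made-up data).
x = np.zeros((4, 6, 3))
m_t = np.ones((4, 6))
stacked = concat_image_and_mask(x, m_t)
```

Under this assumption, the model that receives `stacked` would need four input channels instead of three.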

### Key Observations

* The pipeline iteratively refines the segmentation through the use of CAMs and pseudo-masks.

* The mathematical formula in Step 4 suggests a weighted averaging of class probabilities, potentially used for refining the segmentation.

* The labels used in the classification model ("car", "person", "bicycle") are different from those used in the weighted summation ("cat", "car", "bus"), indicating a potential shift in the focus of the segmentation task or the use of a broader set of classes.

* The diagram shows a cyclical process, indicated by the feedback loop from Step 4 to Step 1 (t+1).

### Interpretation

This diagram depicts a semi-supervised learning approach to semantic segmentation. The initial multi-label classification model provides a starting point for segmentation, generating CAMs that highlight potential object regions. These CAMs are then used to create pseudo-masks, which serve as training data for a segmentation model. The iterative process, represented by the feedback loop, allows the model to refine its segmentation performance over time. The weighted summation in Step 4 suggests a mechanism for combining information from different classes to improve the accuracy and robustness of the segmentation. The change in labels between the classification and segmentation stages could indicate a transfer learning scenario, where the model is initially trained on a limited set of classes and then fine-tuned on a broader set. The use of pseudo-masks is a common technique for leveraging unlabeled data to improve the performance of segmentation models. The diagram highlights the importance of combining classification and segmentation techniques to achieve accurate and reliable semantic understanding of images.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Process Diagram: Iterative Multi-Label Classification and Segmentation Pipeline

### Overview

The image is a technical flowchart illustrating a four-step, iterative machine learning pipeline. The process begins with multi-label classification on an image, generates Class Activation Maps (CAMs), uses these to create pseudo-masks for a segmentation model, and then refines the classification model in a loop. The diagram is composed of labeled boxes, arrows indicating data flow, and visual examples of data at each stage.

### Components/Axes

The diagram is organized into distinct regions with the following labels and components:

**Top Row (Left to Right):**

1. **Training Data 1 Box:**

    * Contains three sub-components labeled `X`, `Y`, and `M_t`.

        * `X`: An image of a person riding a bicycle on a street with a car in the background.

        * `Y`: A dashed box containing the text labels `"car"`, `"person"`, `"bicycle"`.

        * `M_t`: A dark, low-contrast mask image.

    * An arrow points from this box to the next, labeled `Multi-Label Classification Model` and `Step 1.`.

2. **CAMs Box:**

    * Title: `CAMs`.

    * Contains three heatmap images, each with a title above it: `"car"`, `"person"`, `"bicycle"`.

    * The heatmaps show activation regions (red/yellow for high activation, blue for low) corresponding to the respective objects in the original image `X`.

    * An arrow points from this box to the next, labeled `Selection Expansion` and `Step 2.`.

3. **Training Data 2 Box:**

    * Contains two sub-components labeled `Pseudo-Mask` and `X`.

        * `Pseudo-Mask`: A color-coded segmentation mask. A legend within the image shows: a pinkish-red region, a grey region, and a green region.

        * `X`: The same original image as in Training Data 1.

    * An arrow points downward from this box, labeled `Step 3.`.

**Bottom Row (Iterative Loop):**

4. **Segmentation Model Box:**

    * A box labeled `Segmentation Model`.

    * It receives input from the `Pseudo-Mask` and `X` above (Step 3).

    * It outputs a refined `Mask` (shown to its right) and has a dashed arrow pointing down labeled `Output if t = T`.

5. **Refinement & Concatenation Area:**

    * **Right Side:** A box containing a series of refined class-specific masks and a final combined mask.

        * Labeled masks: `c_1: "cat"` (red), `c_2: "car"` (grey), `c_n: "bus"` (teal).

        * Below each mask is a coefficient: `α_1`, `α_2`, `α_n`.

        * A final `Mask` image combines these regions (pinkish-red, grey, green).

        * Arrows from the coefficients point to a summation formula.

    * **Center:** A mathematical formula: `∑_{i=1}^{n} α_i c_i P(c_i)`.

    * **Left Side:** A box showing the result of the formula, a new mask `M_{t+1}`.

    * A red label `t = t + 1` and the word `Concat` are near an arrow pointing from `M_{t+1}` back to the `M_t` slot in **Training Data 1**, completing the loop. This is labeled `Step 4.`.

**Other Text:**

* `Output if t = T` (in red, bottom right).

* `t = t + 1` (in red, bottom left loop).

### Detailed Analysis

The pipeline executes the following sequential and iterative steps:

**Step 1:** A multi-label classification model is trained on `Training Data 1`, which consists of an image (`X`), its ground-truth labels (`Y`: "car", "person", "bicycle"), and an initial mask (`M_t`).
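To illustrate what multi-label training targets typically look like, the sketch below encodes the label set as a multi-hot vector and scores it with a sigmoid binary cross-entropy loss. The diagram does not state which loss or class vocabulary is used; the `CLASSES` list, the logits, and the function names are assumptions made for illustration.

```python
import numpy as np

# Hypothetical class vocabulary; the figure only shows a few example labels.
CLASSES = ["bicycle", "bus", "car", "cat", "person"]

def multi_hot(labels, classes=CLASSES):
    """Encode a set of label names as a 0/1 vector over the vocabulary."""
    return np.array([1.0 if c in labels else 0.0 for c in classes])

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def binary_cross_entropy(logits, targets, eps=1e-12):
    """Mean per-class binary cross-entropy, one independent sigmoid per class."""
    p = np.clip(sigmoid(logits), eps, 1.0 - eps)
    return float(-np.mean(targets * np.log(p) + (1.0 - targets) * np.log(1.0 - p)))

y = multi_hot({"car", "person", "bicycle"})
logits = np.array([2.0, -3.0, 1.5, -2.0, 0.5])  # made-up model outputs
loss = binary_cross_entropy(logits, y)
# A class counts as predicted when its sigmoid score exceeds 0.5.
predicted = [c for c, p in zip(CLASSES, sigmoid(logits)) if p > 0.5]
```

Unlike a softmax, the per-class sigmoid lets several classes be active in the same image, which is what a label set such as "car", "person", "bicycle" requires.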

**Step 2:** The model generates Class Activation Maps (CAMs) for each predicted class ("car", "person", "bicycle"). These heatmaps highlight the image regions most indicative of each class.
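One common way to obtain such heatmaps is the original CAM formulation, where the map for a class is the sum of the last convolutional feature maps weighted by that class's classifier weights. Whether the depicted pipeline uses this variant is not stated, so the sketch below is an assumption; the shapes, the random inputs, and the name `class_activation_maps` are hypothetical.

```python
import numpy as np

def class_activation_maps(features, class_weights):
    """CAM_c[h, w] = sum_k class_weights[c, k] * features[k, h, w].

    features:      (K, H, W) feature maps from the last conv layer
    class_weights: (C, K) weights of the linear classifier after global pooling
    Returns (C, H, W) maps, clipped at zero and scaled to [0, 1] per class.
    """
    features = np.asarray(features, dtype=np.float64)
    class_weights = np.asarray(class_weights, dtype=np.float64)
    cams = np.einsum("ck,khw->chw", class_weights, features)
    cams = np.maximum(cams, 0.0)                      # keep positive evidence only
    peak = cams.max(axis=(1, 2), keepdims=True)
    return cams / np.maximum(peak, 1e-12)             # avoid division by zero

rng = np.random.default_rng(0)
feats = rng.random((8, 5, 5))        # made-up feature maps
w = rng.normal(size=(3, 8))          # made-up weights for three classes
cams = class_activation_maps(feats, w)
```

The resulting maps are at feature-map resolution; in practice they are usually upsampled to the image size before being overlaid as heatmaps.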

**Step 3:** A "Selection Expansion" process uses the CAMs to create a `Pseudo-Mask`. This mask segments the image into regions corresponding to the identified classes (color-coded: pinkish-red, grey, green). This pseudo-mask and the original image `X` form `Training Data 2`.
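The figure does not spell out what "Selection Expansion" does internally. As a simple stand-in, the sketch below turns per-class CAMs into a label mask by taking the highest-scoring class per pixel and marking low-confidence pixels as background. The threshold value, the background index, and the name `cams_to_pseudo_mask` are all assumptions, and this is not the depicted Selection Expansion procedure itself.

```python
import numpy as np

BACKGROUND = 0  # assumed label for pixels no class claims

def cams_to_pseudo_mask(cams, threshold=0.3):
    """Convert (C, H, W) CAM scores in [0, 1] to an (H, W) integer label mask.

    Pixel label is 1 + index of the best class, or BACKGROUND when the best
    score falls below the threshold.
    """
    cams = np.asarray(cams, dtype=np.float64)
    best_class = cams.argmax(axis=0)
    best_score = cams.max(axis=0)
    return np.where(best_score >= threshold, best_class + 1, BACKGROUND)

# Toy example with two classes on a 2x2 grid (made-up scores).
toy_cams = np.array([
    [[0.9, 0.1], [0.20, 0.0]],   # class 1
    [[0.1, 0.8], [0.25, 0.0]],   # class 2
])
pseudo_mask = cams_to_pseudo_mask(toy_cams)
```

Here the bottom-left pixel stays background because neither class reaches the 0.3 threshold, even though class 2 scores slightly higher there.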

**Step 4:** `Training Data 2` is used to train a `Segmentation Model`. This model produces a refined output `Mask`.

**Iterative Refinement (Loop):** The process does not end here. The refined mask is decomposed into class-specific component masks (`c_1`, `c_2`, ... `c_n`) with associated coefficients (`α_1`, `α_2`, ... `α_n`). These are combined via the weighted sum formula `∑ α_i c_i P(c_i)` to generate an updated mask `M_{t+1}`. This new mask is concatenated with the original data, incrementing the time step (`t = t + 1`), and fed back into **Step 1** as the new `M_t`. The loop continues until a stopping condition `t = T` is met, at which point the final segmentation output is produced.
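The control flow of this loop can be sketched as a plain function that takes each stage as a callable. None of the stage implementations are given in the diagram, so `classify_and_cam`, `expand`, `segment`, and `combine` are hypothetical placeholders, and the lambdas in the demo are trivial stubs that only exercise the loop.

```python
import numpy as np

def refine_masks(x, y, m0, num_rounds, classify_and_cam, expand, segment, combine):
    """Run the classification -> CAM -> pseudo-mask -> segmentation loop.

    num_rounds plays the role of T. All four callables are hypothetical
    stand-ins for the stages shown in the figure.
    """
    if num_rounds < 1:
        raise ValueError("num_rounds must be at least 1")
    m_t = m0
    mask = None
    for _ in range(num_rounds):
        cams = classify_and_cam(x, y, m_t)   # classifier sees image, labels, current mask
        pseudo_mask = expand(cams)           # CAMs -> pseudo-mask
        mask = segment(x, pseudo_mask)       # segmentation model output
        m_t = combine(mask)                  # updated mask fed to the next round
    return mask                              # output once t reaches T

# Demo with stub stages: each round adds 1 to the mask so progress is visible.
final = refine_masks(
    x=np.zeros((2, 2, 3)),
    y={"car", "person", "bicycle"},
    m0=np.zeros((2, 2)),
    num_rounds=3,
    classify_and_cam=lambda x, y, m: m + 1.0,
    expand=lambda cams: cams,
    segment=lambda x, pm: pm,
    combine=lambda mask: mask,
)
```

Passing the stages in as arguments keeps the loop independent of any particular model, which suits a diagram that leaves those details open.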

### Key Observations

1. **Self-Training Loop:** The core mechanism is an iterative self-training or pseudo-labeling loop. The segmentation model's output is used to generate improved training data (`M_{t+1}`) for the next round of classification.

2. **Class Discrepancy:** There is a notable inconsistency in the class labels. The initial data (`Y`) and CAMs use `"car", "person", "bicycle"`. However, the refinement stage shows masks for `"cat", "car", "bus"`. This suggests the diagram is illustrative, and the specific classes are placeholders.

3. **Mask Evolution:** The mask `M_t` starts as a dark, indistinct image. After the first iteration, it becomes a structured pseudo-mask with clear class regions. The final output mask appears more refined.

4. **Spatial Flow:** The data flows primarily left-to-right in the top row (Steps 1-3), then enters a cyclical, bottom-row loop (Step 4) that feeds back to the start.

### Interpretation

This diagram illustrates a sophisticated **joint learning framework for weakly-supervised semantic segmentation**. The key innovation is the symbiotic relationship between multi-label image classification and pixel-level segmentation.

* **How it works:** The system uses easily obtainable image-level labels (e.g., "this image contains a car and a person") to generate initial localization cues via CAMs. These cues are weak supervision for creating pseudo-masks, which then train a segmentation model. The segmentation model's improved understanding of object shapes and boundaries is fed back to refine the classification model's localization ability in the next iteration.

* **Purpose:** This approach aims to achieve high-quality pixel-wise segmentation without requiring expensive, manually annotated segmentation masks for training. It bootstraps from cheaper image-level labels.

* **Underlying Principle:** The process embodies a **Peircean abductive inference** loop. An initial hypothesis (the multi-label classification) generates observable consequences (the CAMs). These consequences are used to create a new hypothesis (the pseudo-mask). Testing this new hypothesis (training the segmentation model) yields new data (the refined mask), which is used to revise the original hypothesis. Each iteration seeks the most plausible explanation (the accurate segmentation) for the observed data (the image and its labels).

* **Notable Anomaly:** The changing class labels (`"person"/"bicycle"` to `"cat"/"bus"`) are likely a diagrammatic simplification. In a real implementation, the class set would remain consistent throughout the pipeline. This highlights that the diagram is a conceptual schematic rather than a literal depiction of a single experiment.


EXPERT: jina-vlm VERSION 1

RUNTIME: jina-vlm

INTEL_VERIFIED

## Diagram Type: Flowchart

### Overview

The image is a flowchart illustrating the process of training a multi-label classification model using CAMs (Class Activation Maps) for object detection. The flowchart is divided into several steps, each representing a phase in the training process.

### Components/Axes

- **Training Data 1**: Shows images labeled "car," "person," and "bicycle."

- **Training Data 2**: Shows images labeled "car," "person," and "bicycle."

- **CAMs**: Represents the process of generating class activation maps.

- **Multi-Label Classification Model**: The model used for classification.

- **Selection Expansion**: A step in the CAM generation process.

- **Pseudo-Mask**: A mask used in the CAM generation process.

- **Segmentation Model**: The final model used for segmentation.

- **Output if t = T**: The output of the segmentation model when the training is complete.

### Detailed Analysis or Content Details

- **Step 1**: The images are processed to generate class activation maps.

- **Step 2**: The class activation maps are used to create a pseudo-mask.

- **Step 3**: The pseudo-mask is used to segment the images.

- **Step 4**: The segmented images are used to train the segmentation model.

### Key Observations

- The flowchart shows a clear progression from training data to the final segmentation model.

- The use of CAMs is a technique used to visualize the importance of different features in the classification process.

- The pseudo-mask is used to refine the segmentation process.

### Interpretation

The flowchart demonstrates the process of training a multi-label classification model using CAMs for object detection. The use of CAMs helps to visualize the importance of different features in the classification process, and the pseudo-mask is used to refine the segmentation process. The final segmentation model is trained using the segmented images, which are generated using the pseudo-mask. This process is repeated for each image in the training data, and the output is the segmentation model when the training is complete.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Multi-Label Classification and Segmentation Workflow

### Overview

The image depicts a technical workflow for a multi-label classification and segmentation model. It illustrates the process of generating pseudo-masks from training data, using Class Activation Maps (CAMs), and iteratively refining segmentation outputs. The workflow includes steps for data preparation, model inference, feature concatenation, and final segmentation output.

### Components/Axes

1. **Training Data 1**

    - **X**: Input image (e.g., a cyclist with a car and bicycle).

    - **Y**: Ground-truth labels: `"car"`, `"person"`, `"bicycle"`.

    - **M<sub>t</sub>**: Initial pseudo-mask (black-and-white segmentation).

2. **Multi-Label Classification Model**

    - Processes **X** and **Y** to generate **CAMs** (Class Activation Maps) for each label.

3. **CAMs**

    - Visual heatmaps highlighting regions associated with each label:

        - `"car"` (red), `"person"` (blue), `"bicycle"` (green).

4. **Training Data 2**

    - **Pseudo-Mask**: Color-coded mask derived from CAMs (e.g., pink, green, gray).

    - **X**: Same input image as Training Data 1.

5. **Segmentation Model**

    - Takes concatenated features (**M<sub>t+1</sub>**) and outputs a refined mask.

    - Final output is labeled as `"Output if t = T"`.

### Detailed Analysis

- **Step 1**: Multi-Label Classification Model infers labels from **X** and **Y**, producing CAMs that visualize object regions.

- **Step 2**: CAMs are expanded to create a pseudo-mask for **Training Data 2**.

- **Step 3**: The pseudo-mask is combined with **X** to refine segmentation.

- **Step 4**: Features from CAMs (`c₁: "cat"`, `c₂: "car"`, `cₙ: "bus"`) are concatenated with weights (`α₁`, `α₂`, ..., `αₙ`) to form **M<sub>t+1</sub>**.

- **Step 5**: The segmentation model processes **M<sub>t+1</sub>** to generate the final mask.

### Key Observations

- **Iterative Refinement**: The workflow uses an iterative process (`t = t + 1`) to improve segmentation accuracy.

- **Color Coding**:

- CAMs use distinct colors (red, blue, green) to differentiate labels.

- Pseudo-masks use pink, green, and gray for class-specific regions.

- **Feature Concatenation**: Weights (`α_i`) are applied to CAM features before concatenation, suggesting a weighted fusion of multi-label information.
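A color-coded mask like the one described can be produced from an integer label mask with a palette lookup. The sketch below is illustrative only: the RGB values in `PALETTE`, the label-to-color assignment, and the name `colorize` are assumptions, since the diagram gives color names but no exact values.

```python
import numpy as np

# Hypothetical palette: row index = label id, columns = RGB.
PALETTE = np.array([
    [0, 0, 0],        # 0: background (assumed black)
    [255, 105, 130],  # 1: pink
    [0, 160, 80],     # 2: green
    [128, 128, 128],  # 3: gray
], dtype=np.uint8)

def colorize(label_mask, palette=PALETTE):
    """Map an (H, W) integer label mask to an (H, W, 3) uint8 RGB image."""
    label_mask = np.asarray(label_mask)
    if label_mask.min() < 0 or label_mask.max() >= len(palette):
        raise ValueError("label id outside the palette range")
    # Integer array indexing looks up one palette row per pixel.
    return palette[label_mask]

rgb = colorize([[0, 1], [2, 3]])
```

The lookup relies on NumPy integer array indexing, so the label mask must hold integer ids rather than floats.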

### Interpretation

This workflow demonstrates a hybrid approach combining multi-label classification and segmentation. The use of CAMs allows the model to focus on relevant regions for each label, while iterative refinement (via pseudo-masks) improves segmentation precision. The weighted concatenation of features (`M<sub>t+1</sub>`) implies a mechanism to prioritize certain labels over others during segmentation. The final output (`t = T`) represents the culmination of this process, likely achieving higher accuracy than initial pseudo-masks.

**Note**: No numerical data or explicit trends are present; the diagram focuses on architectural components and process flow.
