
## Diagram: Multi-Label Learning Pipeline for Semantic Segmentation

### Overview

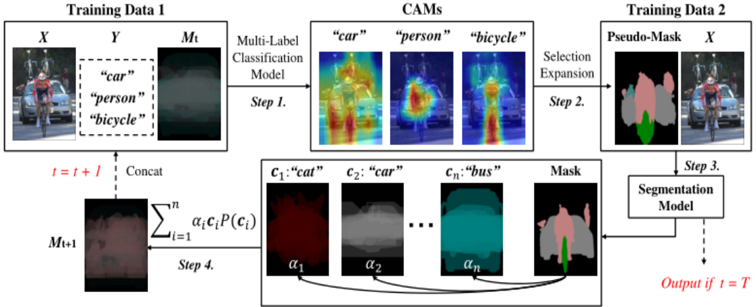

This diagram illustrates a four-step pipeline for semantic segmentation built on multi-label classification. Training data is used to train a classification model whose class activation maps (CAMs) are turned into pseudo-masks. The pseudo-masks are refined by selection and expansion and then used to train a segmentation model, which produces the final output.

### Components/Axes

The diagram is structured into four main steps, labeled "Step 1" through "Step 4". Key components include:

* **Training Data 1:** Consists of an image (X), labels (Y), and a mask ($M_t$).

* **Multi-Label Classification Model:** The first processing stage.

* **CAMs (Class Activation Maps):** Visual representations of the model's attention for different classes ("car", "person", "bicycle").

* **Training Data 2:** Consists of a Pseudo-Mask and the original image (X).

* **Selection Expansion:** A process to refine the pseudo-masks.

* **Segmentation Model:** The final stage, producing the output.

* **Mathematical Formula:** A summation formula representing a weighted combination of class probabilities.

* **Labels:** "car", "person", "bicycle", "cat", "bus".

* **Parameters:** $\alpha_1, \alpha_2, \ldots, \alpha_n$.

* **Time step:** $t$, $t+1$, $T$.

### Detailed Analysis or Content Details

**Step 1: Multi-Label Classification Model**

* Input: Training Data 1 (X, Y, $M_t$). Y contains the labels "car", "person", and "bicycle".

* Output: CAMs for "car", "person", and "bicycle".

* CAM for "car": Shows high activation in the region corresponding to the car in the input image. The color scheme appears to range from dark blue (low activation) to bright red/yellow (high activation).

* CAM for "person": Shows high activation in the region corresponding to the person in the input image. Color scheme similar to "car" CAM.

* CAM for "bicycle": Shows high activation in the region corresponding to the bicycle in the input image. Color scheme similar to "car" CAM.
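The diagram does not say how the CAMs are computed. A minimal sketch, assuming the classic CAM formulation of Zhou et al. (2016), in which the map for class $c$ is the ReLU of the classifier-weighted sum of the last convolutional feature maps, rescaled to $[0, 1]$ so it can be rendered as a blue-to-red heatmap. The function name `compute_cams`, the array shapes, and the class indices are illustrative assumptions, not the pipeline's actual implementation.

```python
import numpy as np

def compute_cams(features, fc_weights, class_ids):
    """Class activation maps in the style of Zhou et al. (2016).

    features:   (K, H, W) last conv-layer feature maps (hypothetical input)
    fc_weights: (C, K) weights of the linear classifier that follows
                global average pooling
    class_ids:  indices of the classes present in the image-level label Y
    Returns {class_id: (H, W) map scaled to [0, 1]}.
    """
    cams = {}
    for c in class_ids:
        # Weighted sum of feature channels: sum_k w[c, k] * f_k(x, y)
        cam = np.tensordot(fc_weights[c], features, axes=([0], [0]))
        cam = np.maximum(cam, 0.0)  # keep only positive evidence for class c
        peak = cam.max()
        cams[c] = cam / peak if peak > 0 else cam  # normalise to [0, 1]
    return cams

# Toy example: 8 channels on a 4x4 grid, 5 classes (hypothetical sizes);
# indices 0, 1, 2 stand in for "car", "person", "bicycle".
rng = np.random.default_rng(0)
features = rng.random((8, 4, 4))
fc_weights = rng.standard_normal((5, 8))
cams = compute_cams(features, fc_weights, class_ids=[0, 1, 2])
```

Normalising each map by its own peak makes the maps comparable across classes, which matters once they are thresholded into a pseudo-mask.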

**Step 2: Selection Expansion**

* Input: CAMs from Step 1.

* Output: Pseudo-Mask. The pseudo-mask is a pink/red overlay on the original image, highlighting the detected objects.
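The internals of "Selection Expansion" are not shown in the diagram. The sketch below assumes a common baseline in its place: pixels whose strongest CAM falls below a background threshold become background, every other pixel takes the label of its strongest class, and the labelled regions are then grown into neighbouring background pixels whose CAM for that class still exceeds a lower threshold. Both thresholds, the function names, and the region-growing rule are hypothetical stand-ins, not the authors' method.

```python
import numpy as np

def cams_to_pseudo_mask(cams, class_ids, bg_threshold=0.3):
    """Turn per-class CAMs into one label map (0 = background).

    cams:      list of (H, W) arrays in [0, 1], one per entry of class_ids
    class_ids: segmentation labels to assign (hypothetical, must be > 0)
    """
    stacked = np.stack(cams)                        # (C, H, W)
    best = stacked.argmax(axis=0)                   # strongest class per pixel
    labels = np.asarray(class_ids)[best]            # index -> class label
    labels[stacked.max(axis=0) < bg_threshold] = 0  # weak activation -> background
    return labels

def expand_regions(labels, cams, class_ids, low_threshold=0.15, max_iters=10):
    """Hypothetical expansion: grow each region into 4-neighbour background
    pixels whose CAM for that class is at least low_threshold."""
    labels = labels.copy()
    for _ in range(max_iters):
        changed = False
        for cam, cid in zip(cams, class_ids):
            region = labels == cid
            # 4-neighbourhood dilation using array shifts
            grown = region.copy()
            grown[1:, :] |= region[:-1, :]
            grown[:-1, :] |= region[1:, :]
            grown[:, 1:] |= region[:, :-1]
            grown[:, :-1] |= region[:, 1:]
            new = grown & (labels == 0) & (cam >= low_threshold)
            if new.any():
                labels[new] = cid
                changed = True
        if not changed:  # stop once no region can grow further
            break
    return labels

# Toy "car" CAM with a strong centre and weaker neighbours (label 1 is hypothetical)
car_cam = np.array([[0.0, 0.2, 0.0],
                    [0.2, 1.0, 0.2],
                    [0.0, 0.2, 0.0]])
seed = cams_to_pseudo_mask([car_cam], [1])
pseudo_mask = expand_regions(seed, [car_cam], [1])
```

Splitting selection (a strict threshold) from expansion (a looser one) keeps the initial seeds precise while letting them recover object extent that a single threshold would cut off.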

**Step 3: Segmentation Model**

* Input: Pseudo-Mask and original image (X) from Training Data 2.

* Output: Final segmented image. The output is labelled "Output if t = T", meaning the segmentation is emitted only at the final time step $T$.
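The diagram does not name the training objective for the segmentation model. A common choice when training on pseudo-masks is a per-pixel cross-entropy loss that skips unreliable pixels. The sketch assumes that convention, including the ignore value 255 (the value PASCAL VOC annotations use for void pixels); the function name and shapes are illustrative.

```python
import numpy as np

def pixel_cross_entropy(logits, target, ignore_index=255):
    """Mean per-pixel cross-entropy between model logits and a pseudo-mask.

    logits: (C, H, W) unnormalised class scores from the segmentation model
    target: (H, W) integer pseudo-mask; pixels equal to ignore_index are skipped
    """
    # Numerically stable log-softmax over the class axis
    shifted = logits - logits.max(axis=0, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=0, keepdims=True))
    valid = target != ignore_index
    if not valid.any():
        return 0.0
    rows, cols = np.nonzero(valid)
    # Log-probability of the pseudo-label at every valid pixel
    picked = log_probs[target[rows, cols], rows, cols]
    return float(-picked.mean())

# Uniform logits over 2 classes; one pixel is marked as ignored
logits = np.zeros((2, 2, 2))
target = np.array([[0, 1], [255, 0]])
loss = pixel_cross_entropy(logits, target)
```

With uniform logits every valid pixel contributes $\log 2$, so the loss equals $\log 2$ regardless of which pseudo-labels are present.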

**Step 4: Concatenation and Weighted Summation**

* Input: Image at time step $t$ ($I_t$) and mask at time step $t$ ($M_t$).

* Process: Concatenation ("Concat") of $I_t$ and $M_t$.

* Mathematical Formula: $\sum_{i=1}^{n} \alpha_i \, C_i \, P(C_i)$, where:

  * $\alpha_i$: weight for the $i$-th class.

  * $C_i$: the $i$-th class ("cat", "car", "bus", and potentially others represented by "...").

  * $P(C_i)$: probability of class $C_i$.

* Output: An image representing the result of the weighted summation.
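The Step 4 formula can be read as follows, assuming each $C_i$ stands for a per-class map the same size as the image and each $P(C_i)$ is a scalar probability; the diagram does not confirm this interpretation. Because $\alpha_i$ and $P(C_i)$ are both scalars per class, they fold into a single coefficient before the maps are summed. The function name and array shapes are hypothetical.

```python
import numpy as np

def weighted_class_sum(alphas, class_maps, class_probs):
    """Compute sum_i alpha_i * C_i * P(C_i).

    alphas:      (n,) weights alpha_i
    class_maps:  (n, H, W) per-class maps standing in for C_i (assumption)
    class_probs: (n,) class probabilities P(C_i)
    Returns an (H, W) image.
    """
    coeffs = np.asarray(alphas) * np.asarray(class_probs)  # alpha_i * P(C_i)
    return np.tensordot(coeffs, np.asarray(class_maps), axes=([0], [0]))

# Two toy classes on a 2x2 grid (values are illustrative only)
alphas = [1.0, 2.0]
class_maps = np.stack([np.ones((2, 2)), 2.0 * np.ones((2, 2))])
class_probs = [0.5, 0.25]
combined = weighted_class_sum(alphas, class_maps, class_probs)
```

Here the coefficients are $1 \times 0.5 = 0.5$ and $2 \times 0.25 = 0.5$, so every pixel equals $0.5 \cdot 1 + 0.5 \cdot 2 = 1.5$.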

### Key Observations

* The pipeline iteratively refines the segmentation through the use of CAMs and pseudo-masks.

* The formula in Step 4 is a weighted sum over classes in which each class term $C_i$ is scaled by its weight $\alpha_i$ and its probability $P(C_i)$; it may be used to refine the segmentation.

* The labels used in the classification model ("car", "person", "bicycle") are different from those used in the weighted summation ("cat", "car", "bus"), indicating a potential shift in the focus of the segmentation task or the use of a broader set of classes.

* The process is cyclical: a feedback loop labelled $t+1$ runs from Step 4 back to Step 1.
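The feedback loop can be sketched as a simple iteration: at each step the image is concatenated with the current mask and passed to a model that produces the next mask, and the mask reached at $t = T$ is the output. This assumes the image stays fixed across steps ($I_t = X$) and treats `model` as a hypothetical callable; the toy model below only demonstrates the control flow, not any real network.

```python
import numpy as np

def refine(image, mask0, model, num_steps):
    """Feedback loop: M_{t+1} = model(Concat(I, M_t)) for t = 0 .. T-1.

    image: (C, H, W) input image, assumed constant across steps
    mask0: (H, W) initial mask M_0
    model: hypothetical callable mapping a (C+1, H, W) array to an (H, W) mask
    Returns the mask at t = T and the list of all masks M_0 .. M_T.
    """
    masks = [mask0]
    for _ in range(num_steps):
        x = np.concatenate([image, masks[-1][None, ...]], axis=0)  # Concat(I_t, M_t)
        masks.append(model(x))
    return masks[-1], masks

# Toy model: move the mask halfway towards the first image channel each step
toy_model = lambda x: 0.5 * x[-1] + 0.5 * x[0]
final_mask, history = refine(np.ones((3, 2, 2)), np.zeros((2, 2)), toy_model, num_steps=3)
# final_mask is 0.875 everywhere (0 -> 0.5 -> 0.75 -> 0.875)
```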

### Interpretation

This diagram appears to depict a weakly or semi-supervised approach to semantic segmentation. The multi-label classification model provides the starting point: its CAMs highlight likely object regions, and these are converted into pseudo-masks that serve as training data for a segmentation model. The feedback loop lets the pipeline refine its segmentation over successive time steps, and the weighted summation in Step 4 suggests a mechanism for combining evidence from different classes to make the segmentation more accurate and robust.

The change in labels between the classification and segmentation stages could indicate a transfer-learning scenario, in which the model is first trained on a limited set of classes and then fine-tuned on a broader one. Pseudo-masks are a common way to exploit images that lack pixel-level annotation, and the diagram as a whole shows classification and segmentation being combined to obtain a reliable semantic understanding of images.