## Diagram: AI Model Evolution Framework

### Overview

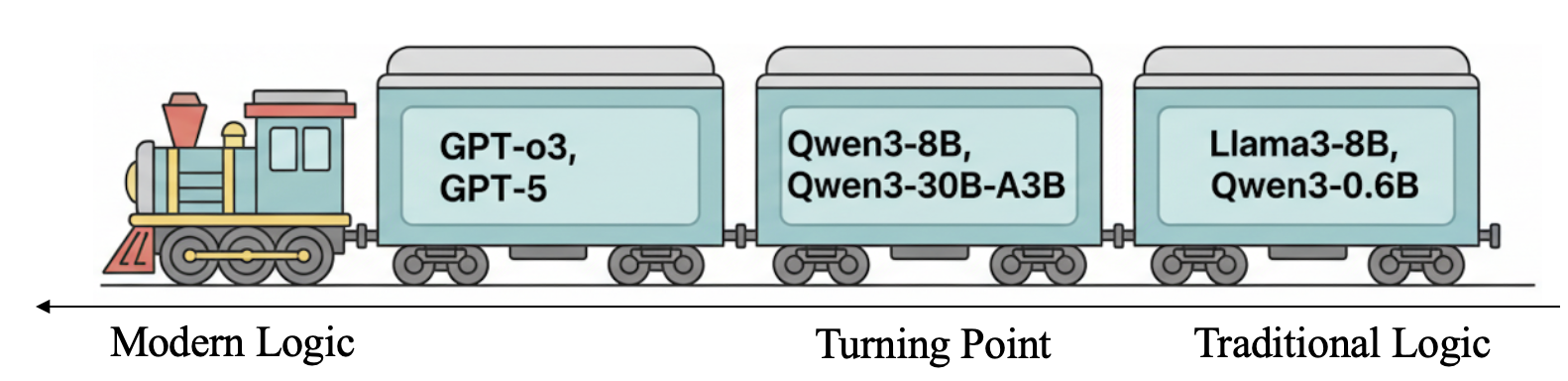

The image depicts a stylized train diagram representing the evolution of AI models across three conceptual phases: "Modern Logic," "Turning Point," and "Traditional Logic." The three carriages behind the train engine are labeled with specific AI model names, suggesting a progression or transition between eras of AI development.

### Components/Axes

- **Axis Labels**:

  - Horizontal axis: "Modern Logic" (left), "Turning Point" (center), "Traditional Logic" (right).

- **Text Embedded in Diagram**:

  - **Train Engine**: No explicit label, but visually distinct as the locomotive.

  - **Carriage 1 (Modern Logic)**: "GPT-o3, GPT-5"

  - **Carriage 2 (Turning Point)**: "Qwen3-8B, Qwen3-30B-A3B"

  - **Carriage 3 (Traditional Logic)**: "Llama3-8B, Qwen3-0.6B"

### Detailed Analysis

- **Model Placement**:

  - **Modern Logic (Left)**: Proprietary frontier models (GPT-o3, GPT-5), presumably the largest shown, although OpenAI has not published their parameter counts. Their placement implies cutting-edge, resource-intensive systems.

  - **Turning Point (Center)**: Mid-sized open-weight models: Qwen3-8B (dense, 8B parameters) and Qwen3-30B-A3B (mixture-of-experts, about 30B total and 3B active parameters), suggesting a transitional phase that balances capability and efficiency.

  - **Traditional Logic (Right)**: Smaller or older-generation models (Llama3-8B, Qwen3-0.6B), indicating a shift toward lightweight, cost-effective solutions.

- **Visual Flow**: The train moves left to right, possibly symbolizing a chronological or developmental progression.

### Key Observations

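1. **Model Size Transition**:

   - The Modern Logic models (GPT-o3, GPT-5) are presumed to be the largest, while the Traditional Logic group contains the smallest model shown (Qwen3-0.6B). The transition is not strictly by size: Llama3-8B in the Traditional Logic group has roughly the same parameter count as Qwen3-8B in the Turning Point group.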

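2. **Mixed Architectures**:

   - The Turning Point pairs a dense 8B model (Qwen3-8B) with a 30B-parameter mixture-of-experts model that activates about 3B parameters per token (Qwen3-30B-A3B), hinting at a transitional mix of scales and architectures.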

3. **No Numerical Data**: The diagram lacks quantitative metrics (e.g., performance scores, training costs), relying on categorical labels for interpretation.

### Interpretation

The diagram metaphorically illustrates the evolution of AI models from resource-heavy, large-scale systems ("Modern Logic") to more efficient, smaller architectures ("Traditional Logic"). The "Turning Point" phase highlights experimentation with mid-sized models, possibly reflecting industry shifts toward optimizing performance while reducing computational demands. Because the diagram contains no numerical data, it supports only conceptual categorization, not precise analysis. All of the named models (OpenAI's o3, labeled "GPT-o3" in the diagram, GPT-5, the Qwen3 family, and Llama 3) are publicly released systems, so the framework describes existing models rather than speculative or future ones. The progression aligns with real-world efforts to balance model capability against computational cost.