## Bar Chart: Model Accuracy Comparison (Generation vs. Multiple-choice)

### Overview

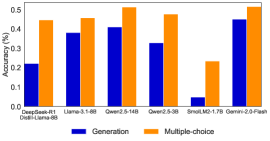

The image is a vertical bar chart comparing the accuracy of six different language models on two distinct task types: "Generation" and "Multiple-choice". The chart displays performance on a scale from 0 to 0.5 (50% accuracy). The models are listed on the x-axis, and their corresponding accuracy scores are represented by paired bars.

### Components/Axes

* **Chart Title:** Partially visible at the top, appears to be "Accuracy (0-0.5)".

* **Y-Axis:**
  * **Label:** "Accuracy (0-0.5)"
  * **Scale:** Linear, ranging from 0.0 to 0.5 with major tick marks at 0.1 intervals (0.0, 0.1, 0.2, 0.3, 0.4, 0.5).

* **X-Axis:**
  * **Label:** None explicitly stated; the tick labels are model names.
  * **Categories (from left to right):**
    1. Qwen2.5-0.5B-Instruct
    2. Llama-3-8B
    3. Qwen2.5-14B
    4. Qwen2.5-7B
    5. SmallThinker-3B-1.7B
    6. Qwen2.5-7B-Plain

* **Legend:**
  * **Position:** Bottom center of the chart.
  * **Items:**
    * **Blue Square:** "Generation"
    * **Orange Square:** "Multiple-choice"

### Detailed Analysis

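The chart presents paired bars for each model. The blue bar represents "Generation" accuracy, and the orange bar represents "Multiple-choice" accuracy. All values are approximate, estimated from the visual height of the bars relative to the y-axis. Two of the multiple-choice estimates (~0.52) sit slightly above the top labeled tick of 0.5, so they are best read as "at or marginally above 0.5".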

| Model Name | Generation Accuracy (Blue Bar, Approx.) | Multiple-choice Accuracy (Orange Bar, Approx.) |
| :--- | :--- | :--- |
| Qwen2.5-0.5B-Instruct | ~0.22 | ~0.45 |
| Llama-3-8B | ~0.39 | ~0.47 |
| Qwen2.5-14B | ~0.40 | ~0.52 |
| Qwen2.5-7B | ~0.33 | ~0.48 |
| SmallThinker-3B-1.7B | ~0.05 | ~0.24 |
| Qwen2.5-7B-Plain | ~0.45 | ~0.52 |

**Trend Verification:**

* **Generation (Blue Bars):** There is no steady trend from left to right. Values rise from ~0.22 to ~0.40 across the first three models, fall to ~0.33 for "Qwen2.5-7B", drop sharply to ~0.05 for "SmallThinker-3B-1.7B" (the lowest), and peak at ~0.45 for "Qwen2.5-7B-Plain" (the highest).

* **Multiple-choice (Orange Bars):** Each value is higher than the same model's Generation score, and the values are more tightly clustered: five of the six models fall between ~0.45 and ~0.52. The full range is ~0.24 ("SmallThinker-3B-1.7B") to ~0.52 ("Qwen2.5-14B" and "Qwen2.5-7B-Plain").
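The trend statements can be checked mechanically against the table. The following is a minimal sketch that assumes the approximate values from the table above; the names `scores` and `summarize` are illustrative and not part of any source material.

```python
# Approximate (generation, multiple_choice) accuracies read off the chart,
# listed in the chart's left-to-right order. These are visual estimates.
scores = {
    "Qwen2.5-0.5B-Instruct": (0.22, 0.45),
    "Llama-3-8B": (0.39, 0.47),
    "Qwen2.5-14B": (0.40, 0.52),
    "Qwen2.5-7B": (0.33, 0.48),
    "SmallThinker-3B-1.7B": (0.05, 0.24),
    "Qwen2.5-7B-Plain": (0.45, 0.52),
}


def summarize(series):
    """Return (lowest, highest, rises_left_to_right) for (model, value) pairs.

    On ties, min/max return the first pair in chart order.
    """
    lowest = min(series, key=lambda pair: pair[1])
    highest = max(series, key=lambda pair: pair[1])
    values = [value for _, value in series]
    rises = all(a <= b for a, b in zip(values, values[1:]))
    return lowest, highest, rises


generation = [(model, gen) for model, (gen, _) in scores.items()]
multiple_choice = [(model, mc) for model, (_, mc) in scores.items()]

gen_summary = summarize(generation)
mc_summary = summarize(multiple_choice)

print("Generation:", gen_summary)
print("Multiple-choice:", mc_summary)
```

Under these estimates neither series rises monotonically from left to right, which is why the trends are better described by their ranges and extremes than by a direction.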

### Key Observations

1. **Consistent Performance Gap:** For every model shown, the accuracy on "Multiple-choice" tasks is higher than on "Generation" tasks. The gap is most pronounced for "Qwen2.5-0.5B-Instruct" (~0.23) and "SmallThinker-3B-1.7B" (~0.19).

2. **Model Performance Hierarchy:** The "Qwen2.5-14B" and "Qwen2.5-7B-Plain" models achieve the highest scores in both categories, with near-identical performance (~0.52) on the multiple-choice task.

3. **Significant Outlier:** The "SmallThinker-3B-1.7B" model is a clear outlier, performing substantially worse than all other models on both task types, especially on the generation task where its accuracy is near zero.

4. **Converging Performance:** The gap between the two task types is generally smaller for models with higher generation accuracy. It is smallest for "Qwen2.5-7B-Plain" (~0.45 vs. ~0.52, a gap of ~0.07), followed by "Llama-3-8B" (~0.39 vs. ~0.47, a gap of ~0.08).
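The gap comparisons in these observations are simple subtractions, sketched below. The input values are the approximate readings from the table, so the resulting gaps are only as reliable as those estimates; the names `readings`, `gaps`, and `gaps_by_size` are illustrative.

```python
# Approximate readings from the chart: (model, generation, multiple_choice).
readings = [
    ("Qwen2.5-0.5B-Instruct", 0.22, 0.45),
    ("Llama-3-8B", 0.39, 0.47),
    ("Qwen2.5-14B", 0.40, 0.52),
    ("Qwen2.5-7B", 0.33, 0.48),
    ("SmallThinker-3B-1.7B", 0.05, 0.24),
    ("Qwen2.5-7B-Plain", 0.45, 0.52),
]

# Gap = multiple-choice accuracy minus generation accuracy, rounded to the
# two decimals that the visual estimates can support.
gaps = [(model, round(mc - gen, 2)) for model, gen, mc in readings]

# Order the models from largest gap to smallest.
gaps_by_size = sorted(gaps, key=lambda item: item[1], reverse=True)

for model, gap in gaps_by_size:
    print(f"{model:<24} gap = {gap:.2f}")
```

Sorting by gap makes it easy to see which models sit at each end of the comparison without re-reading the bar heights.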

### Interpretation

In this chart, every evaluated model scores higher on "Multiple-choice" than on "Generation", with gaps of roughly 0.07 to 0.23. This suggests that constrained, recognition-based tasks (selecting from given options) are easier for these models than open-ended generation, which requires producing an answer without candidate options to choose from.

Among the Qwen2.5 models without the "Plain" suffix, both scores rise with parameter count: from 0.5B (~0.22 / ~0.45) through 7B (~0.33 / ~0.48) to 14B (~0.40 / ~0.52). Size is not the whole story, however. "SmallThinker-3B-1.7B" scores well below the smaller "Qwen2.5-0.5B-Instruct" on both tasks, so its specific architecture or training may be ill-suited to these benchmarks.

The near-parity between "Qwen2.5-14B" and "Qwen2.5-7B-Plain" on the multiple-choice task (both ~0.52) is notable, and "Qwen2.5-7B-Plain" also leads on generation (~0.45 vs. ~0.40). The chart does not say what the "Plain" variant changes, but the result suggests that, on these tasks, a 7B model in the right configuration can match or exceed a 14B model, so configuration matters alongside raw parameter count. Overall, the chart works as a side-by-side comparison of how the same six models fare under two answer formats.