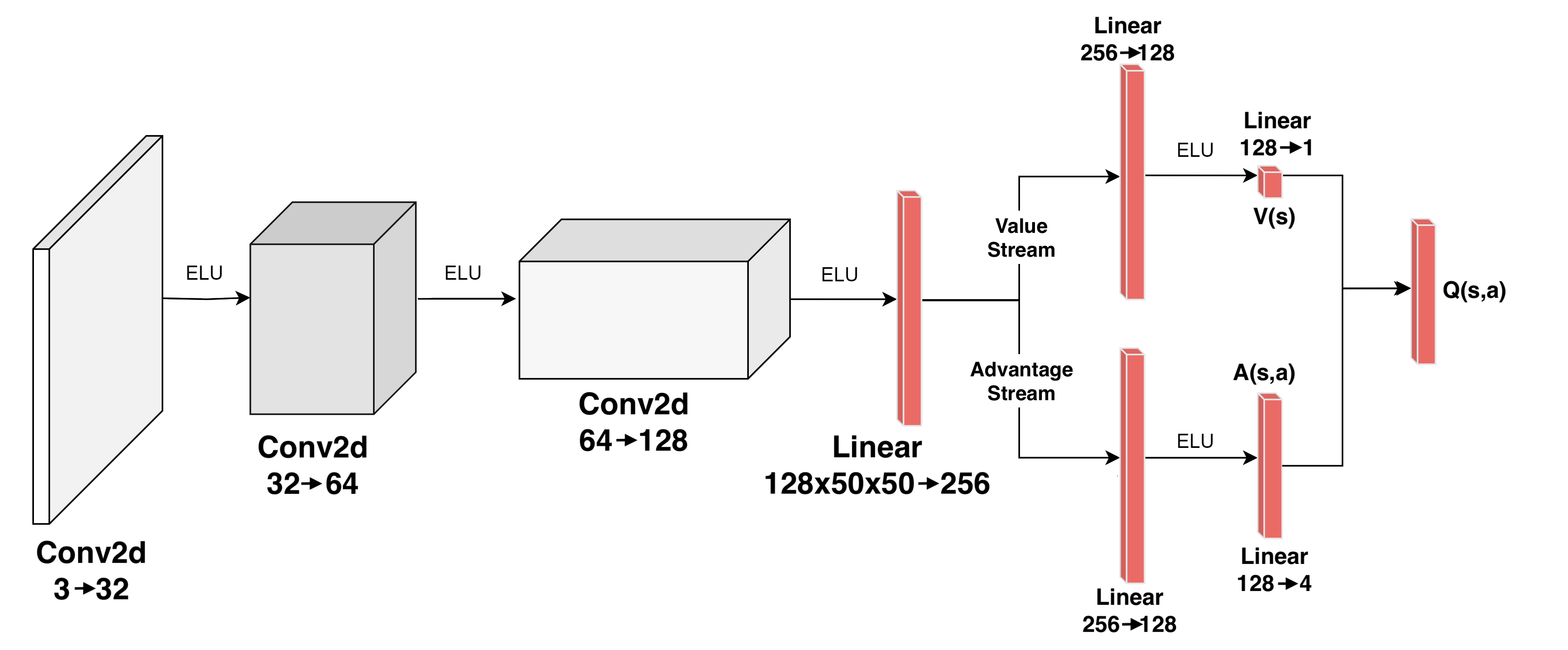

## Neural Network Architecture Diagram: Dueling Deep Q-Network (DQN)

### Overview

The image displays a detailed architectural diagram of a convolutional neural network designed for reinforcement learning, specifically a Dueling Deep Q-Network (DQN). The network processes an input (likely an image state) through a series of convolutional layers, then splits into two parallel streams—a Value Stream and an Advantage Stream—before combining their outputs to produce the final Q-value estimate, Q(s,a). The flow is from left to right.

### Components/Axes

The diagram is composed of interconnected blocks representing layers and operations. All text labels are in English.

**Input Layer (Leftmost):**

* A rectangular prism representing the input tensor.

* Label below: `Conv2d 3→32`. This indicates a 2D convolutional layer taking 3 input channels (e.g., RGB image) and outputting 32 feature maps.

**First Processing Block:**

* An arrow labeled `ELU` (Exponential Linear Unit activation function) points from the input to the next block.

* A 3D rectangular block representing the output of the first convolution.

* Label below: `Conv2d 32→64`. This is a second convolutional layer taking 32 input channels and outputting 64.

**Second Processing Block:**

* An arrow labeled `ELU` points from the previous block to the next.

* A larger 3D rectangular block.

* Label below: `Conv2d 64→128`. This is a third convolutional layer taking 64 input channels and outputting 128.

**Flattening & Initial Linear Layer:**

* An arrow labeled `ELU` points from the last convolutional block to a vertical red bar.

* The red bar represents a fully connected (Linear) layer.

* Label below: `Linear 128x50x50→256`. The label implies that the last convolutional output (presumably 128 channels of 50x50 spatial size) is flattened to 128·50·50 = 320,000 values, which this layer then projects to a 256-dimensional vector.

**Stream Split:**

* The output of the `Linear 128x50x50→256` layer splits into two parallel paths.

* **Upper Path Label:** `Value Stream`

* **Lower Path Label:** `Advantage Stream`

**Value Stream (Upper Path):**

1. A vertical red bar labeled above: `Linear 256→128`.

2. An arrow labeled `ELU` points to a smaller red bar.

3. The smaller red bar is labeled above: `Linear 128→1`.

4. The output of this final layer is labeled `V(s)`, representing the state-value function.

**Advantage Stream (Lower Path):**

1. A vertical red bar labeled below: `Linear 256→128`.

2. An arrow labeled `ELU` points to a smaller red bar.

3. The smaller red bar is labeled below: `Linear 128→4`.

4. The output of this final layer is labeled `A(s,a)`, representing the advantage function for each action.

**Output Combination:**

* Arrows from both `V(s)` and `A(s,a)` converge.

* They point to a final vertical red bar on the far right.

* The output of this final combination is labeled `Q(s,a)`, representing the estimated Q-value for the given state and action.

### Detailed Analysis

**Layer-by-Layer Data Flow:**

1. **Input:** 3-channel image data.

2. **Conv2d (3→32):** Produces 32 feature maps. Activated by ELU.

3. **Conv2d (32→64):** Produces 64 feature maps. Activated by ELU.

4. **Conv2d (64→128):** Produces 128 feature maps of spatial size 50x50 (inferred from the subsequent Linear layer label). Activated by ELU.

5. **Linear (Flatten & Project):** The 128*50*50 = 320,000-dimensional flattened vector is projected to a 256-dimensional hidden representation.

6. **Dueling Split:** The 256-dim vector is fed into two separate streams.

   * **Value Stream:** 256 → 128 (ELU) → 1. Outputs a single scalar V(s).

   * **Advantage Stream:** 256 → 128 (ELU) → 4. Outputs a 4-dimensional vector A(s,a), implying the action space has 4 discrete actions.

7. **Q-Value Calculation:** The final Q(s,a) is computed by combining V(s) and A(s,a). The most common dueling aggregation subtracts the mean advantage: `Q(s,a) = V(s) + A(s,a) - mean over a' of A(s,a')`, where the mean runs over all 4 actions. The diagram shows the combination step but does not specify the exact arithmetic.
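
The combination in step 7 can be sketched in plain Python. This is a minimal illustration that assumes the mean-subtracted aggregation; the diagram does not confirm which variant is used, and the function name `combine_dueling` and the sample numbers are hypothetical.

```python
from typing import List, Sequence


def combine_dueling(value: float, advantages: Sequence[float]) -> List[float]:
    """Combine V(s) and A(s, a) into Q(s, a) using mean-subtracted advantages.

    Assumption: mean subtraction is used; the diagram does not specify this.
    """
    mean_advantage = sum(advantages) / len(advantages)
    return [value + (a - mean_advantage) for a in advantages]


# Hypothetical outputs of the two streams for one state with 4 actions.
q_values = combine_dueling(1.0, [1.0, 2.0, 3.0, 4.0])
print(q_values)  # [-0.5, 0.5, 1.5, 2.5]
```

With this aggregation the mean of the Q-values equals V(s), which removes the ambiguity of shifting a constant between the two streams.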

**Spatial Grounding:**

* Labels are placed directly above or below their corresponding components.

* The `Value Stream` label is positioned above the split point, aligned with the upper path.

* The `Advantage Stream` label is positioned below the split point, aligned with the lower path.

* The final output `Q(s,a)` is positioned to the right of the combining layer, at the far right of the diagram.
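
For reference, the ELU activation labeled on the arrows in the data flow above can be written as a scalar function. This is a sketch of the standard definition; the scale parameter `alpha = 1.0` is an assumed default, since the diagram does not state it.

```python
import math


def elu(x: float, alpha: float = 1.0) -> float:
    """Exponential Linear Unit: identity for x > 0, alpha * (exp(x) - 1) otherwise.

    Assumption: alpha = 1.0; the diagram does not give a value.
    """
    return x if x > 0 else alpha * math.expm1(x)


# Positive inputs pass through; negative inputs saturate toward -alpha.
for x in (-3.0, -1.0, 0.0, 2.0):
    print(x, elu(x))
```

Unlike ReLU, the negative branch is smooth and non-zero, so units with negative pre-activations still pass a gradient.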

### Key Observations

1. **Dueling Architecture:** The defining feature is the split into Value and Advantage streams after the shared 256-unit linear layer that follows the convolutional feature extractor. This is a hallmark of the Dueling DQN architecture, which separates the estimation of state value from the relative advantage of each action.

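2. **Activation Function:** ELU (Exponential Linear Unit) is the only activation labeled in the diagram. ELU arrows follow each of the three convolutional layers and the first linear layer of each stream. No ELU label is listed between the 256-unit layer and the stream split, and the final output layers of each stream are left linear.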

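3. **Dimensionality Reduction:** The first linear layer reduces the flattened convolutional output (128x50x50 = 320,000 values) to 256, a 1,250-fold compression in a single layer. Its weight matrix alone has 320,000 × 256 = 81,920,000 entries, so this layer accounts for nearly all of the fully connected parameters.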

4. **Action Space:** The advantage stream outputs 4 values (`Linear 128→4`), implying that the agent is designed for an environment with four discrete actions.

5. **Visual Representation:** Convolutional layers are shown as 3D blocks, while linear layers are shown as vertical red bars. Arrows indicate the direction of data flow.
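
The sizes of the fully connected layers can be checked with a short calculation. The sketch below assumes every `Linear` layer has a bias term, which the diagram does not state; the convolutional layers are left out because their kernel sizes are not shown. The helper `linear_params` and the layer names are hypothetical.

```python
def linear_params(n_in: int, n_out: int, bias: bool = True) -> int:
    """Parameter count of a fully connected layer (weights plus optional bias)."""
    return n_in * n_out + (n_out if bias else 0)


flattened = 128 * 50 * 50  # 320,000 values entering the first linear layer

# Layer sizes are taken from the diagram labels; bias terms are an assumption.
counts = {
    "trunk (320000 -> 256)": linear_params(flattened, 256),
    "value hidden (256 -> 128)": linear_params(256, 128),
    "value out (128 -> 1)": linear_params(128, 1),
    "advantage hidden (256 -> 128)": linear_params(256, 128),
    "advantage out (128 -> 4)": linear_params(128, 4),
}

total = sum(counts.values())
for name, n in counts.items():
    print(f"{name}: {n:,}")
print(f"total linear parameters: {total:,}")  # 81,986,693
```

Under these assumptions the first linear layer holds more than 99.9% of the fully connected parameters, which is why the flattening step dominates the model size.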

### Interpretation

This diagram illustrates a deep reinforcement learning model with a dueling head. The convolutional front-end is designed to process visual input (e.g., from a video game or camera), extracting hierarchical features through three layers of increasing channel count (32, 64, 128 channels).
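
The diagram does not give kernel size, stride, or padding for the convolutional layers, so the input resolution cannot be read off directly. The sketch below uses the standard convolution output-size formula (dilation 1) to show two hypothetical configurations that both end at a 50x50 feature map; the kernel, stride, and padding values are assumptions chosen for illustration only.

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Spatial output size of a convolution along one dimension (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def after_three_convs(size: int, kernel: int, stride: int, padding: int) -> int:
    """Apply the same hypothetical conv settings three times in a row."""
    for _ in range(3):
        size = conv_out(size, kernel, stride, padding)
    return size


# Hypothetical case 1: 3x3 kernels, stride 1, padding 1 keep the size unchanged.
print(after_three_convs(50, kernel=3, stride=1, padding=1))

# Hypothetical case 2: 3x3 kernels without padding shrink 56 -> 54 -> 52 -> 50.
print(after_three_convs(56, kernel=3, stride=1, padding=0))
```

Both settings are consistent with the `Linear 128x50x50→256` label, so the label alone fixes the size of the last feature map, not the size of the input image.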

The core idea is the dueling structure. By learning V(s) (how good is this state generally?) separately from A(s,a) (how much better is this action compared to others in this state?), the network can learn which states are valuable without having to learn the effect of each action in every single state. This can lead to more stable and efficient learning, especially in environments where the value of a state is often independent of the action taken.

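The output `Q(s,a)` is the final Q-value used for action selection (e.g., via an epsilon-greedy policy). The network's design suggests it is tailored for a task with a small, discrete action space (4 actions) and 3-channel visual observations. The 50x50 figure in the `Linear 128x50x50→256` label is the spatial size of the last convolutional feature map; the input image is also 50x50 only if the convolutions preserve spatial size, which the diagram does not state because kernel size, stride, and padding are not shown. The consistent use of ELU activations may help mitigate the vanishing gradient problem and allow for faster convergence during training.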
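
Epsilon-greedy selection over the four Q-values can be sketched as follows. This is an illustrative example, not part of the diagram; the function name `epsilon_greedy`, the sample Q-values, and the epsilon value are hypothetical.

```python
import random
from typing import Optional, Sequence


def epsilon_greedy(
    q_values: Sequence[float],
    epsilon: float,
    rng: Optional[random.Random] = None,
) -> int:
    """Pick a random action with probability epsilon, else the greedy action."""
    rng = rng or random.Random()
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))  # explore
    # Exploit: index of the largest Q-value (first one wins on ties).
    return max(range(len(q_values)), key=lambda a: q_values[a])


# Hypothetical Q(s, a) for the 4 actions implied by the advantage stream.
q = [0.1, 0.7, 0.3, 0.2]
print(epsilon_greedy(q, epsilon=0.0))  # greedy choice: action 1
print(epsilon_greedy(q, epsilon=0.1, rng=random.Random(0)))
```

Setting `epsilon` to 0 gives purely greedy behavior, which is typical at evaluation time, while a larger value trades reward for exploration during training.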