## [Line Chart]: Reward vs Steps (Mean Min/Max)

### Overview

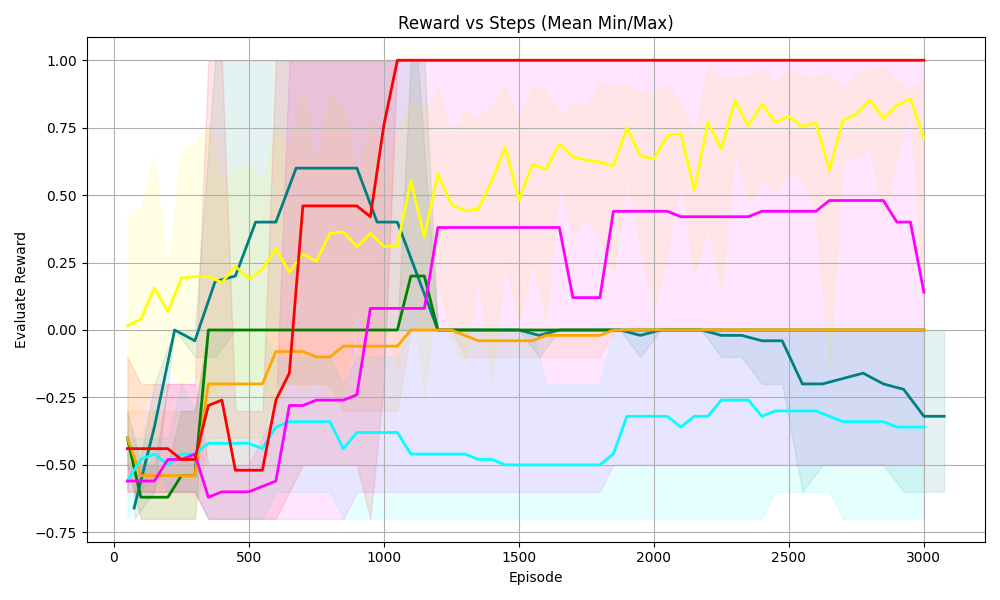

The image is a line chart titled *“Reward vs Steps (Mean Min/Max)”*. Although the title says “Steps,” the x-axis is labeled “Episode,” so “Steps” most likely refers to episodes. The chart plots **“Evaluate Reward”** (y-axis) against **“Episode”** (x-axis) for seven data series. Each series is drawn as a line (presumably the mean, given the title) with a shaded region spanning its *minimum and maximum values*.

### Components/Axes

- **Title**: *“Reward vs Steps (Mean Min/Max)”*

- **X-axis**: Label = *“Episode”*; Ticks at 0, 500, 1000, 1500, 2000, 2500, 3000.

- **Y-axis**: Label = *“Evaluate Reward”*; Ticks at -0.75, -0.50, -0.25, 0.00, 0.25, 0.50, 0.75, 1.00.

- **Data Series (Lines + Shaded Regions)**: Seven colored lines (red, yellow, magenta, teal, green, orange, dark teal), each with a matching shaded region (light red, light yellow, light pink, light cyan, light green, light orange, light blue) indicating its min/max range.
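A chart of this kind is typically built by aggregating several runs per series and drawing the min/max envelope around the mean. The following is a minimal sketch under that assumption; the function names and the `runs` layout (one list of rewards per run, all of equal length) are hypothetical and not taken from the chart's source. `plot_band` expects a Matplotlib `Axes` object and uses its `plot` and `fill_between` methods.

```python
from statistics import mean


def summarize_runs(runs):
    """Return per-episode (means, lows, highs) across runs.

    `runs` is a list of equal-length reward sequences, one per run.
    This layout is an assumption made for illustration.
    """
    if not runs:
        raise ValueError("need at least one run")
    length = len(runs[0])
    if any(len(r) != length for r in runs):
        raise ValueError("all runs must have the same number of points")
    # Transpose so each column holds every run's reward at one episode.
    columns = list(zip(*runs))
    means = [mean(c) for c in columns]
    lows = [min(c) for c in columns]
    highs = [max(c) for c in columns]
    return means, lows, highs


def plot_band(ax, episodes, runs, color, label):
    """Draw the mean line and a translucent min/max band on a Matplotlib Axes."""
    means, lows, highs = summarize_runs(runs)
    ax.plot(episodes, means, color=color, label=label)
    ax.fill_between(episodes, lows, highs, color=color, alpha=0.2)
```

Calling `plot_band` once per series, each with its own color, would reproduce the line-plus-shaded-region layout described above.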

### Detailed Analysis

We analyze each series (color, trend, key points):

1. **Red Line (Light Red Shaded Region)**

- **Trend**: Sharp increase from ~-0.5 (episode 0) to 1.0 (episode ~1000), then flat at 1.0.

- **Key Points**: Reaches the *maximum reward (1.0)* by episode 1000 and maintains it.

2. **Yellow Line (Light Yellow Shaded Region)**

- **Trend**: Fluctuating upward trend, starting at ~0 (episode 0), peaking around 0.8–0.9 by episode 3000.

- **Key Points**: Consistent growth with variability (shaded region shows min/max fluctuations).

3. **Magenta (Pink) Line (Light Pink Shaded Region)**

- **Trend**: Rises from ~-0.5 (episode 0) to ~0.4–0.5 (episode ~1500), then fluctuates, dipping around episode 1750 before stabilizing.

- **Key Points**: Moderate growth, with a temporary drop in reward.

4. **Teal (Cyan) Line (Light Cyan Shaded Region)**

- **Trend**: Fluctuates around -0.5 to -0.25, with a slight upward trend toward episode 3000.

- **Key Points**: Low reward with high variability (shaded region is wide).

5. **Green Line (Light Green Shaded Region)**

- **Trend**: Rises from ~-0.75 (episode 0) to 0 (episode ~500), then flat at 0.

- **Key Points**: Reaches *neutral reward (0)* early and maintains it.

6. **Orange Line (Light Orange Shaded Region)**

- **Trend**: Rises from ~-0.5 (episode 0) to 0 (episode ~500), then flat at 0.

- **Key Points**: Similar to the green line, reaches neutral reward early.

7. **Dark Teal Line (Light Blue Shaded Region)**

- **Trend**: Rises from ~-0.75 (episode 0) to ~0.6 (episode ~1000), then drops to ~-0.25 (episode 3000).

- **Key Points**: Initial growth followed by a decline, with a wide shaded region (high variability).

### Key Observations

- **Red Line**: Outperforms all others, reaching the *maximum reward (1.0)* by about episode 1000 and holding it through episode 3000.

- **Yellow Line**: Shows consistent growth with variability, approaching high reward (~0.8–0.9) by episode 3000.

- **Green/Orange Lines**: Stabilize at *neutral reward (0)* early, with minimal variability.

- **Teal Line**: Remains in the low reward range with high variability.

- **Dark Teal Line**: Initial success followed by decline, indicating potential instability.

- **Shaded Regions**: Wide for teal and dark teal (high variability), narrow for red (low variability after episode 1000).
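The wide-versus-narrow comparison above can be made quantitative by averaging the width of each min/max band. This is a rough sketch only: the helper names are hypothetical, and the numbers in the example are illustrative placeholders, not values read from the chart.

```python
def mean_band_width(lows, highs):
    """Average vertical width (max minus min) of a shaded band."""
    if len(lows) != len(highs) or not lows:
        raise ValueError("lows and highs must be non-empty and equal length")
    widths = [hi - lo for lo, hi in zip(lows, highs)]
    if any(w < 0 for w in widths):
        raise ValueError("each high must be >= its matching low")
    return sum(widths) / len(widths)


def rank_by_band_width(bands):
    """Return series names sorted from narrowest to widest band.

    `bands` maps a series name to a (lows, highs) pair.
    """
    return sorted(bands, key=lambda name: mean_band_width(*bands[name]))


# Illustrative placeholder values, not measurements from the figure.
example_bands = {
    "narrow_series": ([0.9, 1.0], [1.0, 1.0]),
    "wide_series": ([-0.75, -0.5], [0.0, 0.25]),
}
ranking = rank_by_band_width(example_bands)
```

A ranking like this turns the visual impression of variability into a number that can be compared across series or across time windows, for example before and after a plateau.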

### Interpretation

This chart likely represents the performance of different reinforcement learning agents (or algorithms) over episodes, where *“Evaluate Reward”* measures their success.

- The **red line**’s rapid rise to maximum reward suggests a *highly effective agent* (e.g., a well-tuned algorithm).

- The **yellow line**’s steady growth indicates a *reliable, if slower, agent* (consistent improvement over time).

- **Green/orange lines** stabilize at neutral reward, possibly indicating agents that learn to avoid negative rewards but do not excel.

- The **teal line**’s low, variable reward suggests a *struggling agent* (poor performance with high unpredictability).

- The **dark teal line**’s decline hints at *overfitting or instability* (initial success followed by failure).

The shaded regions (min/max) show the range of performance for each series: the red band is narrow once the line plateaus (consistent), while the teal and dark teal bands are the widest (unpredictable). Together, these patterns help identify which agents are most effective, stable, or prone to failure over time, which matters when tuning reinforcement learning systems.
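The rise-then-decline pattern noted for the dark teal line can be flagged by comparing a series' peak mean reward with its final value. The sketch below uses the approximate readings quoted in the analysis above (about -0.75, 0.6, and -0.25 for dark teal; about -0.5, 1.0, and 1.0 for red). The function name is hypothetical, and the three-point series are a coarse stand-in for the full curves.

```python
def peak_and_final_drop(episodes, mean_rewards):
    """Return (episode of peak, peak reward, peak minus final reward)."""
    if len(episodes) != len(mean_rewards) or not mean_rewards:
        raise ValueError("inputs must be non-empty and equal length")
    # Index of the highest mean reward (first occurrence on ties).
    peak_index = max(range(len(mean_rewards)), key=mean_rewards.__getitem__)
    peak = mean_rewards[peak_index]
    return episodes[peak_index], peak, peak - mean_rewards[-1]


# Approximate values as described in the text, not exact measurements.
dark_teal = peak_and_final_drop([0, 1000, 3000], [-0.75, 0.6, -0.25])
red = peak_and_final_drop([0, 1000, 3000], [-0.5, 1.0, 1.0])
```

A large drop from peak, as for dark teal here, is consistent with the instability reading, while a drop near zero, as for red, matches a series that holds its best performance.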
