

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Line Charts: Model Accuracy vs. Round

### Overview

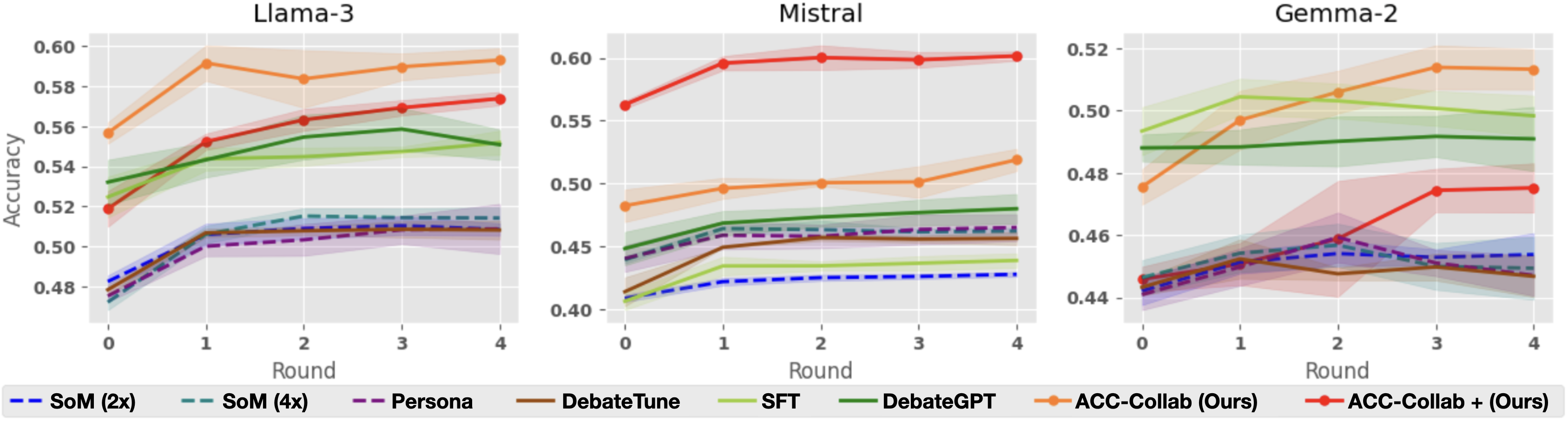

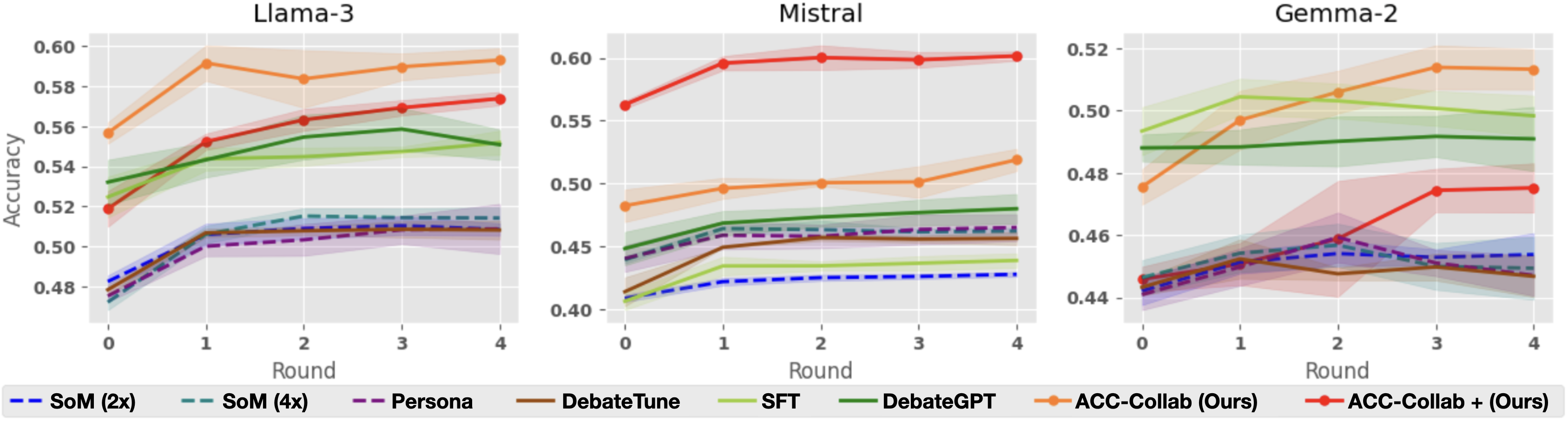

The image presents three line charts comparing the accuracy of different models (Llama-3, Mistral, and Gemma-2) across several rounds of interaction or training. Each chart displays the performance of multiple methods, including "SoM (2x)", "SoM (4x)", "Persona", "DebateTune", "SFT", "DebateGPT", "ACC-Collab (Ours)", and "ACC-Collab + (Ours)". The x-axis represents the round number (0 to 4), and the y-axis represents the accuracy score. Shaded regions around each line indicate the uncertainty or variance in the accuracy.

### Components/Axes

* **Titles:**

    * Top-left chart: "Llama-3"

    * Top-middle chart: "Mistral"

    * Top-right chart: "Gemma-2"

* **X-axis:** "Round", with markers at 0, 1, 2, 3, and 4.

* **Y-axis:** "Accuracy", ranging from 0.48 to 0.60 for Llama-3, 0.40 to 0.60 for Mistral, and 0.44 to 0.52 for Gemma-2.

* **Legend:** Located at the bottom of the image, associating line colors and styles with specific methods:

    * Blue dashed line: "SoM (2x)"

    * Teal dashed line: "SoM (4x)"

    * Purple dashed line: "Persona"

    * Brown solid line: "DebateTune"

    * Light green solid line: "SFT"

    * Dark green solid line: "DebateGPT"

    * Orange solid line: "ACC-Collab (Ours)"

    * Red solid line: "ACC-Collab + (Ours)"

### Detailed Analysis

#### Llama-3 Chart

* **SoM (2x)** (Blue dashed): Starts at approximately 0.48 and increases to around 0.51, then remains relatively stable.

* **SoM (4x)** (Teal dashed): Similar to SoM (2x), starting around 0.48 and increasing to approximately 0.51, then stabilizing.

* **Persona** (Purple dashed): Starts around 0.48 and increases to approximately 0.50, then stabilizes.

* **DebateTune** (Brown solid): Starts around 0.48 and increases to approximately 0.51, then stabilizes.

* **SFT** (Light green solid): Starts around 0.52 and increases to approximately 0.56.

* **DebateGPT** (Dark green solid): Starts around 0.53 and increases to approximately 0.55.

* **ACC-Collab (Ours)** (Orange solid): Starts around 0.56, increases to approximately 0.59 at round 1, then decreases slightly to approximately 0.58 by round 4.

* **ACC-Collab + (Ours)** (Red solid): Starts around 0.52, increases to approximately 0.57 by round 4.

#### Mistral Chart

* **SoM (2x)** (Blue dashed): Starts around 0.40 and increases to approximately 0.43, then remains relatively stable.

* **SoM (4x)** (Teal dashed): Starts around 0.45 and increases slightly to approximately 0.47, then stabilizes.

* **Persona** (Purple dashed): Starts around 0.45 and increases slightly to approximately 0.47, then stabilizes.

* **DebateTune** (Brown solid): Starts around 0.42 and increases to approximately 0.46, then stabilizes.

* **SFT** (Light green solid): Starts around 0.44 and increases slightly to approximately 0.46, then stabilizes.

* **DebateGPT** (Dark green solid): Starts around 0.45 and increases slightly to approximately 0.48, then stabilizes.

* **ACC-Collab (Ours)** (Orange solid): Starts around 0.48, increases to approximately 0.50 by round 1, then remains relatively stable.

* **ACC-Collab + (Ours)** (Red solid): Starts around 0.57, increases to approximately 0.60 by round 1, then remains relatively stable.

#### Gemma-2 Chart

* **SoM (2x)** (Blue dashed): Starts around 0.44 and increases to approximately 0.46, then remains relatively stable.

* **SoM (4x)** (Teal dashed): Starts around 0.48 and increases slightly to approximately 0.51, then stabilizes.

* **Persona** (Purple dashed): Starts around 0.44 and increases slightly to approximately 0.46, then stabilizes.

* **DebateTune** (Brown solid): Starts around 0.44 and increases slightly to approximately 0.45, then stabilizes.

* **SFT** (Light green solid): Starts around 0.48 and increases slightly to approximately 0.49, then stabilizes.

* **DebateGPT** (Dark green solid): Starts around 0.49 and increases slightly to approximately 0.50, then stabilizes.

* **ACC-Collab (Ours)** (Orange solid): Starts around 0.48, increases to approximately 0.52 by round 4.

* **ACC-Collab + (Ours)** (Red solid): Starts around 0.45, increases to approximately 0.48 by round 3, then decreases slightly to approximately 0.47 by round 4.

### Key Observations

* **ACC-Collab + (Ours)** generally performs well across all models, often achieving the highest accuracy or a close second.

* **ACC-Collab (Ours)** also shows strong performance, particularly in the Llama-3 and Gemma-2 models.

* **SoM (2x), SoM (4x), Persona, and DebateTune** tend to have lower and more stable accuracy scores compared to the other methods.

* The accuracy improvements tend to occur in the initial rounds (0-1), with diminishing returns in later rounds.

* The shaded regions indicate varying degrees of uncertainty in the accuracy, with some methods showing more consistent performance than others.
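The diminishing-returns observation can be checked with simple differences. The sketch below is illustrative only: it uses the approximate Llama-3 readings for ACC-Collab (Ours) quoted in the analysis above (rounds 0, 1, and 4), and the `gain` helper is a hypothetical name introduced here. Rounds 2 and 3 are not reported in that analysis, so they are left out rather than guessed.

```python
# Approximate Llama-3 readings for ACC-Collab (Ours) from the analysis above.
# Only rounds 0, 1 and 4 are reported there; rounds 2 and 3 are not filled in.
readings = {0: 0.56, 1: 0.59, 4: 0.58}


def gain(series, start, end):
    """Accuracy change between two reported rounds, rounded to 3 decimals."""
    return round(series[end] - series[start], 3)


early_gain = gain(readings, 0, 1)  # change over the first round
late_gain = gain(readings, 1, 4)   # change over rounds 1 to 4 combined

print(f"round 0 -> 1: {early_gain:+.3f}")
print(f"round 1 -> 4: {late_gain:+.3f}")
```

With these readings, the whole improvement happens in the first round, and the later rounds together contribute a small decline.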

### Interpretation

The charts suggest that "ACC-Collab + (Ours)" and "ACC-Collab (Ours)" are effective methods for improving model accuracy across different language models (Llama-3, Mistral, and Gemma-2). The relatively flat performance of "SoM (2x)", "SoM (4x)", "Persona", and "DebateTune" indicates that these methods may not be as effective in this context. The initial rounds of interaction or training appear to be the most crucial for achieving accuracy gains. The uncertainty regions highlight the variability in performance, which could be due to factors such as data sampling or model initialization. The data demonstrates the relative effectiveness of different training or fine-tuning approaches for language models.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Line Chart: Accuracy vs. Round for Different Models and Training Methods

### Overview

The image presents three line charts, each displaying the accuracy of a different language model (Llama-3, Mistral, and Gemma-2) across five rounds of evaluation (0 to 4). Each chart shows the performance of several training methods: SoM (2x and 4x), Persona, DebateTune, SFT, DebateGPT, ACC-Collab (Ours), and ACC-Collab + (Ours). The y-axis represents accuracy, and the x-axis represents the round number.

### Components/Axes

* **X-axis:** "Round" with values 0, 1, 2, 3, and 4.

* **Y-axis:** "Accuracy" with a scale ranging from approximately 0.44 to 0.60.

* **Models (Charts):** Llama-3, Mistral, Gemma-2. Each model has its own dedicated chart.

* **Training Methods (Legend):**

    * SoM (2x) - Dashed light blue line

    * SoM (4x) - Dashed dark blue line

    * Persona - Dashed purple line

    * DebateTune - Solid yellow line

    * SFT - Solid dark green line

    * DebateGPT - Solid light green line

    * ACC-Collab (Ours) - Solid orange line

    * ACC-Collab + (Ours) - Solid red line

### Detailed Analysis

**Llama-3 Chart:**

* **ACC-Collab (Ours):** Starts at approximately 0.58, decreases slightly to 0.57 at round 1, then remains relatively stable around 0.57-0.58 through round 4.

* **ACC-Collab + (Ours):** Starts at approximately 0.57, increases to a peak of approximately 0.59 at round 1, then decreases to approximately 0.57 by round 4.

* **DebateGPT:** Starts at approximately 0.53, increases to approximately 0.56 at round 1, then remains relatively stable around 0.55-0.56 through round 4.

* **DebateTune:** Starts at approximately 0.54, increases to approximately 0.56 at round 1, then remains relatively stable around 0.55-0.56 through round 4.

* **SFT:** Starts at approximately 0.52, increases to approximately 0.54 at round 1, then remains relatively stable around 0.53-0.54 through round 4.

* **Persona:** Starts at approximately 0.49, increases to approximately 0.51 at round 1, then remains relatively stable around 0.50-0.51 through round 4.

* **SoM (4x):** Starts at approximately 0.49, increases to approximately 0.51 at round 1, then remains relatively stable around 0.50-0.51 through round 4.

* **SoM (2x):** Starts at approximately 0.48, increases to approximately 0.50 at round 1, then remains relatively stable around 0.49-0.50 through round 4.

**Mistral Chart:**

* **ACC-Collab (Ours):** Starts at approximately 0.60, decreases to approximately 0.58 at round 1, then remains relatively stable around 0.58-0.60 through round 4.

* **ACC-Collab + (Ours):** Starts at approximately 0.59, remains relatively stable around 0.58-0.59 through round 4.

* **DebateGPT:** Starts at approximately 0.49, increases to approximately 0.51 at round 1, then remains relatively stable around 0.50-0.51 through round 4.

* **DebateTune:** Starts at approximately 0.48, increases to approximately 0.50 at round 1, then remains relatively stable around 0.49-0.50 through round 4.

* **SFT:** Starts at approximately 0.46, increases to approximately 0.48 at round 1, then remains relatively stable around 0.47-0.48 through round 4.

* **Persona:** Starts at approximately 0.45, increases to approximately 0.47 at round 1, then remains relatively stable around 0.46-0.47 through round 4.

* **SoM (4x):** Starts at approximately 0.44, increases to approximately 0.46 at round 1, then remains relatively stable around 0.45-0.46 through round 4.

* **SoM (2x):** Starts at approximately 0.43, increases to approximately 0.45 at round 1, then remains relatively stable around 0.44-0.45 through round 4.

**Gemma-2 Chart:**

* **ACC-Collab (Ours):** Starts at approximately 0.51, decreases slightly to approximately 0.50 at round 1, then remains relatively stable around 0.50-0.51 through round 4.

* **ACC-Collab + (Ours):** Starts at approximately 0.50, remains relatively stable around 0.49-0.50 through round 4.

* **DebateGPT:** Starts at approximately 0.48, increases to approximately 0.49 at round 1, then remains relatively stable around 0.48-0.49 through round 4.

* **DebateTune:** Starts at approximately 0.47, increases to approximately 0.48 at round 1, then remains relatively stable around 0.47-0.48 through round 4.

* **SFT:** Starts at approximately 0.46, increases to approximately 0.47 at round 1, then remains relatively stable around 0.46-0.47 through round 4.

* **Persona:** Starts at approximately 0.45, increases to approximately 0.46 at round 1, then remains relatively stable around 0.45-0.46 through round 4.

* **SoM (4x):** Starts at approximately 0.44, increases to approximately 0.45 at round 1, then remains relatively stable around 0.44-0.45 through round 4.

* **SoM (2x):** Starts at approximately 0.43, increases to approximately 0.44 at round 1, then remains relatively stable around 0.43-0.44 through round 4.

### Key Observations

* ACC-Collab (Ours) achieves the highest accuracy on every model in most rounds (ACC-Collab + (Ours) edges ahead on Llama-3 at round 1), although the gains are modest.

* ACC-Collab + (Ours) generally performs slightly worse than ACC-Collab (Ours).

* SoM (2x) and SoM (4x) consistently have the lowest accuracy across all models.

* The accuracy curves for most training methods tend to plateau after round 1, indicating diminishing returns from further rounds.

* Mistral consistently shows the highest overall accuracy compared to Llama-3 and Gemma-2.

### Interpretation

The data suggests that the "ACC-Collab (Ours)" training method is the most effective for improving the accuracy of these language models, but the improvements are not substantial. The relatively flat accuracy curves after round 1 indicate that the models may be reaching a point of diminishing returns with further rounds. The differences in initial accuracy and overall performance between the models (Llama-3, Mistral, and Gemma-2) suggest inherent differences in their architectures or pre-training data. The consistently low performance of SoM (2x) and SoM (4x) suggests that this training method is less effective than the others. The slight decrease in accuracy for ACC-Collab (Ours) in Llama-3 and Gemma-2 between round 0 and round 1 could indicate overfitting or the need for regularization techniques. The data highlights the importance of choosing the right training method and model for a specific task, as well as the potential for diminishing returns from additional rounds.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Line Charts: Performance of Various Methods Across Three AI Models

### Overview

The image displays three side-by-side line charts, each tracking the "Accuracy" (y-axis) of eight different methods over five sequential "Rounds" (x-axis, 0-4). The charts are titled by the base AI model being evaluated: "Llama-3", "Mistral", and "Gemma-2". A shared legend at the bottom identifies the eight methods, which are distinguished by line color and style (solid or dashed). Each data series includes a shaded region, likely representing confidence intervals or standard deviation.

### Components/Axes

* **Titles:** Three individual chart titles: "Llama-3" (left), "Mistral" (center), "Gemma-2" (right).

* **Y-Axis:** Labeled "Accuracy". The scale varies per chart:

    * Llama-3: 0.48 to 0.60 (increments of 0.02).

    * Mistral: 0.40 to 0.60 (increments of 0.05).

    * Gemma-2: 0.44 to 0.52 (increments of 0.02).

* **X-Axis:** Labeled "Round" for all three charts, with markers at 0, 1, 2, 3, and 4.

* **Legend:** Located at the bottom of the entire figure, spanning its width. It defines the following eight methods:

    1. `SoM (2x)`: Blue dashed line.

    2. `SoM (4x)`: Teal dashed line.

    3. `Persona`: Purple dashed line.

    4. `DebateTune`: Brown solid line.

    5. `SFT`: Light green solid line.

    6. `DebateGPT`: Dark green solid line.

    7. `ACC-Collab (Ours)`: Orange solid line with circular markers.

    8. `ACC-Collab + (Ours)`: Red solid line with circular markers.
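For anyone re-plotting the figure, the legend above can be captured as a small lookup table. This is a minimal sketch, not the original plotting code: the `LEGEND` dictionary and the `methods_with_style` helper are hypothetical names, and the color strings are approximate named colors chosen to match the description.

```python
# Legend entries as described above: method name -> (color, line style).
# Color names are approximate named colors chosen for illustration.
LEGEND = {
    "SoM (2x)": ("blue", "dashed"),
    "SoM (4x)": ("teal", "dashed"),
    "Persona": ("purple", "dashed"),
    "DebateTune": ("brown", "solid"),
    "SFT": ("lightgreen", "solid"),
    "DebateGPT": ("darkgreen", "solid"),
    "ACC-Collab (Ours)": ("orange", "solid"),
    "ACC-Collab + (Ours)": ("red", "solid"),
}


def methods_with_style(style):
    """Return the method names drawn with the given line style, in legend order."""
    return [name for name, (_, line_style) in LEGEND.items() if line_style == style]


dashed = methods_with_style("dashed")
solid = methods_with_style("solid")

print("dashed:", dashed)
print("solid:", solid)
```

Grouping by line style makes it easy to see that, per this legend, three methods are dashed and five are solid.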

### Detailed Analysis

**Chart 1: Llama-3**

* **Trend Verification & Data Points:**

    * `ACC-Collab (Ours)` (Orange): Starts highest at ~0.555 (Round 0), peaks at ~0.592 (Round 1), then stabilizes around 0.585-0.592. It is the top-performing method throughout.

    * `ACC-Collab + (Ours)` (Red): Starts at ~0.518, shows a steady upward trend to ~0.573 by Round 4, becoming the second-highest.

    * `DebateGPT` (Dark Green): Starts at ~0.532, rises to ~0.558 (Round 3), then dips slightly to ~0.551 (Round 4).

    * `SFT` (Light Green): Starts at ~0.525, rises gently to ~0.548 (Round 3), then dips to ~0.540.

    * The four lowest-scoring methods (`SoM (2x)`, `SoM (4x)`, `Persona`, and the solid-line `DebateTune`) cluster tightly between ~0.475 and ~0.515. They all show a sharp initial increase from Round 0 to 1, then plateau. `SoM (4x)` (Teal) appears marginally highest within this cluster by Round 4 (~0.515).

**Chart 2: Mistral**

* **Trend Verification & Data Points:**

    * `ACC-Collab + (Ours)` (Red): Dominates this chart. Starts at ~0.562, jumps to ~0.598 (Round 1), and remains flat at ~0.600 through Round 4.

    * `ACC-Collab (Ours)` (Orange): Starts at ~0.482, rises steadily to ~0.518 (Round 4). It is clearly separated below the red line but above all others.

    * `DebateGPT` (Dark Green): Starts at ~0.448, rises to ~0.478 (Round 4).

    * `SFT` (Light Green): Starts lowest at ~0.405, rises to ~0.438 (Round 4).

    * The low-scoring baseline cluster (`SoM (2x)`, `SoM (4x)`, `Persona`, and the solid-line `DebateTune`) is again tightly grouped, starting between ~0.410-0.440 and ending between ~0.455-0.465. `DebateTune` (Brown) is at the bottom of this cluster.

**Chart 3: Gemma-2**

* **Trend Verification & Data Points:**

    * `ACC-Collab (Ours)` (Orange): Starts at ~0.476, shows a strong upward trend to peak at ~0.515 (Round 3), then dips slightly to ~0.513. It is the top performer from Round 2 onward.

    * `SFT` (Light Green): Starts highest at ~0.493, peaks at ~0.505 (Round 1), then declines to ~0.498 (Round 4).

    * `DebateGPT` (Dark Green): Starts at ~0.488, remains very flat, ending at ~0.490.

    * `ACC-Collab + (Ours)` (Red): Starts low at ~0.445, shows a late surge from Round 2 (~0.458) to Round 3 (~0.475), ending at ~0.476.

    * The low-scoring baseline cluster (`SoM (2x)`, `SoM (4x)`, `Persona`, and the solid-line `DebateTune`) starts between ~0.440-0.448. These methods show modest gains, ending between ~0.448-0.455. `DebateTune` (Brown) shows a notable dip at Round 2 before recovering.

### Key Observations

1. **Model-Dependent Performance:** The relative effectiveness of the methods varies significantly by base model. `ACC-Collab + (Ours)` is dominant on Mistral, competitive on Llama-3, but underperforms on Gemma-2 until later rounds. `ACC-Collab (Ours)` is consistently strong, leading on Llama-3 and Gemma-2.

2. **Clustering of Baselines:** The three dashed-line methods (`SoM (2x)`, `SoM (4x)`, `Persona`) and the solid-line `DebateTune` consistently form a low-performing cluster with very similar trajectories across all three models.

3. **Round 0 to 1 Jump:** Nearly all methods show their most significant accuracy gain between Round 0 and Round 1, after which improvements become more gradual or plateau.

4. **Uncertainty Bands:** The shaded confidence intervals are notably wider for the `ACC-Collab` variants (orange and red lines), suggesting higher variance in their performance compared to the more tightly banded baseline methods.
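The model-dependent ordering can be made concrete by ranking the methods at Round 4 using the approximate values quoted in the detailed analysis above. This is a sketch under stated assumptions: the values are visual estimates from that analysis, the Llama-3 figure for ACC-Collab takes the lower end (0.585) of the reported 0.585-0.592 range, and `rank_methods` is a hypothetical helper. The clustered low-scoring baselines are omitted because only ranges are reported for them.

```python
# Approximate Round 4 accuracies read from the detailed analysis above.
# Assumption: Llama-3 ACC-Collab uses 0.585, the lower end of the reported range.
ROUND4 = {
    "Llama-3": {"ACC-Collab": 0.585, "ACC-Collab +": 0.573, "DebateGPT": 0.551, "SFT": 0.540},
    "Mistral": {"ACC-Collab": 0.518, "ACC-Collab +": 0.600, "DebateGPT": 0.478, "SFT": 0.438},
    "Gemma-2": {"ACC-Collab": 0.513, "ACC-Collab +": 0.476, "DebateGPT": 0.490, "SFT": 0.498},
}


def rank_methods(scores):
    """Return method names sorted from highest to lowest accuracy."""
    return sorted(scores, key=scores.get, reverse=True)


rankings = {model: rank_methods(scores) for model, scores in ROUND4.items()}

for model, order in rankings.items():
    print(f"{model}: {' > '.join(order)}")
```

The ordering changes from chart to chart: ACC-Collab + ranks first on Mistral but last among these four on Gemma-2, which is the model sensitivity noted in the first observation.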

### Interpretation

The data suggests that the proposed methods, **ACC-Collab (Ours)** and its variant **ACC-Collab + (Ours)**, generally outperform the baseline techniques (SoM, Persona, DebateTune, SFT, DebateGPT) in multi-round accuracy evaluation across different large language models. The "Ours" label indicates these are the novel contributions of the paper or work from which this figure is taken.

The performance hierarchy is not absolute but is **model-sensitive**. This implies that the optimal collaborative or debate strategy may depend on the underlying capabilities or biases of the base model (Llama-3 vs. Mistral vs. Gemma-2). The consistent underperformance and clustering of the SoM, Persona, and DebateTune baselines suggest they represent a similar class of simpler or less effective approaches.

The significant improvement from Round 0 to Round 1 across the board indicates that even a single round of interaction or refinement provides substantial benefit over the base model's initial output. The plateauing effect suggests diminishing returns with additional rounds for most methods. The wider variance for the ACC-Collab methods might be a trade-off for their higher peak performance, indicating they are potentially more sensitive to initial conditions or input variations.
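The figure does not state how the shaded regions were computed. One common convention, assumed here purely for illustration, is mean accuracy plus or minus one sample standard deviation over repeated runs. The sketch below uses made-up run values (not read from the figure) and a hypothetical `band` helper to show how band width reflects run-to-run variance.

```python
from statistics import mean, stdev

# Hypothetical accuracies from three repeated runs at each round (not from the figure).
runs_by_round = {
    0: [0.50, 0.52, 0.54],
    1: [0.55, 0.56, 0.57],
}


def band(samples):
    """Return (lower, center, upper) as mean -/+ one sample standard deviation."""
    center = mean(samples)
    spread = stdev(samples)
    return (center - spread, center, center + spread)


bands = {rnd: band(samples) for rnd, samples in runs_by_round.items()}
widths = {rnd: upper - lower for rnd, (lower, _, upper) in bands.items()}

for rnd, (lower, center, upper) in bands.items():
    print(f"round {rnd}: {center:.3f} (band {lower:.3f} to {upper:.3f})")
```

In this toy data the round 0 runs are more spread out, so their band is wider, which is the kind of difference the wider ACC-Collab bands would indicate if the figure follows this convention.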


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Graphs: Model Performance Across Rounds (Llama-3, Mistral, Gemma-2)

### Overview

The image contains three line graphs comparing the accuracy of different methods across five evaluation rounds (0 to 4). Each graph represents a different base model (Llama-3, Mistral, Gemma-2), with multiple data series showing performance trends for various training methodologies. The graphs use colored lines with shaded confidence intervals to represent accuracy over rounds.

### Components/Axes

- **X-axis**: "Round" (0 to 4), representing evaluation rounds

- **Y-axis**: "Accuracy" (0.40 to 0.60), measured as a decimal

- **Legends**: Located at the bottom of each graph, mapping line colors and styles to methods:

    - Blue dashed: SoM (2x)

    - Teal dashed: SoM (4x)

    - Purple dashed: Persona

    - Brown solid: DebateTune

    - Green solid: SFT

    - Dark green solid: DebateGPT

    - Orange solid: ACC-Collab (Ours)

    - Red solid: ACC-Collab + (Ours)

### Detailed Analysis

#### Llama-3 Graph

- **Orange line (ACC-Collab (Ours))**: Starts at 0.56 (Round 0), peaks at 0.59 (Round 4)

- **Dark green solid (DebateGPT)**: Starts at 0.52 (Round 0), peaks at 0.56 (Round 2), then declines to 0.55 (Round 4)

- **Blue dashed (SoM 2x)**: Starts at 0.48 (Round 0), rises to 0.51 (Round 2), then plateaus

- **Teal dashed (SoM 4x)**: Starts at 0.47 (Round 0), rises to 0.51 (Round 2), then declines to 0.50 (Round 4)

- **Purple dashed (Persona)**: Starts at 0.49 (Round 0), rises to 0.51 (Round 2), then declines to 0.50 (Round 4)

- **Brown solid (DebateTune)**: Starts at 0.49 (Round 0), rises to 0.51 (Round 2), then declines to 0.50 (Round 4)

- **Green solid (SFT)**: Starts at 0.52 (Round 0), rises to 0.55 (Round 2), then declines to 0.54 (Round 4)

#### Mistral Graph

- **Red line (ACC-Collab + (Ours))**: Starts at 0.55 (Round 0), rises to 0.60 (Round 1), plateaus at 0.59-0.60

- **Orange line (ACC-Collab (Ours))**: Starts at 0.49 (Round 0), rises to 0.50 (Round 1), plateaus at 0.50-0.51

- **Blue dashed (SoM 2x)**: Starts at 0.40 (Round 0), rises to 0.43 (Round 4)

- **Teal dashed (SoM 4x)**: Starts at 0.45 (Round 0), rises to 0.47 (Round 1), plateaus at 0.47-0.48

- **Purple dashed (Persona)**: Starts at 0.44 (Round 0), rises to 0.47 (Round 1), plateaus at 0.47-0.48

- **Brown solid (DebateTune)**: Starts at 0.43 (Round 0), rises to 0.46 (Round 1), plateaus at 0.46-0.47

- **Green solid (SFT)**: Starts at 0.42 (Round 0), rises to 0.45 (Round 1), plateaus at 0.45-0.46

- **Dark green solid (DebateGPT)**: Starts at 0.45 (Round 0), rises to 0.48 (Round 1), plateaus at 0.48-0.49

#### Gemma-2 Graph

- **Orange line (ACC-Collab (Ours))**: Starts at 0.48 (Round 0), rises to 0.51 (Round 4)

- **Red line (ACC-Collab + (Ours))**: Starts at 0.44 (Round 0), rises to 0.49 (Round 4)

- **Blue dashed (SoM 2x)**: Starts at 0.44 (Round 0), rises to 0.46 (Round 2), plateaus at 0.46-0.47

- **Teal dashed (SoM 4x)**: Starts at 0.45 (Round 0), rises to 0.47 (Round 2), plateaus at 0.47-0.48

- **Purple dashed (Persona)**: Starts at 0.44 (Round 0), rises to 0.47 (Round 2), plateaus at 0.47-0.48

- **Brown solid (DebateTune)**: Starts at 0.43 (Round 0), rises to 0.46 (Round 2), plateaus at 0.46-0.47

- **Green solid (SFT)**: Starts at 0.47 (Round 0), rises to 0.50 (Round 2), plateaus at 0.50-0.51

- **Dark green solid (DebateGPT)**: Starts at 0.48 (Round 0), rises to 0.49 (Round 2), plateaus at 0.49-0.50

### Key Observations

1. **ACC-Collab Methods**: Consistently outperform the other methods across all three base models, with ACC-Collab + (Ours) showing the highest accuracy gains

2. **SoM Methods**: Show improvement in early rounds but plateau or decline in later rounds, with 4x versions generally outperforming 2x

3. **DebateGPT**: Shows strong performance in Mistral but underperforms in Llama-3 and Gemma-2 compared to ACC-Collab methods

4. **SFT**: Shows moderate improvement across all models but lags behind ACC-Collab methods

5. **Confidence Intervals**: Shaded areas indicate variability, with ACC-Collab methods showing narrower intervals suggesting more consistent performance

### Interpretation

The data demonstrates that the ACC-Collab methodology (both base and enhanced versions) consistently delivers superior performance across all evaluated models, particularly in later rounds. This suggests that collaborative training approaches may be more effective than individual model training strategies. The SoM models show diminishing returns with increased complexity (4x vs 2x), while DebateGPT's performance varies significantly between base models. The shaded confidence intervals indicate that ACC-Collab methods have more reliable performance, with less variance between rounds. These findings imply that collaborative training frameworks could be prioritized for developing more robust AI systems, particularly when dealing with complex tasks requiring sustained performance across multiple evaluation rounds.
