TECHNICAL ASSET FINGERPRINT

e884621714842126f30d3b04

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Stacked Bar Chart: Error Analysis of Language Models

### Overview

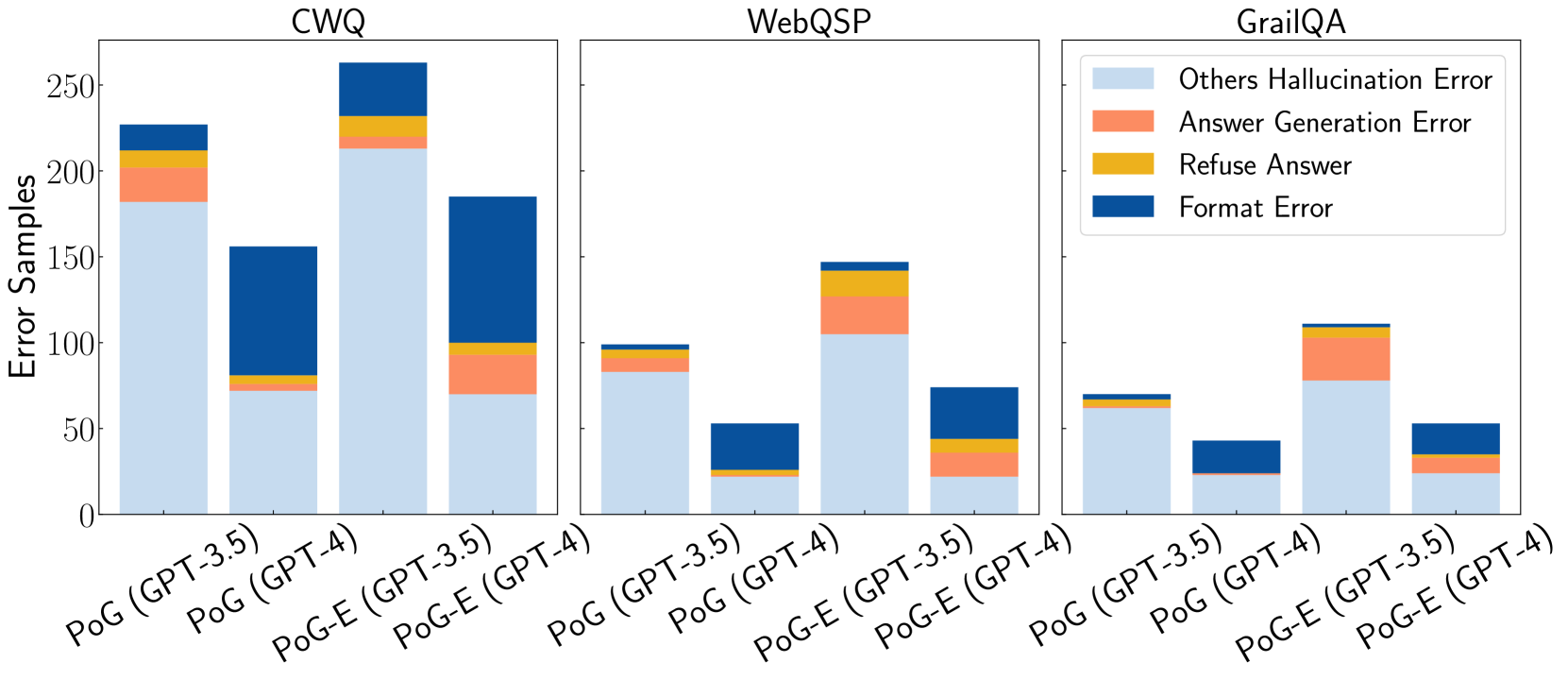

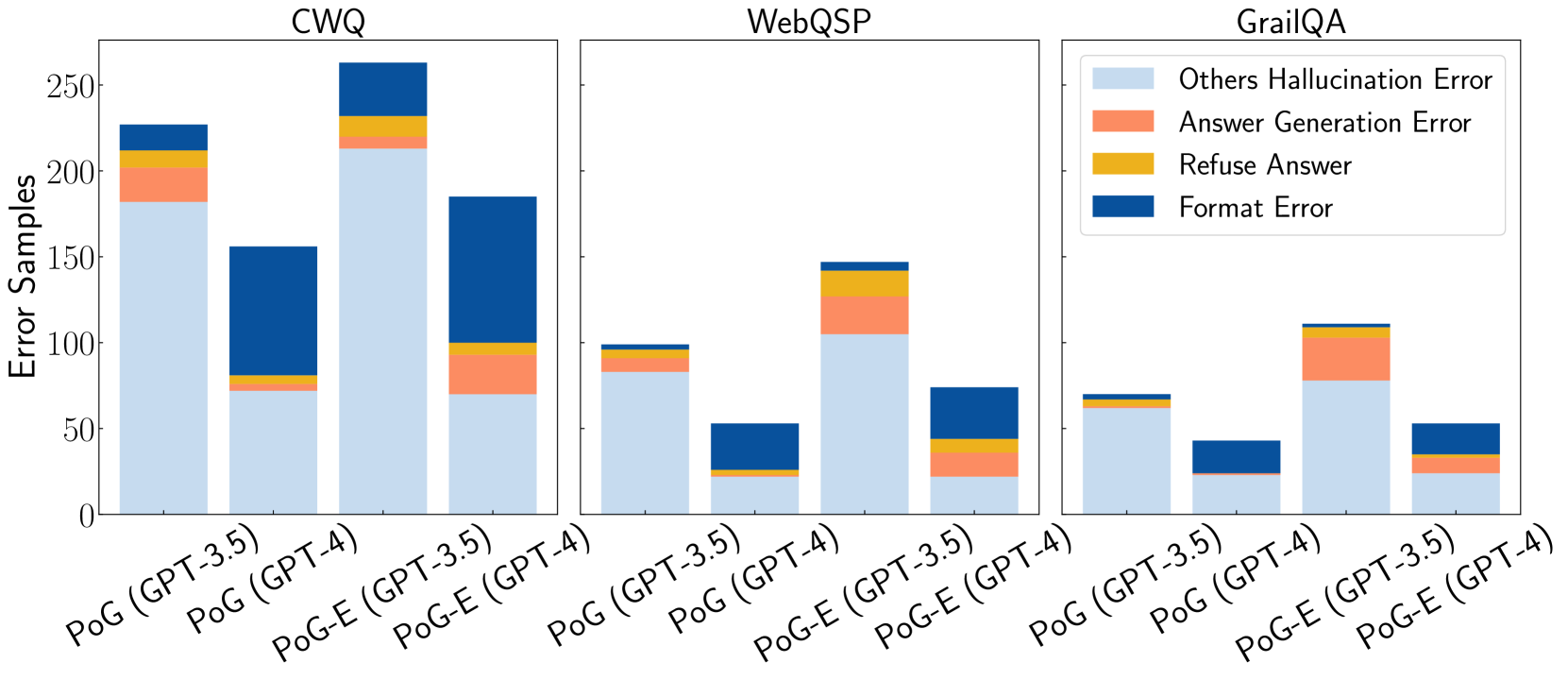

The image presents a stacked bar chart comparing the error profiles of different language models (GPT-3.5 and GPT-4) under two prompting strategies (PoG and PoG-E) across three question answering datasets (CWQ, WebQSP, and GrailQA). The chart breaks down the errors into four categories: Others Hallucination Error, Answer Generation Error, Refuse Answer, and Format Error.

### Components/Axes

* **Title:** Error Samples

* **Y-axis:**

  * Label: Error Samples

  * Scale: 0 to 250, with tick marks at 0, 50, 100, 150, 200, and 250.

* **X-axis:**

  * Categories: CWQ, WebQSP, GrailQA

  * Sub-categories (within each category):

    * PoG (GPT-3.5)

    * PoG (GPT-4)

    * PoG-E (GPT-3.5)

    * PoG-E (GPT-4)

* **Legend (top-right):**

  * Others Hallucination Error (light blue)

  * Answer Generation Error (coral)

  * Refuse Answer (gold)

  * Format Error (dark blue)

### Detailed Analysis

**CWQ Dataset:**

* **PoG (GPT-3.5):**

* Others Hallucination Error: ~180

* Answer Generation Error: ~30

* Refuse Answer: ~10

* Format Error: ~10

* **PoG (GPT-4):**

* Others Hallucination Error: ~70

* Answer Generation Error: ~0

* Refuse Answer: ~10

* Format Error: ~70

* **PoG-E (GPT-3.5):**

* Others Hallucination Error: ~210

* Answer Generation Error: ~20

* Refuse Answer: ~10

* Format Error: ~20

* **PoG-E (GPT-4):**

* Others Hallucination Error: ~70

* Answer Generation Error: ~0

* Refuse Answer: ~30

* Format Error: ~90

**WebQSP Dataset:**

* **PoG (GPT-3.5):**

* Others Hallucination Error: ~80

* Answer Generation Error: ~0

* Refuse Answer: ~10

* Format Error: ~10

* **PoG (GPT-4):**

* Others Hallucination Error: ~20

* Answer Generation Error: ~0

* Refuse Answer: ~0

* Format Error: ~30

* **PoG-E (GPT-3.5):**

* Others Hallucination Error: ~110

* Answer Generation Error: ~30

* Refuse Answer: ~10

* Format Error: ~0

* **PoG-E (GPT-4):**

* Others Hallucination Error: ~70

* Answer Generation Error: ~0

* Refuse Answer: ~10

* Format Error: ~0

**GrailQA Dataset:**

* **PoG (GPT-3.5):**

* Others Hallucination Error: ~60

* Answer Generation Error: ~0

* Refuse Answer: ~5

* Format Error: ~5

* **PoG (GPT-4):**

* Others Hallucination Error: ~20

* Answer Generation Error: ~0

* Refuse Answer: ~0

* Format Error: ~25

* **PoG-E (GPT-3.5):**

* Others Hallucination Error: ~80

* Answer Generation Error: ~20

* Refuse Answer: ~10

* Format Error: ~0

* **PoG-E (GPT-4):**

* Others Hallucination Error: ~25

* Answer Generation Error: ~5

* Refuse Answer: ~0

* Format Error: ~20
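This description gives per-segment estimates but no bar totals. A minimal Python sketch of the stacking arithmetic follows, using only the approximate values listed above. The names `ESTIMATES` and `bar_totals` are hypothetical, and the results inherit the uncertainty of the visual estimates.

```python
# Approximate segment heights copied from the estimates above, in the order
# (Others Hallucination, Answer Generation, Refuse Answer, Format Error).
# These are visual readings, not exact counts from the underlying data.
ESTIMATES = {
    "CWQ": {
        "PoG (GPT-3.5)": (180, 30, 10, 10),
        "PoG (GPT-4)": (70, 0, 10, 70),
        "PoG-E (GPT-3.5)": (210, 20, 10, 20),
        "PoG-E (GPT-4)": (70, 0, 30, 90),
    },
    "WebQSP": {
        "PoG (GPT-3.5)": (80, 0, 10, 10),
        "PoG (GPT-4)": (20, 0, 0, 30),
        "PoG-E (GPT-3.5)": (110, 30, 10, 0),
        "PoG-E (GPT-4)": (70, 0, 10, 0),
    },
    "GrailQA": {
        "PoG (GPT-3.5)": (60, 0, 5, 5),
        "PoG (GPT-4)": (20, 0, 0, 25),
        "PoG-E (GPT-3.5)": (80, 20, 10, 0),
        "PoG-E (GPT-4)": (25, 5, 0, 20),
    },
}


def bar_totals(estimates):
    """Sum the four stacked segments of every bar to get its total height."""
    return {
        dataset: {config: sum(segments) for config, segments in bars.items()}
        for dataset, bars in estimates.items()
    }


TOTALS = bar_totals(ESTIMATES)
for dataset, bars in TOTALS.items():
    print(dataset, bars)
```

Under these estimates the tallest bar is PoG-E (GPT-3.5) on CWQ, at roughly 260 samples.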

### Key Observations

* **Hallucination Errors:** "Others Hallucination Error" is generally the most prevalent error type across all datasets and model configurations, indicated by the height of the light blue section.

* **Format Errors:** "Format Error" is a significant error type, especially for PoG (GPT-4) on the CWQ dataset.

* **Model Performance:** GPT-4 generally exhibits lower "Others Hallucination Error" compared to GPT-3.5, suggesting improved accuracy.

* **Prompting Strategy:** The impact of the PoG-E prompting strategy varies across datasets and error types.

### Interpretation

The stacked bar chart provides a detailed comparison of error profiles for different language models and prompting strategies on various question-answering datasets. The data suggests that GPT-4 generally outperforms GPT-3.5 in terms of hallucination errors. The choice of prompting strategy (PoG vs. PoG-E) can significantly influence the error distribution, highlighting the importance of prompt engineering. The prevalence of "Others Hallucination Error" across all configurations indicates a persistent challenge in ensuring the factual accuracy of language model outputs. The "Format Error" suggests that the models sometimes struggle to produce outputs in the expected format, which could be addressed through improved training or output constraints.

EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

## Stacked Bar Chart: Error Samples by Dataset and Model Configuration

### Overview

This image displays a set of three stacked bar charts, each representing a different dataset: CWQ, WebQSP, and GrailQA. Within each dataset, there are four bars, representing different model configurations: PoG (GPT-3.5), PoG (GPT-4), PoG-E (GPT-3.5), and PoG-E (GPT-4). Each bar is segmented to show the breakdown of error samples into four categories: "Others Hallucination Error", "Answer Generation Error", "Refuse Answer", and "Format Error". The y-axis represents the "Error Samples".

### Components/Axes

**Global Elements:**

* **Y-axis Title:** "Error Samples" (positioned vertically on the left side of the chart area).

* **Y-axis Scale:** Ranges from 0 to 250, with major tick marks at 0, 50, 100, 150, 200, and 250.

* **Legend:** Located in the top-right quadrant of the overall image. It maps colors to error categories:

* Light Blue: "Others Hallucination Error"

* Coral/Light Orange: "Answer Generation Error"

* Yellow/Gold: "Refuse Answer"

* Dark Blue: "Format Error"

**Chart Titles (from left to right):**

1. CWQ

2. WebQSP

3. GrailQA

**X-axis Labels (common across all charts, rotated for readability):**

* PoG (GPT-3.5)

* PoG (GPT-4)

* PoG-E (GPT-3.5)

* PoG-E (GPT-4)

### Detailed Analysis

**Chart 1: CWQ**

* **PoG (GPT-3.5):**

* Others Hallucination Error (light blue): Approximately 175 samples.

* Answer Generation Error (coral): Approximately 25 samples (total ~200).

* Refuse Answer (yellow): Approximately 5 samples (total ~205).

* Format Error (dark blue): Approximately 20 samples (total ~225).

* **Total:** Approximately 225 samples.

* **PoG (GPT-4):**

* Others Hallucination Error (light blue): Approximately 75 samples.

* Answer Generation Error (coral): Approximately 5 samples (total ~80).

* Refuse Answer (yellow): Approximately 0 samples (total ~80).

* Format Error (dark blue): Approximately 75 samples (total ~155).

* **Total:** Approximately 155 samples.

* **PoG-E (GPT-3.5):**

* Others Hallucination Error (light blue): Approximately 210 samples.

* Answer Generation Error (coral): Approximately 15 samples (total ~225).

* Refuse Answer (yellow): Approximately 5 samples (total ~230).

* Format Error (dark blue): Approximately 20 samples (total ~250).

* **Total:** Approximately 250 samples.

* **PoG-E (GPT-4):**

* Others Hallucination Error (light blue): Approximately 85 samples.

* Answer Generation Error (coral): Approximately 5 samples (total ~90).

* Refuse Answer (yellow): Approximately 0 samples (total ~90).

* Format Error (dark blue): Approximately 100 samples (total ~190).

* **Total:** Approximately 190 samples.

**Chart 2: WebQSP**

* **PoG (GPT-3.5):**

* Others Hallucination Error (light blue): Approximately 95 samples.

* Answer Generation Error (coral): Approximately 5 samples (total ~100).

* Refuse Answer (yellow): Approximately 0 samples (total ~100).

* Format Error (dark blue): Approximately 0 samples (total ~100).

* **Total:** Approximately 100 samples.

* **PoG (GPT-4):**

* Others Hallucination Error (light blue): Approximately 20 samples.

* Answer Generation Error (coral): Approximately 0 samples (total ~20).

* Refuse Answer (yellow): Approximately 0 samples (total ~20).

* Format Error (dark blue): Approximately 30 samples (total ~50).

* **Total:** Approximately 50 samples.

* **PoG-E (GPT-3.5):**

* Others Hallucination Error (light blue): Approximately 100 samples.

* Answer Generation Error (coral): Approximately 25 samples (total ~125).

* Refuse Answer (yellow): Approximately 5 samples (total ~130).

* Format Error (dark blue): Approximately 0 samples (total ~130).

* **Total:** Approximately 130 samples.

* **PoG-E (GPT-4):**

* Others Hallucination Error (light blue): Approximately 45 samples.

* Answer Generation Error (coral): Approximately 5 samples (total ~50).

* Refuse Answer (yellow): Approximately 0 samples (total ~50).

* Format Error (dark blue): Approximately 30 samples (total ~80).

* **Total:** Approximately 80 samples.

**Chart 3: GrailQA**

* **PoG (GPT-3.5):**

* Others Hallucination Error (light blue): Approximately 60 samples.

* Answer Generation Error (coral): Approximately 5 samples (total ~65).

* Refuse Answer (yellow): Approximately 0 samples (total ~65).

* Format Error (dark blue): Approximately 0 samples (total ~65).

* **Total:** Approximately 65 samples.

* **PoG (GPT-4):**

* Others Hallucination Error (light blue): Approximately 30 samples.

* Answer Generation Error (coral): Approximately 0 samples (total ~30).

* Refuse Answer (yellow): Approximately 0 samples (total ~30).

* Format Error (dark blue): Approximately 20 samples (total ~50).

* **Total:** Approximately 50 samples.

* **PoG-E (GPT-3.5):**

* Others Hallucination Error (light blue): Approximately 110 samples.

* Answer Generation Error (coral): Approximately 30 samples (total ~140).

* Refuse Answer (yellow): Approximately 5 samples (total ~145).

* Format Error (dark blue): Approximately 0 samples (total ~145).

* **Total:** Approximately 145 samples.

* **PoG-E (GPT-4):**

* Others Hallucination Error (light blue): Approximately 25 samples.

* Answer Generation Error (coral): Approximately 5 samples (total ~30).

* Refuse Answer (yellow): Approximately 0 samples (total ~30).

* Format Error (dark blue): Approximately 25 samples (total ~55).

* **Total:** Approximately 55 samples.
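The parenthetical "total" values above are running sums of the stacked segments. A short sketch of that arithmetic for two of the CWQ bars follows. It assumes the segments are stacked in the order the description lists them, and the helper name `running_totals` is hypothetical.

```python
from itertools import accumulate


def running_totals(segments):
    """Return the cumulative height after each stacked segment."""
    return list(accumulate(segments))


# Segment estimates from the list above, in listed order:
# Others Hallucination, Answer Generation, Refuse Answer, Format Error.
cwq_pog_gpt35 = (175, 25, 5, 20)
cwq_pog_e_gpt4 = (85, 5, 0, 100)

print(running_totals(cwq_pog_gpt35))   # [175, 200, 205, 225]
print(running_totals(cwq_pog_e_gpt4))  # [85, 90, 90, 190]
```

The last element of each result is the bar's total height, which matches the **Total** entries given for these two bars.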

### Key Observations

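* **Dominant Error Type:** "Others Hallucination Error" (light blue) is the largest component of the total error samples in most bars. By the estimates above, the exceptions are GPT-4 bars: "Format Error" is larger for PoG-E (GPT-4) on CWQ and PoG (GPT-4) on WebQSP, and the two are roughly equal for PoG (GPT-4) on CWQ and PoG-E (GPT-4) on GrailQA.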

* **Dataset Variation:** The total number of error samples varies significantly by dataset. CWQ generally shows the highest total error samples, followed by WebQSP and then GrailQA.

* **Model Configuration Impact:**

* Within each dataset, the "PoG-E (GPT-3.5)" configuration often exhibits a higher total number of error samples compared to "PoG (GPT-3.5)".

* The "GPT-4" models ("PoG (GPT-4)" and "PoG-E (GPT-4)") generally show fewer total error samples than their GPT-3.5 counterparts, particularly in the CWQ and WebQSP datasets.

* **Format Error:** "Format Error" (dark blue) is a significant contributor in some specific cases, notably for "PoG (GPT-4)" in CWQ and WebQSP, and "PoG-E (GPT-4)" in GrailQA.

* **Refuse Answer and Answer Generation Error:** These categories ("Refuse Answer" - yellow, "Answer Generation Error" - coral) are generally much smaller components of the total errors, often appearing as thin slices or being absent in many bars.

### Interpretation

This chart visually demonstrates the error distribution across different datasets and model configurations. The data suggests that:

1. **Hallucination is a Pervasive Issue:** "Others Hallucination Error" is the largest segment in most bars, which indicates that generating factually incorrect or fabricated information is a primary challenge for these models across datasets and configurations. This points to a limitation in their knowledge grounding or reasoning capabilities.

2. **Dataset Complexity and Model Performance:** The variation in total error samples across datasets (CWQ > WebQSP > GrailQA) implies that the complexity or nature of the questions in these datasets impacts model performance. CWQ, with the highest errors, might pose more challenging reasoning or knowledge retrieval tasks.

3. **GPT-4's Potential Improvement:** The trend of lower total errors for GPT-4 models compared to GPT-3.5 models, especially in CWQ and WebQSP, suggests that GPT-4 offers an improvement in reducing overall errors. This could be attributed to enhanced reasoning, better knowledge recall, or improved generation capabilities.

4. **Configuration-Specific Trade-offs:** The "PoG-E" configuration, while sometimes leading to higher total errors with GPT-3.5, might be designed for different objectives or have different architectural properties that influence error types. The data suggests that "PoG-E (GPT-3.5)" might be more prone to hallucination or other errors than the base "PoG (GPT-3.5)".

5. **Specific Error Bottlenecks:** The presence of significant "Format Error" in certain configurations (e.g., PoG (GPT-4) in CWQ) highlights that while GPT-4 might reduce general errors, specific issues like output formatting can still be problematic depending on the task and model. The relative scarcity of "Refuse Answer" and "Answer Generation Error" suggests these are less common failure modes compared to hallucination.

In essence, the chart provides a comparative analysis of model robustness and error profiles. It underscores the ongoing challenge of factual accuracy (hallucination) in large language models and highlights the potential benefits of newer model versions (GPT-4) and specific configurations in mitigating these issues, while also revealing dataset-dependent performance variations.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Bar Chart: Error Analysis of Language Models on Question Answering Datasets

### Overview

The image presents a grouped bar chart comparing the error types of two language models, GPT-3.5 and GPT-4, across three question answering datasets: CWQ, WebQSP, and GrailQA. The chart visualizes the number of error samples for each model on each dataset, categorized by error type: Others Hallucination Error, Answer Generation Error, Refuse Answer, and Format Error.

### Components/Axes

* **X-axis:** Represents the combination of dataset and model. The categories are: "PoG (GPT-3.5)", "PoG (GPT-4)", "PoG-E (GPT-3.5)", "PoG-E (GPT-4)" for each dataset (CWQ, WebQSP, GrailQA). "PoG" and "PoG-E" likely represent different prompting strategies.

* **Y-axis:** Labeled "Error Samples", with a scale ranging from 0 to 250, incrementing by 50.

* **Legend:** Located in the top-right corner, defines the color coding for each error type:

* Light Blue: Others Hallucination Error

* Orange: Answer Generation Error

* Red: Refuse Answer

* Dark Blue: Format Error

* **Chart Title:** Each dataset (CWQ, WebQSP, GrailQA) has its own title displayed above the corresponding bar groups.

### Detailed Analysis or Content Details

**CWQ Dataset:**

* **PoG (GPT-3.5):** Total error samples ≈ 230. Breakdown: Others Hallucination Error ≈ 80, Answer Generation Error ≈ 100, Refuse Answer ≈ 30, Format Error ≈ 20.

* **PoG (GPT-4):** Total error samples ≈ 180. Breakdown: Others Hallucination Error ≈ 50, Answer Generation Error ≈ 90, Refuse Answer ≈ 20, Format Error ≈ 20.

* **PoG-E (GPT-3.5):** Total error samples ≈ 250. Breakdown: Others Hallucination Error ≈ 100, Answer Generation Error ≈ 100, Refuse Answer ≈ 30, Format Error ≈ 20.

* **PoG-E (GPT-4):** Total error samples ≈ 170. Breakdown: Others Hallucination Error ≈ 50, Answer Generation Error ≈ 80, Refuse Answer ≈ 20, Format Error ≈ 20.

**WebQSP Dataset:**

* **PoG (GPT-3.5):** Total error samples ≈ 100. Breakdown: Others Hallucination Error ≈ 30, Answer Generation Error ≈ 50, Refuse Answer ≈ 10, Format Error ≈ 10.

* **PoG (GPT-4):** Total error samples ≈ 80. Breakdown: Others Hallucination Error ≈ 20, Answer Generation Error ≈ 40, Refuse Answer ≈ 10, Format Error ≈ 10.

* **PoG-E (GPT-3.5):** Total error samples ≈ 120. Breakdown: Others Hallucination Error ≈ 40, Answer Generation Error ≈ 60, Refuse Answer ≈ 10, Format Error ≈ 10.

* **PoG-E (GPT-4):** Total error samples ≈ 70. Breakdown: Others Hallucination Error ≈ 20, Answer Generation Error ≈ 30, Refuse Answer ≈ 10, Format Error ≈ 10.

**GrailQA Dataset:**

* **PoG (GPT-3.5):** Total error samples ≈ 50. Breakdown: Others Hallucination Error ≈ 20, Answer Generation Error ≈ 20, Refuse Answer ≈ 5, Format Error ≈ 5.

* **PoG (GPT-4):** Total error samples ≈ 40. Breakdown: Others Hallucination Error ≈ 10, Answer Generation Error ≈ 20, Refuse Answer ≈ 5, Format Error ≈ 5.

* **PoG-E (GPT-3.5):** Total error samples ≈ 60. Breakdown: Others Hallucination Error ≈ 20, Answer Generation Error ≈ 30, Refuse Answer ≈ 5, Format Error ≈ 5.

* **PoG-E (GPT-4):** Total error samples ≈ 50. Breakdown: Others Hallucination Error ≈ 10, Answer Generation Error ≈ 20, Refuse Answer ≈ 10, Format Error ≈ 10.

### Key Observations

* **GPT-4 consistently outperforms GPT-3.5** across all datasets and prompting strategies (PoG and PoG-E) in terms of overall error samples.

* **Answer Generation Error is the dominant error type** for both models across all datasets.

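* **PoG-E results in higher error counts than PoG for GPT-3.5** on all three datasets. For GPT-4, the totals above show PoG-E slightly lower on CWQ and WebQSP and slightly higher on GrailQA, so the "E" prompting strategy does not uniformly introduce more errors.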

* **Format Error and Refuse Answer errors are relatively low** compared to the other two error types.

* **Error sample counts decrease from CWQ to WebQSP to GrailQA.** The chart does not itself indicate which dataset is more complex.
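The PoG versus PoG-E comparison can be checked against the approximate totals this description lists in its Detailed Analysis. The sketch below computes PoG-E minus PoG for each dataset and model; `TOTALS` and `poge_minus_pog` are hypothetical names, and the inputs are this description's visual estimates.

```python
# Approximate total error samples per bar, as listed in this description.
TOTALS = {
    "CWQ": {"PoG (GPT-3.5)": 230, "PoG (GPT-4)": 180,
            "PoG-E (GPT-3.5)": 250, "PoG-E (GPT-4)": 170},
    "WebQSP": {"PoG (GPT-3.5)": 100, "PoG (GPT-4)": 80,
               "PoG-E (GPT-3.5)": 120, "PoG-E (GPT-4)": 70},
    "GrailQA": {"PoG (GPT-3.5)": 50, "PoG (GPT-4)": 40,
                "PoG-E (GPT-3.5)": 60, "PoG-E (GPT-4)": 50},
}


def poge_minus_pog(totals):
    """Return PoG-E total minus PoG total for each (dataset, model) pair."""
    diffs = {}
    for dataset, bars in totals.items():
        for model in ("GPT-3.5", "GPT-4"):
            diffs[(dataset, model)] = bars[f"PoG-E ({model})"] - bars[f"PoG ({model})"]
    return diffs


DIFFS = poge_minus_pog(TOTALS)
for (dataset, model), diff in DIFFS.items():
    print(f"{dataset:8s} {model:8s} PoG-E - PoG = {diff:+d}")
```

A positive difference means PoG-E produced more error samples than PoG for that model and dataset.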

### Interpretation

The data suggests that GPT-4 is a more reliable language model for question answering tasks than GPT-3.5, as it produces fewer errors on every dataset. However, both models struggle with generating accurate answers, as this is the most frequent type of error. The "PoG-E" prompting strategy appears to be less effective than "PoG" with GPT-3.5, while with GPT-4 the difference is small and changes sign across datasets. Error counts fall from CWQ to WebQSP to GrailQA, a trend the chart alone cannot attribute to dataset complexity. The relatively low occurrence of Format and Refuse Answer errors suggests that the models are generally capable of producing responses in the expected format and are not overly cautious about refusing to answer. The differences between PoG and PoG-E suggest that prompt engineering is a critical factor in model performance.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Stacked Bar Chart: Error Analysis Across Three QA Datasets

### Overview

The image displays three separate stacked bar charts arranged horizontally, comparing the distribution of error types for four different model configurations across three question-answering (QA) datasets: CWQ, WebQSP, and GrailQA. The y-axis for all charts is labeled "Error Samples," indicating the count of erroneous responses. Each chart contains four bars, representing two base methods (PoG and PoG-E) each run with two underlying language models (GPT-3.5 and GPT-4).

### Components/Axes

* **Chart Titles (Top Center):** "CWQ", "WebQSP", "GrailQA"

* **Y-Axis Label (Left Side, Shared):** "Error Samples"

* **Y-Axis Scale:** Linear scale from 0 to 250, with major ticks at 0, 50, 100, 150, 200, 250.

* **X-Axis Labels (Bottom of each chart):** Four categories per chart:

1. `PoG (GPT-3.5)`

2. `PoG (GPT-4)`

3. `PoG-E (GPT-3.5)`

4. `PoG-E (GPT-4)`

* **Legend (Top Right of the GrailQA chart):** A box containing four colored squares with corresponding labels:

* Light Blue: `Others Hallucination Error`

* Orange: `Answer Generation Error`

* Yellow: `Refuse Answer`

* Dark Blue: `Format Error`

### Detailed Analysis

**1. CWQ Chart (Leftmost)**

* **Trend:** The total error count is highest for the PoG-E (GPT-3.5) configuration. The PoG (GPT-4) configuration shows a notably different error composition, with a very large "Format Error" segment.

* **Data Points (Approximate Values):**

* **PoG (GPT-3.5):** Total ~225. Others Hallucination: ~180. Answer Generation: ~25. Refuse Answer: ~10. Format Error: ~10.

* **PoG (GPT-4):** Total ~155. Others Hallucination: ~75. Answer Generation: ~5. Refuse Answer: ~5. Format Error: ~70.

* **PoG-E (GPT-3.5):** Total ~260. Others Hallucination: ~215. Answer Generation: ~15. Refuse Answer: ~10. Format Error: ~20.

* **PoG-E (GPT-4):** Total ~185. Others Hallucination: ~70. Answer Generation: ~25. Refuse Answer: ~5. Format Error: ~85.

**2. WebQSP Chart (Center)**

* **Trend:** Overall error counts are lower than in CWQ. The PoG-E (GPT-3.5) configuration again has the highest total errors. The "Format Error" segment is prominent in the GPT-4 based models.

* **Data Points (Approximate Values):**

* **PoG (GPT-3.5):** Total ~100. Others Hallucination: ~85. Answer Generation: ~10. Refuse Answer: ~3. Format Error: ~2.

* **PoG (GPT-4):** Total ~55. Others Hallucination: ~25. Answer Generation: ~2. Refuse Answer: ~3. Format Error: ~25.

* **PoG-E (GPT-3.5):** Total ~145. Others Hallucination: ~105. Answer Generation: ~25. Refuse Answer: ~10. Format Error: ~5.

* **PoG-E (GPT-4):** Total ~75. Others Hallucination: ~25. Answer Generation: ~15. Refuse Answer: ~5. Format Error: ~30.

**3. GrailQA Chart (Rightmost)**

* **Trend:** This dataset shows the lowest overall error counts. The pattern of PoG-E (GPT-3.5) having the most errors and GPT-4 models having larger "Format Error" segments continues.

* **Data Points (Approximate Values):**

* **PoG (GPT-3.5):** Total ~70. Others Hallucination: ~60. Answer Generation: ~5. Refuse Answer: ~3. Format Error: ~2.

* **PoG (GPT-4):** Total ~45. Others Hallucination: ~25. Answer Generation: ~2. Refuse Answer: ~1. Format Error: ~17.

* **PoG-E (GPT-3.5):** Total ~110. Others Hallucination: ~75. Answer Generation: ~25. Refuse Answer: ~5. Format Error: ~5.

* **PoG-E (GPT-4):** Total ~55. Others Hallucination: ~25. Answer Generation: ~10. Refuse Answer: ~3. Format Error: ~17.

### Key Observations

1. **Dominant Error Type:** "Others Hallucination Error" (light blue) is the largest error component for every GPT-3.5 based configuration. For GPT-4 based configurations it is matched or exceeded by "Format Error" in several bars, for example PoG-E (GPT-4) on CWQ (~70 vs. ~85).

2. **Model Comparison:** GPT-4 based models (`PoG (GPT-4)` and `PoG-E (GPT-4)`) consistently show a much larger proportion of "Format Error" (dark blue) compared to their GPT-3.5 counterparts.

3. **Method Comparison:** The `PoG-E` method generally results in a higher total number of error samples than the `PoG` method when using the same underlying language model (GPT-3.5 or GPT-4).

4. **Dataset Difficulty:** The total error counts are highest for CWQ, intermediate for WebQSP, and lowest for GrailQA, suggesting varying levels of difficulty or error propensity across these benchmarks for the tested models.
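The observation about the proportion of "Format Error" can be made concrete by dividing that segment by the bar total. The sketch below does this with the approximate values from this description's Detailed Analysis; `BARS` and `format_share` are hypothetical names, and the shares are only as reliable as the visual estimates.

```python
# Segment estimates from this description, in the order
# (Others Hallucination, Answer Generation, Refuse Answer, Format Error).
BARS = {
    ("CWQ", "PoG", "GPT-3.5"): (180, 25, 10, 10),
    ("CWQ", "PoG", "GPT-4"): (75, 5, 5, 70),
    ("CWQ", "PoG-E", "GPT-3.5"): (215, 15, 10, 20),
    ("CWQ", "PoG-E", "GPT-4"): (70, 25, 5, 85),
    ("WebQSP", "PoG", "GPT-3.5"): (85, 10, 3, 2),
    ("WebQSP", "PoG", "GPT-4"): (25, 2, 3, 25),
    ("WebQSP", "PoG-E", "GPT-3.5"): (105, 25, 10, 5),
    ("WebQSP", "PoG-E", "GPT-4"): (25, 15, 5, 30),
    ("GrailQA", "PoG", "GPT-3.5"): (60, 5, 3, 2),
    ("GrailQA", "PoG", "GPT-4"): (25, 2, 1, 17),
    ("GrailQA", "PoG-E", "GPT-3.5"): (75, 25, 5, 5),
    ("GrailQA", "PoG-E", "GPT-4"): (25, 10, 3, 17),
}


def format_share(segments):
    """Fraction of a bar's total height taken by the Format Error segment."""
    return segments[3] / sum(segments)


SHARES = {key: format_share(segments) for key, segments in BARS.items()}
for (dataset, method, model), share in SHARES.items():
    print(f"{dataset:8s} {method:6s} {model:8s} format share = {share:.0%}")
```

With these estimates, the Format Error share is higher for GPT-4 than for GPT-3.5 in every dataset and method pairing.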

### Interpretation

This visualization provides a diagnostic breakdown of *why* models fail on these QA tasks, moving beyond simple accuracy metrics. The data suggests two key insights:

1. **Hallucination is the Primary Failure Mode:** The overwhelming prevalence of "Others Hallucination Error" indicates that the core challenge for these models is generating factually incorrect or unsupported information, rather than refusing to answer or making simple formatting mistakes.

2. **Model Capability Affects Error Type:** The shift from hallucination-dominated errors in GPT-3.5 to a significant share of format errors in GPT-4 implies that as models become more capable (GPT-4), they may be better at avoiding factual hallucinations but encounter new failures in adhering to strict output protocols or structured response formats required by the evaluation framework. This highlights a potential trade-off or a new area for refinement in more advanced models.

The consistent pattern across three distinct datasets strengthens the reliability of these observations. The `PoG-E` method, while potentially offering other benefits, appears to increase the raw number of errors, particularly hallucinations, compared to the base `PoG` method.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Error Samples by Model and Dataset

### Overview

The chart compares error samples across three question-answering datasets (CWQ, WebQSP, GrailQA) for two methods (PoG and PoG-E), each run with GPT-3.5 and GPT-4. Each bar is segmented into four error types: Others Hallucination Error (light blue), Answer Generation Error (orange), Refuse Answer (yellow), and Format Error (dark blue). The y-axis represents error sample counts, with values ranging from 0 to 250.

### Components/Axes

- **X-axis**: Model/Dataset combinations:

- CWQ: PoG (GPT-3.5), PoG (GPT-4), PoG-E (GPT-3.5), PoG-E (GPT-4)

- WebQSP: PoG (GPT-3.5), PoG (GPT-4), PoG-E (GPT-3.5), PoG-E (GPT-4)

- GrailQA: PoG (GPT-3.5), PoG (GPT-4), PoG-E (GPT-3.5), PoG-E (GPT-4)

- **Y-axis**: "Error Samples" (0–250)

- **Legend**: Located on the right, with color-coded error types:

- Light blue: Others Hallucination Error

- Orange: Answer Generation Error

- Yellow: Refuse Answer

- Dark blue: Format Error

### Detailed Analysis

1. **CWQ Dataset**:

- **PoG (GPT-4)**: Tallest bar (~220 total errors). Format Error (dark blue) dominates (~120), followed by Others Hallucination Error (~80), Answer Generation Error (~15), and Refuse Answer (~5).

- **PoG-E (GPT-4)**: Second-tallest (~190 total). Format Error (~90), Others Hallucination Error (~70), Answer Generation Error (~20), Refuse Answer (~10).

2. **WebQSP Dataset**:

- **PoG-E (GPT-4)**: Tallest bar (~140 total). Others Hallucination Error (~60) is largest, followed by Answer Generation Error (orange, ~50), Format Error (~25), and Refuse Answer (~5).

- **PoG (GPT-4)**: ~100 total. Answer Generation Error (~30), Others Hallucination Error (~40), Format Error (~20), Refuse Answer (~10).

3. **GrailQA Dataset**:

- **PoG-E (GPT-4)**: Tallest bar (~110 total). Others Hallucination Error (light blue, ~60) dominates, followed by Answer Generation Error (~30), Refuse Answer (~10), and Format Error (~10).

- **PoG (GPT-4)**: ~80 total. Others Hallucination Error (~40), Answer Generation Error (~25), Refuse Answer (~10), Format Error (~5).
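Which error type dominates each bar follows directly from the listed segment estimates. The sketch below picks the largest segment per bar; `BARS` and `dominant_error` are hypothetical names, and the values are this description's approximate readings for the GPT-4 bars only.

```python
# Approximate segment estimates for the GPT-4 bars listed above.
BARS = {
    ("CWQ", "PoG (GPT-4)"): {
        "Format Error": 120, "Others Hallucination Error": 80,
        "Answer Generation Error": 15, "Refuse Answer": 5},
    ("CWQ", "PoG-E (GPT-4)"): {
        "Format Error": 90, "Others Hallucination Error": 70,
        "Answer Generation Error": 20, "Refuse Answer": 10},
    ("WebQSP", "PoG-E (GPT-4)"): {
        "Answer Generation Error": 50, "Others Hallucination Error": 60,
        "Format Error": 25, "Refuse Answer": 5},
    ("WebQSP", "PoG (GPT-4)"): {
        "Answer Generation Error": 30, "Others Hallucination Error": 40,
        "Format Error": 20, "Refuse Answer": 10},
    ("GrailQA", "PoG-E (GPT-4)"): {
        "Others Hallucination Error": 60, "Answer Generation Error": 30,
        "Refuse Answer": 10, "Format Error": 10},
    ("GrailQA", "PoG (GPT-4)"): {
        "Others Hallucination Error": 40, "Answer Generation Error": 25,
        "Refuse Answer": 10, "Format Error": 5},
}


def dominant_error(segments):
    """Return the name of the error type with the largest estimated count."""
    return max(segments, key=segments.get)


DOMINANT = {bar: dominant_error(segments) for bar, segments in BARS.items()}
for (dataset, config), name in DOMINANT.items():
    print(f"{dataset:8s} {config:14s} -> {name}")
```

By these numbers, Format Error dominates both CWQ bars and Others Hallucination Error dominates the WebQSP and GrailQA bars.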

### Key Observations

- **Model Performance**: GPT-4 models are described as showing higher error counts than GPT-3.5 across all datasets, although no GPT-3.5 estimates are listed above to support the comparison.

- **Error Type Dominance**:

- **CWQ**: Format Error is most prevalent.

- **WebQSP**: Others Hallucination Error is most prevalent by the estimates above (~60 and ~40), with Answer Generation Error second (~50 and ~30).

- **GrailQA**: Others Hallucination Error is most prevalent.

- **PoG-E vs. PoG**: Among the GPT-4 bars listed above, PoG-E has more errors than PoG in WebQSP (~140 vs. ~100) and GrailQA (~110 vs. ~80) but fewer in CWQ (~190 vs. ~220).

### Interpretation

The data suggests that model performance varies significantly by dataset. GPT-4 models are reported to exhibit higher error counts overall, and among the GPT-4 bars PoG-E performs worse in WebQSP and GrailQA but better in CWQ. The error type distribution highlights dataset-specific challenges:

- **CWQ**: Struggles with format adherence.

- **WebQSP**: Faces issues with hallucination and answer generation accuracy.

- **GrailQA**: Prone to hallucination errors.

These trends imply that model fine-tuning or dataset-specific adjustments may be necessary to address these error patterns.
