## Diagram: Human-AI Interaction Loops for Problem Solving

### Overview

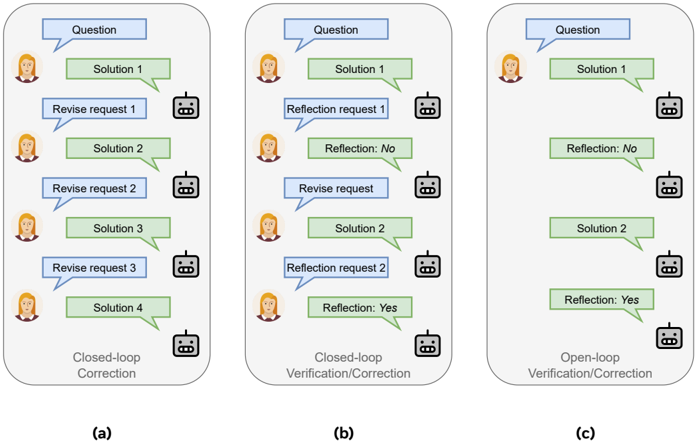

The image displays three distinct flowcharts, labeled (a), (b), and (c), illustrating different paradigms for iterative problem-solving and verification between a human user (represented by a female avatar) and an AI system (represented by a robot icon). Each diagram shows a sequence of interactions involving questions, solutions, revision requests, and self-reflection steps.

### Components/Axes

The diagram is divided into three vertical panels, each contained within a rounded rectangle.

* **Panel (a):** Titled "Closed-loop Correction" at the bottom.

* **Panel (b):** Titled "Closed-loop Verification/Correction" at the bottom.

* **Panel (c):** Titled "Open-loop Verification/Correction" at the bottom.

**Common Visual Elements:**

* **Human Avatar:** A stylized icon of a woman with brown hair, positioned on the left side of each interaction sequence.

* **Robot Icon:** A simple line-drawing of a robot head, positioned on the right side of each interaction sequence.

* **Text Boxes:** Rectangular boxes containing text, connected by directional arrows indicating the flow of conversation.

* **Blue Boxes:** Contain prompts or requests (e.g., "Question", "Revise request 1").

* **Green Boxes:** Contain outputs or responses (e.g., "Solution 1", "Reflection: No").

* **Arrows:** Solid black lines with arrowheads showing the direction of interaction, typically flowing from the human to the AI and back.

### Detailed Analysis

**Panel (a): Closed-loop Correction**

This panel depicts a linear, iterative correction process driven entirely by human feedback.

1. **Flow:** Human (Question) → AI (Solution 1) → Human (Revise request 1) → AI (Solution 2) → Human (Revise request 2) → AI (Solution 3) → Human (Revise request 3) → AI (Solution 4).

2. **Key Characteristic:** The AI provides a new solution only after receiving an explicit "Revise request" from the human. There are three cycles of revision shown.
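The flow in panel (a) can be sketched as a short loop. This is only an illustration of the turn order described above, not code from the figure's source: the names `closed_loop_correction`, `solve`, and `toy_solve` are hypothetical, and the toy solver simply numbers its answers.

```python
from typing import Callable, List, Tuple

Turn = Tuple[str, str]  # (speaker, box label)


def closed_loop_correction(
    solve: Callable[[str, List[str]], str],
    question: str,
    revise_requests: List[str],
) -> List[Turn]:
    """Hypothetical sketch of panel (a): every new solution follows a human request."""
    transcript: List[Turn] = [("human", question)]
    feedback: List[str] = []
    # Initial answer, produced before any feedback exists.
    transcript.append(("ai", solve(question, feedback)))
    for request in revise_requests:
        # The human drives each revision explicitly.
        transcript.append(("human", request))
        feedback.append(request)
        transcript.append(("ai", solve(question, feedback)))
    return transcript


def toy_solve(question: str, feedback: List[str]) -> str:
    # Assumption: a stand-in solver that only labels its attempts.
    return f"Solution {len(feedback) + 1}"


transcript_a = closed_loop_correction(
    toy_solve,
    "Question",
    ["Revise request 1", "Revise request 2", "Revise request 3"],
)
for speaker, label in transcript_a:
    print(f"{speaker}: {label}")
```

With three revise requests the transcript has eight turns, matching the eight boxes listed in the flow.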

**Panel (b): Closed-loop Verification/Correction**

This panel introduces an AI self-reflection step before revision.

1. **Flow:** Human (Question) → AI (Solution 1) → AI (Reflection request 1) → AI (Reflection: No) → Human (Revise request) → AI (Solution 2) → AI (Reflection request 2) → AI (Reflection: Yes).

2. **Key Characteristic:** After providing an initial solution, the AI internally requests a reflection and evaluates its own output ("Reflection: No"). Only after this failed self-check does the human provide a "Revise request." A second solution is generated, followed by another reflection cycle that passes ("Reflection: Yes").
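A minimal sketch of the panel (b) turn order follows, assuming the speaker attribution given in the flow above (reflection requests on the AI side, revise requests on the human side). The names `closed_loop_verification`, `solve`, `reflect`, and the toy functions are hypothetical, and the `max_rounds` cap is an added safeguard that the figure does not show.

```python
from typing import Callable, List, Tuple

Turn = Tuple[str, str]  # (speaker, box label)


def closed_loop_verification(
    solve: Callable[[str, int], str],
    reflect: Callable[[str], bool],
    question: str,
    max_rounds: int = 3,
) -> List[Turn]:
    """Hypothetical sketch of panel (b): reflect first, revise only on failure."""
    transcript: List[Turn] = [("human", question)]
    for round_no in range(1, max_rounds + 1):
        solution = solve(question, round_no)
        transcript.append(("ai", solution))
        # Explicit reflection request, followed by the reflection result.
        transcript.append(("ai", f"Reflection request {round_no}"))
        passed = reflect(solution)
        transcript.append(("ai", "Reflection: Yes" if passed else "Reflection: No"))
        if passed or round_no == max_rounds:
            break
        # A failed self-check is what triggers the human revise request.
        transcript.append(("human", "Revise request"))
    return transcript


def toy_solve_b(question: str, round_no: int) -> str:
    # Assumption: a stand-in solver that only labels its attempts.
    return f"Solution {round_no}"


def toy_reflect_b(solution: str) -> bool:
    # Assumption: the second attempt is the first one that passes.
    return solution == "Solution 2"


transcript_b = closed_loop_verification(toy_solve_b, toy_reflect_b, "Question")
for speaker, label in transcript_b:
    print(f"{speaker}: {label}")
```

The toy run reproduces the eight steps of the flow, ending on "Reflection: Yes" without a further revise request.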

**Panel (c): Open-loop Verification/Correction**

This panel shows a more autonomous process with minimal human intervention after the initial prompt.

1. **Flow:** Human (Question) → AI (Solution 1) → AI (Reflection: No) → AI (Solution 2) → AI (Reflection: Yes).

2. **Key Characteristic:** The AI autonomously moves from its first solution to a self-reflection ("Reflection: No"), then generates a second solution and reflects again ("Reflection: Yes"). There are no explicit "Reflection request" or "Revise request" boxes from either party; the process appears to be internally driven by the AI after the initial question.
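The panel (c) sequence can be sketched the same way, with the request boxes removed. This is an illustrative sketch under assumptions: `open_loop_verification`, `solve`, `reflect`, and the toy functions are hypothetical names, and the `max_attempts` cap is added here so the loop always terminates.

```python
from typing import Callable, List, Tuple

Turn = Tuple[str, str]  # (speaker, box label)


def open_loop_verification(
    solve: Callable[[str, int], str],
    reflect: Callable[[str], bool],
    question: str,
    max_attempts: int = 3,
) -> List[Turn]:
    """Hypothetical sketch of panel (c): no request turns after the question."""
    transcript: List[Turn] = [("human", question)]
    for attempt in range(1, max_attempts + 1):
        solution = solve(question, attempt)
        transcript.append(("ai", solution))
        # The AI reflects on its own output without being prompted.
        passed = reflect(solution)
        transcript.append(("ai", "Reflection: Yes" if passed else "Reflection: No"))
        if passed:
            break
    return transcript


def toy_solve_c(question: str, attempt: int) -> str:
    # Assumption: a stand-in solver that only labels its attempts.
    return f"Solution {attempt}"


def toy_reflect_c(solution: str) -> bool:
    # Assumption: the second attempt is the first one that passes.
    return solution == "Solution 2"


transcript_c = open_loop_verification(toy_solve_c, toy_reflect_c, "Question")
for speaker, label in transcript_c:
    print(f"{speaker}: {label}")
```

Only the first turn belongs to the human; the remaining four are AI turns, which matches the five-step flow above.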

### Key Observations

1. **Progression of Autonomy:** The diagrams show a clear progression from fully human-guided correction (a), to AI-assisted verification with human oversight (b), to a largely autonomous AI verification loop (c).

2. **Visual Consistency:** The color coding (blue for prompts, green for outputs) and iconography are consistent across all three panels, making the comparison straightforward.

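3. **Structural Difference:** Panel (c) is notably shorter than (a) or (b): its flow has five steps, versus eight in each of the other two, because the explicit "Reflection request" and "Revise request" boxes are absent. This visually sets the "open-loop" paradigm apart.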

4. **Reflection Outcome:** In both (b) and (c), the first self-reflection yields "No" (indicating a problem), leading to a new solution, while the second yields "Yes" (indicating acceptance).

### Interpretation

This diagram illustrates a conceptual framework for evaluating different levels of agency and interaction in human-AI collaborative systems.

* **What it demonstrates:** It contrasts three models for iterative improvement of AI outputs. Model (a) represents a traditional, human-in-the-loop feedback system. Model (b) adds a self-critique step: the AI evaluates its own solution, and a human "Revise request" follows only when that check fails, which could reduce unnecessary revision rounds. Model (c) represents a self-correction loop that runs autonomously after the initial question, where the AI identifies and fixes its own errors without intermediate human prompts.

* **Relationship between elements:** The human role diminishes from an active corrector in (a) to a passive initiator in (c). The AI's role evolves from a passive answer-generator to an active agent capable of self-evaluation and autonomous revision. The "Reflection" step is the critical component that enables the transition from simple correction to verified correction.

* **Implications:** The progression suggests a research or development goal in AI: to create systems that can reliably self-verify and self-correct, reducing the need for constant human supervision while maintaining or improving output quality. The "open-loop" model in (c) requires the least human input, which may indicate that it is the intended target for more robust and independent AI assistants, although the diagram itself does not rank the three paradigms. The diagrams likely serve to explain or compare methodologies in a technical paper on AI alignment, training, or interactive systems.