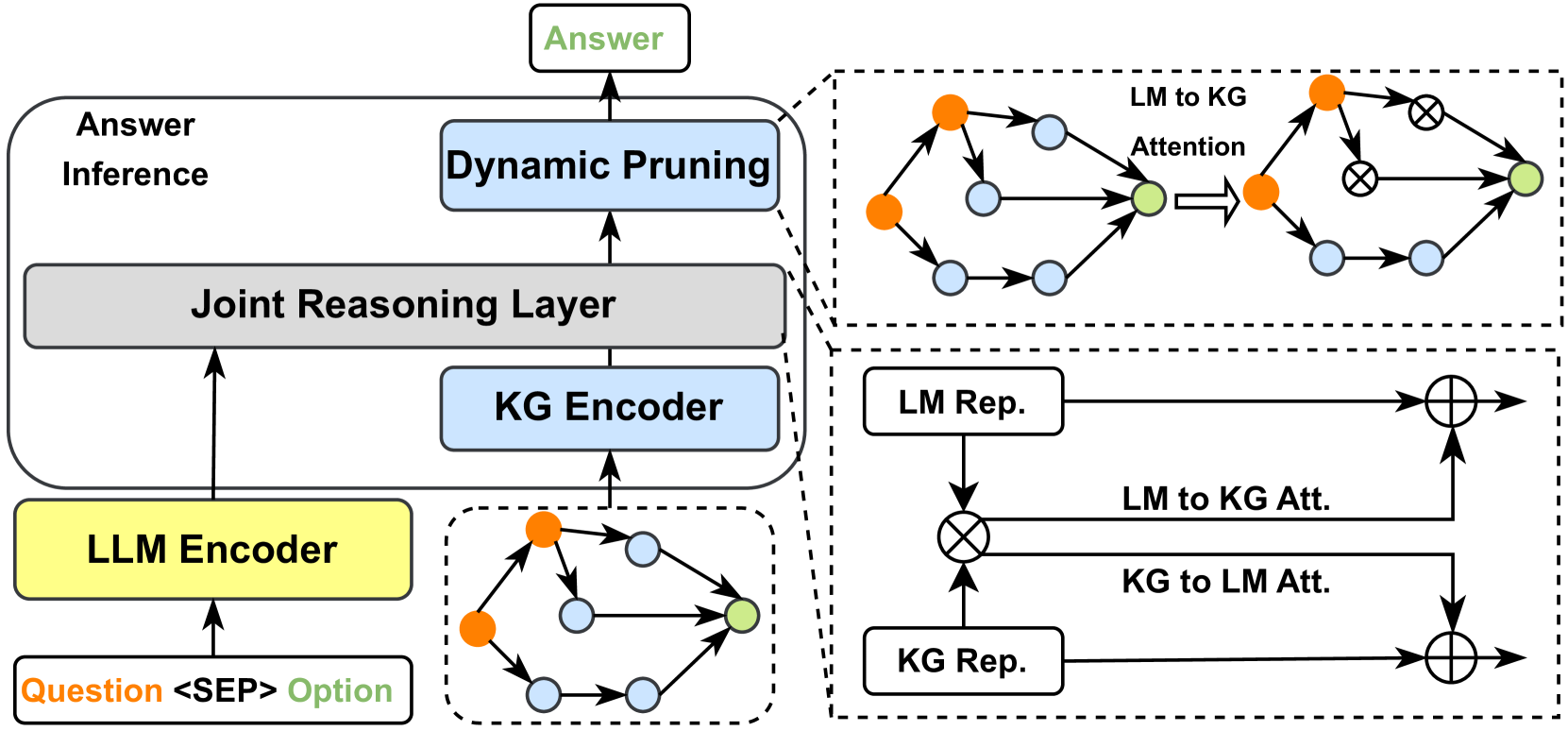

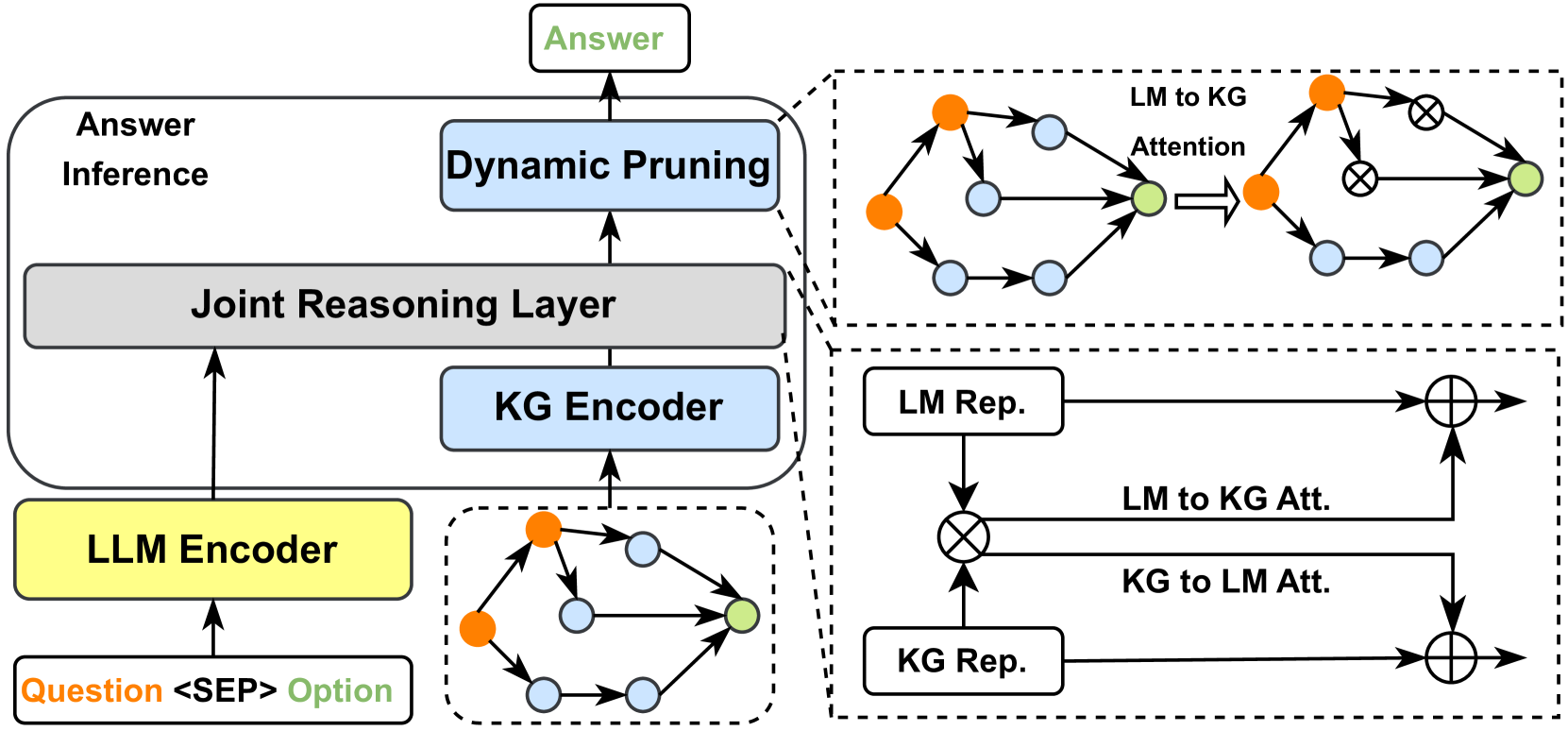

## [Diagram]: System Architecture for Joint Language Model and Knowledge Graph Reasoning

### Overview

This image is a technical system architecture diagram illustrating a model that combines a Large Language Model (LLM) with a Knowledge Graph (KG) for question answering. The process flows from an input question and option, through encoding and joint reasoning layers, to a final answer, incorporating a dynamic pruning mechanism. The diagram is divided into a main flowchart on the left and two detailed sub-diagrams on the right, enclosed in dashed boxes.

### Components/Flow

The diagram is organized into several interconnected blocks and sub-diagrams.

**Main Flowchart (Left Side):**

* **Input (Bottom-Left):** A white box labeled `Question <SEP> Option`. The text "Question" is in orange, and "Option" is in green, separated by `<SEP>`.

* **LLM Encoder (Bottom-Left, above input):** A yellow rectangular box labeled `LLM Encoder`. An arrow points from the input box to this encoder.

* **KG Encoder (Center-Left):** A light blue rectangular box labeled `KG Encoder`. It receives input from a sub-diagram representing a knowledge graph structure.

* **Joint Reasoning Layer (Center):** A large grey rectangular box labeled `Joint Reasoning Layer`. It receives upward arrows from both the `LLM Encoder` and the `KG Encoder`.

* **Dynamic Pruning (Top-Center):** A light blue rectangular box labeled `Dynamic Pruning`. It receives an upward arrow from the `Joint Reasoning Layer`.

* **Answer Inference (Top-Left):** A label `Answer Inference` placed to the left of the `Dynamic Pruning` box, indicating the overall stage.

* **Output (Top-Center):** A white box labeled `Answer` in green text. An arrow points from the `Dynamic Pruning` box to this output.

**Sub-Diagram 1 (Top-Right, dashed box):**

This details the "LM to KG Attention" mechanism.

* **Components:** It shows two graph structures. The left graph has orange nodes (representing LM entities) and light blue nodes (representing KG entities) connected by arrows. A green node is highlighted.

* **Process:** An arrow labeled `LM to KG Attention` points from the left graph to a right graph. The right graph shows the same structure but with some connections marked with an "X" inside a circle, indicating pruning or masking.

* **Text:** The label `LM to KG Attention` is placed above the arrow connecting the two graphs.
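The pruning effect shown in this sub-diagram can be sketched in a few lines. This is a minimal illustration, not the method behind the diagram: it assumes a single pooled LM vector, scaled dot-product scores with no learned projections, and a hypothetical `keep_ratio` parameter that decides how many nodes survive. The function name `lm_to_kg_prune` and the toy data are likewise invented for the example.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def lm_to_kg_prune(lm_vec, node_vecs, edges, keep_ratio=0.5):
    """Score KG nodes against one LM vector, keep the top fraction,
    and drop every edge that touches a discarded node.

    lm_vec:    pooled LM representation (assumption: one vector per input)
    node_vecs: dict mapping node id -> embedding of the same dimension
    edges:     list of (source, target) node-id pairs
    """
    ids = list(node_vecs)
    scale = math.sqrt(len(lm_vec))
    weights = softmax([dot(lm_vec, node_vecs[n]) / scale for n in ids])
    attn = dict(zip(ids, weights))
    k = max(1, math.ceil(keep_ratio * len(ids)))
    kept = set(sorted(ids, key=lambda n: attn[n], reverse=True)[:k])
    kept_edges = [(s, d) for s, d in edges if s in kept and d in kept]
    return attn, kept, kept_edges

# Toy graph: four nodes, four directed edges (all values are made up).
lm_vec = [1.0, 0.0]
node_vecs = {"a": [2.0, 0.0], "b": [1.0, 1.0], "c": [0.0, 2.0], "d": [-1.0, 0.0]}
edges = [("a", "b"), ("b", "c"), ("c", "d"), ("a", "d")]
attn, kept, kept_edges = lm_to_kg_prune(lm_vec, node_vecs, edges)
```

With this toy input, nodes `a` and `b` score highest, so only the edge between them survives; the other three edges correspond to the connections marked with an "X" in the right-hand graph.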

**Sub-Diagram 2 (Bottom-Right, dashed box):**

This details the bidirectional attention mechanism between LM and KG representations.

* **Components:**

    * Two white boxes: `LM Rep.` (top) and `KG Rep.` (bottom).

    * Two circular nodes with a cross inside (⊗), representing attention operations.

    * Two circular nodes with a plus inside (⊕), representing summation or fusion operations.

* **Flow & Labels:**

    * An arrow from `LM Rep.` goes to the top ⊗ node.

    * An arrow from `KG Rep.` goes to the bottom ⊗ node.

    * A horizontal arrow labeled `LM to KG Att.` connects the two ⊗ nodes.

    * A horizontal arrow labeled `KG to LM Att.` connects the two ⊗ nodes in the opposite direction.

    * The output of the top ⊗ node goes to the top ⊕ node.

    * The output of the bottom ⊗ node goes to the bottom ⊕ node.

    * The original `LM Rep.` and `KG Rep.` also feed directly into their respective ⊕ nodes.

    * Final arrows point rightward from the ⊕ nodes, indicating the fused representations.
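The ⊗ and ⊕ wiring described above resembles cross-attention followed by a residual sum. The sketch below is one possible reading, under stated assumptions: each ⊗ is treated as scaled dot-product attention without learned projections, `LM to KG Att.` is taken to mean that LM tokens act as queries over KG nodes (and the reverse for `KG to LM Att.`), and each ⊕ is an element-wise addition. The names `attend` and `bidirectional_fuse` are hypothetical.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend(queries, keys_values):
    """For each query row, return the attention-weighted average of the
    rows in keys_values (the same rows serve as keys and as values)."""
    dim = len(queries[0])
    out = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim)
                  for k in keys_values]
        w = softmax(scores)
        out.append([sum(w[j] * keys_values[j][d] for j in range(len(keys_values)))
                    for d in range(dim)])
    return out

def add(xs, ys):
    """Row-wise element-wise sum, standing in for the ⊕ nodes."""
    return [[a + b for a, b in zip(r1, r2)] for r1, r2 in zip(xs, ys)]

def bidirectional_fuse(lm_rep, kg_rep):
    lm_ctx = attend(lm_rep, kg_rep)  # LM tokens attend over KG nodes
    kg_ctx = attend(kg_rep, lm_rep)  # KG nodes attend over LM tokens
    # Residual connection: the original representations feed the ⊕ nodes too.
    return add(lm_rep, lm_ctx), add(kg_rep, kg_ctx)

# Toy input: 2 LM tokens and 3 KG nodes, embedding dimension 2.
lm_rep = [[1.0, 0.0], [0.0, 1.0]]
kg_rep = [[1.0, 1.0], [2.0, 0.0], [0.0, 2.0]]
fused_lm, fused_kg = bidirectional_fuse(lm_rep, kg_rep)
```

Each fused output keeps the shape of its input (one row per token or node), which is what allows such a block to be stacked inside a joint reasoning layer.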

**Embedded Knowledge Graph Icon (Center-Bottom, dashed box):**

* A small graph icon with orange, light blue, and green nodes connected by arrows. This icon is the source input for the `KG Encoder`.

### Detailed Analysis

The diagram describes a multi-stage pipeline:

1. **Input Encoding:** The input "Question <SEP> Option" is processed by the `LLM Encoder`. Simultaneously, a knowledge graph structure is processed by the `KG Encoder`.

2. **Joint Reasoning:** The outputs from both encoders are fed into the `Joint Reasoning Layer`. This is the core fusion module.

3. **Attention Mechanisms (Detailed on the right):**

    * **LM to KG Attention (Top-Right):** This mechanism lets the language model representations attend to relevant parts of the knowledge graph. Low-relevance parts of the graph are then pruned, shown in the diagram by the crossed-out connections.

    * **Bidirectional Attention (Bottom-Right):** This shows the interaction in more detail: `LM Rep.` and `KG Rep.` attend to each other (`LM to KG Att.` and `KG to LM Att.`), and the attended features are combined with the original representations via summation (⊕).

4. **Dynamic Pruning:** The output of the joint reasoning layer undergoes `Dynamic Pruning`, likely to refine the reasoning path or remove noise before final inference.

5. **Output:** The final `Answer` is generated.
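The input format and the final step of the pipeline can be sketched as a scoring loop. The diagram does not say how candidate options are compared, so this sketch assumes each option is scored separately and the scores are normalized with a softmax. `build_inputs`, `pick_answer`, and `toy_score` are hypothetical names; `toy_score` is only a word-overlap stand-in for the full encoder, joint reasoning, and pruning stack.

```python
import math

SEP = "<SEP>"

def build_inputs(question, options):
    """Format one 'Question <SEP> Option' string per candidate option."""
    return [f"{question} {SEP} {opt}" for opt in options]

def pick_answer(question, options, score_fn):
    """Score every formatted input and return the best option
    together with the softmax-normalized scores."""
    scores = [score_fn(text) for text in build_inputs(question, options)]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    probs = [e / total for e in exps]
    best = max(range(len(options)), key=probs.__getitem__)
    return options[best], probs

def toy_score(text):
    """Placeholder scorer: counts words shared by question and option."""
    question, option = text.split(f" {SEP} ", 1)
    shared = set(question.lower().split()) & set(option.lower().split())
    return float(len(shared))

answer, probs = pick_answer(
    "where do fish live",
    ["in water where fish swim", "in the sky"],
    toy_score,
)
```

Passing the scorer in as `score_fn` keeps the selection logic independent of the model, so the placeholder can be swapped for a real scoring function without touching `pick_answer`.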

### Key Observations

* **Color Coding:** Colors are used consistently: orange for the question text and LM-related nodes, light blue for KG-related elements and the pruning module, green for the option text, the final answer, and a highlighted node, and yellow for the LLM Encoder.

* **Flow Direction:** The primary data flow is upward from input to output. The sub-diagrams on the right provide lateral, detailed explanations of internal mechanisms.

* **Pruning Visualization:** The top-right sub-diagram explicitly visualizes the pruning concept by showing connections being "crossed out" after attention.

* **Modular Design:** The architecture is highly modular, with clear separation between encoding, reasoning, attention, and pruning stages.

### Interpretation

This diagram represents a neuro-symbolic architecture that grounds a language model's reasoning in a structured knowledge graph for question answering. Its distinguishing features appear to be the **bidirectional attention mechanisms** between the LM and KG representations, coupled with a **dynamic pruning step**.

* **What it demonstrates:** The system doesn't just use the KG as a static lookup; it actively reasons over the graph structure. The "LM to KG Attention" allows the model to focus on relevant KG subgraphs for a given question, while the bidirectional attention in the lower sub-diagram suggests a deep, iterative fusion of textual and structural knowledge.

* **Relationships:** The `Joint Reasoning Layer` is the central hub where the two knowledge sources (textual from LM, structural from KG) interact. The `Dynamic Pruning` module acts as a filter, ensuring the final answer inference is based on the most salient information extracted through this joint reasoning.

* **Purpose:** Compared to using an LLM alone, this architecture likely aims to improve answer accuracy, explainability (by tracing reasoning through the KG), and efficiency (via pruning). Coupling the LLM tightly with a structured knowledge source is presumably meant to reduce hallucination and improve factual grounding.
