```markdown

## Diagram: Knowledge Graph-Based Question Answering System Architecture

### Overview

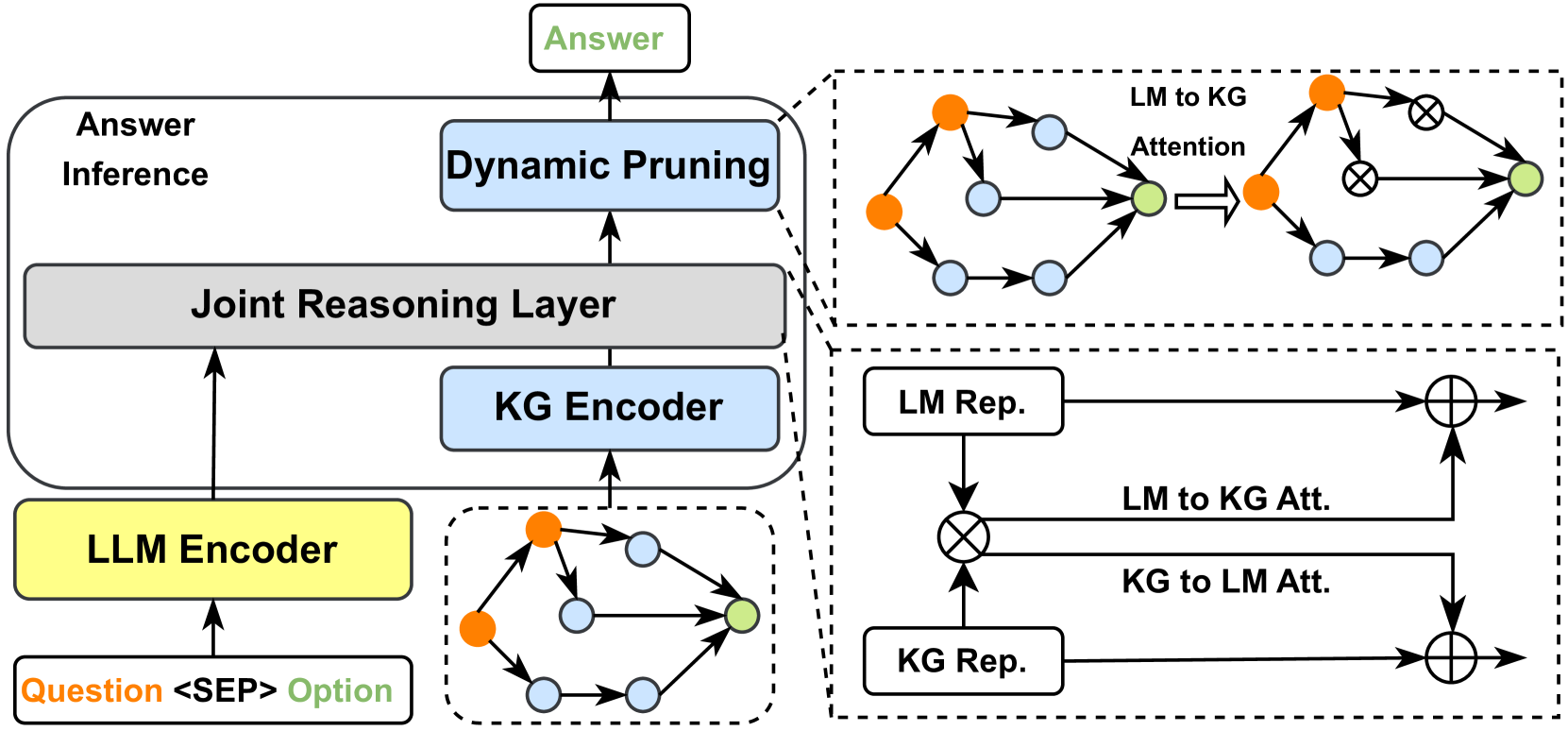

The diagram illustrates a multi-stage pipeline for a question-answering system that integrates large language models (LLMs) with knowledge graphs (KGs). It includes components for encoding, joint reasoning, dynamic pruning, and answer inference, with explicit attention mechanisms between language and knowledge representations.

### Components/Axes

- **Key Components**:

  - **LLM Encoder**: Processes input questions and options (orange/green nodes).

  - **KG Encoder**: Encodes knowledge graph data (blue nodes).

  - **Joint Reasoning Layer**: Combines LLM and KG representations.

  - **Dynamic Pruning**: Refines intermediate results.

  - **Answer Inference**: Finalizes the answer.

- **Attention Mechanisms**:

  - **LM to KG Attention**: Cross-attention from language representations to knowledge representations.

  - **KG to LM Attention**: Cross-attention in the reverse direction, from knowledge representations to language representations.

  - **Self-Attention Blocks**: Applied separately within the LLM representations and within the KG representations.

### Detailed Analysis

1. **Input Flow**:

   - **`Question <SEP> Option`**: The input text pairs the question with an answer option, joined by a `<SEP>` separator token (orange/green nodes).

   - **LLM Encoder**: Generates language model representations (`LM Rep.`).

   - **KG Encoder**: Processes knowledge graph data into knowledge graph representations (`KG Rep.`).

2. **Joint Reasoning**:

   - **Cross-Attention**:

     - `LM Rep.` → `KG Att.` (orange → blue edges).

     - `KG Rep.` → `LM Att.` (blue → orange edges).

   - **Self-Attention**:

     - LLM representations refine