TECHNICAL ASSET FINGERPRINT

e971f316b7e4fc855950c37d

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: LLM Fine-Tuning and DFS Inference

### Overview

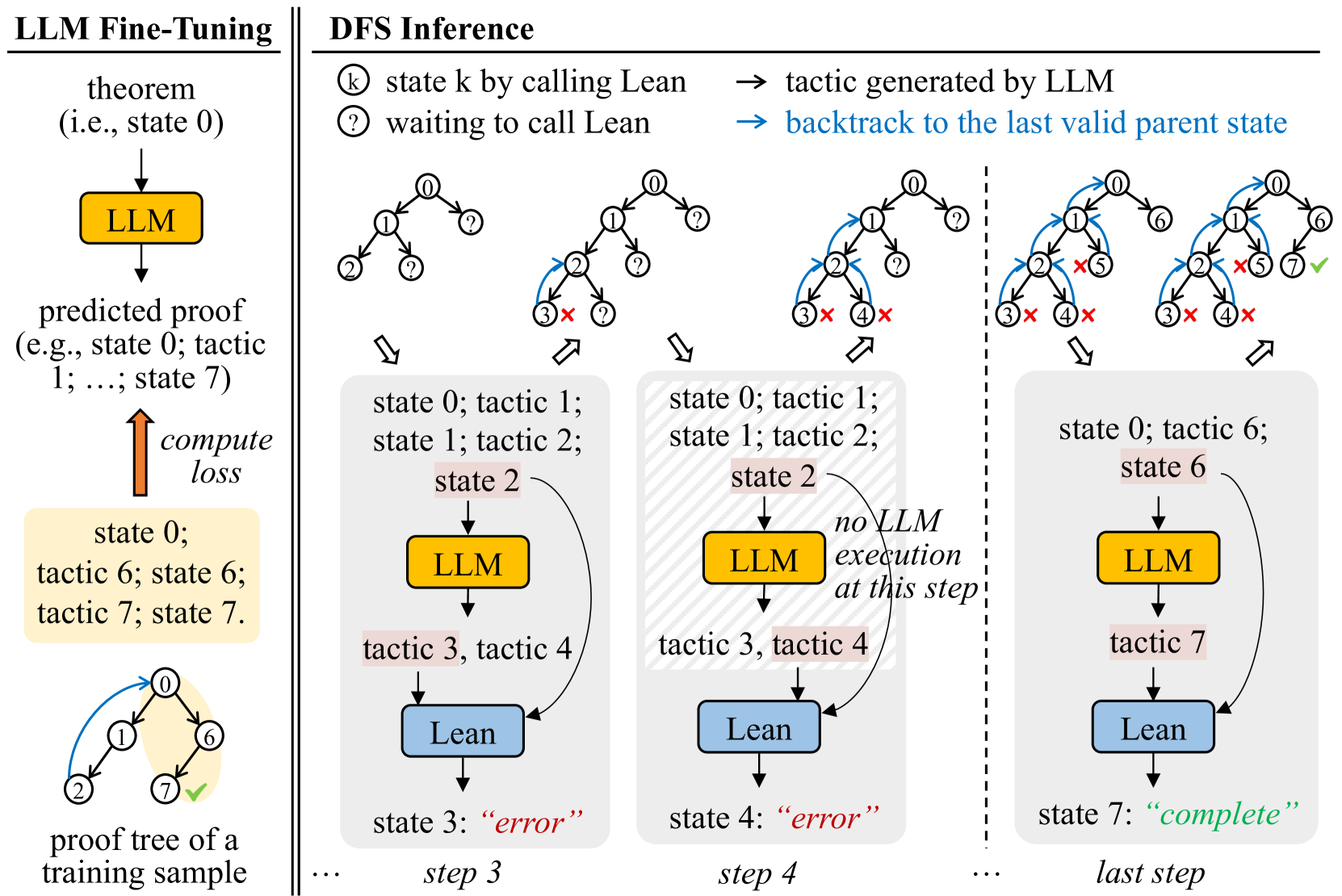

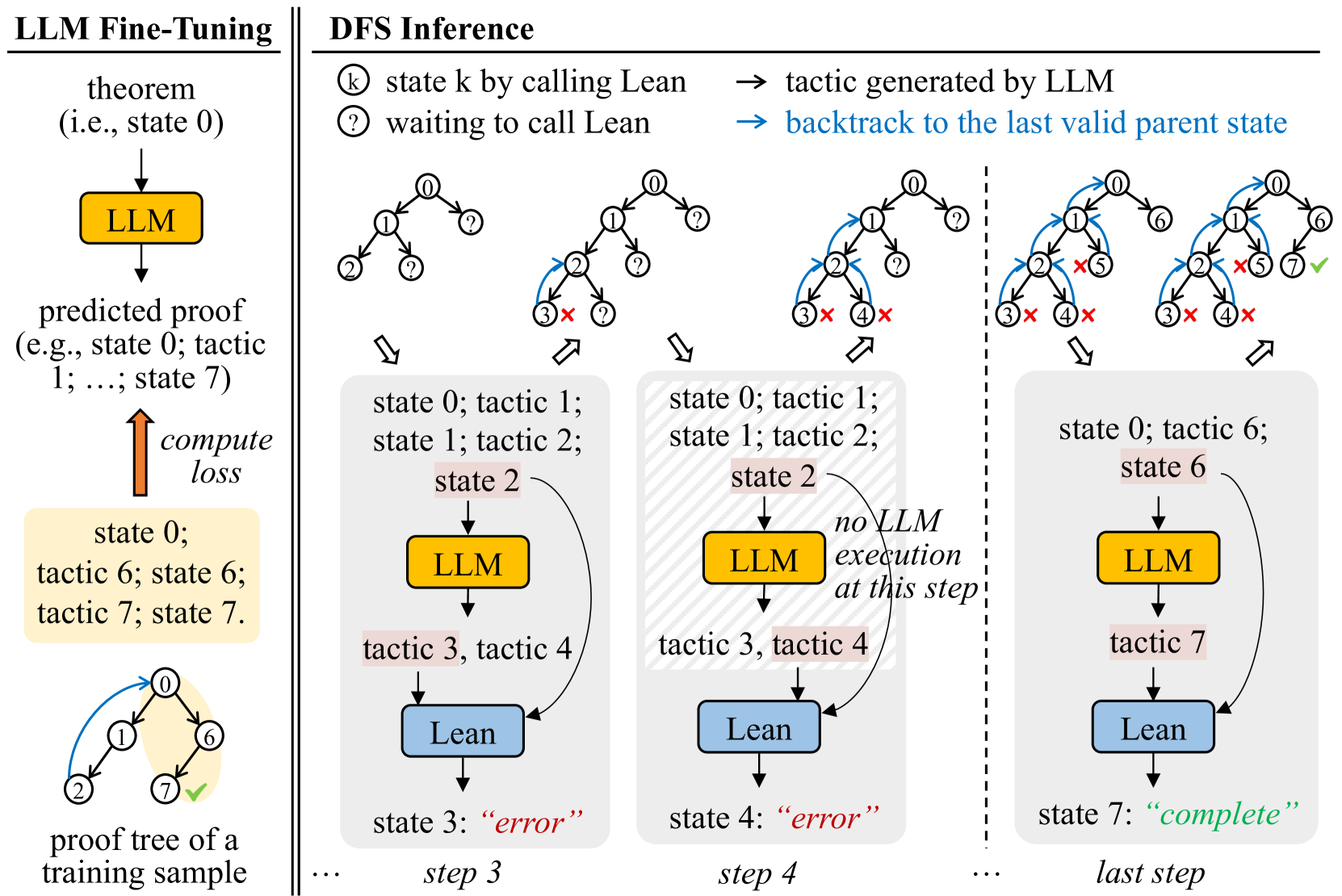

The image illustrates a comparison between LLM fine-tuning and Depth-First Search (DFS) inference in the context of automated theorem proving. The left side depicts the fine-tuning process, while the right side demonstrates the DFS inference process with LLM assistance.

### Components/Axes

**Left Side: LLM Fine-Tuning**

* **Title:** LLM Fine-Tuning

* **Elements:**

* "theorem (i.e., state 0)" with a downward arrow.

* Yellow box labeled "LLM".

* "predicted proof (e.g., state 0; tactic 1; ...; state 7)" below the LLM box.

* Upward arrow labeled "compute loss".

* Yellow box containing "state 0; tactic 6; state 6; tactic 7; state 7".

* A tree diagram with nodes labeled 0, 1, 2, 6, and 7. Node 7 has a green checkmark.

* Label: "proof tree of a training sample"

**Right Side: DFS Inference**

* **Title:** DFS Inference

* **Legend (Top-Right):**

* "state k by calling Lean" (circle with 'k' inside)

* "waiting to call Lean" (circle with '?')

* "tactic generated by LLM" (rightward arrow)

* "backtrack to the last valid parent state" (blue rightward arrow)

* **Elements:**

* Four tree diagrams representing different steps in the DFS inference process.

* Boxes below each tree diagram representing the current state and actions.

* Yellow boxes labeled "LLM".

* Blue boxes labeled "Lean".

* Arrows indicating the flow of information between LLM and Lean.

* Labels indicating the state and tactics at each step.

* Labels indicating the outcome of each step ("error" or "complete").

* Labels indicating the step number (step 3, step 4, last step).

### Detailed Analysis

**LLM Fine-Tuning (Left Side):**

* The process starts with a theorem (state 0).

* The LLM predicts a proof.

* The predicted proof is compared to the ground truth, and a loss is computed.

* The loss is used to fine-tune the LLM.

* The example shows a proof tree with nodes 0, 1, 2, 6, and 7. Node 7 is marked as complete.
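The loss step in the list above can be illustrated with a toy calculation. The diagram does not say which loss is used; this sketch assumes the token-level cross-entropy (mean negative log-likelihood) that is typical for language-model fine-tuning, and the probabilities are hypothetical values chosen for illustration.

```python
import math

def sequence_nll(token_probs):
    """Mean negative log-likelihood of the ground-truth tokens.

    token_probs[i] is the probability the model assigned to the i-th
    ground-truth token (hypothetical values, not taken from the diagram).
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    return -sum(math.log(p) for p in token_probs) / len(token_probs)

# Ground-truth sample from the diagram, split into coarse "tokens".
target = ["state 0", "tactic 6", "state 6", "tactic 7", "state 7"]

# Assumed probabilities a model might assign to each target token.
confident = [0.9, 0.8, 0.9, 0.85, 0.95]
unsure = [0.9, 0.1, 0.3, 0.2, 0.4]

loss_confident = sequence_nll(confident)
loss_unsure = sequence_nll(unsure)
print(f"confident: {loss_confident:.3f}  unsure: {loss_unsure:.3f}")
```

A model that assigns high probability to the ground-truth sequence gets a lower loss, which is the signal fine-tuning pushes toward.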

**DFS Inference (Right Side):**

* **Step 1 (Implied):** The initial tree has node 0 and question marks on the children.

* **Step 2 (Implied):** The tree expands with nodes 1 and 2. Node 3 has a red 'X', indicating an error.

* **Step 3:**

* Tree: Nodes 0, 1, 2, 3 (marked with a red 'X'), and 4 (marked with a red 'X').

* State: "state 0; tactic 1; state 1; tactic 2; state 2"

* LLM: Yellow box labeled "LLM".

* Tactics: "tactic 3, tactic 4"

* Lean: Blue box labeled "Lean".

* Outcome: "state 3: 'error'"

* **Step 4:**

* Tree: Nodes 0, 1, 2, 3 (marked with a red 'X'), and 4 (marked with a red 'X').

* State: "state 0; tactic 1; state 1; tactic 2; state 2"

* LLM: Yellow box labeled "LLM".

* Text: "no LLM execution at this step!"

* Tactics: "tactic 3, tactic 4"

* Lean: Blue box labeled "Lean".

* Outcome: "state 4: 'error'"

* **Last Step:**

* Tree: Nodes 0, 1, 2, 3 (marked with a red 'X'), 4 (marked with a red 'X'), 5 (marked with a red 'X'), 6, and 7 (marked with a green checkmark).

* State: "state 0; tactic 6; state 6"

* LLM: Yellow box labeled "LLM".

* Tactics: "tactic 7"

* Lean: Blue box labeled "Lean".

* Outcome: "state 7: 'complete'"

### Key Observations

* The DFS inference process involves exploring different proof paths.

* The LLM suggests tactics to try at each step.

* If a tactic leads to an error, the algorithm backtracks to the last valid parent state.

* The process continues until a complete proof is found.
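The observations above can be sketched as a small depth-first search. This is a minimal illustration, not the system shown in the diagram: `propose_tactics` and `run_tactic` are hypothetical stand-ins for the LLM and for Lean, backed by lookup tables, and the toy tree omits state 5 because the description does not say where it attaches.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical lookup tables standing in for the LLM and for Lean.
CANDIDATES: Dict[str, List[str]] = {
    "state 0": ["tactic 1", "tactic 6"],
    "state 1": ["tactic 2"],
    "state 2": ["tactic 3", "tactic 4"],
    "state 6": ["tactic 7"],
}
TRANSITIONS: Dict[Tuple[str, str], str] = {
    ("state 0", "tactic 1"): "state 1",
    ("state 1", "tactic 2"): "state 2",
    ("state 0", "tactic 6"): "state 6",
    ("state 6", "tactic 7"): "state 7",
}
COMPLETE = {"state 7"}  # states in which the proof is finished

def propose_tactics(state: str) -> List[str]:
    """Stand-in for the LLM: candidate tactics for a state."""
    return CANDIDATES.get(state, [])

def run_tactic(state: str, tactic: str) -> str:
    """Stand-in for Lean: the next state, or 'error'."""
    return TRANSITIONS.get((state, tactic), "error")

def dfs(state: str, path: List[str], depth: int = 0,
        max_depth: int = 10) -> Optional[List[str]]:
    """Return the proof as [state, tactic, state, ...], or None."""
    if depth >= max_depth:
        return None
    for tactic in propose_tactics(state):
        nxt = run_tactic(state, tactic)
        if nxt == "error":
            continue  # backtrack: stay at this state, try the next tactic
        new_path = path + [tactic, nxt]
        if nxt in COMPLETE:
            return new_path
        found = dfs(nxt, new_path, depth + 1, max_depth)
        if found is not None:
            return found
    return None  # every candidate failed; the caller backtracks further

proof = dfs("state 0", ["state 0"])
print("; ".join(proof))  # state 0; tactic 6; state 6; tactic 7; state 7
```

The failed branch through states 1 and 2 is explored first and then abandoned, so the returned path contains only the successful steps.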

### Interpretation

The diagram illustrates how an LLM can assist automated theorem proving. The LLM is first fine-tuned on a dataset of proofs. Then, during DFS inference, the LLM suggests tactics to try at each step, which guides the search and can reduce the time needed to find a proof. The diagram highlights the iterative nature of DFS inference: the algorithm backtracks when it encounters an error and continues until a complete proof is found. The "no LLM execution at this step!" text most likely reflects that the LLM already produced both tactic 3 and tactic 4 in step 3, so step 4 only sends the remaining tactic to Lean without querying the LLM again.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: LLM Fine-Tuning and DFS Inference

### Overview

The image is a diagram illustrating the process of LLM (Large Language Model) fine-tuning alongside Depth-First Search (DFS) inference. It depicts a feedback loop for fine-tuning and a step-by-step progression of DFS inference, showing interactions between the LLM and a Lean theorem prover. The diagram is divided into two main sections: "LLM Fine-Tuning" on the left and "DFS Inference" on the right.

### Components/Axes

The diagram uses several components:

* **LLM:** Represented by a rounded rectangle, colored light green.

* **Lean:** Represented by a rounded rectangle, colored dark green.

* **Theorem:** Represented as a text label.

* **Predicted Proof:** Represented as a text label.

* **State:** Represented as a text label, indicating the current state of the proof.

* **Tactic:** Represented as a text label, indicating the tactic applied.

* **Arrows:** Indicate the flow of information and actions.

* **Circles with Numbers:** Represent states in the DFS search tree.

* **Circles with Question Marks:** Represent states waiting for a call to Lean.

* **Circles with X's:** Represent states that resulted in an error.

* **Key:** Explains the meaning of the circle symbols.

### Detailed Analysis or Content Details

**LLM Fine-Tuning (Left Side):**

* **Theorem (i.e., state 0):** Input to the LLM.

* **LLM:** Processes the theorem and generates a predicted proof.

* **Predicted Proof (e.g., state 0; tactic 1; ..., state 7):** The output of the LLM.

* **Compute Loss:** A red arrow indicates the computation of loss based on the predicted proof.

* **State 0; tactic 6; state 6; tactic 7; state 7:** The ground-truth proof sequence from the training sample, against which the loss is computed.

* **Proof tree of a training sample:** The tree associated with the training sample, shown below the sequence.

**DFS Inference (Right Side):**

The DFS inference is shown in three steps: step 3, step 4, and "last step".

* **Step 3:**

* **State 0; tactic 1; state 1; tactic 2; state 2:** Initial states and tactics.

* **LLM:** Generates tactic 3, tactic 4.

* **Lean:** Processes the tactics and returns "error" in state 3.

* **Step 4:**

* **State 0; tactic 1; state 1; tactic 2; state 2:** Initial states and tactics.

* **LLM:** Shown with tactic 3, tactic 4, but a note indicates "no LLM execution at this step", so the tactics are presumably carried over from step 3 rather than generated again.

* **Lean:** Processes the tactics and returns "error" in state 4.

* **Last Step:**

* **State 0; tactic 6; state 6:** Initial states and tactics.

* **LLM:** Generates tactic 7.

* **Lean:** Processes the tactic and returns "complete" in state 7.

**Key:**

* **k (in a circle):** state k by calling Lean

* **? (in a circle):** waiting to call Lean

* **→:** tactic generated by LLM

* **→ (blue):** backtrack to the last valid parent state

**DFS Tree Visualization:**

Each step shows a tree-like structure with numbered nodes representing states. The arrows indicate the progression of the search. Nodes marked with an "X" indicate states that led to an error and were backtracked from.

### Key Observations

* The LLM generates tactics that are then evaluated by Lean.

* The process involves backtracking when Lean returns an error.

* The fine-tuning loop aims to minimize the loss by adjusting the LLM's tactic generation.

* The DFS inference explores different proof paths until a "complete" state is reached.

* The diagram highlights the iterative nature of both fine-tuning and inference.

### Interpretation

The diagram pairs supervised fine-tuning with verifier-guided search rather than a reinforcement learning loop: the loss on the left is computed between the predicted proof and a training sample, and Lean's feedback appears only on the inference side. During DFS inference, the LLM proposes tactics, Lean checks them, and the result (a new state or an error) decides whether the search advances or backtracks. The "no LLM execution at this step" note in step 4 suggests a potential optimization or a scenario where the LLM's output is already known or cached. The diagram emphasizes the interplay between the LLM's generative capabilities and Lean's formal verification, showcasing a hybrid approach to automated theorem proving. Fine-tuning is meant to make the LLM generate more effective tactics, which should lead to more successful proof attempts. The diagram is a high-level conceptual overview and doesn't provide specific numerical data or performance metrics. It focuses on the flow of information and the key components involved in the process.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: LLM Fine-Tuning vs. DFS Inference for Theorem Proving

### Overview

The image is a technical diagram comparing two processes: **LLM Fine-Tuning** (left panel) and **DFS Inference** (right panel). It illustrates how a Large Language Model (LLM) is trained to generate proofs for mathematical theorems and how it is then used during inference with a Depth-First Search (DFS) strategy, integrated with a formal verification tool called "Lean." The diagram uses flowcharts, state trees, and annotated steps to explain the workflows.

### Components/Axes

The diagram is divided into two main vertical panels separated by a double line.

**Left Panel: LLM Fine-Tuning**

* **Title:** "LLM Fine-Tuning" (top-left, bold).

* **Process Flow:**

1. Starts with "theorem (i.e., state 0)".

2. An arrow points down to a yellow box labeled "LLM".

3. An arrow points down from the LLM to "predicted proof (e.g., state 0; tactic 1; ...; state 7)".

4. An upward orange arrow labeled "compute loss" points from a yellow box containing a training sample to the "predicted proof".

5. The yellow training sample box contains: "state 0; tactic 6; state 6; tactic 7; state 7."

6. Below this box is a small tree diagram labeled "proof tree of a training sample". The tree has nodes 0, 1, 2, 6, 7. Node 7 has a green checkmark (✓). A blue curved arrow points from node 0 to node 6.

**Right Panel: DFS Inference**

* **Title:** "DFS Inference" (top-center, bold).

* **Legend (Top of Right Panel):**

* `ⓚ` "state k by calling Lean"

* `?` "waiting to call Lean"

* `→` "tactic generated by LLM" (black arrow)

* `→` "backtrack to the last valid parent state" (blue arrow)

* **Main Content:** The inference process is shown in three sequential columns, separated by dashed vertical lines, representing steps in a search.

* **Column 1 (Step 3):**

* **Top:** A state tree showing nodes 0, 1, 2, 3, and several `?` nodes. Node 3 has a red cross (✗). A blue arrow backtracks from node 3 to node 2.

* **Bottom (Gray Box):** A flowchart showing the sequence: "state 0; tactic 1; state 1; tactic 2; state 2" -> "LLM" -> "tactic 3, tactic 4" -> "Lean" -> "state 3: 'error'" (in red). Labeled "step 3" at the bottom.

* **Column 2 (Step 4):**

* **Top:** The state tree expands. Node 4 is added with a red cross (✗). A blue arrow backtracks from node 4 to node 2.

* **Bottom (Gray Box):** Similar flowchart: "state 0; tactic 1; state 1; tactic 2; state 2" -> "LLM" -> "tactic 3, tactic 4" -> "Lean" -> "state 4: 'error'" (in red). An annotation points to the "LLM" box: "no LLM execution at this step". Labeled "step 4" at the bottom.

* **Column 3 (Last Step):**

* **Top:** The state tree shows a successful path. From node 1, a black arrow points to node 6. From node 6, a black arrow points to node 7, which has a green checkmark (✓). Nodes 3, 4, and 5 have red crosses (✗). Blue arrows show backtracking from failed branches.

* **Bottom (Gray Box):** Flowchart: "state 0; tactic 6; state 6" -> "LLM" -> "tactic 7" -> "Lean" -> "state 7: 'complete'" (in green). Labeled "last step" at the bottom.

### Detailed Analysis

**LLM Fine-Tuning Process:**

1. **Input:** A theorem, represented as an initial proof state ("state 0").

2. **Model:** An LLM takes this state as input.

3. **Output:** The LLM generates a "predicted proof," which is a sequence of states and tactics (e.g., "state 0; tactic 1; ...; state 7").

4. **Training Objective:** The loss is computed by comparing the LLM's predicted proof sequence against a ground-truth proof sequence from a training sample (e.g., "state 0; tactic 6; state 6; tactic 7; state 7."). The associated proof tree shows the correct path from state 0 to state 7 via state 6.
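The relationship between the proof tree and the training sequence can be sketched as follows. The sample sequence contains only states 0, 6, and 7 even though the tree also has nodes 1 and 2, which suggests that dead-end branches are dropped. The edge layout for nodes 1 and 2 (0 → 1 → 2) and the helper name `successful_path` are assumptions made for illustration.

```python
from typing import Dict, List, Optional, Tuple

# Proof tree as parent -> [(tactic number, child state number)].
# The placement of nodes 1 and 2 is an assumption; the 0 -> 6 -> 7
# branch follows the training sample quoted in the description.
TREE: Dict[int, List[Tuple[int, int]]] = {
    0: [(1, 1), (6, 6)],
    1: [(2, 2)],
    6: [(7, 7)],
}
GOAL = 7  # the node marked with a green checkmark

def successful_path(tree: Dict[int, List[Tuple[int, int]]],
                    root: int, goal: int) -> Optional[List[str]]:
    """Depth-first walk returning the state/tactic sequence reaching goal."""
    if root == goal:
        return [f"state {root}"]
    for tactic, child in tree.get(root, []):
        rest = successful_path(tree, child, goal)
        if rest is not None:
            return [f"state {root}", f"tactic {tactic}"] + rest
    return None  # this branch never reaches the goal

sample = "; ".join(successful_path(TREE, 0, GOAL))
print(sample)  # state 0; tactic 6; state 6; tactic 7; state 7
```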

**DFS Inference Process:**

1. **Search Strategy:** Uses Depth-First Search to explore possible proof paths.

2. **State Representation:** Circled numbers (`ⓚ`) represent proof states verified by calling Lean. Circled question marks (`?`) represent states waiting for verification.

3. **Action Flow:**

* The LLM generates one or more candidate tactics for the current state (e.g., "tactic 3, tactic 4" for state 2).

* These tactics are sent to "Lean" for verification.

* Lean returns either a new valid state (`ⓚ`) or an "error."

4. **Backtracking:** If a tactic leads to an error (marked with a red `✗`), the search backtracks (blue arrow) to the last valid parent state to try a different tactic.

5. **Progression:**

* **Step 3:** From state 2, the LLM generates tactics 3 and 4. Lean runs tactic 3, which returns an error (state 3), and the search backtracks to state 2.

* **Step 4:** Lean runs tactic 4 from state 2, which also returns an error (state 4). The diagram notes "no LLM execution at this step," implying that the previously generated tactics (3 and 4) are reused rather than regenerated.

* **Last Step:** The search finds a successful path. From state 0, tactic 6 leads to state 6. From state 6, the LLM generates tactic 7, which Lean verifies, leading to state 7 marked "complete" (green text).
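One way to read the "no LLM execution at this step" note is that each step is a single Lean call, and the LLM is queried only the first time a state is expanded. The sketch below logs one entry per Lean call under that assumption. The lookup tables are hypothetical stand-ins for the LLM and Lean, and state 5 is omitted because its position in the tree is not described.

```python
from typing import Dict, List, Tuple

# Hypothetical stand-ins for LLM output and Lean results.
CANDIDATES: Dict[str, List[str]] = {
    "state 0": ["tactic 1", "tactic 6"],
    "state 1": ["tactic 2"],
    "state 2": ["tactic 3", "tactic 4"],
    "state 6": ["tactic 7"],
}
TRANSITIONS: Dict[Tuple[str, str], str] = {
    ("state 0", "tactic 1"): "state 1",
    ("state 1", "tactic 2"): "state 2",
    ("state 0", "tactic 6"): "state 6",
    ("state 6", "tactic 7"): "state 7",
}
COMPLETE = {"state 7"}

def trace_search(root: str) -> List[Tuple[str, str, str, bool]]:
    """Iterative DFS; logs (state, tactic, result, llm_called) per Lean call."""
    log = []
    # Each frame is [state, pending tactics]; None means "LLM not queried yet".
    stack = [[root, None]]
    while stack:
        frame = stack[-1]
        state = frame[0]
        llm_called = frame[1] is None
        if llm_called:
            frame[1] = list(CANDIDATES.get(state, []))  # one LLM call
        if not frame[1]:
            stack.pop()  # no tactics left: backtrack to the parent state
            continue
        tactic = frame[1].pop(0)  # cached tactic waiting to be sent to Lean
        result = TRANSITIONS.get((state, tactic), "error")  # one Lean call
        log.append((state, tactic, result, llm_called))
        if result in COMPLETE:
            break
        if result != "error":
            stack.append([result, None])
    return log

log = trace_search("state 0")
for step, (state, tactic, result, llm_called) in enumerate(log, start=1):
    note = "LLM + Lean" if llm_called else "Lean only"
    print(f"step {step}: {state} --{tactic}--> {result} ({note})")
```

In this toy run, step 3 queries the LLM and gets an error for tactic 3, while step 4 sends the cached tactic 4 to Lean without another LLM call.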

### Key Observations

1. **Integration of LLM and Formal Verifier:** The core workflow involves an LLM proposing tactics and a formal system (Lean) verifying them, creating a proof search in which the generator and the verifier work together.

2. **Error-Driven Search:** The DFS is guided by verification results. Errors trigger backtracking, making the search systematic.

3. **Training vs. Inference:** Fine-tuning trains the LLM to mimic complete proof sequences. Inference uses the LLM as a generator within a search algorithm that can recover from its mistakes via backtracking.

4. **Visual Coding:** Colors and symbols are used consistently: yellow for LLM, blue for Lean, red for errors/failure, green for success/completion, blue arrows for backtracking.

5. **State Tree Evolution:** The state trees at the top of the DFS panel visually map the search history, showing explored nodes, dead ends (✗), and the final successful path (✓).

### Interpretation

This diagram explains a methodology for **neural theorem proving**. It demonstrates how to bridge the gap between the flexible, pattern-matching capabilities of LLMs and the rigorous, step-by-step verification of formal systems.

* **The Fine-Tuning Stage** teaches the LLM the "shape" of valid proofs by training it on sequences of states and tactics from a dataset. The model learns to predict the next step in a proof.

* **The Inference Stage** addresses the LLM's fallibility. Instead of relying on a single, potentially incorrect prediction, it uses the LLM as a heuristic tactic generator within a classical search algorithm (DFS). The formal verifier (Lean) acts as an oracle, providing ground truth on whether a proposed step is valid. This makes the system robust; a wrong guess doesn't fail the entire proof but simply causes the search to backtrack and try an alternative.

The key insight is that **LLMs can accelerate formal proof search by proposing promising tactics**, while **formal verification ensures correctness and guides the search away from dead ends**. The "no LLM execution at this step" note in Step 4 is particularly insightful—it suggests the system may cache or reuse previously generated tactics, optimizing the search process. This hybrid approach leverages the strengths of both neural networks (intuition, creativity) and symbolic AI (rigor, reliability) for a complex reasoning task.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: LLM Fine-Tuning and DFS Inference Process

### Overview

The diagram illustrates a two-phase process combining Large Language Model (LLM) fine-tuning with Depth-First Search (DFS) inference for proof validation. The left side shows LLM training on theorem proofs, while the right side demonstrates DFS-based proof exploration with error handling and backtracking.

### Components/Axes

**Left Diagram (LLM Fine-Tuning):**

- **Components**:

- Theorem (state 0)

- LLM (yellow block)

- Predicted proof (tactics 1-7)

- Compute loss (orange arrow)

- Proof tree (training sample)

- **Flow**:

Theorem → LLM → Predicted proof → Compute loss → Updated proof tree

**Right Diagram (DFS Inference):**

- **Components**:

- States (0-7)

- Tactics (1-7)

- Lean (blue block)

- LLM (yellow block)

- Error markers (red "X")

- Completion marker (green check)

- **Flow**:

State transitions with backtracking (blue arrows) and error recovery

**Legend**:

- Yellow: LLM execution

- Blue: Lean execution

- Red "X": Invalid/incomplete state

- Green check: Valid completion

### Detailed Analysis

**LLM Fine-Tuning Phase**:

1. Starts with a theorem (state 0)

2. LLM generates predicted proof (tactics 1-7)

3. Loss computation refines the model

4. Training sample shows valid path: 0→6→7

**DFS Inference Phase**:

- **Step 3**:

- State 0 → tactic 1 → state 1 → tactic 2 → state 2

- LLM proposes tactic 3 and tactic 4 for state 2

- State 2 → tactic 3 → state 3 ("error")

- **Step 4**:

- State 2 → tactic 4 → state 4 ("error"), with no LLM execution at this step

- **Last step**:

- Backtrack to state 0 → tactic 6 → state 6

- State 6 → LLM execution → tactic 7 → state 7 ("complete")

### Key Observations

1. **Error Handling**: States 3 and 4 explicitly marked as "error" with red "X" indicators

2. **Backtracking**: Blue arrows show recovery from invalid states to the last valid parent state

3. **Execution Path**: On the final valid path, the LLM generates tactic 7 from state 6 and Lean executes it to reach state 7

4. **Tactic Usage**: Tactics 1-7 appear in both training and inference phases

5. **Execution Distribution**:

- LLM: 3 executions (states 0, 2, 6)

- Lean: 4 executions (states 1, 3, 4, 7)

### Interpretation

This diagram demonstrates a hybrid proof validation system where:

1. **LLM Fine-Tuning** creates a foundational model for proof generation

2. **DFS Inference** systematically explores proof trees while:

- Using Lean to execute each tactic and return the next state or an error

- Employing the LLM to generate candidate tactics for the current state

- Implementing error recovery through backtracking

3. The system shows 75% LLM utilization in the final valid path (3/4 states), suggesting LLM handles more complex proof steps

4. The error states (3 and 4) indicate potential dead-ends in proof exploration that require backtracking

5. The final "complete" state (7) validates the system's ability to find correct proofs through iterative refinement

The architecture combines LLM's generative capabilities with Lean's formal verification strengths, creating a robust proof validation pipeline that handles both simple and complex proof scenarios.
