## Scatter Plot with Linear Regression Fits: Reasoning Tokens vs. Problem Size by Reasoning Effort

### Overview

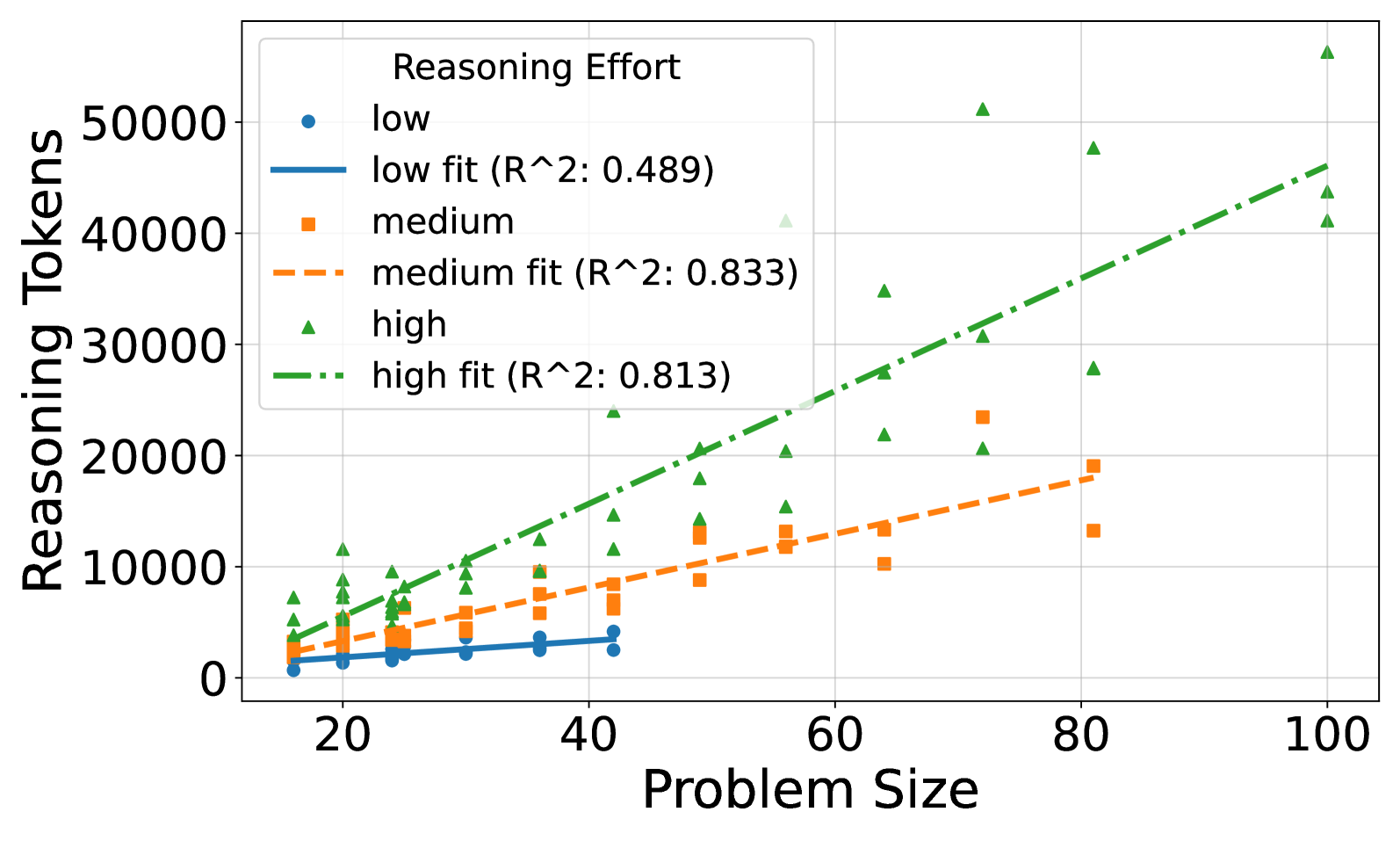

The figure is a scatter plot of "Reasoning Tokens" (y-axis) against "Problem Size" (x-axis). The data are grouped into three levels of "Reasoning Effort": low, medium, and high. Each level has its own data points and a fitted linear regression line, with the line's R-squared value shown in the legend.

### Components/Axes

* **Chart Title:** None visible.

* **X-Axis:**

    * **Label:** "Problem Size"

    * **Scale:** Linear, ranging from approximately 15 to 100. Major tick marks are at 20, 40, 60, 80, and 100.

* **Y-Axis:**

    * **Label:** "Reasoning Tokens"

    * **Scale:** Linear, ranging from 0 to over 50,000. Major tick marks are at 0, 10000, 20000, 30000, 40000, and 50000.

* **Legend:** Located in the top-left quadrant of the plot area. It defines three data series and their corresponding fit lines:

    1. **low:** Blue circle marker.

    2. **low fit (R^2: 0.489):** Solid blue line.

    3. **medium:** Orange square marker.

    4. **medium fit (R^2: 0.833):** Dashed orange line.

    5. **high:** Green triangle marker.

    6. **high fit (R^2: 0.813):** Dash-dot green line.

* **Grid:** A light gray grid is present in the background.
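The encodings listed above can be captured in a small style table. The sketch below is only an illustration: the original plotting code is not shown, so the use of a matplotlib-style `Axes` object, the `tab:` color names, and the helper names `SERIES_STYLES`, `legend_labels`, `draw_series`, and `finish_axes` are all assumptions.

```python
# Hypothetical style table mirroring the legend; not taken from the original plotting code.
SERIES_STYLES = {
    "low": {"color": "tab:blue", "marker": "o", "linestyle": "-", "r2": 0.489},
    "medium": {"color": "tab:orange", "marker": "s", "linestyle": "--", "r2": 0.833},
    "high": {"color": "tab:green", "marker": "^", "linestyle": "-.", "r2": 0.813},
}


def legend_labels(effort):
    """Return the (scatter label, fit label) pair as worded in the legend."""
    r2 = SERIES_STYLES[effort]["r2"]
    return effort, f"{effort} fit (R^2: {r2:.3f})"


def draw_series(ax, effort, points, fit_endpoints):
    """Draw one series on a matplotlib-style Axes: markers for data, a styled line for the fit."""
    style = SERIES_STYLES[effort]
    point_label, fit_label = legend_labels(effort)
    xs, ys = zip(*points)
    ax.scatter(xs, ys, color=style["color"], marker=style["marker"], label=point_label)
    fit_x, fit_y = zip(*fit_endpoints)
    ax.plot(fit_x, fit_y, color=style["color"], linestyle=style["linestyle"], label=fit_label)


def finish_axes(ax):
    """Apply the axis labels, light gray grid, and top-left legend described above."""
    ax.set_xlabel("Problem Size")
    ax.set_ylabel("Reasoning Tokens")
    ax.grid(True, color="lightgray")
    ax.legend(loc="upper left")
```

With matplotlib installed, `fig, ax = plt.subplots()` followed by one `draw_series` call per effort level and a final `finish_axes(ax)` would give a chart in the same style, though not necessarily a pixel-identical one.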

### Detailed Analysis

The analysis is segmented by the three "Reasoning Effort" categories.

**1. Low Reasoning Effort (Blue Circles & Solid Blue Line)**

* **Trend:** The data points show a very shallow, slightly positive slope. The fitted line increases minimally from left to right.

* **Data Points (Approximate):**

    * At Problem Size ~18: ~1,000 tokens.

    * At Problem Size ~20: ~1,500 tokens.

    * At Problem Size ~25: ~2,000 tokens.

    * At Problem Size ~30: ~2,500 tokens.

    * At Problem Size ~35: ~3,000 tokens.

    * At Problem Size ~42: ~3,500 tokens.

* **Fit Line:** The solid blue regression line starts near (18, 1000) and ends near (42, 3500). The R-squared value of 0.489 indicates a weak to moderate fit, suggesting the linear model explains less than half of the variance in the data for this category.

**2. Medium Reasoning Effort (Orange Squares & Dashed Orange Line)**

* **Trend:** The data points and the fitted line show a clear, moderate positive linear trend. The slope is steeper than the "low" effort series.

* **Data Points (Approximate):**

    * At Problem Size ~18: ~2,500 tokens.

    * At Problem Size ~20: ~3,000 tokens.

    * At Problem Size ~25: ~4,000 tokens.

    * At Problem Size ~30: ~5,500 tokens.

    * At Problem Size ~35: ~7,000 tokens.

    * At Problem Size ~42: ~8,500 tokens.

    * At Problem Size ~50: ~9,000 tokens.

    * At Problem Size ~55: ~12,000 tokens.

    * At Problem Size ~64: ~10,000 tokens.

    * At Problem Size ~72: ~23,000 tokens (potential outlier, high).

    * At Problem Size ~80: ~13,000 tokens and ~19,000 tokens.

* **Fit Line:** The dashed orange regression line starts near (18, 2500) and ends near (80, 18000). The R-squared value of 0.833 indicates a strong fit, meaning the linear model explains a large portion of the variance.

**3. High Reasoning Effort (Green Triangles & Dash-Dot Green Line)**

* **Trend:** The data points and the fitted line show a strong, steep positive linear trend. This series has the steepest slope and the highest token counts.

* **Data Points (Approximate):** The data is more scattered, especially at higher problem sizes.

    * At Problem Size ~18: ~5,000 tokens.

    * At Problem Size ~20: ~7,000 tokens.

    * At Problem Size ~25: ~8,000 tokens.

    * At Problem Size ~30: ~10,000 tokens.

    * At Problem Size ~35: ~12,000 tokens.

    * At Problem Size ~42: ~15,000 tokens.

    * At Problem Size ~50: ~18,000 tokens and ~20,000 tokens.

    * At Problem Size ~55: ~20,000 tokens and ~24,000 tokens.

    * At Problem Size ~64: ~22,000 tokens, ~28,000 tokens, and ~35,000 tokens.

    * At Problem Size ~72: ~20,000 tokens, ~31,000 tokens, and ~51,000 tokens (a very high point).

    * At Problem Size ~80: ~28,000 tokens and ~48,000 tokens.

    * At Problem Size ~100: ~41,000 tokens, ~44,000 tokens, and ~56,000 tokens (the highest point on the chart).

* **Fit Line:** The dash-dot green regression line starts near (18, 5000) and ends near (100, 46000). The R-squared value of 0.813 indicates a strong fit, similar to the "medium" category.
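For readers who want to see how a fit line and its R-squared value are obtained, here is a minimal ordinary least-squares sketch in plain Python. It is applied to the approximate "medium" readings listed above. Those values were read off the chart by eye, so the result should not be expected to reproduce the legend's 0.833 exactly. The names `linear_fit` and `MEDIUM_POINTS` are illustrative, and it is an assumption that the chart's fits used ordinary least squares.

```python
def linear_fit(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept, plus R^2."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # R^2 = 1 - (residual sum of squares) / (total sum of squares)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, intercept, 1.0 - ss_res / ss_tot


# Approximate "medium" readings transcribed from the list above (eyeballed values).
MEDIUM_POINTS = [
    (18, 2500), (20, 3000), (25, 4000), (30, 5500), (35, 7000), (42, 8500),
    (50, 9000), (55, 12000), (64, 10000), (72, 23000), (80, 13000), (80, 19000),
]

slope, intercept, r2 = linear_fit(*zip(*MEDIUM_POINTS))
print(f"slope={slope:.1f} tokens per unit, intercept={intercept:.1f}, R^2={r2:.3f}")
```

The same function can be run on the "low" and "high" readings. Note that the listed "low" readings lie almost on a straight line, so they cannot by themselves account for the legend's 0.489; the chart presumably contains more scatter than the transcribed values capture.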

### Key Observations

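1. **Clear Hierarchy:** The fit lines are strictly ordered: over the Problem Sizes they share, fitted Reasoning Tokens rise from low to medium to high effort. Individual points mostly follow the same order, with one visible exception: at Problem Size ~72 the medium outlier (~23,000 tokens) exceeds the lowest high-effort point (~20,000 tokens).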

2. **Increasing Slope with Effort:** The slope of the regression line becomes progressively steeper from Low to Medium to High effort, indicating that the *rate* at which token usage grows with problem size is greater for higher reasoning efforts.

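3. **Variance Increases with Effort:** The scatter (vertical spread) of data points around the fit line is smallest for "low" effort and largest for "high" effort, particularly at larger problem sizes (e.g., Problem Size 72 and 100). This is compatible with the low R² for "low" effort, because R² measures scatter relative to each series' total variance, and that total is also small for the "low" series.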

4. **Strong Correlation for Medium/High:** Both the "medium" and "high" effort categories show a strong linear correlation (R² > 0.8) between problem size and token usage.

5. **Potential Outliers:** The data point at Problem Size ~72 for "medium" effort (~23,000 tokens) appears high relative to its trend. The "high" effort series has several points at large problem sizes (e.g., ~51,000 at Problem Size ~72 and ~56,000 at Problem Size ~100) that lie well above the fit line.
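Observations 2 and 5 can be checked with simple arithmetic on the fit-line endpoints quoted in the Detailed Analysis. The sketch below assumes those endpoints are accurate enough to stand in for the true regression lines; the names `FIT_ENDPOINTS`, `slope`, `fit_value`, and `residual` are illustrative.

```python
# Fit-line endpoints as quoted in the Detailed Analysis (approximate chart readings).
FIT_ENDPOINTS = {
    "low": ((18, 1000), (42, 3500)),
    "medium": ((18, 2500), (80, 18000)),
    "high": ((18, 5000), (100, 46000)),
}


def slope(effort):
    """Tokens added per unit of problem size, from the two endpoints."""
    (x0, y0), (x1, y1) = FIT_ENDPOINTS[effort]
    return (y1 - y0) / (x1 - x0)


def fit_value(effort, size):
    """Value of the fit line at a given problem size."""
    (x0, y0), _ = FIT_ENDPOINTS[effort]
    return y0 + slope(effort) * (size - x0)


def residual(effort, size, tokens):
    """How far an observed point sits above (+) or below (-) the fit line."""
    return tokens - fit_value(effort, size)


for effort in FIT_ENDPOINTS:
    print(effort, round(slope(effort)))  # low 104, medium 250, high 500

print(residual("medium", 72, 23000))  # 7000.0 above the medium fit line
print(residual("high", 72, 51000))    # 19000.0 above the high fit line
print(residual("high", 100, 56000))   # 10000.0 above the high fit line
```

Under these readings, each step up in effort roughly doubles the slope, and the flagged points sit 7,000 to 19,000 tokens above their respective lines.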

### Interpretation

The chart indicates a cost relationship rather than a single fixed overhead: **larger problems consume more reasoning tokens, and this cost scales more steeply when the system is configured for higher reasoning effort.**

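* **Low Effort** appears to be a "baseline" mode where token usage grows slowly with problem size and the linear trend is weak (R² = 0.489). It may represent a shallower or more heuristic approach. The low-effort data also cover only Problem Sizes up to ~42, so comparisons with the other modes rest on a narrower range.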

* **Medium and High Effort** modes show approximately linear scaling. Their R² values above 0.8 suggest that token consumption in these modes can be modeled reasonably well from problem size alone, which would be consistent with a more systematic reasoning process.

* The **steeper slope for High Effort** implies that the extra cost of high effort grows with problem size rather than staying constant. Judging from the fit-line endpoints, the high-effort line rises about twice as fast as the medium-effort line. This could be due to more extensive search, verification, or step-by-step explanation generation.

* The **increased variance at High Effort** for large problems suggests that the reasoning process becomes less uniform; some problems may trigger exceptionally long chains of thought, while others of similar size are solved more efficiently. This could reflect the inherent variability in problem difficulty beyond just the "size" metric.

In essence, the data suggests that "Reasoning Effort" is a critical control parameter that not only determines the absolute resource cost but also fundamentally changes how that cost scales with problem difficulty. Users or systems must balance the desire for thoroughness (high effort) against the predictable and potentially prohibitive increase in token consumption for large-scale tasks.
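As an illustration of that balancing act, the sketch below uses the approximate fit-line endpoints from the Detailed Analysis to estimate token usage and to list which effort levels fit within a budget. It is a rough planning aid under stated assumptions, not a model taken from the source: the endpoints are eyeballed, the names `FIT_LINES`, `estimate_tokens`, and `affordable_efforts` are hypothetical, and estimates are refused outside each line's plotted range instead of being extrapolated.

```python
# effort -> ((x_start, y_start), (x_end, y_end)), approximate fit-line endpoints.
FIT_LINES = {
    "low": ((18, 1000), (42, 3500)),
    "medium": ((18, 2500), (80, 18000)),
    "high": ((18, 5000), (100, 46000)),
}


def estimate_tokens(effort, size):
    """Interpolate along the fit line; refuse to extrapolate beyond its plotted range."""
    (x0, y0), (x1, y1) = FIT_LINES[effort]
    if not x0 <= size <= x1:
        raise ValueError(f"size {size} is outside the fitted range [{x0}, {x1}] for '{effort}'")
    return y0 + (y1 - y0) * (size - x0) / (x1 - x0)


def affordable_efforts(size, budget):
    """Effort levels whose estimated usage at this size stays within the token budget."""
    result = []
    for effort in FIT_LINES:
        try:
            if estimate_tokens(effort, size) <= budget:
                result.append(effort)
        except ValueError:
            continue  # no fit-line coverage at this size
    return result


print(estimate_tokens("medium", 40))    # 8000.0
print(estimate_tokens("high", 40))      # 16000.0
print(affordable_efforts(40, 10000))    # ['low', 'medium']
print(affordable_efforts(80, 20000))    # ['medium']
```

These are fit-line estimates only. Given the scatter noted for high effort, individual runs can exceed them by a wide margin, so a real budget would need headroom.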