
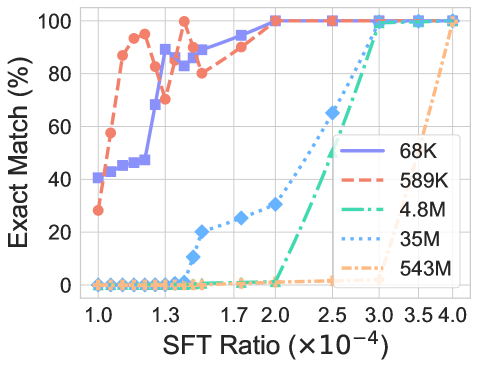

## Line Chart: Exact Match (%) vs. SFT Ratio for Different Model Sizes

### Overview

This is a line chart plotting the performance metric "Exact Match (%)" against the "SFT Ratio (×10⁻⁴)" for five different model sizes. The chart demonstrates how the exact match accuracy changes as the SFT (Supervised Fine-Tuning) ratio increases, with distinct performance curves for each model scale.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.
* **X-Axis:**
    * **Label:** `SFT Ratio (×10⁻⁴)`
    * **Scale:** Linear, ranging from 1.0 to 4.0.
    * **Major Ticks:** 1.0, 1.3, 1.7, 2.0, 2.5, 3.0, 3.5, 4.0.
* **Y-Axis:**
    * **Label:** `Exact Match (%)`
    * **Scale:** Linear, ranging from 0 to 100.
    * **Major Ticks:** 0, 20, 40, 60, 80, 100.
* **Legend:** Positioned in the **bottom-right corner** of the plot area. It contains five entries, each identified by a combination of color, line style, and marker. The dashed style and the diamond marker are each shared by more than one series:
    1. `68K` - Solid purple line with square markers.
    2. `589K` - Dashed orange-red line with circle markers.
    3. `4.8M` - Dash-dot green line with diamond markers.
    4. `35M` - Dotted blue line with diamond markers.
    5. `543M` - Dashed light orange line with diamond markers.

### Detailed Analysis

**Data Series Trends and Approximate Key Points:**

1. **68K (Purple, Solid, Squares):**
    * **Trend:** Starts highest of the five series, rises rapidly, and plateaus at 100%.
    * **Key Points:** At SFT Ratio 1.0, Exact Match ≈ 40%. Rises steeply to ≈ 90% at 1.3. Reaches 100% by approximately 2.0 and remains there.
2. **589K (Orange-Red, Dashed, Circles):**
    * **Trend:** The most volatile series at low ratios. Starts low, spikes sharply, dips, then recovers to 100%.
    * **Key Points:** At 1.0, ≈ 30%. Sharp increase to a peak of ≈ 95% near 1.2. Dips to ≈ 80% around 1.5. Recovers to 100% by 2.0 and stays there.
3. **4.8M (Green, Dash-Dot, Diamonds):**
    * **Trend:** Remains near 0% at low ratios, then rises very steeply, almost vertically.
    * **Key Points:** ≈ 0% from 1.0 to 2.0. Begins a sharp rise after 2.0, crossing 50% near 2.7. Reaches 100% by 3.0.
4. **35M (Blue, Dotted, Diamonds):**
    * **Trend:** Similar to 4.8M, but with an earlier takeoff and a more gradual initial rise.
    * **Key Points:** ≈ 0% at 1.0. First noticeable increase, to ≈ 10%, at 1.5. Rises steadily, crossing 50% near 2.3. Reaches 100% by 3.0.
5. **543M (Light Orange, Dashed, Diamonds):**
    * **Trend:** The slowest to improve. Flat at 0% over most of the range, then rises sharply at the highest ratios.
    * **Key Points:** ≈ 0% from 1.0 to 3.0. Begins a steep ascent after 3.0, reaching ≈ 50% at 3.5 and 100% at 4.0.
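The approximate key points above can be turned into crossing ratios, for example to compare where each curve first reaches 50% or 100% Exact Match. The Python sketch below is hedged. It assumes straight-line segments between the listed points, although the plotted curves may bend between markers. `SERIES` and `first_crossing` are hypothetical names. The values are transcribed from this description, not taken from the underlying experimental data, and the trailing 100% points encode "stays at 100%".

```python
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float]  # (SFT ratio in units of 1e-4, exact match %)

# Approximate key points transcribed from the description (hypothetical data);
# trailing (4.0, 100) entries encode "remains at 100%" and are an assumption.
SERIES = {
    "68K": [(1.0, 40), (1.3, 90), (2.0, 100), (4.0, 100)],
    "589K": [(1.0, 30), (1.2, 95), (1.5, 80), (2.0, 100), (4.0, 100)],
    "4.8M": [(1.0, 0), (2.0, 0), (2.7, 50), (3.0, 100), (4.0, 100)],
    "35M": [(1.0, 0), (1.5, 10), (2.3, 50), (3.0, 100), (4.0, 100)],
    "543M": [(1.0, 0), (3.0, 0), (3.5, 50), (4.0, 100)],
}


def first_crossing(points: Sequence[Point], level: float) -> Optional[float]:
    """Smallest ratio at which the piecewise-linear curve first reaches `level`."""
    x0, y0 = points[0]
    if y0 >= level:
        return x0
    for x1, y1 in points[1:]:
        if y1 >= level:
            # Here y0 < level <= y1, so y1 > y0 and the division is safe.
            return x0 + (level - y0) * (x1 - x0) / (y1 - y0)
        x0, y0 = x1, y1
    return None  # the level is never reached within the sampled range


for name, pts in SERIES.items():
    print(name, round(first_crossing(pts, 50), 2), round(first_crossing(pts, 100), 2))
```

Because only the *first* crossing is returned, a non-monotonic series such as 589K is summarized by where it first reaches a level, not by where it eventually settles.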

### Key Observations

* **Model Size vs. Data Efficiency:** Model size and data efficiency (the SFT Ratio required for high performance) are broadly inversely related. The smaller models (68K, 589K) achieve high exact match scores at much lower SFT Ratios (1.0 to 2.0) than the larger models.

* **Performance Ceiling:** All models eventually reach 100% Exact Match, but at very different SFT Ratios. The 68K and 589K models plateau at 100% from a ratio of ~2.0 onward.

* **Critical Thresholds:** Each model size has a distinct "takeoff" point where performance begins to improve rapidly. This threshold broadly increases with model size (≈1.0 or below for 68K, ≈2.0 for 4.8M, ≈3.0 for 543M), with one exception: 35M begins rising around 1.5, earlier than the smaller 4.8M model.

* **Volatility:** The 589K model is volatile at low SFT Ratios (1.0 to 1.7). It peaks and then dips before settling at 100%, whereas the other curves rise smoothly.
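The ordering claim in these observations can be checked mechanically. This minimal Python sketch uses approximate takeoff ratios read off the descriptions above. The 68K and 589K values are their first sampled ratio, since both are already well above 0% there, and the 35M value is its first noticeable rise at 1.5. `takeoff` and `ordering_violations` are illustrative names, not from the source.

```python
# Approximate takeoff ratios (units of 1e-4) keyed by parameter count;
# values are read off the chart description and are assumptions.
takeoff = {
    68e3: 1.0,    # 68K: already ~40% at the first ratio
    589e3: 1.0,   # 589K: already ~30% at the first ratio
    4.8e6: 2.0,   # 4.8M: ~0% until about 2.0
    35e6: 1.5,    # 35M: first noticeable rise at about 1.5
    543e6: 3.0,   # 543M: ~0% until about 3.0
}


def ordering_violations(thresholds):
    """Return (smaller, larger) size pairs where the larger model takes off earlier."""
    sizes = sorted(thresholds)
    return [
        (a, b)
        for i, a in enumerate(sizes)
        for b in sizes[i + 1:]
        if thresholds[b] < thresholds[a]
    ]


print(ordering_violations(takeoff))
```

Comparing every pair, rather than only neighbors, makes the check robust if more model sizes are added. Here the only pair it flags is 4.8M versus 35M.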

### Interpretation

The data suggest a trade-off, at least for this task, between model scale and the amount of fine-tuning data (measured by the SFT Ratio) required to master it. Smaller models appear more "data-efficient" here, because they reach peak performance at lower SFT Ratios. This does not necessarily make them better overall, since their absolute capacity is lower.

The delayed takeoff of the larger models (4.8M, 35M, 543M) could indicate that they need a critical mass of fine-tuning data to move from their initial, possibly more general, state to the specific target task. Every model eventually reaches 100% Exact Match, which indicates that all five sizes can solve the task within the tested range once the SFT Ratio is high enough.

The non-monotonic early curve of the 589K model might reflect instability in fine-tuning at that scale in low-data regimes, or it could be an artifact of a single experimental run. More broadly, the chart bears on how fine-tuning requirements scale with model size. In this experiment, increasing model size does not reduce the *relative* amount of fine-tuning data needed; it generally increases it.