TECHNICAL ASSET FINGERPRINT

e98834741a721634d861991c


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

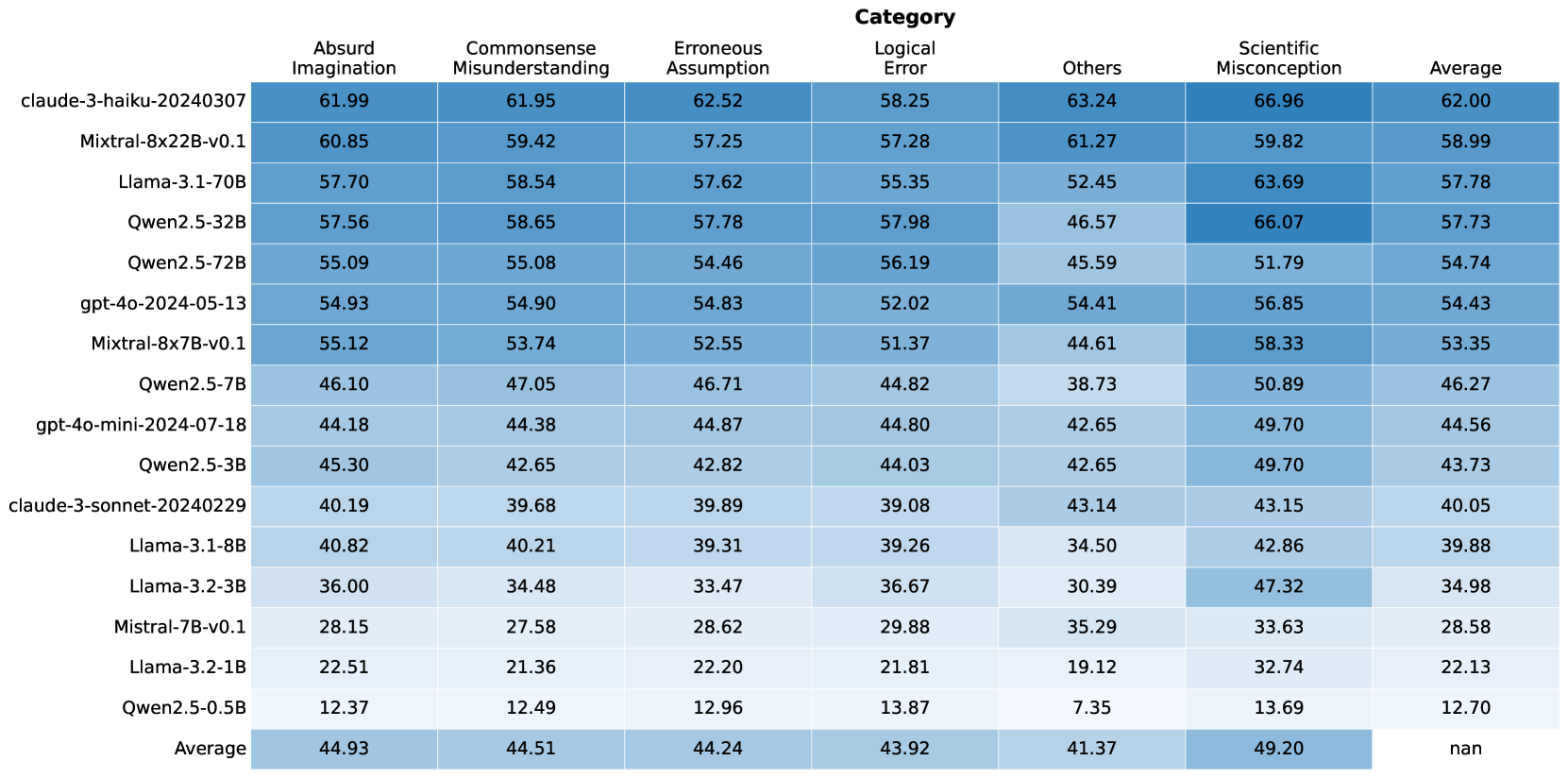

## Heatmap: LLM Performance Across Error Categories

### Overview

The image is a heatmap displaying the performance of various Large Language Models (LLMs) across different error categories. The rows represent the LLMs, and the columns represent the error categories and the average performance. The cells are color-coded, with darker shades indicating higher values.

### Components/Axes

* **Rows (LLMs):**

* claude-3-haiku-20240307

* Mixtral-8x22B-v0.1

* Llama-3.1-70B

* Qwen2.5-32B

* Qwen2.5-72B

* gpt-4o-2024-05-13

* Mixtral-8x7B-v0.1

* Qwen2.5-7B

* gpt-4o-mini-2024-07-18

* Qwen2.5-3B

* claude-3-sonnet-20240229

* Llama-3.1-8B

* Llama-3.2-3B

* Mistral-7B-v0.1

* Llama-3.2-1B

* Qwen2.5-0.5B

* Average

* **Columns (Categories):**

* Absurd Imagination

* Commonsense Misunderstanding

* Erroneous Assumption

* Logical Error

* Others

* Scientific Misconception

* Average

### Detailed Analysis or Content Details

The heatmap presents numerical values for each LLM's performance in each category. The values range from 7.35 to 66.96. The "Average" row and column provide the average performance across LLMs and across categories, respectively.

Here's a breakdown of the data, including trends and specific values:

* **Absurd Imagination:**

* Values range from 12.37 (Qwen2.5-0.5B) to 61.99 (claude-3-haiku-20240307).

* The average is 44.93.

* **Commonsense Misunderstanding:**

* Values range from 12.49 (Qwen2.5-0.5B) to 61.95 (claude-3-haiku-20240307).

* The average is 44.51.

* **Erroneous Assumption:**

* Values range from 12.96 (Qwen2.5-0.5B) to 62.52 (claude-3-haiku-20240307).

* The average is 44.24.

* **Logical Error:**

* Values range from 13.87 (Qwen2.5-0.5B) to 58.25 (claude-3-haiku-20240307).

* The average is 43.92.

* **Others:**

* Values range from 7.35 (Qwen2.5-0.5B) to 63.24 (claude-3-haiku-20240307).

* The average is 41.37.

* **Scientific Misconception:**

* Values range from 13.69 (Qwen2.5-0.5B) to 66.96 (claude-3-haiku-20240307).

* The average is 49.20.

* **Average (across categories):**

* Values range from 12.70 (Qwen2.5-0.5B) to 62.00 (claude-3-haiku-20240307).

* The average of the averages is not explicitly provided (marked as "nan").
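The ranges and averages above can be recomputed directly from the cell values. The following is a minimal Python sketch, assuming the cell values below (transcribed from the heatmap) are exact; `CATEGORIES`, `SCORES`, and `column_summary` are illustrative names, not part of the source.

```python
from statistics import mean

CATEGORIES = [
    "Absurd Imagination", "Commonsense Misunderstanding", "Erroneous Assumption",
    "Logical Error", "Others", "Scientific Misconception",
]

# Scores per model, in the column order of CATEGORIES (transcribed from the heatmap).
SCORES = {
    "claude-3-haiku-20240307":  [61.99, 61.95, 62.52, 58.25, 63.24, 66.96],
    "Mixtral-8x22B-v0.1":       [60.85, 59.42, 57.25, 57.28, 61.27, 59.82],
    "Llama-3.1-70B":            [57.70, 58.54, 57.62, 55.35, 52.45, 63.69],
    "Qwen2.5-32B":              [57.56, 58.65, 57.78, 57.98, 46.57, 66.07],
    "Qwen2.5-72B":              [55.09, 55.08, 54.46, 56.19, 45.59, 51.79],
    "gpt-4o-2024-05-13":        [54.93, 54.90, 54.83, 52.02, 54.41, 56.85],
    "Mixtral-8x7B-v0.1":        [55.12, 53.74, 52.55, 51.37, 44.61, 58.33],
    "Qwen2.5-7B":               [46.10, 47.05, 46.71, 44.82, 38.73, 50.89],
    "gpt-4o-mini-2024-07-18":   [44.18, 44.38, 44.87, 44.80, 42.65, 49.70],
    "Qwen2.5-3B":               [45.30, 42.65, 42.82, 44.03, 42.65, 49.70],
    "claude-3-sonnet-20240229": [40.19, 39.68, 39.89, 39.08, 43.14, 43.15],
    "Llama-3.1-8B":             [40.82, 40.21, 39.31, 39.26, 34.50, 42.86],
    "Llama-3.2-3B":             [36.00, 34.48, 33.47, 36.67, 30.39, 47.32],
    "Mistral-7B-v0.1":          [28.15, 27.58, 28.62, 29.88, 35.29, 33.63],
    "Llama-3.2-1B":             [22.51, 21.36, 22.20, 21.81, 19.12, 32.74],
    "Qwen2.5-0.5B":             [12.37, 12.49, 12.96, 13.87, 7.35, 13.69],
}


def column_summary(scores, categories):
    """Map each category to (min_model, min, max_model, max, mean over models)."""
    summary = {}
    for i, category in enumerate(categories):
        column = {model: row[i] for model, row in scores.items()}
        low = min(column, key=column.get)
        high = max(column, key=column.get)
        summary[category] = (low, column[low], high, column[high],
                             round(mean(list(column.values())), 2))
    return summary


summary = column_summary(SCORES, CATEGORIES)
for category, (low, lo_val, high, hi_val, avg) in summary.items():
    print(f"{category:<29} min {lo_val:5.2f} ({low})  "
          f"max {hi_val:5.2f} ({high})  mean {avg:5.2f}")
```

Every minimum falls on Qwen2.5-0.5B and every maximum on claude-3-haiku-20240307, and the rounded column means reproduce the "Average" row, which supports reading that row as an unweighted mean over the 16 models.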

### Key Observations

* **Top Performers:** The "claude-3-haiku-20240307" model has the highest value in every error category and in the average, indicating the best performance if higher values are better.

* **Bottom Performers:** The "Qwen2.5-0.5B" model has the lowest value in every error category and in the average, indicating the worst performance under the same reading.

* **Category Variation:** The "Scientific Misconception" category has the highest average value (49.20) and "Others" the lowest (41.37). Under the higher-is-better reading used above, LLMs handle scientific misconceptions comparatively well and the "Others" category comparatively poorly.

### Interpretation

The heatmap provides a comparative analysis of LLM performance across different error types. The data suggests that some models handle certain error types better than others: "Qwen2.5-32B", for example, scores 66.07 on "Scientific Misconception" but only 46.57 on "Others". Overall, "claude-3-haiku-20240307" leads every category, while "Qwen2.5-0.5B" struggles across all of them. Consistent with the higher-is-better reading, the high average for "Scientific Misconception" points to a relative strength rather than a general weakness in handling scientific concepts. This information can help developers identify areas for improvement in LLM training and design. The "nan" in place of an overall average leaves no single aggregate metric for the whole table; the source does not explain why that cell was left undefined.


EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

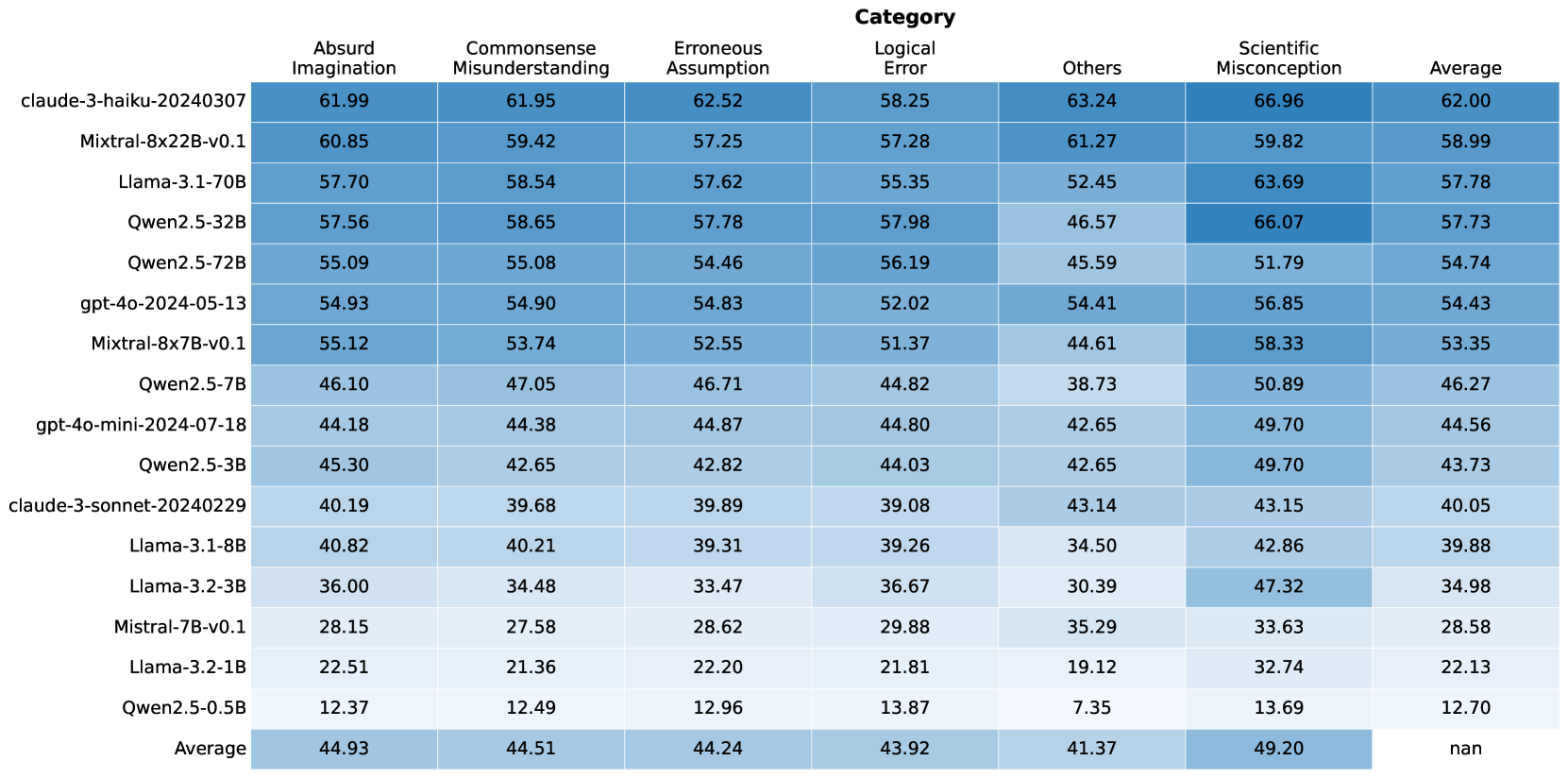

## Table: Model Performance Metrics by Category

### Overview

This image displays a table that presents performance metrics for various language models across different categories of errors or assessments. The table lists several models, each with associated numerical scores for "Absurd Imagination," "Commonsense Misunderstanding," "Erroneous Assumption," "Logical Error," "Others," and "Scientific Misconception." An "Average" row at the bottom summarizes the mean score for each category across all listed models. The data is presented with a color gradient, where darker shades of blue generally indicate higher scores.

### Components/Axes

**Row Headers (Models):**

* claude-3-haiku-20240307

* Mixtral-8x22B-v0.1

* Llama-3.1-70B

* Qwen2.5-32B

* Qwen2.5-72B

* gpt-4o-2024-05-13

* Mixtral-8x7B-v0.1

* Qwen2.5-7B

* gpt-4o-mini-2024-07-18

* Qwen2.5-3B

* claude-3-sonnet-20240229

* Llama-3.1-8B

* Llama-3.2-3B

* Mistral-7B-v0.1

* Llama-3.2-1B

* Qwen2.5-0.5B

* Average

**Column Headers (Categories):**

* Absurd Imagination

* Commonsense Misunderstanding

* Erroneous Assumption

* Logical Error

* Others

* Scientific Misconception

* Average

**Data Cells:** Numerical values representing scores for each model within each category.

### Content Details

The table contains the following data points:

| Model Name | Absurd Imagination | Commonsense Misunderstanding | Erroneous Assumption | Logical Error | Others | Scientific Misconception | Average |
| :----------------------------- | :----------------- | :--------------------------- | :------------------- | :------------ | :----- | :----------------------- | :------ |
| claude-3-haiku-20240307 | 61.99 | 61.95 | 62.52 | 58.25 | 63.24 | 66.96 | 62.00 |
| Mixtral-8x22B-v0.1 | 60.85 | 59.42 | 57.25 | 57.28 | 61.27 | 59.82 | 58.99 |
| Llama-3.1-70B | 57.70 | 58.54 | 57.62 | 55.35 | 52.45 | 63.69 | 57.78 |
| Qwen2.5-32B | 57.56 | 58.65 | 57.78 | 57.98 | 46.57 | 66.07 | 57.73 |
| Qwen2.5-72B | 55.09 | 55.08 | 54.46 | 56.19 | 45.59 | 51.79 | 54.74 |
| gpt-4o-2024-05-13 | 54.93 | 54.90 | 54.83 | 52.02 | 54.41 | 56.85 | 54.43 |
| Mixtral-8x7B-v0.1 | 55.12 | 53.74 | 52.55 | 51.37 | 44.61 | 58.33 | 53.35 |
| Qwen2.5-7B | 46.10 | 47.05 | 46.71 | 44.82 | 38.73 | 50.89 | 46.27 |
| gpt-4o-mini-2024-07-18 | 44.18 | 44.38 | 44.87 | 44.80 | 42.65 | 49.70 | 44.56 |
| Qwen2.5-3B | 45.30 | 42.65 | 42.82 | 44.03 | 42.65 | 49.70 | 43.73 |
| claude-3-sonnet-20240229 | 40.19 | 39.68 | 39.89 | 39.08 | 43.14 | 43.15 | 40.05 |
| Llama-3.1-8B | 40.82 | 40.21 | 39.31 | 39.26 | 34.50 | 42.86 | 39.88 |
| Llama-3.2-3B | 36.00 | 34.48 | 33.47 | 36.67 | 30.39 | 47.32 | 34.98 |
| Mistral-7B-v0.1 | 28.15 | 27.58 | 28.62 | 29.88 | 35.29 | 33.63 | 28.58 |
| Llama-3.2-1B | 22.51 | 21.36 | 22.20 | 21.81 | 19.12 | 32.74 | 22.13 |
| Qwen2.5-0.5B | 12.37 | 12.49 | 12.96 | 13.87 | 7.35 | 13.69 | 12.70 |
| **Average** | **44.93** | **44.51** | **44.24** | **43.92** | **41.37** | **49.20** | **nan** |

**Note:** The bottom-right cell (the "Average" column of the "Average" row) is marked "nan" (not a number), meaning the table leaves the average of averages undefined.
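Filling the "nan" cell is less obvious than it looks, because two natural definitions disagree. The following minimal Python sketch, assuming the transcribed values are exact, compares the mean of the 16 model averages with the mean of the six category averages; all names in it are illustrative. It also checks whether a model's "Average" equals the plain mean of its six category scores.

```python
from statistics import mean

# "Average" column: one reported average per model, top to bottom.
MODEL_AVERAGES = [62.00, 58.99, 57.78, 57.73, 54.74, 54.43, 53.35, 46.27,
                  44.56, 43.73, 40.05, 39.88, 34.98, 28.58, 22.13, 12.70]

# "Average" row: one reported average per category, left to right.
CATEGORY_AVERAGES = [44.93, 44.51, 44.24, 43.92, 41.37, 49.20]

# claude-3-haiku-20240307: its six category scores and its reported "Average".
HAIKU_SCORES = [61.99, 61.95, 62.52, 58.25, 63.24, 66.96]
HAIKU_REPORTED = 62.00

# Two candidate fillers for the "nan" cell.
mean_of_model_averages = mean(MODEL_AVERAGES)
mean_of_category_averages = mean(CATEGORY_AVERAGES)

# Unweighted mean of one model's categories, to compare with its reported Average.
haiku_plain_mean = mean(HAIKU_SCORES)

print(f"mean of model averages:    {mean_of_model_averages:.3f}")
print(f"mean of category averages: {mean_of_category_averages:.3f}")
print(f"haiku plain mean {haiku_plain_mean:.3f} vs reported {HAIKU_REPORTED:.2f}")
```

The two candidates come out at about 44.49 and 44.7, and claude-3-haiku-20240307's plain mean (about 62.5) differs from its reported 62.00. A plausible but unconfirmed explanation is that the per-model averages weight categories by their number of test items, in which case neither simple mean is the true overall figure.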

### Key Observations

* **Top Performers:** `claude-3-haiku-20240307` has the highest score in every category, and `Mixtral-8x22B-v0.1` ranks second overall and second in "Absurd Imagination," "Commonsense Misunderstanding," and "Others."

* **Scientific Misconception:** This is the highest-scoring category for 12 of the 16 models, with `claude-3-haiku-20240307` (66.96) and `Qwen2.5-32B` (66.07) scoring particularly high. This suggests either that models are more prone to exhibiting scientific misconceptions or that the metric for this category captures a different aspect of performance; the table does not state which.

* **Lowest Performers:** `Qwen2.5-0.5B` consistently has the lowest scores across all categories, indicating it is the least capable model among those tested. `Llama-3.2-1B` and `Mistral-7B-v0.1` also show very low scores.

* **Category Trends:**

* "Scientific Misconception" generally has higher scores than other categories for most models.

* "Others" tends to have lower scores for many models, especially for the smaller or less capable ones.

* "Absurd Imagination," "Commonsense Misunderstanding," and "Erroneous Assumption" show a similar range of scores for many models.

* "Logical Error" scores are generally in the mid-range.

* **Average Scores:** Across all models, the column averages are highest for "Scientific Misconception" (49.20), followed by "Absurd Imagination" (44.93), and lowest for "Others" (41.37).
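The per-model claims above can be counted exactly. A minimal Python sketch, assuming the transcribed table values are exact; `SCORES`, `top_category`, `sci_top`, and `sci_beats_logic` are illustrative names.

```python
CATEGORIES = [
    "Absurd Imagination", "Commonsense Misunderstanding", "Erroneous Assumption",
    "Logical Error", "Others", "Scientific Misconception",
]
SCI = CATEGORIES.index("Scientific Misconception")
LOGIC = CATEGORIES.index("Logical Error")

# Scores per model, in the column order of CATEGORIES.
SCORES = {
    "claude-3-haiku-20240307":  [61.99, 61.95, 62.52, 58.25, 63.24, 66.96],
    "Mixtral-8x22B-v0.1":       [60.85, 59.42, 57.25, 57.28, 61.27, 59.82],
    "Llama-3.1-70B":            [57.70, 58.54, 57.62, 55.35, 52.45, 63.69],
    "Qwen2.5-32B":              [57.56, 58.65, 57.78, 57.98, 46.57, 66.07],
    "Qwen2.5-72B":              [55.09, 55.08, 54.46, 56.19, 45.59, 51.79],
    "gpt-4o-2024-05-13":        [54.93, 54.90, 54.83, 52.02, 54.41, 56.85],
    "Mixtral-8x7B-v0.1":        [55.12, 53.74, 52.55, 51.37, 44.61, 58.33],
    "Qwen2.5-7B":               [46.10, 47.05, 46.71, 44.82, 38.73, 50.89],
    "gpt-4o-mini-2024-07-18":   [44.18, 44.38, 44.87, 44.80, 42.65, 49.70],
    "Qwen2.5-3B":               [45.30, 42.65, 42.82, 44.03, 42.65, 49.70],
    "claude-3-sonnet-20240229": [40.19, 39.68, 39.89, 39.08, 43.14, 43.15],
    "Llama-3.1-8B":             [40.82, 40.21, 39.31, 39.26, 34.50, 42.86],
    "Llama-3.2-3B":             [36.00, 34.48, 33.47, 36.67, 30.39, 47.32],
    "Mistral-7B-v0.1":          [28.15, 27.58, 28.62, 29.88, 35.29, 33.63],
    "Llama-3.2-1B":             [22.51, 21.36, 22.20, 21.81, 19.12, 32.74],
    "Qwen2.5-0.5B":             [12.37, 12.49, 12.96, 13.87, 7.35, 13.69],
}

# Category in which each model reaches its highest score.
top_category = {m: CATEGORIES[row.index(max(row))] for m, row in SCORES.items()}
sci_top = [m for m, cat in top_category.items() if cat == "Scientific Misconception"]

# Models scoring higher on Scientific Misconception than on Logical Error.
sci_beats_logic = [m for m, row in SCORES.items() if row[SCI] > row[LOGIC]]

print(f"{len(sci_top)}/{len(SCORES)} models peak in Scientific Misconception")
print(f"{len(sci_beats_logic)}/{len(SCORES)} score higher there than in Logical Error")
print("exceptions:", sorted(set(SCORES) - set(sci_top)))
```

It reports 12 of 16 models peaking in "Scientific Misconception" (the exceptions are Mixtral-8x22B-v0.1, Qwen2.5-72B, Mistral-7B-v0.1 and Qwen2.5-0.5B) and 14 of 16 scoring higher there than in "Logical Error".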

### Interpretation

This table provides a quantitative assessment of different language models' performance across various dimensions, likely related to their ability to generate coherent, accurate, and contextually appropriate text. The categories suggest an evaluation of how well models avoid common pitfalls in reasoning and knowledge.

* **Model Capabilities:** The stark differences in scores, especially between `claude-3-haiku-20240307` (62.00 average) and `Qwen2.5-0.5B` (12.70), highlight large variations in the capabilities of these language models. Within a model family, larger models usually score higher (for example, `Llama-3.1-70B` over `Llama-3.1-8B`), but size is not decisive: `Qwen2.5-72B` trails `Qwen2.5-32B`, and `claude-3-haiku-20240307` outscores `claude-3-sonnet-20240229` and `gpt-4o-2024-05-13`.

* **Nature of Metrics:** The high scores in "Scientific Misconception" admit two readings. If the metric counts flawed outputs, models more often reproduce common but incorrect scientific beliefs; if it measures how well models identify or avoid such misconceptions, this is their strongest category. The table itself does not say which, although the fact that the smallest models score lowest favors the second reading.

* **"Others" Category:** The generally lower scores in the "Others" category might indicate that it captures a more nuanced or difficult aspect of performance, or that it groups a broad set of less common errors.

* **Overall Performance:** As a group, the models score highest on "Scientific Misconception" (49.20) and lowest on "Others" (41.37), and these two categories also show the widest spread across models. Each model's "Average" column provides a single composite score, while the per-category breakdown shows where each model excels or struggles. The "nan" in the bottom-right cell marks the average of averages as undefined.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Data Table: Model Performance Across Error Categories

### Overview

This image presents a data table comparing the performance of various language models across six error categories: Absurd Imagination, Commonsense Misunderstanding, Erroneous Assumption, Logical Error, Others, and Scientific Misconception. A final column gives each model's average score across all categories. The table does not state the direction of the metric; because the smallest models score lowest, higher scores most plausibly indicate better handling of each error type rather than a greater tendency to produce it.

### Components/Axes

* **Rows:** Represent 16 language models: `claude-3-haiku-20240307`, `Mixtral-8x22B-v0.1`, `Llama-3.1-70B`, `Qwen2.5-32B`, `Qwen2.5-72B`, `gpt-4o-2024-05-13`, `Mixtral-8x7B-v0.1`, `Qwen2.5-7B`, `gpt-4o-mini-2024-07-18`, `Qwen2.5-3B`, `claude-3-sonnet-20240229`, `Llama-3.1-8B`, `Llama-3.2-3B`, `Mistral-7B-v0.1`, `Llama-3.2-1B`, `Qwen2.5-0.5B`.

* **Columns:** Represent error categories and the average score: `Absurd Imagination`, `Commonsense Misunderstanding`, `Erroneous Assumption`, `Logical Error`, `Others`, `Scientific Misconception`, `Average`.

* **Data:** Numerical scores representing the model's performance in each category.

* **Color Coding:** The table uses a color gradient to visually represent the scores. Darker shades of blue indicate higher scores, while lighter shades indicate lower scores.

### Detailed Analysis / Content Details

Here's a breakdown of the data, row by row, with the exact cell values and brief trend notes.

* **claude-3-haiku-20240307:** Scores are generally high across all categories. `Absurd Imagination`: 61.99, `Commonsense Misunderstanding`: 61.95, `Erroneous Assumption`: 62.52, `Logical Error`: 58.25, `Others`: 63.24, `Scientific Misconception`: 66.96, `Average`: 62.00.

* **Mixtral-8x22B-v0.1:** High scores, slightly lower than Claude-3-haiku. `Absurd Imagination`: 60.85, `Commonsense Misunderstanding`: 59.42, `Erroneous Assumption`: 57.25, `Logical Error`: 57.28, `Others`: 61.27, `Scientific Misconception`: 59.82, `Average`: 58.99.

* **Llama-3.1-70B:** Scores are generally lower than the previous two models. `Absurd Imagination`: 57.70, `Commonsense Misunderstanding`: 58.54, `Erroneous Assumption`: 57.62, `Logical Error`: 55.35, `Others`: 52.45, `Scientific Misconception`: 63.69, `Average`: 57.78.

* **Qwen2.5-32B:** `Absurd Imagination`: 57.56, `Commonsense Misunderstanding`: 58.65, `Erroneous Assumption`: 57.78, `Logical Error`: 57.98, `Others`: 46.57, `Scientific Misconception`: 66.07, `Average`: 57.73.

* **Qwen2.5-72B:** `Absurd Imagination`: 55.09, `Commonsense Misunderstanding`: 55.08, `Erroneous Assumption`: 54.46, `Logical Error`: 56.19, `Others`: 45.59, `Scientific Misconception`: 51.79, `Average`: 54.74.

* **gpt-4o-2024-05-13:** `Absurd Imagination`: 54.93, `Commonsense Misunderstanding`: 54.90, `Erroneous Assumption`: 54.83, `Logical Error`: 52.02, `Others`: 54.41, `Scientific Misconception`: 56.85, `Average`: 54.43.

* **Mixtral-8x7B-v0.1:** `Absurd Imagination`: 55.12, `Commonsense Misunderstanding`: 53.74, `Erroneous Assumption`: 52.55, `Logical Error`: 51.37, `Others`: 44.61, `Scientific Misconception`: 58.33, `Average`: 53.35.

* **Qwen2.5-7B:** `Absurd Imagination`: 46.10, `Commonsense Misunderstanding`: 47.05, `Erroneous Assumption`: 46.71, `Logical Error`: 44.82, `Others`: 38.73, `Scientific Misconception`: 50.89, `Average`: 46.27.

* **gpt-4o-mini-2024-07-18:** `Absurd Imagination`: 44.18, `Commonsense Misunderstanding`: 44.38, `Erroneous Assumption`: 44.87, `Logical Error`: 44.80, `Others`: 42.65, `Scientific Misconception`: 49.70, `Average`: 44.56.

* **Qwen2.5-3B:** `Absurd Imagination`: 45.30, `Commonsense Misunderstanding`: 42.65, `Erroneous Assumption`: 42.82, `Logical Error`: 44.03, `Others`: 42.65, `Scientific Misconception`: 49.70, `Average`: 43.73.

* **claude-3-sonnet-20240229:** `Absurd Imagination`: 40.19, `Commonsense Misunderstanding`: 39.68, `Erroneous Assumption`: 39.89, `Logical Error`: 39.08, `Others`: 43.14, `Scientific Misconception`: 43.15, `Average`: 40.05.

* **Llama-3.1-8B:** `Absurd Imagination`: 40.82, `Commonsense Misunderstanding`: 40.21, `Erroneous Assumption`: 39.31, `Logical Error`: 39.26, `Others`: 34.50, `Scientific Misconception`: 42.86, `Average`: 39.88.

* **Llama-3.2-3B:** `Absurd Imagination`: 36.00, `Commonsense Misunderstanding`: 34.48, `Erroneous Assumption`: 33.47, `Logical Error`: 36.67, `Others`: 30.39, `Scientific Misconception`: 47.32, `Average`: 34.98.

* **Mistral-7B-v0.1:** `Absurd Imagination`: 28.15, `Commonsense Misunderstanding`: 27.58, `Erroneous Assumption`: 28.62, `Logical Error`: 29.88, `Others`: 35.29, `Scientific Misconception`: 33.63, `Average`: 28.58.

* **Llama-3.2-1B:** `Absurd Imagination`: 22.51, `Commonsense Misunderstanding`: 21.36, `Erroneous Assumption`: 22.20, `Logical Error`: 21.81, `Others`: 19.12, `Scientific Misconception`: 32.74, `Average`: 22.13.

* **Qwen2.5-0.5B:** `Absurd Imagination`: 12.37, `Commonsense Misunderstanding`: 12.49, `Erroneous Assumption`: 12.96, `Logical Error`: 13.87, `Others`: 7.35, `Scientific Misconception`: 13.69, `Average`: 12.70.

### Key Observations

* **Claude-3-haiku-20240307** and **Mixtral-8x22B-v0.1** consistently score highest across most categories, suggesting they are less prone to these types of errors.

* **Qwen2.5-0.5B** scores far lower than every other model (average 12.70), followed by **Llama-3.2-1B** (22.13), indicating the highest susceptibility to these errors.

* The "Others" category has the lowest column average (41.37), though not for every model: claude-3-haiku-20240307, Mixtral-8x22B-v0.1, and Mistral-7B-v0.1 score relatively well there, which suggests this loosely defined category is harder for most models to handle.

* "Scientific Misconception" scores are higher than "Logical Error" scores for 14 of the 16 models.

* Average scores decrease steadily down the table, since the rows are ordered from highest to lowest average.
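Whether the downward trend is a finding or simply the table's sort order can be checked from the "Average" column alone. A minimal Python sketch using the 16 reported averages in table order, with the model names from the complete data tables in this document; `ROWS` and `sorted_by_average` are illustrative names.

```python
# (model, reported Average) in the order the rows appear in the table.
ROWS = [
    ("claude-3-haiku-20240307", 62.00),
    ("Mixtral-8x22B-v0.1", 58.99),
    ("Llama-3.1-70B", 57.78),
    ("Qwen2.5-32B", 57.73),
    ("Qwen2.5-72B", 54.74),
    ("gpt-4o-2024-05-13", 54.43),
    ("Mixtral-8x7B-v0.1", 53.35),
    ("Qwen2.5-7B", 46.27),
    ("gpt-4o-mini-2024-07-18", 44.56),
    ("Qwen2.5-3B", 43.73),
    ("claude-3-sonnet-20240229", 40.05),
    ("Llama-3.1-8B", 39.88),
    ("Llama-3.2-3B", 34.98),
    ("Mistral-7B-v0.1", 28.58),
    ("Llama-3.2-1B", 22.13),
    ("Qwen2.5-0.5B", 12.70),
]

averages = [avg for _, avg in ROWS]
# True when every row's average is at least as large as the next row's.
sorted_by_average = all(a >= b for a, b in zip(averages, averages[1:]))
print("rows sorted by Average, descending:", sorted_by_average)
```

The check passes, so the decreasing trend restates the row order. The individual category columns are not sorted the same way: Mixtral-8x7B-v0.1, for instance, scores 55.12 on "Absurd Imagination", above the 54.93 of gpt-4o-2024-05-13 listed before it.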

### Interpretation

This data provides a comparative analysis of language model performance on specific error types. Reading higher scores as better handling of each error type, the results suggest that claude-3-haiku-20240307 and Mixtral-8x22B-v0.1 are the most robust against these errors, while Qwen2.5-0.5B and Llama-3.2-1B are the most prone to them.

The differences across error categories are also informative. The relatively low "Others" scores may indicate that this loosely defined category is harder to handle consistently, while the relatively high "Scientific Misconception" scores suggest that models cope comparatively well with scientific content.

The color gradient highlights the relative performance of each model, making it easy to identify the best and worst performers in each category. This information is valuable for developers and researchers seeking to understand the strengths and weaknesses of different language models and to improve their performance in specific areas. Larger models generally perform better, but not universally: Qwen2.5-72B trails Qwen2.5-32B, and Mistral-7B-v0.1 trails the smaller Qwen2.5-3B and Llama-3.2-3B. Further investigation would be needed to understand the underlying reasons for these performance differences.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Table: AI Model Performance Across Error Categories

### Overview

The image displays a heatmap-style table comparing the performance of 16 different large language models (LLMs) across six specific error categories and an overall average. The performance metric is numerical, likely representing a score or accuracy percentage, with higher values indicating better performance. The table uses a blue color gradient, where darker shades correspond to higher scores.

### Components/Axes

* **Rows (Models):** 16 distinct AI models are listed vertically on the left side. They are ordered from highest to lowest average score.

* **Columns (Categories):** 7 columns are present. The first six are specific error categories, and the final column is the "Average" score across all categories for each model.

* **Header Row:** The top row contains the column labels: "Absurd Imagination", "Commonsense Misunderstanding", "Erroneous Assumption", "Logical Error", "Others", "Scientific Misconception", and "Average".

* **Footer Row:** The bottom row is labeled "Average" and provides the average score for each category across all listed models.

* **Data Cells:** Each cell contains a numerical value with two decimal places, representing the model's score in that specific category.

### Detailed Analysis

**Model List (Rows, from top to bottom):**

1. claude-3-haiku-20240307

2. Mixtral-8x22B-v0.1

3. Llama-3.1-70B

4. Qwen2.5-32B

5. Qwen2.5-72B

6. gpt-4o-2024-05-13

7. Mixtral-8x7B-v0.1

8. Qwen2.5-7B

9. gpt-4o-mini-2024-07-18

10. Qwen2.5-3B

11. claude-3-sonnet-20240229

12. Llama-3.1-8B

13. Llama-3.2-3B

14. Mistral-7B-v0.1

15. Llama-3.2-1B

16. Qwen2.5-0.5B

**Category List (Columns, from left to right):**

1. Absurd Imagination

2. Commonsense Misunderstanding

3. Erroneous Assumption

4. Logical Error

5. Others

6. Scientific Misconception

7. Average

**Complete Data Table (Model x Category Scores):**

| Model | Absurd Imagination | Commonsense Misunderstanding | Erroneous Assumption | Logical Error | Others | Scientific Misconception | Average |
| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |
| **claude-3-haiku-20240307** | 61.99 | 61.95 | 62.52 | 58.25 | 63.24 | 66.96 | 62.00 |
| **Mixtral-8x22B-v0.1** | 60.85 | 59.42 | 57.25 | 57.28 | 61.27 | 59.82 | 58.99 |
| **Llama-3.1-70B** | 57.70 | 58.54 | 57.62 | 55.35 | 52.45 | 63.69 | 57.78 |
| **Qwen2.5-32B** | 57.56 | 58.65 | 57.78 | 57.98 | 46.57 | 66.07 | 57.73 |
| **Qwen2.5-72B** | 55.09 | 55.08 | 54.46 | 56.19 | 45.59 | 51.79 | 54.74 |
| **gpt-4o-2024-05-13** | 54.93 | 54.90 | 54.83 | 52.02 | 54.41 | 56.85 | 54.43 |
| **Mixtral-8x7B-v0.1** | 55.12 | 53.74 | 52.55 | 51.37 | 44.61 | 58.33 | 53.35 |
| **Qwen2.5-7B** | 46.10 | 47.05 | 46.71 | 44.82 | 38.73 | 50.89 | 46.27 |
| **gpt-4o-mini-2024-07-18** | 44.18 | 44.38 | 44.87 | 44.80 | 42.65 | 49.70 | 44.56 |
| **Qwen2.5-3B** | 45.30 | 42.65 | 42.82 | 44.03 | 42.65 | 49.70 | 43.73 |
| **claude-3-sonnet-20240229** | 40.19 | 39.68 | 39.89 | 39.08 | 43.14 | 43.15 | 40.05 |
| **Llama-3.1-8B** | 40.82 | 40.21 | 39.31 | 39.26 | 34.50 | 42.86 | 39.88 |
| **Llama-3.2-3B** | 36.00 | 34.48 | 33.47 | 36.67 | 30.39 | 47.32 | 34.98 |
| **Mistral-7B-v0.1** | 28.15 | 27.58 | 28.62 | 29.88 | 35.29 | 33.63 | 28.58 |
| **Llama-3.2-1B** | 22.51 | 21.36 | 22.20 | 21.81 | 19.12 | 32.74 | 22.13 |
| **Qwen2.5-0.5B** | 12.37 | 12.49 | 12.96 | 13.87 | 7.35 | 13.69 | 12.70 |
| **Average** | **44.93** | **44.51** | **44.24** | **43.92** | **41.37** | **49.20** | **nan** |

### Key Observations

1. **Performance Hierarchy:** There is a clear and significant performance gradient. The top model (`claude-3-haiku-20240307`, avg 62.00) scores nearly five times as high as the bottom model (`Qwen2.5-0.5B`, avg 12.70).

2. **Category Difficulty:** The "Scientific Misconception" category has the highest average score (49.20), suggesting models find this category relatively easier. The "Others" category has the lowest average (41.37), indicating it may be the most challenging or heterogeneous.

3. **Model Consistency:** The top-performing model (`claude-3-haiku-20240307`) shows strong, consistent performance across all categories, with no score below 58.25.

4. **Notable Outliers:**

* `Qwen2.5-32B` achieves the second-highest single-category score (66.07 in Scientific Misconception) but has a relatively low score in "Others" (46.57).

* `Llama-3.2-3B` shows a significant disparity, scoring poorly in most categories but achieving a relatively high 47.32 in "Scientific Misconception".

* The "Others" category shows the widest range among models, with scores from 7.35 to 63.24.

5. **Model Family Trends:** Within the Qwen2.5 series, performance rises with model size from 0.5B through 32B (12.70 < 43.73 < 46.27 < 57.73), but the 72B model (54.74) underperforms the 32B model in the overall average.
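Items 1 and 5 can be checked with a few lines of Python. This minimal sketch assumes the parameter counts embedded in the Qwen2.5 model names and the reported "Average" values; `QWEN25_AVERAGES`, `drops`, and `top_to_bottom` are illustrative names.

```python
# Qwen2.5 family: parameter count in billions (from the model name) -> reported Average.
QWEN25_AVERAGES = {0.5: 12.70, 3: 43.73, 7: 46.27, 32: 57.73, 72: 54.74}

sizes = sorted(QWEN25_AVERAGES)
# Consecutive size pairs where the larger model scores lower than the smaller one.
drops = [(a, b) for a, b in zip(sizes, sizes[1:])
         if QWEN25_AVERAGES[b] < QWEN25_AVERAGES[a]]
print("non-monotonic steps:", drops)

# Ratio between the best and worst overall averages in the table.
top_to_bottom = 62.00 / 12.70
print(f"top/bottom ratio: {top_to_bottom:.2f}")
```

The only non-monotonic step is from 32B to 72B, and the top-to-bottom ratio is about 4.88.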

### Interpretation

This table provides a comparative benchmark of LLMs on their ability to avoid or correctly handle specific types of reasoning errors. The data suggests that model scale (parameter count) is a strong but not absolute predictor of performance: within the Qwen2.5 family, scores rise with size up to 32B but dip at 72B. Architecture and training data likely also play critical roles, as `claude-3-haiku-20240307`, whose parameter count Anthropic has not disclosed, outperforms large open-weight models such as `Mixtral-8x22B-v0.1` and `Qwen2.5-72B`.

The categorization of errors implies a focus on testing robustness and reasoning rather than just factual recall. The high average in "Scientific Misconception" might indicate that models are better trained on scientific facts or that the test set for this category is less ambiguous. Conversely, the low average and high variance in "Others" suggest this is a catch-all for complex, nuanced, or rare error types that current models struggle with consistently.

For a technical user, this table is a tool for model selection based on specific use-case vulnerabilities. For instance, if an application is prone to logical errors, one might prioritize `Qwen2.5-32B` (57.98) over `Llama-3.1-70B` (55.35), despite the latter's larger size. The "nan" in the bottom-right cell indicates the overall average of the averages was not calculated or is not meaningful in this context.
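The selection heuristic described above amounts to taking the highest score in one column over a set of candidate models. A minimal sketch, assuming higher scores are better; `LOGICAL_ERROR` and `best_model` are hypothetical names, and the dictionary holds the "Logical Error" column as transcribed.

```python
# "Logical Error" column (transcribed from the table).
LOGICAL_ERROR = {
    "claude-3-haiku-20240307": 58.25,
    "Mixtral-8x22B-v0.1": 57.28,
    "Llama-3.1-70B": 55.35,
    "Qwen2.5-32B": 57.98,
    "Qwen2.5-72B": 56.19,
    "gpt-4o-2024-05-13": 52.02,
    "Mixtral-8x7B-v0.1": 51.37,
    "Qwen2.5-7B": 44.82,
    "gpt-4o-mini-2024-07-18": 44.80,
    "Qwen2.5-3B": 44.03,
    "claude-3-sonnet-20240229": 39.08,
    "Llama-3.1-8B": 39.26,
    "Llama-3.2-3B": 36.67,
    "Mistral-7B-v0.1": 29.88,
    "Llama-3.2-1B": 21.81,
    "Qwen2.5-0.5B": 13.87,
}


def best_model(column, candidates=None):
    """Return (model, score) with the highest score; restrict to candidates if given."""
    pool = column if candidates is None else {m: column[m] for m in candidates}
    model = max(pool, key=pool.get)
    return model, pool[model]


print(best_model(LOGICAL_ERROR, ["Qwen2.5-32B", "Llama-3.1-70B"]))
print(best_model(LOGICAL_ERROR))
```

Restricted to the two models compared above, it returns Qwen2.5-32B (57.98); across all 16 models, claude-3-haiku-20240307 (58.25) ranks first.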


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Table: AI Model Performance Comparison Across Categories

### Overview

This table compares the performance of 16 AI models across six error categories (Absurd Imagination, Commonsense Misunderstanding, Erroneous Assumption, Logical Error, Others, Scientific Misconception) plus an Average column, with an Average row at the bottom. The dense models whose names state a size range from 0.5B to 72B parameters; the table also includes two Mixtral mixture-of-experts models and proprietary Claude and GPT-4o models of undisclosed size.

### Components/Axes

- **Columns**:

1. Model Name (e.g., claude-3-haiku-20240307)

2. Absurd Imagination

3. Commonsense Misunderstanding

4. Erroneous Assumption

5. Logical Error

6. Others

7. Scientific Misconception

8. Average

- **Rows**:

- 16 AI models (e.g., Llama-3.1-70B, Qwen2.5-32B)

- Average row (last row)

### Detailed Analysis

#### Model Performance

1. **Top Performers**:

- **claude-3-haiku-20240307**:

- Absurd Imagination: 61.99

- Commonsense Misunderstanding: 61.95

- Scientific Misconception: 66.96

- Average: 62.00

- **Mixtral-8x22B-v0.1**:

- Average: 58.99

- **Llama-3.1-70B**:

- Absurd Imagination: 57.70

- Scientific Misconception: 63.69

- Average: 57.78

- **Qwen2.5-32B**:

- Scientific Misconception: 66.07

- Average: 57.73

2. **Mid-Range Models**:

- **gpt-4o-2024-05-13**:

- Average: 54.43

- **Mixtral-8x7B-v0.1**:

- Average: 53.35

3. **Lower Performers**:

- **Qwen2.5-0.5B**:

- Absurd Imagination: 12.37

- Scientific Misconception: 13.69

- Average: 12.70

- **Llama-3.2-1B**:

- Average: 22.13

- **Mistral-7B-v0.1**:

- Average: 28.58

#### Category Trends

- **Highest Scores**:

- Scientific Misconception: claude-3-haiku-20240307 (66.96)

- Absurd Imagination: claude-3-haiku-20240307 (61.99)

- Others: claude-3-haiku-20240307 (63.24)

- **Lowest Scores**:

- Scientific Misconception: Qwen2.5-0.5B (13.69)

- Absurd Imagination: Qwen2.5-0.5B (12.37)

- Others: Qwen2.5-0.5B (7.35)

### Key Observations

1. **Model Size Correlation**: Larger models generally outperform smaller ones, but exceptions exist: Qwen2.5-72B (54.74) scores below Qwen2.5-32B (57.73), and Mistral-7B-v0.1 (28.58) scores below the smaller Qwen2.5-3B (43.73) and Llama-3.2-3B (34.98).

2. **Claude-3-Haiku Dominance**: Leads every category and the overall average, which may reflect its architecture or training.

3. **Qwen2.5 Variants**: Scores rise with size from 0.5B (12.70) to 32B (57.73), then dip slightly at 72B (54.74).

4. **Mistral Models**: Results diverge: Mixtral-8x7B-v0.1 sits mid-table (53.35), while Mistral-7B-v0.1 is near the bottom (28.58).

5. **Llama-3.1-70B**: Strongest in Scientific Misconception (63.69); its Absurd Imagination score (57.70) is about six points lower, though still the third-highest in that column.
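The size correlation in item 1 can be quantified with a Spearman rank correlation over the ten models whose names state a dense parameter count. A minimal sketch, assuming those counts and the reported averages; the Mixtral mixture-of-experts models and the proprietary Claude and GPT-4o models are left out because the table gives no comparable size. `average_ranks` and `pearson` are hand-written helpers so the example needs only the standard library.

```python
# Model -> (parameter count in billions from the name, reported Average).
SIZED_MODELS = {
    "Qwen2.5-0.5B": (0.5, 12.70),
    "Llama-3.2-1B": (1, 22.13),
    "Qwen2.5-3B": (3, 43.73),
    "Llama-3.2-3B": (3, 34.98),
    "Qwen2.5-7B": (7, 46.27),
    "Mistral-7B-v0.1": (7, 28.58),
    "Llama-3.1-8B": (8, 39.88),
    "Qwen2.5-32B": (32, 57.73),
    "Llama-3.1-70B": (70, 57.78),
    "Qwen2.5-72B": (72, 54.74),
}


def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


sizes = [size for size, _ in SIZED_MODELS.values()]
scores = [avg for _, avg in SIZED_MODELS.values()]
# Spearman's rho is the Pearson correlation of the (tie-averaged) ranks.
rho = pearson(average_ranks(sizes), average_ranks(scores))
print(f"Spearman rho (size vs. Average): {rho:.2f}")
```

On these ten models rho comes out at roughly 0.85, a strong but imperfect association; with so few points this is a description, not a significance test.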

### Interpretation

The data suggests that model performance is influenced by:

1. **Architecture**: Claude-3-Haiku's design, which is not publicly documented, may contribute to its lead.

2. **Training Data**: Larger models may have access to more diverse datasets, improving generalization.

3. **Efficiency vs. Power**: Smaller models use fewer resources but generally score lower (Mistral-7B-v0.1 averages 28.58), while larger models such as Llama-3.1-70B (57.78) trade efficiency for capability.

4. **Task Specificity**: Claude-3-Haiku leads both Scientific Misconception (66.96) and Absurd Imagination (61.99), categories that plausibly demand nuanced reasoning.

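The average scores do not split into two clean clusters: seven models score between 53 and 62, six fall between 34 and 47, and three fall below 30 (Mistral-7B-v0.1, Llama-3.2-1B, Qwen2.5-0.5B). The gaps between neighboring models widen toward the bottom of the table, so the smallest models lag disproportionately, but the data do not show a sharp threshold for effective AI reasoning.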
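Whether the averages form distinct clusters can be checked by counting models per score band and looking at the largest gaps between neighboring averages. A minimal Python sketch; the band cut-offs (30 and 50) and the `band` helper are illustrative choices, not from the source.

```python
from collections import Counter

# Reported "Average" per model.
AVERAGES = {
    "claude-3-haiku-20240307": 62.00, "Mixtral-8x22B-v0.1": 58.99,
    "Llama-3.1-70B": 57.78, "Qwen2.5-32B": 57.73, "Qwen2.5-72B": 54.74,
    "gpt-4o-2024-05-13": 54.43, "Mixtral-8x7B-v0.1": 53.35, "Qwen2.5-7B": 46.27,
    "gpt-4o-mini-2024-07-18": 44.56, "Qwen2.5-3B": 43.73,
    "claude-3-sonnet-20240229": 40.05, "Llama-3.1-8B": 39.88,
    "Llama-3.2-3B": 34.98, "Mistral-7B-v0.1": 28.58, "Llama-3.2-1B": 22.13,
    "Qwen2.5-0.5B": 12.70,
}


def band(score):
    """Coarse score band; the 30 and 50 cut-offs are illustrative choices."""
    if score > 50:
        return "above 50"
    if score >= 30:
        return "30 to 50"
    return "below 30"


counts = Counter(band(score) for score in AVERAGES.values())

# Gaps between neighboring models once sorted by Average, largest first.
ordered = sorted(AVERAGES.items(), key=lambda kv: kv[1], reverse=True)
gaps = [(upper, lower, round(u_avg - l_avg, 2))
        for (upper, u_avg), (lower, l_avg) in zip(ordered, ordered[1:])]
largest_gaps = sorted(gaps, key=lambda g: g[2], reverse=True)[:3]

print(dict(counts))
for upper, lower, gap in largest_gaps:
    print(f"{upper} -> {lower}: {gap:.2f}")
```

Seven, six, and three models fall into the three bands, and the largest gap (9.43) separates only the last model, Qwen2.5-0.5B, from Llama-3.2-1B, which argues against a clean two-cluster split.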
