EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

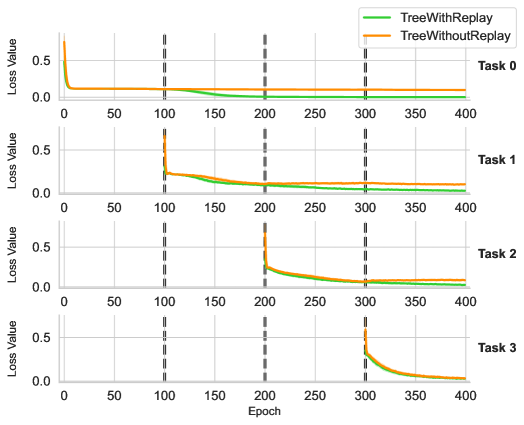

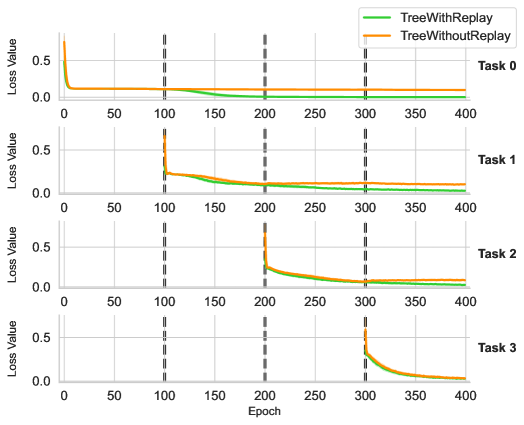

## Line Chart: Loss Value vs. Epoch for Different Tasks

### Overview

The image presents four line charts. Each one plots loss value against epoch for two training methods, "TreeWithReplay" and "TreeWithoutReplay". Each chart corresponds to one task (Task 0, Task 1, Task 2, and Task 3). The x-axis shows the epoch number from 0 to 400. The y-axis shows the loss value with labeled ticks at 0.0 and 0.5, and some curves start above 0.5. Vertical dashed lines appear at Epochs 100, 200, and 300.

### Components/Axes

* **Title:** Loss Value vs. Epoch for Different Tasks (inferred)

* **Y-axis Label:** Loss Value

* **X-axis Label:** Epoch

* **X-axis Scale:** 0, 50, 100, 150, 200, 250, 300, 350, 400

* **Y-axis Scale:** 0.0, 0.5

* **Legend (Top-Right):**
  * Green Line: TreeWithReplay
  * Orange Line: TreeWithoutReplay

* **Tasks:** Task 0, Task 1, Task 2, Task 3

* **Vertical Dashed Lines:** Epoch 100, Epoch 200, Epoch 300

### Detailed Analysis

**Task 0:**

* **TreeWithReplay (Green):** Starts at approximately 0.5 and rapidly decreases to approximately 0.05 by epoch 50, then remains relatively constant.

* **TreeWithoutReplay (Orange):** Starts at approximately 0.1 and remains relatively constant throughout the epochs.

**Task 1:**

* **TreeWithReplay (Green):** Starts at approximately 0.5, rapidly decreases to approximately 0.1 by epoch 100, then decreases further to approximately 0.05 by epoch 200, and remains relatively constant.

* **TreeWithoutReplay (Orange):** Starts at approximately 0.6, rapidly decreases to approximately 0.2 by epoch 100, then decreases further to approximately 0.1 by epoch 200, and remains relatively constant.

**Task 2:**

* **TreeWithReplay (Green):** Starts at approximately 0.5, rapidly decreases to approximately 0.1 by epoch 200, then decreases further to approximately 0.05 by epoch 300, and remains relatively constant.

* **TreeWithoutReplay (Orange):** Starts at approximately 0.6, rapidly decreases to approximately 0.2 by epoch 200, then decreases further to approximately 0.1 by epoch 300, and remains relatively constant.

**Task 3:**

* **TreeWithReplay (Green):** Starts at approximately 0.5, rapidly decreases to approximately 0.1 by epoch 300, then decreases further to approximately 0.05 by epoch 400.

* **TreeWithoutReplay (Orange):** Starts at approximately 0.6, rapidly decreases to approximately 0.2 by epoch 300, then decreases further to approximately 0.1 by epoch 400.

### Key Observations

* The loss value generally decreases as the epoch number increases for both methods across all tasks.

* The "TreeWithReplay" method achieves a lower loss value than the "TreeWithoutReplay" method in every task. It ends at approximately 0.05, against approximately 0.1 for "TreeWithoutReplay".

* The rate of decrease in loss value slows down as the epoch number increases.

* The vertical dashed lines at Epoch 100, 200, and 300 seem to indicate significant points in the training process, possibly marking the end of a training phase or the introduction of a new task.

* The initial loss of "TreeWithoutReplay" is higher in later tasks (approximately 0.6 in Tasks 1, 2, and 3) than in Task 0 (approximately 0.1). "TreeWithReplay" starts at approximately 0.5 in every task.
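The observation about final loss can be checked against the approximate values in the Detailed Analysis. The sketch below tabulates those visual estimates, which are not exact data from the figure, and computes the gap between the two methods for each task. The dictionary layout and the helper name `replay_gap` are illustrative assumptions.

```python
# Approximate final loss per task, as read off in the Detailed Analysis above
# (visual estimates, not exact data from the figure).
final_loss = {
    "Task 0": {"TreeWithReplay": 0.05, "TreeWithoutReplay": 0.1},
    "Task 1": {"TreeWithReplay": 0.05, "TreeWithoutReplay": 0.1},
    "Task 2": {"TreeWithReplay": 0.05, "TreeWithoutReplay": 0.1},
    "Task 3": {"TreeWithReplay": 0.05, "TreeWithoutReplay": 0.1},
}


def replay_gap(values: dict) -> float:
    """No-replay loss minus replay loss; a positive value means replay ends lower."""
    return round(values["TreeWithoutReplay"] - values["TreeWithReplay"], 3)


gaps = {task: replay_gap(values) for task, values in final_loss.items()}
for task, gap in gaps.items():
    print(f"{task}: gap = {gap}")
```

With these readings, the gap comes out at about 0.05 for every task.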

### Interpretation

The charts show the learning curves of two training methods ("TreeWithReplay" and "TreeWithoutReplay") across multiple tasks. The falling loss values indicate that both methods improve as training progresses. "TreeWithReplay" reaches a lower loss in every task, which suggests that the replay mechanism contributes to better learning. The higher initial loss of "TreeWithoutReplay" in later tasks may indicate that the model faces harder problems or that the tasks grow more complex. The vertical dashed lines could mark milestones in the training process, such as the introduction of new data or changes in the training parameters. Within this figure, the data suggests that "TreeWithReplay" is the stronger of the two methods for this sequence of tasks.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Multi-Panel Line Chart: Loss Value vs. Epoch for Two Methods Across Four Tasks

### Overview

The image displays four vertically stacked line charts. Each one compares training loss over time for two methods, "TreeWithReplay" and "TreeWithoutReplay". The charts track performance across four sequential tasks (Task 0, Task 1, Task 2, Task 3), which suggests a continual or sequential learning scenario. The main visual pattern is a sharp initial drop in loss followed by a plateau. New spikes appear at Epochs 100, 200, and 300, and these seem to coincide with the introduction of new tasks.

### Components/Axes

* **Chart Type:** Multi-panel (faceted) line chart.

* **Panels:** Four subplots arranged vertically, labeled on the right side as "Task 0", "Task 1", "Task 2", and "Task 3" from top to bottom.

* **X-Axis (Common):** Labeled "Epoch" at the bottom of the figure. The scale runs from 0 to 400 with major tick marks at 0, 50, 100, 150, 200, 250, 300, 350, and 400.

* **Y-Axis (Per Panel):** Each subplot has its own y-axis labeled "Loss Value". In all four panels (Task 0 to Task 3), the scale runs from 0.0 to approximately 0.8, with ticks at 0.0 and 0.5.

* **Legend:** Positioned in the top-right corner of the entire figure, above the Task 0 plot.
  * **Green Line:** Labeled "TreeWithReplay".
  * **Orange Line:** Labeled "TreeWithoutReplay".

* **Vertical Reference Lines:** Dashed gray vertical lines are present in all subplots at Epoch = 100, 200, and 300. These likely mark the boundaries where new tasks are introduced.
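Suppose the dashed lines do mark task boundaries every 100 epochs. In that case task k is introduced at epoch `100 * k`, and only tasks introduced so far can have a loss curve. This matches the Task 1 to Task 3 panels beginning at Epochs 100, 200, and 300 as described below. The sketch below assumes four tasks of 100 epochs each. The constants and helper names are hypothetical.

```python
EPOCHS_PER_TASK = 100  # assumed spacing of the dashed task-boundary lines
NUM_TASKS = 4          # Task 0 .. Task 3


def task_start(task: int) -> int:
    """Epoch at which a task is assumed to be introduced."""
    return task * EPOCHS_PER_TASK


def active_task(epoch: int) -> int:
    """Task being trained at `epoch`; the last task runs to the end of training."""
    return min(epoch // EPOCHS_PER_TASK, NUM_TASKS - 1)


def tasks_seen(epoch: int) -> list:
    """Tasks introduced so far; only these can have a loss curve at `epoch`."""
    return list(range(active_task(epoch) + 1))


boundaries = [task_start(t) for t in range(1, NUM_TASKS)]
print(boundaries)        # [100, 200, 300]
print(active_task(250))  # 2
print(tasks_seen(250))   # [0, 1, 2]
```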

### Detailed Analysis

**Task 0 (Top Panel):**

* **Trend:** Both lines start at a high loss value (≈0.7-0.8) at Epoch 0 and drop sharply within the first 10-20 epochs to a low value near 0.1. They then plateau. After the vertical line at Epoch 100, the "TreeWithReplay" (green) line shows a very slight, gradual decrease, while the "TreeWithoutReplay" (orange) line remains flat.

* **Key Points (Approximate):**
  * Epoch 0: Loss ≈ 0.75 (both).
  * Epoch 20: Loss ≈ 0.1 (both).
  * Epoch 100: Loss ≈ 0.08 (green), ≈ 0.10 (orange).
  * Epoch 400: Loss ≈ 0.05 (green), ≈ 0.10 (orange).

**Task 1 (Second Panel):**

* **Trend:** The plot begins at Epoch 100. Both lines start with a sharp spike to a loss of ≈0.7, then rapidly decrease. The "TreeWithReplay" (green) line consistently maintains a lower loss than the "TreeWithoutReplay" (orange) line after the initial drop. Both lines show a gradual, slight decline from Epoch 150 to 400.

* **Key Points (Approximate):**
  * Epoch 100 (start): Spike to Loss ≈ 0.7 (both).
  * Epoch 120: Loss ≈ 0.2 (green), ≈ 0.25 (orange).
  * Epoch 200: Loss ≈ 0.1 (green), ≈ 0.15 (orange).
  * Epoch 400: Loss ≈ 0.08 (green), ≈ 0.12 (orange).

**Task 2 (Third Panel):**

* **Trend:** The plot begins at Epoch 200. A sharp spike occurs for both methods, reaching a loss of ≈0.6-0.7. Following the spike, both lines decrease rapidly. The "TreeWithReplay" (green) line again achieves and maintains a lower loss value compared to the "TreeWithoutReplay" (orange) line.

* **Key Points (Approximate):**
  * Epoch 200 (start): Spike to Loss ≈ 0.65 (green), ≈ 0.70 (orange).
  * Epoch 220: Loss ≈ 0.15 (green), ≈ 0.20 (orange).
  * Epoch 300: Loss ≈ 0.08 (green), ≈ 0.12 (orange).
  * Epoch 400: Loss ≈ 0.06 (green), ≈ 0.10 (orange).

**Task 3 (Bottom Panel):**

* **Trend:** The plot begins at Epoch 300 with a sharp spike for both methods to a loss of ≈0.5-0.6. Both lines then decrease rapidly and converge to a very similar, low loss value by Epoch 400. The performance gap between the two methods is smallest in this final task.

* **Key Points (Approximate):**
  * Epoch 300 (start): Spike to Loss ≈ 0.55 (green), ≈ 0.60 (orange).
  * Epoch 320: Loss ≈ 0.15 (both).
  * Epoch 400: Loss ≈ 0.05 (both).
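The size of the gap between the two methods can be read off directly from the approximate key points listed for each panel. The sketch below keeps only the points after each task's initial drop and computes the no-replay loss minus the replay loss at each one. The values are the description's visual estimates, and the table layout is an illustrative assumption.

```python
# Approximate key points after each task's initial drop, from the panel
# descriptions above: epoch -> (TreeWithReplay loss, TreeWithoutReplay loss).
key_points = {
    "Task 0": {20: (0.10, 0.10), 100: (0.08, 0.10), 400: (0.05, 0.10)},
    "Task 1": {120: (0.20, 0.25), 200: (0.10, 0.15), 400: (0.08, 0.12)},
    "Task 2": {220: (0.15, 0.20), 300: (0.08, 0.12), 400: (0.06, 0.10)},
    "Task 3": {320: (0.15, 0.15), 400: (0.05, 0.05)},
}

# Gap = no-replay loss minus replay loss; positive means replay is lower.
gap_table = {
    task: {epoch: round(orange - green, 2) for epoch, (green, orange) in points.items()}
    for task, points in key_points.items()
}

for task, gaps in gap_table.items():
    print(f"{task}: {gaps}  (gap at Epoch 400: {gaps[400]})")
```

On these readings, the gap at Epoch 400 is about 0.04 to 0.05 in Tasks 0 to 2 and zero in Task 3. This is consistent with the convergence described for the final task.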

### Key Observations

1. **Task Introduction Spikes:** A clear, sharp increase in loss occurs at the beginning of each new task (Epochs 100, 200, 300), indicating the model's initial poor performance on unfamiliar data.

2. **Consistent Performance Gap:** In Tasks 0, 1, and 2, the "TreeWithReplay" (green) method achieves a lower loss value than the "TreeWithoutReplay" (orange) method after the initial learning phase. The gap is small, at most about 0.05 at the listed key points.

3. **Convergence in Final Task:** In Task 3, the performance of both methods becomes nearly identical by the end of training (Epoch 400).

4. **Learning Dynamics:** All tasks show a pattern of rapid initial learning (steep negative slope) followed by a long tail of gradual refinement (shallow negative slope).

### Interpretation

This chart compares the effectiveness of a "replay" mechanism in a continual learning setting. The "TreeWithReplay" method appears to mitigate **catastrophic forgetting** more effectively than the method without replay.

* **What the data suggests:** The replay mechanism may help the model retain knowledge from previous tasks while it learns new ones. Two observations support this. First, the green line ("With Replay") keeps a lower loss on earlier tasks; for example, Task 0's line continues to improve slightly after Epoch 100. Second, it recovers faster, to a lower loss, on subsequent tasks (Tasks 1 and 2).

* **How elements relate:** The vertical dashed lines are critical anchors, showing that the spikes in loss are not random but systematically tied to the introduction of new tasks. The legend is essential for attributing the performance difference to the specific methodological variable (replay vs. no replay).

* **Notable trends/anomalies:** The most significant trend is the consistent advantage of the replay method up to the final task. The convergence in Task 3 is an interesting anomaly. It could mean that by the fourth task, the model's capacity or the task's difficulty produces similar final performance regardless of replay. It could also mean that replay matters most in the intermediate stages of sequential learning. Overall, the data suggests that the "TreeWithReplay" method is more robust for multi-task or continual learning scenarios.
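The forgetting argument can be made concrete with the forgetting measure often used in continual learning. That measure compares a task's best earlier performance with its performance at the end of training. Adapted to loss, where lower is better, it becomes the final loss minus the lowest earlier loss. The sketch below applies this adaptation to the approximate Task 0 key points listed above. The function name is hypothetical, and four read-off points make this a rough check only.

```python
def forgetting(curve: dict) -> float:
    """Final loss minus the lowest loss reached at any earlier epoch.

    Positive: the task got worse after its best point (forgetting).
    Zero or negative: no forgetting; negative means the loss kept falling.
    """
    if len(curve) < 2:
        raise ValueError("need at least two (epoch, loss) points")
    epochs = sorted(curve)
    final = curve[epochs[-1]]
    best_earlier = min(curve[e] for e in epochs[:-1])
    return round(final - best_earlier, 3)


# Approximate Task 0 key points from the description above (epoch -> loss).
task0_with_replay = {0: 0.75, 20: 0.10, 100: 0.08, 400: 0.05}
task0_without_replay = {0: 0.75, 20: 0.10, 100: 0.10, 400: 0.10}

print(forgetting(task0_with_replay))     # -0.03
print(forgetting(task0_without_replay))  # 0.0
```

With these readings, neither Task 0 curve rises after Epoch 100, so the measure shows no forgetting for either method on Task 0. The replay value is slightly negative because that curve keeps improving.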

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Line Chart: Loss Value vs. Epoch Across Four Tasks

### Overview

The image displays four line charts (Task 0–Task 3) comparing the loss values of two models, **TreeWithReplay** (green) and **TreeWithoutReplay** (orange), over 400 epochs. Each task shows distinct convergence patterns, with vertical dashed lines marking epochs 100, 200, 300, and 400.

---

### Components/Axes

- **X-axis**: Epoch (0–400, increments of 50).

- **Y-axis**: Loss Value (0–0.5, increments of 0.1).

- **Legend**:
  - Green: TreeWithReplay
  - Orange: TreeWithoutReplay

- **Task Labels**: Positioned at the top-right of each subplot (Task 0–Task 3).

---

### Detailed Analysis

#### Task 0

- **TreeWithReplay (Green)**:
  - Starts at ~0.5 loss, drops sharply to near 0 by epoch 50, and remains stable.

- **TreeWithoutReplay (Orange)**:
  - Starts at ~0.5 loss, declines gradually to near 0 by epoch 100, then stabilizes.

#### Task 1

- **TreeWithReplay (Green)**:
  - Drops rapidly to near 0 by epoch 50 and remains stable.

- **TreeWithoutReplay (Orange)**:
  - Starts at ~0.5 loss, spikes back to ~0.5 at epoch 100, then declines to near 0 by epoch 200.

#### Task 2

- **TreeWithReplay (Green)**:
  - Drops sharply to near 0 by epoch 50, remaining stable.

- **TreeWithoutReplay (Orange)**:
  - Declines gradually from ~0.5 to near 0 by epoch 200.

#### Task 3

- **TreeWithReplay (Green)**:
  - Drops rapidly to near 0 by epoch 50 and remains stable thereafter.

- **TreeWithoutReplay (Orange)**:
  - Starts at ~0.5 loss, spikes back to ~0.5 at epoch 300, then declines to near 0 by epoch 400.

---

### Key Observations

1. **Consistent Performance of TreeWithReplay**:
   - Across all tasks, TreeWithReplay reaches near-zero loss by about epoch 50 and remains stable.

2. **Instability in TreeWithoutReplay**:
   - Tasks 1 and 3 show abrupt loss spikes (~0.5) at epochs 100 and 300, respectively, before recovering.

3. **Slower Convergence for TreeWithoutReplay**:
   - In Tasks 0, 2, and 3, TreeWithoutReplay takes 100–200 epochs to reach near-zero loss, compared with about 50 epochs for TreeWithReplay.
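The convergence-speed comparison can be made a little more precise. Treat each "start → end" entry in the Content Details section as a straight line, then ask when that line first reaches a chosen threshold. The sketch below does this for Task 0 and Task 2, the two tasks listed without spikes. The 0.05 threshold, the straight-line assumption, and the helper name are illustrative choices, not part of the source.

```python
def epochs_to_threshold(points, threshold):
    """First epoch at which a piecewise-linear loss curve reaches `threshold`.

    `points` is a list of (epoch, loss) pairs sorted by epoch. Between
    consecutive points, the loss is assumed to change linearly. Returns
    None if the curve never gets down to the threshold.
    """
    if points and points[0][1] <= threshold:
        return float(points[0][0])
    for (e0, l0), (e1, l1) in zip(points, points[1:]):
        if l1 <= threshold < l0:
            # Interpolate the crossing point between the two read-off points.
            return e0 + (l0 - threshold) / (l0 - l1) * (e1 - e0)
    return None


# Approximate start/end points from the Content Details (epoch, loss).
curves = {
    "Task 0": {"TreeWithReplay": [(0, 0.5), (50, 0.0)],
               "TreeWithoutReplay": [(0, 0.5), (100, 0.0)]},
    "Task 2": {"TreeWithReplay": [(0, 0.5), (50, 0.0)],
               "TreeWithoutReplay": [(0, 0.5), (200, 0.0)]},
}

THRESHOLD = 0.05  # assumed definition of "near-zero" loss
results = {
    (task, method): epochs_to_threshold(pts, THRESHOLD)
    for task, methods in curves.items()
    for method, pts in methods.items()
}
for (task, method), epoch in results.items():
    print(f"{task} {method}: ~{epoch:.0f} epochs")
```

Under these assumptions, TreeWithReplay reaches the threshold at about epoch 45 in both tasks. TreeWithoutReplay reaches it at about epoch 90 in Task 0 and about epoch 180 in Task 2.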

---

### Interpretation

- **Impact of Replay Mechanism**:
  - TreeWithReplay shows faster and more stable convergence. This suggests that the replay mechanism may mitigate catastrophic forgetting or instability during training.

- **Spike Analysis**:
  - The spikes in TreeWithoutReplay (Tasks 1 and 3) may indicate temporary destabilization when the model learns new tasks without retaining prior knowledge. This aligns with known challenges in continual learning, where models without replay often forget earlier tasks.

- **Practical Implications**:
  - The results suggest that replay mechanisms help maintain performance across sequential tasks, particularly in dynamic or multi-task learning environments.
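The descriptions do not say how TreeWithReplay stores or samples earlier data. As a generic illustration only, one common replay design keeps a fixed-size memory filled by reservoir sampling. It then mixes a few stored examples into each batch for the current task. The `ReplayBuffer` class, its capacity, the replay size, and the stand-in examples below are all assumptions, not the method behind the figure.

```python
import random


class ReplayBuffer:
    """Fixed-capacity memory filled by reservoir sampling (hypothetical example)."""

    def __init__(self, capacity: int, seed: int = 0):
        self.capacity = capacity
        self.items = []
        self.seen = 0                   # examples offered to the buffer so far
        self.rng = random.Random(seed)

    def add(self, example) -> None:
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            # Reservoir sampling: every example seen so far ends up stored
            # with the same probability, capacity / seen.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def sample(self, k: int) -> list:
        """Up to k stored examples, drawn without replacement."""
        return self.rng.sample(self.items, min(k, len(self.items)))


def mixed_batch(current_batch, buffer: ReplayBuffer, replay_size: int) -> list:
    """Current-task examples plus a few replayed examples from earlier tasks."""
    return list(current_batch) + buffer.sample(replay_size)


buffer = ReplayBuffer(capacity=50)
for task in range(4):
    current = [(task, i) for i in range(8)]   # stand-in examples tagged with their task
    batch = mixed_batch(current, buffer, replay_size=4)
    # (a training step on `batch` would go here)
    for example in current:
        buffer.add(example)
    print(f"Task {task}: batch size {len(batch)}, tasks in batch {sorted({t for t, _ in batch})}")
```

Reservoir sampling keeps the memory at a fixed size while giving every past example an equal chance of being retained. As a result, no task's data is favoured simply because it arrived last.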

---

### Spatial Grounding & Verification

- **Legend Position**: Top-right corner, clearly associating colors with model names.

- **Axis Labels**: Y-axis labeled "Loss Value," X-axis labeled "Epoch," with consistent scaling across all subplots.

- **Data Series Alignment**: Green (TreeWithReplay) and orange (TreeWithoutReplay) lines match legend labels in all tasks.

---

### Content Details

- **Task 0**:
  - TreeWithReplay: 0.5 → 0 (epoch 0–50).
  - TreeWithoutReplay: 0.5 → 0 (epoch 0–100).

- **Task 1**:
  - TreeWithReplay: 0.5 → 0 (epoch 0–50).
  - TreeWithoutReplay: 0.5 → 0.5 (spike at epoch 100) → 0 (epoch 100–200).

- **Task 2**:
  - TreeWithReplay: 0.5 → 0 (epoch 0–50).
  - TreeWithoutReplay: 0.5 → 0 (epoch 0–200).

- **Task 3**:
  - TreeWithReplay: 0.5 → 0 (epoch 0–50).
  - TreeWithoutReplay: 0.5 → 0.5 (spike at epoch 300) → 0 (epoch 300–400).

---

### Final Notes

The chart suggests that replay mechanisms help stabilize training dynamics. The spikes in TreeWithoutReplay point to possible instability or overfitting when tasks are introduced sequentially without memory retention. Further investigation into the causes of these spikes (e.g., data distribution shifts or hyperparameter sensitivity) could improve model robustness.
