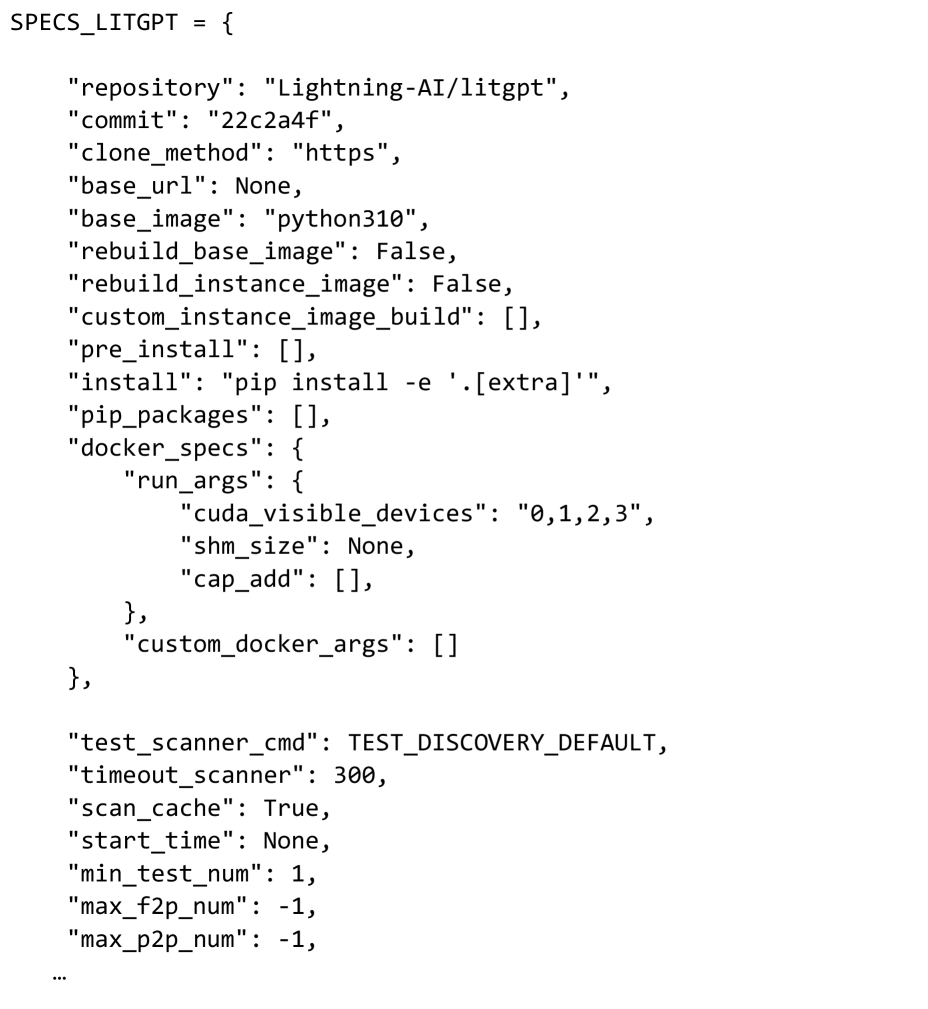

## JSON Configuration: Docker Build and Test Specifications

### Overview

This configuration defines Docker build parameters, test settings, and environment specifications for a Lightning-AI/LitGPT project. It includes repository details, image configurations, CUDA device assignments, and test scanner parameters.

### Components

- **Repository**: `Lightning-AI/litgpt` (commit `22c2a4f`)

- **Clone Method**: HTTPS

- **Base Image**: `python310`

- **Rebuild Flags**:

  - `rebuild_base_image`: False

  - `rebuild_instance_image`: False

- **Docker Specifications**:

  - `cuda_visible_devices`: `0,1,2,3`

  - `shm_size`: None

  - `cap_add`: Empty list

- **Test Parameters**:

  - `test_scanner_cmd`: `TEST_DISCOVERY_DEFAULT`

  - `timeout_scanner`: 300 seconds

  - `scan_cache`: True

  - `min_test_num`: 1

  - `max_f2p_num`: -1

  - `max_p2p_num`: -1
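For orientation, the settings listed above can be collected into a single Python dict. This is only a sketch: the key names `repo`, `commit`, `clone_method`, and `base_image`, the `docker_specs` grouping, and the choice of a string for `cuda_visible_devices` and `test_scanner_cmd` are assumptions made for illustration. The remaining key names and all values are taken from the list above.

```python
import json

# Sketch of the listed settings as one dict.
# Keys marked "hypothetical" are illustrative names, not taken from the source.
config = {
    "repo": "Lightning-AI/litgpt",          # hypothetical key name
    "commit": "22c2a4f",                    # hypothetical key name
    "clone_method": "https",                # hypothetical key name
    "base_image": "python310",              # hypothetical key name
    "rebuild_base_image": False,
    "rebuild_instance_image": False,
    "docker_specs": {                       # hypothetical grouping
        "cuda_visible_devices": "0,1,2,3",  # assumed to be a string
        "shm_size": None,
        "cap_add": [],
    },
    "test_scanner_cmd": "TEST_DISCOVERY_DEFAULT",  # may be a named constant in the source
    "timeout_scanner": 300,                 # seconds
    "scan_cache": True,
    "min_test_num": 1,
    "max_f2p_num": -1,
    "max_p2p_num": -1,
}

# Serializing to JSON turns False/True/None into false/true/null.
print(json.dumps(config, indent=2))
```

Note that `False`, `True`, and `None` are Python literals; in strict JSON they would appear as `false`, `true`, and `null`.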

### Detailed Analysis

1. **Repository & Build**:

   - Uses HTTPS cloning for `Lightning-AI/litgpt` at commit `22c2a4f`.

   - Base image is `python310` (Python 3.10), with no base URL specified.

   - No custom instance image build or pre-install steps defined.

2. **Docker Configuration**:

   - Exposes four GPUs (CUDA devices 0 through 3) via `cuda_visible_devices`.

   - Shared memory size (`shm_size`) is `None` and capability additions (`cap_add`) is an empty list, so Docker's defaults presumably apply.

   - No custom Docker arguments provided.

3. **Testing Framework**:

   - Test command defaults to `TEST_DISCOVERY_DEFAULT`.

   - Scanner timeout set to 300 seconds (5 minutes).

   - Scan caching enabled (`scan_cache: True`).

   - Test count constraints:

     - Minimum tests: 1 (`min_test_num`)

     - Maximum F2P/P2P tests: -1 (`max_f2p_num`, `max_p2p_num`). F2P and P2P likely stand for fail-to-pass and pass-to-pass tests, and -1 is likely a placeholder for "unlimited" or a system-dependent maximum.
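One plausible reading of the test count constraints can be sketched as follows. The helper names `apply_cap` and `has_enough_tests` are hypothetical, and treating a negative maximum as "no cap" is an assumption; the configuration itself does not say how `-1` is handled.

```python
from typing import List


def apply_cap(tests: List[str], max_num: int) -> List[str]:
    """Keep at most max_num tests; a negative max_num means 'no cap' (assumption)."""
    if max_num < 0:
        return list(tests)
    return list(tests[:max_num])


def has_enough_tests(tests: List[str], min_test_num: int) -> bool:
    """Check the lower bound given by min_test_num."""
    return len(tests) >= min_test_num


# Hypothetical test names, for illustration only.
f2p_tests = ["test_a", "test_b", "test_c"]

print(apply_cap(f2p_tests, -1))        # all three tests are kept
print(apply_cap(f2p_tests, 2))         # only the first two are kept
print(has_enough_tests(f2p_tests, 1))  # True
```

Under this reading, `max_f2p_num: -1` and `max_p2p_num: -1` keep every discovered test, and `min_test_num: 1` rejects an instance only when no tests are found.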

### Key Observations

- **CUDA Device Assignment**: Explicit GPU allocation suggests GPU-dependent workloads.

- **Test Constraints**: Negative maximum test numbers (`-1`) may indicate unbounded test execution or system-specific limits.

- **Empty Lists**: Multiple empty arrays (`[]`) suggest default or unset configurations in build steps.
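To make the Docker-related observations concrete, the sketch below shows one way such settings could be turned into `docker run` arguments. The function `docker_run_args` is hypothetical, and how the actual tooling maps these fields is not shown in the configuration. The flags themselves (`--gpus`, `-e`, `--shm-size`, `--cap-add`) are standard `docker run` options.

```python
from typing import List, Optional


def docker_run_args(
    cuda_visible_devices: Optional[str],
    shm_size: Optional[str],
    cap_add: List[str],
) -> List[str]:
    """Build docker run arguments from the Docker settings (illustrative mapping)."""
    args: List[str] = []
    if cuda_visible_devices:
        # Expose the GPUs to the container and restrict CUDA to the listed devices.
        args += ["--gpus", "all", "-e", f"CUDA_VISIBLE_DEVICES={cuda_visible_devices}"]
    if shm_size is not None:
        # Omitting --shm-size leaves Docker's default /dev/shm size (64 MB).
        args += ["--shm-size", shm_size]
    for cap in cap_add:
        # One --cap-add flag per Linux capability.
        args += ["--cap-add", cap]
    return args


# Values from the configuration: four GPUs, no shm_size, empty cap_add.
print(docker_run_args("0,1,2,3", None, []))
# ['--gpus', 'all', '-e', 'CUDA_VISIBLE_DEVICES=0,1,2,3']
```

With the values from this configuration, only the GPU-related arguments are emitted; the unset `shm_size` and empty `cap_add` contribute nothing, which matches the "defaults apply" reading.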

### Interpretation

This configuration targets GPU-backed test runs inside Docker for LitGPT, a PyTorch-based project. The CUDA device assignment is consistent with GPU-dependent tests, although the `python310` base image by itself says nothing about PyTorch. The test scanner uses its default discovery command with a 300-second timeout. The `.[extra]` target in the pip install command installs the package together with its `extra` optional-dependency group. The `-1` limits for `max_f2p_num` and `max_p2p_num` warrant clarification: they may be a placeholder for "no maximum" or a system-specific cap requiring further investigation. The absence of a custom instance image and pre-install steps suggests a minimal base configuration, potentially for reproducibility or CI/CD pipeline simplicity.