

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

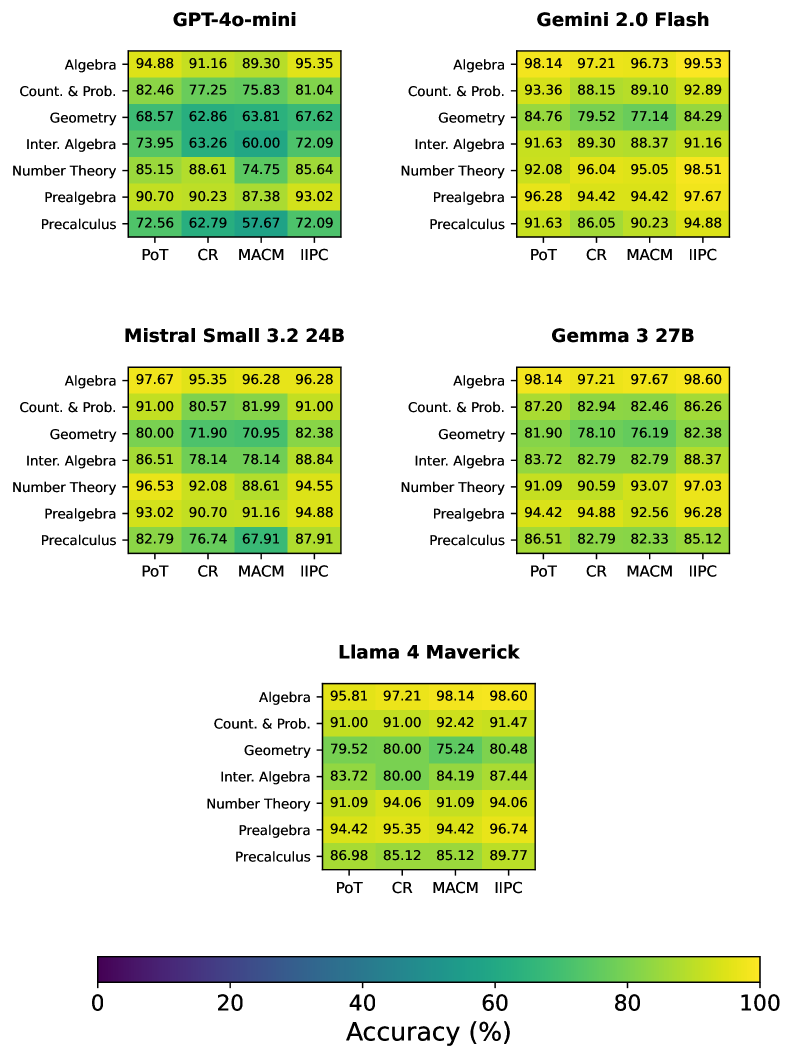

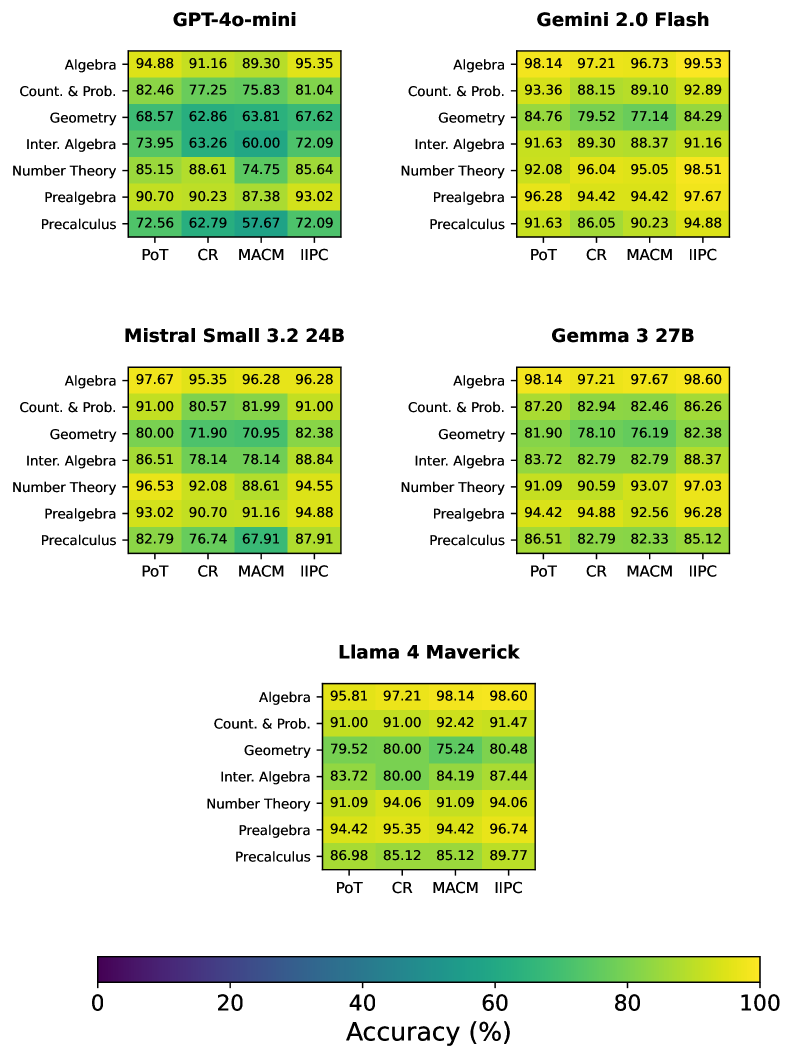

## Heatmap: Model Performance on Math Problems

### Overview

The image presents a series of heatmaps comparing the performance of different language models (GPT-4o-mini, Gemini 2.0 Flash, Mistral Small 3.2 24B, Gemma 3 27B, and Llama 4 Maverick) on various math problem types (Algebra, Counting & Probability, Geometry, Intermediate Algebra, Number Theory, Prealgebra, and Precalculus). The heatmaps display accuracy scores for each model across four different problem-solving approaches (PoT, CR, MACM, and IIPC). The color gradient represents accuracy, ranging from dark purple (low accuracy) to bright yellow (high accuracy).

### Components/Axes

* **Model Names (Titles):** GPT-4o-mini, Gemini 2.0 Flash, Mistral Small 3.2 24B, Gemma 3 27B, Llama 4 Maverick. Each model has its own heatmap.

* **Y-Axis (Problem Types):** Algebra, Count. & Prob. (Counting & Probability), Geometry, Inter. Algebra (Intermediate Algebra), Number Theory, Prealgebra, Precalculus.

* **X-Axis (Problem-Solving Approaches):** PoT, CR, MACM, IIPC.

* **Color Scale:** A horizontal color bar at the bottom indicates accuracy, ranging from 0% (dark purple) to 100% (bright yellow).

* **Numerical Values:** Each cell in the heatmap contains a numerical value representing the accuracy percentage for a specific model, problem type, and problem-solving approach.

### Detailed Analysis

**GPT-4o-mini**

| Problem Type      | PoT   | CR    | MACM  | IIPC  |
| :---------------- | :---- | :---- | :---- | :---- |
| Algebra           | 94.88 | 91.16 | 89.30 | 95.35 |
| Count. & Prob.    | 82.46 | 77.25 | 75.83 | 81.04 |
| Geometry          | 68.57 | 62.86 | 63.81 | 67.62 |
| Inter. Algebra    | 73.95 | 63.26 | 60.00 | 72.09 |
| Number Theory     | 85.15 | 88.61 | 74.75 | 85.64 |
| Prealgebra        | 90.70 | 90.23 | 87.38 | 93.02 |
| Precalculus       | 72.56 | 62.79 | 57.67 | 72.09 |

* **Trends:** Performance is highest in Algebra and Prealgebra. Geometry, Intermediate Algebra, and Precalculus show the lowest accuracy; the single lowest score is Precalculus under MACM (57.67).

**Gemini 2.0 Flash**

| Problem Type      | PoT   | CR    | MACM  | IIPC  |
| :---------------- | :---- | :---- | :---- | :---- |
| Algebra           | 98.14 | 97.21 | 96.73 | 99.53 |
| Count. & Prob.    | 93.36 | 88.15 | 89.10 | 92.89 |
| Geometry          | 84.76 | 79.52 | 77.14 | 84.29 |
| Inter. Algebra    | 91.63 | 89.30 | 88.37 | 91.16 |
| Number Theory     | 92.08 | 96.04 | 95.05 | 98.51 |
| Prealgebra        | 96.28 | 94.42 | 94.42 | 97.67 |
| Precalculus       | 91.63 | 86.05 | 90.23 | 94.88 |

* **Trends:** Consistently high performance across all problem types and approaches. Geometry shows the lowest relative scores compared to other subjects, but is still relatively high.

**Mistral Small 3.2 24B**

| Problem Type      | PoT   | CR    | MACM  | IIPC  |
| :---------------- | :---- | :---- | :---- | :---- |
| Algebra           | 97.67 | 95.35 | 96.28 | 96.28 |
| Count. & Prob.    | 91.00 | 80.57 | 81.99 | 91.00 |
| Geometry          | 80.00 | 71.90 | 70.95 | 82.38 |
| Inter. Algebra    | 86.51 | 78.14 | 78.14 | 88.84 |
| Number Theory     | 96.53 | 92.08 | 88.61 | 94.55 |
| Prealgebra        | 93.02 | 90.70 | 91.16 | 94.88 |
| Precalculus       | 82.79 | 76.74 | 67.91 | 87.91 |

* **Trends:** High performance in Algebra and Number Theory. Geometry and Precalculus have relatively lower scores. CR and MACM approaches tend to have lower accuracy compared to PoT and IIPC.

**Gemma 3 27B**

| Problem Type      | PoT   | CR    | MACM  | IIPC  |
| :---------------- | :---- | :---- | :---- | :---- |
| Algebra           | 98.14 | 97.21 | 97.67 | 98.60 |
| Count. & Prob.    | 87.20 | 82.94 | 82.46 | 86.26 |
| Geometry          | 81.90 | 78.10 | 76.19 | 82.38 |
| Inter. Algebra    | 83.72 | 82.79 | 82.79 | 88.37 |
| Number Theory     | 91.09 | 90.59 | 93.07 | 97.03 |
| Prealgebra        | 94.42 | 94.88 | 92.56 | 96.28 |
| Precalculus       | 86.51 | 82.79 | 82.33 | 85.12 |

* **Trends:** Algebra shows the highest accuracy. Geometry has the lowest relative scores. IIPC yields the highest accuracy in five of the seven problem types; PoT leads in Counting & Probability and Precalculus.

**Llama 4 Maverick**

| Problem Type      | PoT   | CR    | MACM  | IIPC  |
| :---------------- | :---- | :---- | :---- | :---- |
| Algebra           | 95.81 | 97.21 | 98.14 | 98.60 |
| Count. & Prob.    | 91.00 | 91.00 | 92.42 | 91.47 |
| Geometry          | 79.52 | 80.00 | 75.24 | 80.48 |
| Inter. Algebra    | 83.72 | 80.00 | 84.19 | 87.44 |
| Number Theory     | 91.09 | 94.06 | 91.09 | 94.06 |
| Prealgebra        | 94.42 | 95.35 | 94.42 | 96.74 |
| Precalculus       | 86.98 | 85.12 | 85.12 | 89.77 |

* **Trends:** High performance across all subjects. Geometry has the lowest relative scores.

### Key Observations

* **Algebra Performance:** All models demonstrate high accuracy in Algebra.

* **Geometry Performance:** Geometry consistently shows the lowest accuracy scores across all models.

* **Problem-Solving Approach Impact:** The IIPC approach often yields the highest accuracy, while CR and MACM sometimes show lower scores, suggesting the problem-solving approach significantly impacts performance.

* **Model Variation:** Gemini 2.0 Flash generally exhibits the most consistent and high performance across all problem types.
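The observation that Geometry is the weakest problem type can be checked against the tables above. The following is a minimal Python sketch, assuming the tabulated values are transcribed correctly from the figure; it uses an unweighted mean over the four approaches because the figure gives no per-type problem counts. The names `SCORES`, `hardest_subject`, and `hardest` are illustrative and do not come from the source.

```python
from statistics import mean

# Accuracy (%) under PoT, CR, MACM, IIPC, copied from the tables above.
SCORES = {
    "GPT-4o-mini": {
        "Algebra": [94.88, 91.16, 89.30, 95.35],
        "Count. & Prob.": [82.46, 77.25, 75.83, 81.04],
        "Geometry": [68.57, 62.86, 63.81, 67.62],
        "Inter. Algebra": [73.95, 63.26, 60.00, 72.09],
        "Number Theory": [85.15, 88.61, 74.75, 85.64],
        "Prealgebra": [90.70, 90.23, 87.38, 93.02],
        "Precalculus": [72.56, 62.79, 57.67, 72.09],
    },
    "Gemini 2.0 Flash": {
        "Algebra": [98.14, 97.21, 96.73, 99.53],
        "Count. & Prob.": [93.36, 88.15, 89.10, 92.89],
        "Geometry": [84.76, 79.52, 77.14, 84.29],
        "Inter. Algebra": [91.63, 89.30, 88.37, 91.16],
        "Number Theory": [92.08, 96.04, 95.05, 98.51],
        "Prealgebra": [96.28, 94.42, 94.42, 97.67],
        "Precalculus": [91.63, 86.05, 90.23, 94.88],
    },
    "Mistral Small 3.2 24B": {
        "Algebra": [97.67, 95.35, 96.28, 96.28],
        "Count. & Prob.": [91.00, 80.57, 81.99, 91.00],
        "Geometry": [80.00, 71.90, 70.95, 82.38],
        "Inter. Algebra": [86.51, 78.14, 78.14, 88.84],
        "Number Theory": [96.53, 92.08, 88.61, 94.55],
        "Prealgebra": [93.02, 90.70, 91.16, 94.88],
        "Precalculus": [82.79, 76.74, 67.91, 87.91],
    },
    "Gemma 3 27B": {
        "Algebra": [98.14, 97.21, 97.67, 98.60],
        "Count. & Prob.": [87.20, 82.94, 82.46, 86.26],
        "Geometry": [81.90, 78.10, 76.19, 82.38],
        "Inter. Algebra": [83.72, 82.79, 82.79, 88.37],
        "Number Theory": [91.09, 90.59, 93.07, 97.03],
        "Prealgebra": [94.42, 94.88, 92.56, 96.28],
        "Precalculus": [86.51, 82.79, 82.33, 85.12],
    },
    "Llama 4 Maverick": {
        "Algebra": [95.81, 97.21, 98.14, 98.60],
        "Count. & Prob.": [91.00, 91.00, 92.42, 91.47],
        "Geometry": [79.52, 80.00, 75.24, 80.48],
        "Inter. Algebra": [83.72, 80.00, 84.19, 87.44],
        "Number Theory": [91.09, 94.06, 91.09, 94.06],
        "Prealgebra": [94.42, 95.35, 94.42, 96.74],
        "Precalculus": [86.98, 85.12, 85.12, 89.77],
    },
}


def hardest_subject(table):
    """Return the problem type with the lowest unweighted mean across approaches."""
    return min(table, key=lambda subject: mean(table[subject]))


hardest = {model: hardest_subject(table) for model, table in SCORES.items()}
for model, subject in hardest.items():
    print(f"{model}: {subject} ({mean(SCORES[model][subject]):.2f}%)")
```

Under this unweighted reading, Geometry has the lowest row mean for each of the five models, although for GPT-4o-mini the margin over Precalculus is under one percentage point.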

### Interpretation

The heatmaps provide a comparative analysis of different language models' ability to solve various math problems. The data suggests that while all models perform well in Algebra, Geometry poses a greater challenge. The choice of problem-solving approach (PoT, CR, MACM, IIPC) also significantly influences accuracy, with IIPC often leading to better results. Gemini 2.0 Flash appears to be the most robust model, demonstrating consistently high performance across all problem types and approaches. These findings can inform the selection of appropriate models and problem-solving strategies for specific mathematical tasks. The lower performance in Geometry across all models could indicate a need for further training or refinement in geometric reasoning.
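The statement that IIPC often leads can be made concrete for a single model. The sketch below is illustrative only: it assumes the Gemini 2.0 Flash table above is transcribed correctly and averages the rows without weighting, since the figure does not report how many problems each type contains. The names `gemini_flash`, `method_means`, and `wins` are hypothetical.

```python
from collections import Counter
from statistics import mean

METHODS = ["PoT", "CR", "MACM", "IIPC"]

# Accuracy (%) per problem type, copied from the Gemini 2.0 Flash table.
gemini_flash = {
    "Algebra": [98.14, 97.21, 96.73, 99.53],
    "Count. & Prob.": [93.36, 88.15, 89.10, 92.89],
    "Geometry": [84.76, 79.52, 77.14, 84.29],
    "Inter. Algebra": [91.63, 89.30, 88.37, 91.16],
    "Number Theory": [92.08, 96.04, 95.05, 98.51],
    "Prealgebra": [96.28, 94.42, 94.42, 97.67],
    "Precalculus": [91.63, 86.05, 90.23, 94.88],
}

# Unweighted mean accuracy of each approach over the seven problem types.
method_means = {
    method: mean(row[i] for row in gemini_flash.values())
    for i, method in enumerate(METHODS)
}

# The approach with the highest score in each problem type.
wins = Counter(METHODS[row.index(max(row))] for row in gemini_flash.values())

best_method = max(method_means, key=method_means.get)
print(best_method, {m: round(v, 2) for m, v in method_means.items()})
print(dict(wins))
```

For this model, IIPC has the highest unweighted mean, while PoT still leads in three of the seven problem types, so "often" rather than "always" is the accurate wording.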


EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

## Heatmap Grid: Model Performance Across Subjects and Tasks

### Overview

This image displays a grid of heatmaps, each showing the accuracy of one language model across mathematical subjects and solution methods. The models evaluated are GPT-4o-mini, Gemini 2.0 Flash, Mistral Small 3.2 24B, Gemma 3 27B, and Llama 4 Maverick. For each model, accuracy is shown across seven subjects: Algebra, Count. & Prob., Geometry, Inter. Algebra, Number Theory, Prealgebra, and Precalculus. Each subject is further broken down by four methods, labeled PoT, CR, MACM, and IIPC; the figure itself does not spell out these abbreviations. A color bar at the bottom indicates the accuracy scale from 0% to 100%.

### Components/Axes

**Overall Structure:**

The image is organized into five distinct heatmap sections, each titled with the name of a language model. These sections are arranged in a 2x2 grid for the top four models, with the fifth model (Llama 4 Maverick) positioned below the center.

**Individual Heatmap Components:**

* **Model Titles:** Located at the top of each heatmap section.
    * GPT-4o-mini
    * Gemini 2.0 Flash
    * Mistral Small 3.2 24B
    * Gemma 3 27B
    * Llama 4 Maverick

* **Y-axis (Subjects):** Listed vertically on the left side of each heatmap.
    * Algebra
    * Count. & Prob.
    * Geometry
    * Inter. Algebra
    * Number Theory
    * Prealgebra
    * Precalculus

* **X-axis (Methods):** Listed horizontally at the bottom of each heatmap.
    * PoT
    * CR
    * MACM
    * IIPC

* **Color Bar (Legend):** Located at the bottom of the entire image.
    * **Label:** "Accuracy (%)"
    * **Scale:** Ranges from 0 to 100, with tick marks at 0, 20, 40, 60, 80, and 100.
    * **Color Gradient:** A gradient from dark purple (low accuracy) through blue, teal, and green to bright yellow (high accuracy).

### Detailed Analysis

The following data represents the accuracy percentages for each model, subject, and task. The color of each cell corresponds to the accuracy indicated by the color bar.

**1. GPT-4o-mini**

| Subject        | PoT     | CR      | MACM    | IIPC    |
|----------------|---------|---------|---------|---------|
| Algebra        | 94.88   | 91.16   | 89.30   | 95.35   |
| Count. & Prob. | 82.46   | 77.25   | 75.83   | 81.04   |
| Geometry       | 68.57   | 62.86   | 63.81   | 67.62   |
| Inter. Algebra | 73.95   | 63.26   | 60.00   | 72.09   |
| Number Theory  | 85.15   | 88.61   | 74.75   | 85.64   |
| Prealgebra     | 90.70   | 90.23   | 87.38   | 93.02   |
| Precalculus    | 72.56   | 62.79   | 57.67   | 72.09   |

**2. Gemini 2.0 Flash**

| Subject        | PoT     | CR      | MACM    | IIPC    |
|----------------|---------|---------|---------|---------|
| Algebra        | 98.14   | 97.21   | 96.73   | 99.53   |
| Count. & Prob. | 93.36   | 88.15   | 89.10   | 92.89   |
| Geometry       | 84.76   | 79.52   | 77.14   | 84.29   |
| Inter. Algebra | 91.63   | 89.30   | 88.37   | 91.16   |
| Number Theory  | 92.08   | 96.04   | 95.05   | 98.51   |
| Prealgebra     | 96.28   | 94.42   | 94.42   | 97.67   |
| Precalculus    | 91.63   | 86.05   | 90.23   | 94.88   |

**3. Mistral Small 3.2 24B**

| Subject        | PoT     | CR      | MACM    | IIPC    |
|----------------|---------|---------|---------|---------|
| Algebra        | 97.67   | 95.35   | 96.28   | 96.28   |
| Count. & Prob. | 91.00   | 80.57   | 81.99   | 91.00   |
| Geometry       | 80.00   | 71.90   | 70.95   | 82.38   |
| Inter. Algebra | 86.51   | 78.14   | 78.14   | 88.84   |
| Number Theory  | 96.53   | 92.08   | 88.61   | 94.55   |
| Prealgebra     | 93.02   | 90.70   | 91.16   | 94.88   |
| Precalculus    | 82.79   | 76.74   | 67.91   | 87.91   |

**4. Gemma 3 27B**

| Subject        | PoT     | CR      | MACM    | IIPC    |
|----------------|---------|---------|---------|---------|
| Algebra        | 98.14   | 97.21   | 97.67   | 98.60   |
| Count. & Prob. | 87.20   | 82.94   | 82.46   | 86.26   |
| Geometry       | 81.90   | 78.10   | 76.19   | 82.38   |
| Inter. Algebra | 83.72   | 82.79   | 82.79   | 88.37   |
| Number Theory  | 91.09   | 90.59   | 93.07   | 97.03   |
| Prealgebra     | 94.42   | 94.88   | 92.56   | 96.28   |
| Precalculus    | 86.51   | 82.79   | 82.33   | 85.12   |

**5. Llama 4 Maverick**

| Subject        | PoT     | CR      | MACM    | IIPC    |
|----------------|---------|---------|---------|---------|
| Algebra        | 95.81   | 97.21   | 98.14   | 98.60   |
| Count. & Prob. | 91.00   | 91.00   | 92.42   | 91.47   |
| Geometry       | 79.52   | 80.00   | 75.24   | 80.48   |
| Inter. Algebra | 83.72   | 80.00   | 84.19   | 87.44   |
| Number Theory  | 91.09   | 94.06   | 91.09   | 94.06   |
| Prealgebra     | 94.42   | 95.35   | 94.42   | 96.74   |
| Precalculus    | 86.98   | 85.12   | 85.12   | 89.77   |

### Key Observations

* **Top Performers:** Gemini 2.0 Flash and Llama 4 Maverick generally exhibit the highest accuracies, particularly in Algebra and Number Theory, often achieving scores above 95% and even approaching 100% in some cases (e.g., Gemini 2.0 Flash on Algebra IIPC).

* **Subject Difficulty:** Geometry appears to be the most challenging subject across all models, with accuracies consistently lower than other subjects, ranging from roughly 63% to 85%. Count. & Prob., Inter. Algebra, and Precalculus also show moderate difficulty.

* **Method Performance:** IIPC often yields the highest accuracy within a subject, with PoT usually close behind. MACM and CR tend to give the lowest scores, particularly in Geometry.

* **Model Strengths/Weaknesses:**
    * GPT-4o-mini shows strong performance in Algebra and Prealgebra but is weaker in Geometry, Inter. Algebra, and Precalculus.
    * Gemini 2.0 Flash is a strong all-around performer, especially in Algebra and Number Theory.
    * Mistral Small 3.2 24B performs well in Algebra and Number Theory but struggles more with Geometry.
    * Gemma 3 27B is competitive, with high scores in Algebra and Number Theory, but also shows moderate performance in Geometry.
    * Llama 4 Maverick excels in Algebra and shows strong performance in Prealgebra and Number Theory, but its Geometry scores are comparable to other models.

* **Color Consistency:** The color mapping appears consistent across all heatmaps, with darker purples representing lower accuracies and bright yellows representing higher accuracies, aligning with the provided color bar.

### Interpretation

This grid of heatmaps provides a comparative analysis of the mathematical reasoning capabilities of several large language models. Gemini 2.0 Flash posts the highest scores in most cells, followed by Llama 4 Maverick and Gemma 3 27B, then Mistral Small 3.2 24B; GPT-4o-mini scores lowest of the five in every subject and method.

The consistent lower performance in Geometry across most models indicates that this subject might require more sophisticated spatial reasoning or a deeper understanding of geometric principles that current models are still developing. Conversely, subjects like Algebra and Number Theory, which are more amenable to symbolic manipulation and logical deduction, show higher accuracy.

The variation in performance across methods (PoT, CR, MACM, IIPC) shows that the choice of solution method matters as well as the choice of model. IIPC and PoT generally score higher than CR and MACM, although this is not universally true: Llama 4 Maverick, for example, reaches its best Count. & Prob. score with MACM.

Overall, the data demonstrates the progress in LLM capabilities for mathematical problem-solving, while also pinpointing areas for future improvement, particularly in more complex or abstract reasoning domains like Geometry. The visual representation through heatmaps allows for quick identification of model strengths and weaknesses across different mathematical domains and task types.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Chart: Math Problem Solving Accuracy by Model

### Overview

This chart compares the accuracy of five large language models (LLMs), GPT-4o-mini, Gemini 2.0 Flash, Mistral Small 3.2 24B, Gemma 3 27B, and Llama 4 Maverick, on seven math topics: Algebra, Count. & Prob., Geometry, Inter. Algebra, Number Theory, Prealgebra, and Precalculus. Accuracy is given as a percentage, with each model scored on each topic under four methods: PoT, CR, MACM, and IIPC.

### Components/Axes

* **Models:** GPT-4o-mini, Gemini 2.0 Flash, Mistral Small 3.2 24B, Gemma 3 27B, Llama 4 Maverick (one heatmap panel per model).

* **Math Topics:** Algebra, Count. & Prob., Geometry, Inter. Algebra, Number Theory, Prealgebra, Precalculus (rows of each heatmap).

* **Methods:** PoT, CR, MACM, IIPC (columns of each heatmap).

* **Accuracy:** Percentage values displayed within each cell of the grid, representing the model's accuracy on a specific topic and method.

* **Color Coding:** The chart uses a color gradient to represent accuracy, running from dark purple (low accuracy) to bright yellow (high accuracy). The color bar at the bottom of the image maps colors to accuracy from 0% to 100%.

### Detailed Analysis or Content Details

**GPT-4o-mini:**

* Algebra: PoT - 94.88%, CR - 91.16%, MACM - 89.30%, IIPC - 95.35% (Trend: highest under IIPC, lowest under MACM)

* Count. & Prob.: PoT - 82.46%, CR - 77.25%, MACM - 75.83%, IIPC - 81.04% (Trend: highest under PoT, lowest under MACM)

* Geometry: PoT - 68.57%, CR - 62.86%, MACM - 63.81%, IIPC - 67.62% (Trend: highest under PoT, lowest under CR)

* Inter. Algebra: PoT - 73.95%, CR - 63.26%, MACM - 60.00%, IIPC - 72.09% (Trend: highest under PoT, lowest under MACM)

* Number Theory: PoT - 85.15%, CR - 88.61%, MACM - 74.75%, IIPC - 85.64% (Trend: highest under CR, lowest under MACM)

* Prealgebra: PoT - 90.70%, CR - 90.23%, MACM - 87.38%, IIPC - 93.02% (Trend: highest under IIPC, lowest under MACM)

* Precalculus: PoT - 72.56%, CR - 62.79%, MACM - 57.67%, IIPC - 72.09% (Trend: highest under PoT, lowest under MACM)

**Gemini 2.0 Flash:**

* Algebra: PoT - 98.14%, CR - 97.21%, MACM - 96.73%, IIPC - 99.53% (Trend: consistently high, highest under IIPC)

* Count. & Prob.: PoT - 93.36%, CR - 88.15%, MACM - 89.10%, IIPC - 92.89% (Trend: highest under PoT, lowest under CR)

* Geometry: PoT - 84.76%, CR - 79.52%, MACM - 77.14%, IIPC - 84.29% (Trend: highest under PoT, lowest under MACM)

* Inter. Algebra: PoT - 91.63%, CR - 89.30%, MACM - 88.37%, IIPC - 91.16% (Trend: relatively stable, highest under PoT)

* Number Theory: PoT - 92.08%, CR - 96.04%, MACM - 95.05%, IIPC - 98.51% (Trend: highest under IIPC, lowest under PoT)

* Prealgebra: PoT - 96.28%, CR - 94.42%, MACM - 94.42%, IIPC - 97.67% (Trend: relatively stable, highest under IIPC)

* Precalculus: PoT - 91.63%, CR - 86.05%, MACM - 90.23%, IIPC - 94.88% (Trend: highest under IIPC, lowest under CR)

**Mistral Small 3.2 24B:**

* Algebra: PoT - 97.67%, CR - 95.35%, MACM - 96.28%, IIPC - 96.28% (Trend: relatively stable, highest under PoT)

* Count. & Prob.: PoT - 91.00%, CR - 80.57%, MACM - 81.99%, IIPC - 91.00% (Trend: PoT and IIPC tied for highest, lowest under CR)

* Geometry: PoT - 80.00%, CR - 71.90%, MACM - 70.95%, IIPC - 82.38% (Trend: highest under IIPC, lowest under MACM)

* Inter. Algebra: PoT - 86.51%, CR - 78.14%, MACM - 78.14%, IIPC - 88.84% (Trend: highest under IIPC, CR and MACM tied for lowest)

* Number Theory: PoT - 96.53%, CR - 92.08%, MACM - 88.61%, IIPC - 94.55% (Trend: highest under PoT, lowest under MACM)

* Prealgebra: PoT - 93.02%, CR - 90.70%, MACM - 91.16%, IIPC - 94.88% (Trend: relatively stable, highest under IIPC)

* Precalculus: PoT - 82.79%, CR - 76.74%, MACM - 67.91%, IIPC - 87.91% (Trend: highest under IIPC, lowest under MACM)

**Gemma 3 27B:**

* Algebra: PoT - 98.14%, CR - 97.21%, MACM - 97.67%, IIPC - 98.60% (Trend: relatively stable, highest under IIPC)

* Count. & Prob.: PoT - 87.20%, CR - 82.94%, MACM - 82.46%, IIPC - 86.26% (Trend: highest under PoT, lowest under MACM)

* Geometry: PoT - 81.90%, CR - 78.10%, MACM - 76.19%, IIPC - 82.38% (Trend: highest under IIPC, lowest under MACM)

* Inter. Algebra: PoT - 83.72%, CR - 82.79%, MACM - 82.79%, IIPC - 88.37% (Trend: highest under IIPC, CR and MACM tied for lowest)

* Number Theory: PoT - 91.09%, CR - 90.59%, MACM - 93.07%, IIPC - 97.03% (Trend: highest under IIPC, lowest under CR)

* Prealgebra: PoT - 94.42%, CR - 94.88%, MACM - 92.56%, IIPC - 96.28% (Trend: relatively stable, highest under IIPC)

* Precalculus: PoT - 86.51%, CR - 82.79%, MACM - 82.33%, IIPC - 85.12% (Trend: highest under PoT, lowest under MACM)

**Llama 4 Maverick:**

* Algebra: PoT - 95.81%, CR - 97.21%, MACM - 98.14%, IIPC - 98.60% (Trend: highest under IIPC, lowest under PoT)

* Count. & Prob.: PoT - 91.00%, CR - 91.00%, MACM - 92.42%, IIPC - 91.47% (Trend: relatively stable, highest under MACM)

* Geometry: PoT - 79.52%, CR - 80.00%, MACM - 75.24%, IIPC - 80.48% (Trend: highest under IIPC, lowest under MACM)

* Inter. Algebra: PoT - 83.72%, CR - 80.00%, MACM - 84.19%, IIPC - 87.44% (Trend: highest under IIPC, lowest under CR)

* Number Theory: PoT - 91.09%, CR - 94.06%, MACM - 91.09%, IIPC - 94.06% (Trend: CR and IIPC tied for highest)

* Prealgebra: PoT - 94.42%, CR - 95.35%, MACM - 94.42%, IIPC - 96.74% (Trend: relatively stable, highest under IIPC)

* Precalculus: PoT - 86.98%, CR - 85.12%, MACM - 85.12%, IIPC - 89.77% (Trend: highest under IIPC, CR and MACM tied for lowest)

### Key Observations

* Gemini 2.0 Flash shows the highest accuracy in most topic and method combinations, with Llama 4 Maverick and Gemma 3 27B following.

* GPT-4o-mini records the lowest accuracy of the five models in every topic and method combination.

* Mistral Small 3.2 24B is competitive under PoT and IIPC but drops noticeably under CR and MACM, particularly in Geometry, Inter. Algebra, and Precalculus.

* Accuracy is lowest on Geometry for every model, with Inter. Algebra, Precalculus, and Count. & Prob. next.

* IIPC gives the highest or tied-highest accuracy in most topic rows, and PoT leads in most of the remaining rows.

### Interpretation

The data suggests that Gemini 2.0 Flash is the most proficient of the five LLMs across the tested topics and methods, with Llama 4 Maverick and Gemma 3 27B close behind. The higher scores under IIPC indicate that the solution method, and not only the model, affects accuracy; the figure alone does not explain why IIPC helps. The lower accuracy on Geometry, and to a lesser extent on Inter. Algebra and Precalculus, might indicate that these areas require more complex reasoning or specific knowledge that the models have not fully mastered. The differences in performance between the models highlight the importance of model architecture, training data, and fine-tuning techniques in achieving high accuracy on math problem-solving tasks. The color gradient provides a quick visual comparison of the models' strengths and weaknesses, allowing for easy identification of the best-performing models for specific math topics.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Accuracy Heatmaps for AI Models on Math Topics

### Overview

The image displays five heatmaps (color-coded accuracy tables) for different AI models, evaluating performance across seven math topics using four methods. A color scale (0–100% accuracy) is provided, with purple for low accuracy and yellow for high.

### Components/Axes

- **Models**: Five models, each with a table:
  - GPT-4o-mini (top-left)
  - Gemini 2.0 Flash (top-right)
  - Mistral Small 3.2 24B (middle-left)
  - Gemma 3 27B (middle-right)
  - Llama 4 Maverick (bottom)

- **Rows (Math Topics)**: Algebra, Count. & Prob., Geometry, Inter. Algebra, Number Theory, Prealgebra, Precalculus (vertical axis for each table).

- **Columns (Methods)**: PoT, CR, MACM, IIPC (horizontal axis for each table).

- **Color Scale**: Bottom of the image, gradient from purple (0%) to yellow (100%), labeled *“Accuracy (%)”*.

### Detailed Analysis (Per Model)

#### GPT-4o-mini

| Topic          | PoT    | CR     | MACM   | IIPC   |
|----------------|--------|--------|--------|--------|
| Algebra        | 94.88  | 91.16  | 89.30  | 95.35  |
| Count. & Prob. | 82.46  | 77.25  | 75.83  | 81.04  |
| Geometry       | 68.57  | 62.86  | 63.81  | 67.62  |
| Inter. Algebra | 73.95  | 63.26  | 60.00  | 72.09  |
| Number Theory  | 85.15  | 88.61  | 74.75  | 85.64  |
| Prealgebra     | 90.70  | 90.23  | 87.38  | 93.02  |
| Precalculus    | 72.56  | 62.79  | 57.67  | 72.09  |

#### Gemini 2.0 Flash

| Topic          | PoT    | CR     | MACM   | IIPC   |
|----------------|--------|--------|--------|--------|
| Algebra        | 98.14  | 97.21  | 96.73  | 99.53  |
| Count. & Prob. | 93.36  | 88.15  | 89.10  | 92.89  |
| Geometry       | 84.76  | 79.52  | 77.14  | 84.29  |
| Inter. Algebra | 91.63  | 89.30  | 88.37  | 91.16  |
| Number Theory  | 92.08  | 96.04  | 95.05  | 98.51  |
| Prealgebra     | 96.28  | 94.42  | 94.42  | 97.67  |
| Precalculus    | 91.63  | 86.05  | 90.23  | 94.88  |

#### Mistral Small 3.2 24B

| Topic          | PoT    | CR     | MACM   | IIPC   |
|----------------|--------|--------|--------|--------|
| Algebra        | 97.67  | 95.35  | 96.28  | 96.28  |
| Count. & Prob. | 91.00  | 80.57  | 81.99  | 91.00  |
| Geometry       | 80.00  | 71.90  | 70.95  | 82.38  |
| Inter. Algebra | 86.51  | 78.14  | 78.14  | 88.84  |
| Number Theory  | 96.53  | 92.08  | 88.61  | 94.55  |
| Prealgebra     | 93.02  | 90.70  | 91.16  | 94.88  |
| Precalculus    | 82.79  | 76.74  | 67.91  | 87.91  |

#### Gemma 3 27B

| Topic          | PoT    | CR     | MACM   | IIPC   |
|----------------|--------|--------|--------|--------|
| Algebra        | 98.14  | 97.21  | 97.67  | 98.60  |
| Count. & Prob. | 87.20  | 82.94  | 82.46  | 86.26  |
| Geometry       | 81.90  | 78.10  | 76.19  | 82.38  |
| Inter. Algebra | 83.72  | 82.79  | 82.79  | 88.37  |
| Number Theory  | 91.09  | 90.59  | 93.07  | 97.03  |
| Prealgebra     | 94.42  | 94.88  | 92.56  | 96.28  |
| Precalculus    | 86.51  | 82.79  | 82.33  | 85.12  |

#### Llama 4 Maverick

| Topic          | PoT    | CR     | MACM   | IIPC   |
|----------------|--------|--------|--------|--------|
| Algebra        | 95.81  | 97.21  | 98.14  | 98.60  |
| Count. & Prob. | 91.00  | 91.00  | 92.42  | 91.47  |
| Geometry       | 79.52  | 80.00  | 75.24  | 80.48  |
| Inter. Algebra | 83.72  | 80.00  | 84.19  | 87.44  |
| Number Theory  | 91.09  | 94.06  | 91.09  | 94.06  |
| Prealgebra     | 94.42  | 95.35  | 94.42  | 96.74  |
| Precalculus    | 86.98  | 85.12  | 85.12  | 89.77  |

### Key Observations

- **High-Accuracy Topics**: Algebra and Prealgebra consistently show high accuracy (e.g., Gemini 2.0 Flash’s Algebra IIPC=99.53, Llama 4 Maverick’s Prealgebra IIPC=96.74).

- **Low-Accuracy Topics**: Geometry and Precalculus often have lower accuracy (e.g., GPT-4o-mini’s Geometry CR=62.86, Mistral Small’s Precalculus MACM=67.91).

- **Method Performance**: IIPC frequently yields the highest accuracy (e.g., Gemini 2.0 Flash’s Algebra IIPC=99.53), while MACM often has the lowest (e.g., GPT-4o-mini’s Precalculus MACM=57.67).

- **Model Comparison**: Gemini 2.0 Flash and Llama 4 Maverick excel across topics, with Gemini 2.0 Flash’s Algebra IIPC=99.53 as a standout.

### Interpretation

The heatmaps reveal how AI models perform on math topics using different methods. High accuracy in Algebra/Prealgebra suggests these topics are more straightforward, while Geometry/Precalculus (with complex reasoning) are more challenging. IIPC’s consistent high performance implies it is an effective method for these tasks. The color gradient (purple to yellow) visually emphasizes accuracy differences, guiding model selection for math-related tasks. This data highlights which models/methods excel in specific topics, aiding in practical applications like educational AI or problem-solving tools.
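To illustrate how these tables could guide model selection for a given method, the sketch below ranks the five models by their unweighted mean accuracy in the IIPC column. It assumes the IIPC values in the tables above are transcribed correctly and treats all seven topics as equally weighted, which the figure does not confirm; the names `IIPC` and `ranking` are illustrative.

```python
from statistics import mean

# IIPC accuracy (%) per topic, in the order: Algebra, Count. & Prob., Geometry,
# Inter. Algebra, Number Theory, Prealgebra, Precalculus.
IIPC = {
    "GPT-4o-mini": [95.35, 81.04, 67.62, 72.09, 85.64, 93.02, 72.09],
    "Gemini 2.0 Flash": [99.53, 92.89, 84.29, 91.16, 98.51, 97.67, 94.88],
    "Mistral Small 3.2 24B": [96.28, 91.00, 82.38, 88.84, 94.55, 94.88, 87.91],
    "Gemma 3 27B": [98.60, 86.26, 82.38, 88.37, 97.03, 96.28, 85.12],
    "Llama 4 Maverick": [98.60, 91.47, 80.48, 87.44, 94.06, 96.74, 89.77],
}

# Unweighted mean over the seven topics for each model.
iipc_means = {model: mean(values) for model, values in IIPC.items()}

# Models sorted from highest to lowest mean IIPC accuracy.
ranking = sorted(iipc_means, key=iipc_means.get, reverse=True)

for model in ranking:
    print(f"{model}: {iipc_means[model]:.2f}%")
```

With these values, Gemini 2.0 Flash ranks first and GPT-4o-mini last; the three models in between are separated by less than one percentage point, so their order is sensitive to how topics are weighted.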


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap: AI Model Accuracy Across Math Subjects and Problem Types

### Overview

The image is a comparative heatmap visualizing the accuracy of five AI models (GPT-4o-mini, Gemini 2.0 Flash, Mistral Small 3.2 24B, Gemma 3 27B, and Llama 4 Maverick) across seven math subjects (Algebra, Count. & Prob., Geometry, Inter. Algebra, Number Theory, Prealgebra, Precalculus) and four problem types (PoT, CR, MACM, IIPC). Accuracy is represented via a color gradient (purple = low, yellow = high), with numerical values provided for each data point.

---

### Components/Axes

- **Y-Axis (Rows)**: Math subjects (Algebra, Count. & Prob., Geometry, Inter. Algebra, Number Theory, Prealgebra, Precalculus).

- **X-Axis (Columns)**: Problem types (PoT, CR, MACM, IIPC).

- **Legend**: Color gradient from purple (0%) to yellow (100%) representing accuracy percentages.

- **Sub-Charts**: Five distinct heatmaps, one per AI model, arranged in two rows (top: GPT-4o-mini, Gemini 2.0 Flash; bottom: Mistral Small 3.2 24B, Gemma 3 27B, Llama 4 Maverick).

---

### Detailed Analysis

#### GPT-4o-mini

- **Algebra**: 94.88% (PoT), 91.16% (CR), 89.30% (MACM), 95.35% (IIPC).

- **Count. & Prob.**: 82.46% (PoT), 77.25% (CR), 75.83% (MACM), 81.04% (IIPC).

- **Geometry**: 68.57% (PoT), 62.86% (CR), 63.81% (MACM), 67.62% (IIPC).

- **Inter. Algebra**: 73.95% (PoT), 63.26% (CR), 60.00% (MACM), 72.09% (IIPC).

- **Number Theory**: 85.15% (PoT), 88.61% (CR), 74.75% (MACM), 85.64% (IIPC).

- **Prealgebra**: 90.70% (PoT), 90.23% (CR), 87.38% (MACM), 93.02% (IIPC).

- **Precalculus**: 72.56% (PoT), 62.79% (CR), 57.67% (MACM), 72.09% (IIPC).

#### Gemini 2.0 Flash

- **Algebra**: 98.14% (PoT), 97.21% (CR), 96.73% (MACM), 99.53% (IIPC).

- **Count. & Prob.**: 93.36% (PoT), 88.15% (CR), 89.10% (MACM), 92.89% (IIPC).

- **Geometry**: 84.76% (PoT), 79.52% (CR), 77.14% (MACM), 84.29% (IIPC).

- **Inter. Algebra**: 91.63% (PoT), 89.30% (CR), 88.37% (MACM), 91.16% (IIPC).

- **Number Theory**: 92.08% (PoT), 96.04% (CR), 95.05% (MACM), 98.51% (IIPC).

- **Prealgebra**: 96.28% (PoT), 94.42% (CR), 94.42% (MACM), 97.67% (IIPC).

- **Precalculus**: 91.63% (PoT), 86.05% (CR), 90.23% (MACM), 94.88% (IIPC).

#### Mistral Small 3.2 24B

- **Algebra**: 97.67% (PoT), 95.35% (CR), 96.28% (MACM), 96.28% (IIPC).

- **Count. & Prob.**: 91.00% (PoT), 80.57% (CR), 81.99% (MACM), 91.00% (IIPC).

- **Geometry**: 80.00% (PoT), 71.90% (CR), 70.95% (MACM), 82.38% (IIPC).

- **Inter. Algebra**: 86.51% (PoT), 78.14% (CR), 78.14% (MACM), 88.84% (IIPC).

- **Number Theory**: 96.53% (PoT), 92.08% (CR), 88.61% (MACM), 94.55% (IIPC).

- **Prealgebra**: 93.02% (PoT), 90.70% (CR), 91.16% (MACM), 94.88% (IIPC).

- **Precalculus**: 82.79% (PoT), 76.74% (CR), 67.91% (MACM), 87.91% (IIPC).

#### Gemma 3 27B

- **Algebra**: 98.14% (PoT), 97.21% (CR), 97.67% (MACM), 98.60% (IIPC).

- **Count. & Prob.**: 87.20% (PoT), 82.94% (CR), 82.46% (MACM), 86.26% (IIPC).

- **Geometry**: 81.90% (PoT), 78.10% (CR), 76.19% (MACM), 82.38% (IIPC).

- **Inter. Algebra**: 83.72% (PoT), 82.79% (CR), 82.79% (MACM), 88.37% (IIPC).

- **Number Theory**: 91.09% (PoT), 90.59% (CR), 93.07% (MACM), 97.03% (IIPC).

- **Prealgebra**: 94.42% (PoT), 94.88% (CR), 92.56% (MACM), 96.28% (IIPC).

- **Precalculus**: 86.51% (PoT), 82.79% (CR), 82.33% (MACM), 85.12% (IIPC).

#### Llama 4 Maverick

- **Algebra**: 95.81% (PoT), 97.21% (CR), 98.14% (MACM), 98.60% (IIPC).

- **Count. & Prob.**: 91.00% (PoT), 91.00% (CR), 92.42% (MACM), 91.47% (IIPC).

- **Geometry**: 79.52% (PoT), 80.00% (CR), 75.24% (MACM), 80.48% (IIPC).

- **Inter. Algebra**: 83.72% (PoT), 80.00% (CR), 84.19% (MACM), 87.44% (IIPC).

- **Number Theory**: 91.09% (PoT), 94.06% (CR), 91.09% (MACM), 94.06% (IIPC).

- **Prealgebra**: 94.42% (PoT), 95.35% (CR), 94.42% (MACM), 96.74% (IIPC).

- **Precalculus**: 86.98% (PoT), 85.12% (CR), 85.12% (MACM), 89.77% (IIPC).

---

### Key Observations

1. **Gemini 2.0 Flash** achieves the highest accuracy in most subject and problem-type cells, with **Llama 4 Maverick** close behind, particularly in **Algebra (IIPC: 98.60%)** and **Precalculus (IIPC: 89.77%)**.

2. **Geometry** is the weakest subject on average for every model, with GPT-4o-mini (62.86% to 68.57%) and Mistral Small 3.2 24B (70.95% to 82.38%) showing the lowest Geometry scores.

3. **PoT** and **IIPC** generally yield higher accuracy than CR and MACM (e.g., GPT-4o-mini in Inter. Algebra: 73.95% for PoT vs. 60.00% for MACM).

4. **Gemini 2.0 Flash** excels in **Algebra (99.53% IIPC)** and **Number Theory (98.51% IIPC)**, but struggles slightly in Geometry (84.76% PoT).

5. **Mistral Small 3.2 24B** has its lowest accuracy in **Precalculus (MACM: 67.91%)** but performs well in **Number Theory (96.53% PoT)**.

---

### Interpretation

The data suggests that **Gemini 2.0 Flash** is the strongest model overall, while **Llama 4 Maverick** shows the least variation across problem types. **GPT-4o-mini** scores lowest of the five models in every cell, though it still reaches 89% or higher in Algebra. **Geometry** remains a consistent weak point for all models, indicating potential gaps in spatial reasoning or visualization capabilities. **PoT** and **IIPC** outperform CR and MACM in most cells, and IIPC matches or exceeds PoT more often than not, so neither CR nor MACM is the best choice for most subjects here. The figure alone does not show whether this reflects training data biases or architectural limitations. The heatmap highlights opportunities for improvement in Geometry and Precalculus, particularly for models like GPT-4o-mini and Mistral Small 3.2 24B.
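The relative standing of PoT and IIPC can be checked cell by cell against the values listed above. The following is a minimal Python sketch, assuming those values are transcribed correctly from the figure; the names `POT_VS_IIPC`, `head_to_head`, and `totals` are hypothetical.

```python
# (PoT, IIPC) accuracy pairs per subject, in the order: Algebra, Count. & Prob.,
# Geometry, Inter. Algebra, Number Theory, Prealgebra, Precalculus.
POT_VS_IIPC = {
    "GPT-4o-mini": [
        (94.88, 95.35), (82.46, 81.04), (68.57, 67.62), (73.95, 72.09),
        (85.15, 85.64), (90.70, 93.02), (72.56, 72.09),
    ],
    "Gemini 2.0 Flash": [
        (98.14, 99.53), (93.36, 92.89), (84.76, 84.29), (91.63, 91.16),
        (92.08, 98.51), (96.28, 97.67), (91.63, 94.88),
    ],
    "Mistral Small 3.2 24B": [
        (97.67, 96.28), (91.00, 91.00), (80.00, 82.38), (86.51, 88.84),
        (96.53, 94.55), (93.02, 94.88), (82.79, 87.91),
    ],
    "Gemma 3 27B": [
        (98.14, 98.60), (87.20, 86.26), (81.90, 82.38), (83.72, 88.37),
        (91.09, 97.03), (94.42, 96.28), (86.51, 85.12),
    ],
    "Llama 4 Maverick": [
        (95.81, 98.60), (91.00, 91.47), (79.52, 80.48), (83.72, 87.44),
        (91.09, 94.06), (94.42, 96.74), (86.98, 89.77),
    ],
}


def head_to_head(pairs):
    """Count subjects where IIPC beats PoT, PoT beats IIPC, or the two tie."""
    iipc_wins = sum(1 for pot, iipc in pairs if iipc > pot)
    pot_wins = sum(1 for pot, iipc in pairs if pot > iipc)
    return {"IIPC": iipc_wins, "PoT": pot_wins, "tie": len(pairs) - iipc_wins - pot_wins}


per_model = {model: head_to_head(pairs) for model, pairs in POT_VS_IIPC.items()}
totals = {key: sum(result[key] for result in per_model.values()) for key in ("IIPC", "PoT", "tie")}

for model, result in per_model.items():
    print(model, result)
print("total", totals)
```

On these values IIPC is ahead in 23 of the 35 model and subject pairs, PoT in 11, with one tie; GPT-4o-mini is the only model where PoT wins more subjects than IIPC.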
