## Heatmap: Model Performance on Math Problems

### Overview

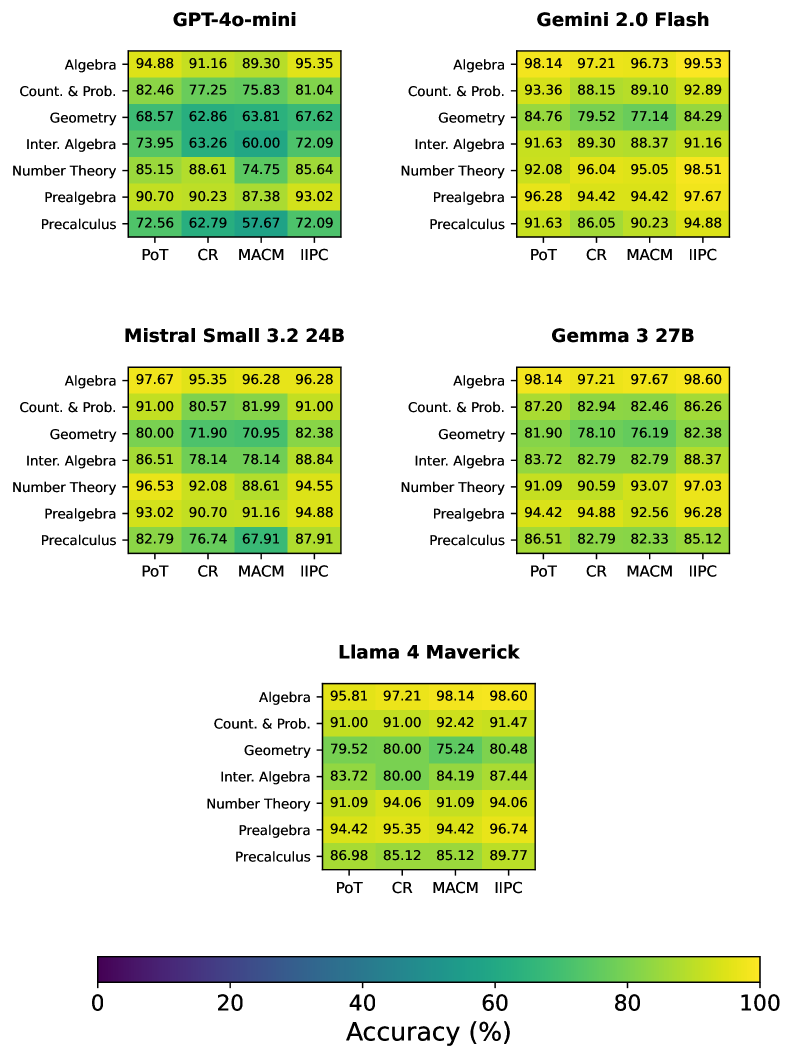

The figure presents five heatmaps, one per language model (GPT-4o-mini, Gemini 2.0 Flash, Mistral Small 3.2 24B, Gemma 3 27B, and Llama 4 Maverick), showing accuracy on seven math problem types (Algebra, Counting & Probability, Geometry, Intermediate Algebra, Number Theory, Prealgebra, and Precalculus) under four problem-solving approaches (PoT, CR, MACM, and IIPC). Color encodes accuracy, from dark purple (low) to bright yellow (high).

### Components/Axes

* **Model Names (Titles):** GPT-4o-mini, Gemini 2.0 Flash, Mistral Small 3.2 24B, Gemma 3 27B, Llama 4 Maverick. Each model has its own heatmap.

* **Y-Axis (Problem Types):** Algebra, Count. & Prob. (Counting & Probability), Geometry, Inter. Algebra (Intermediate Algebra), Number Theory, Prealgebra, Precalculus.

* **X-Axis (Problem-Solving Approaches):** PoT, CR, MACM, IIPC.

* **Color Scale:** A horizontal color bar at the bottom indicates accuracy, ranging from 0% (dark purple) to 100% (bright yellow).

* **Numerical Values:** Each cell in the heatmap contains a numerical value representing the accuracy percentage for a specific model, problem type, and problem-solving approach.
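
One way to hold the figure's data is a nested mapping from model to problem type to approach, mirroring the three axes listed above. The sketch below is a hypothetical layout (the names `HEATMAPS`, `APPROACHES`, and `accuracy` are illustrative, not from the source), populated with only the Algebra rows of two models to keep it short.

```python
# Hypothetical layout: model -> problem type -> approach -> accuracy (%).
# Only two Algebra rows from the figure are included to keep the sketch short.
APPROACHES = ("PoT", "CR", "MACM", "IIPC")  # x-axis order in every heatmap

HEATMAPS: dict[str, dict[str, dict[str, float]]] = {
    "GPT-4o-mini": {
        "Algebra": dict(zip(APPROACHES, (94.88, 91.16, 89.30, 95.35))),
    },
    "Gemini 2.0 Flash": {
        "Algebra": dict(zip(APPROACHES, (98.14, 97.21, 96.73, 99.53))),
    },
}


def accuracy(model: str, problem_type: str, approach: str) -> float:
    """Return one heatmap cell: accuracy (%) for a model, problem type and approach."""
    value = HEATMAPS[model][problem_type][approach]
    # Every cell is a percentage on the figure's 0 to 100 color scale.
    assert 0.0 <= value <= 100.0
    return value


print(accuracy("Gemini 2.0 Flash", "Algebra", "IIPC"))  # 99.53
```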

### Detailed Analysis

**GPT-4o-mini**

| Problem Type | PoT | CR | MACM | IIPC |
| :---------------- | :---- | :---- | :---- | :---- |
| Algebra | 94.88 | 91.16 | 89.30 | 95.35 |
| Count. & Prob. | 82.46 | 77.25 | 75.83 | 81.04 |
| Geometry | 68.57 | 62.86 | 63.81 | 67.62 |
| Inter. Algebra | 73.95 | 63.26 | 60.00 | 72.09 |
| Number Theory | 85.15 | 88.61 | 74.75 | 85.64 |
| Prealgebra | 90.70 | 90.23 | 87.38 | 93.02 |
| Precalculus | 72.56 | 62.79 | 57.67 | 72.09 |

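* **Trends:** Performance is highest in Algebra and Prealgebra. Geometry, Intermediate Algebra, and Precalculus trail well behind. Geometry has the lowest mean accuracy (about 65.7%), while the single lowest cell is Precalculus under MACM (57.67%). PoT gives the best score in four of the seven problem types.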
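
Whether Geometry or Precalculus counts as GPT-4o-mini's weakest subject depends on whether one averages a row or looks at its worst cell. A short sketch computing both from the table above (variable names are illustrative):

```python
from statistics import mean

APPROACHES = ("PoT", "CR", "MACM", "IIPC")

# GPT-4o-mini accuracies (%) transcribed from the table, in APPROACHES order.
GPT_4O_MINI = {
    "Algebra": (94.88, 91.16, 89.30, 95.35),
    "Count. & Prob.": (82.46, 77.25, 75.83, 81.04),
    "Geometry": (68.57, 62.86, 63.81, 67.62),
    "Inter. Algebra": (73.95, 63.26, 60.00, 72.09),
    "Number Theory": (85.15, 88.61, 74.75, 85.64),
    "Prealgebra": (90.70, 90.23, 87.38, 93.02),
    "Precalculus": (72.56, 62.79, 57.67, 72.09),
}

# Mean accuracy per problem type, averaged over the four approaches.
row_means = {subject: mean(scores) for subject, scores in GPT_4O_MINI.items()}

# Subject with the lowest mean, and the single lowest cell in the whole table.
weakest_subject = min(row_means, key=row_means.get)
lowest_cell = min(
    (
        (subject, approach, score)
        for subject, scores in GPT_4O_MINI.items()
        for approach, score in zip(APPROACHES, scores)
    ),
    key=lambda cell: cell[2],
)

for subject, avg in sorted(row_means.items(), key=lambda item: item[1]):
    print(f"{subject:<15} {avg:6.2f}")
print("lowest mean:", weakest_subject)
print("lowest cell:", lowest_cell)
```

The two views disagree here because Geometry is uniformly weak, while Precalculus is dragged down mainly by its MACM score.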

**Gemini 2.0 Flash**

| Problem Type | PoT | CR | MACM | IIPC |
| :---------------- | :---- | :---- | :---- | :---- |
| Algebra | 98.14 | 97.21 | 96.73 | 99.53 |
| Count. & Prob. | 93.36 | 88.15 | 89.10 | 92.89 |
| Geometry | 84.76 | 79.52 | 77.14 | 84.29 |
| Inter. Algebra | 91.63 | 89.30 | 88.37 | 91.16 |
| Number Theory | 92.08 | 96.04 | 95.05 | 98.51 |
| Prealgebra | 96.28 | 94.42 | 94.42 | 97.67 |
| Precalculus | 91.63 | 86.05 | 90.23 | 94.88 |

* **Trends:** Accuracy is high across all problem types and approaches; no cell falls below 77.14%. Geometry is the weakest subject (77.14% to 84.76%) but still scores well.

**Mistral Small 3.2 24B**

| Problem Type | PoT | CR | MACM | IIPC |
| :---------------- | :---- | :---- | :---- | :---- |
| Algebra | 97.67 | 95.35 | 96.28 | 96.28 |
| Count. & Prob. | 91.00 | 80.57 | 81.99 | 91.00 |
| Geometry | 80.00 | 71.90 | 70.95 | 82.38 |
| Inter. Algebra | 86.51 | 78.14 | 78.14 | 88.84 |
| Number Theory | 96.53 | 92.08 | 88.61 | 94.55 |
| Prealgebra | 93.02 | 90.70 | 91.16 | 94.88 |
| Precalculus | 82.79 | 76.74 | 67.91 | 87.91 |

* **Trends:** Performance is highest in Algebra and Number Theory. Geometry and Precalculus have the lowest scores, and the weakest cell is Precalculus under MACM (67.91%). In every problem type except Algebra (where MACM ties IIPC at 96.28%), both CR and MACM score below both PoT and IIPC.
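
To put a number on how far CR and MACM trail PoT and IIPC for Mistral Small 3.2 24B, the sketch below computes a per-row gap. The metric (mean of PoT and IIPC minus mean of CR and MACM, in percentage points) is chosen here for illustration and is not defined by the figure.

```python
# Mistral Small 3.2 24B accuracies (%) from the table, in PoT, CR, MACM, IIPC order.
MISTRAL = {
    "Algebra": (97.67, 95.35, 96.28, 96.28),
    "Count. & Prob.": (91.00, 80.57, 81.99, 91.00),
    "Geometry": (80.00, 71.90, 70.95, 82.38),
    "Inter. Algebra": (86.51, 78.14, 78.14, 88.84),
    "Number Theory": (96.53, 92.08, 88.61, 94.55),
    "Prealgebra": (93.02, 90.70, 91.16, 94.88),
    "Precalculus": (82.79, 76.74, 67.91, 87.91),
}


def pair_gap(scores: tuple[float, float, float, float]) -> float:
    """Illustrative metric: mean(PoT, IIPC) minus mean(CR, MACM), in points."""
    pot, cr, macm, iipc = scores
    return (pot + iipc) / 2 - (cr + macm) / 2


gaps = {subject: pair_gap(scores) for subject, scores in MISTRAL.items()}

# Largest gap first; a positive value means PoT/IIPC outperform CR/MACM on average.
for subject, gap in sorted(gaps.items(), key=lambda item: -item[1]):
    print(f"{subject:<15} {gap:+6.2f}")
```

Every gap is positive, and Precalculus shows the widest one (about 13 points), largely because of its low MACM score.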

**Gemma 3 27B**

| Problem Type | PoT | CR | MACM | IIPC |
| :---------------- | :---- | :---- | :---- | :---- |
| Algebra | 98.14 | 97.21 | 97.67 | 98.60 |
| Count. & Prob. | 87.20 | 82.94 | 82.46 | 86.26 |
| Geometry | 81.90 | 78.10 | 76.19 | 82.38 |
| Inter. Algebra | 83.72 | 82.79 | 82.79 | 88.37 |
| Number Theory | 91.09 | 90.59 | 93.07 | 97.03 |
| Prealgebra | 94.42 | 94.88 | 92.56 | 96.28 |
| Precalculus | 86.51 | 82.79 | 82.33 | 85.12 |

* **Trends:** Algebra shows the highest accuracy and Geometry the lowest. IIPC gives the highest accuracy in five of the seven problem types; PoT leads in Counting & Probability and Precalculus.
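
Which approach wins each row can be checked mechanically. A minimal sketch for the Gemma 3 27B table that keeps ties, so no co-winner is silently dropped (the helper name `best_approaches` is hypothetical):

```python
APPROACHES = ("PoT", "CR", "MACM", "IIPC")

# Gemma 3 27B accuracies (%) from the table, in APPROACHES order.
GEMMA = {
    "Algebra": (98.14, 97.21, 97.67, 98.60),
    "Count. & Prob.": (87.20, 82.94, 82.46, 86.26),
    "Geometry": (81.90, 78.10, 76.19, 82.38),
    "Inter. Algebra": (83.72, 82.79, 82.79, 88.37),
    "Number Theory": (91.09, 90.59, 93.07, 97.03),
    "Prealgebra": (94.42, 94.88, 92.56, 96.28),
    "Precalculus": (86.51, 82.79, 82.33, 85.12),
}


def best_approaches(scores: tuple[float, ...]) -> list[str]:
    """Return every approach that attains the row maximum (ties are kept)."""
    top = max(scores)
    return [approach for approach, score in zip(APPROACHES, scores) if score == top]


winners = {subject: best_approaches(scores) for subject, scores in GEMMA.items()}
iipc_rows = [subject for subject, best in winners.items() if "IIPC" in best]

print(f"IIPC best in {len(iipc_rows)} of {len(GEMMA)} problem types")  # IIPC best in 5 of 7 problem types
for subject, best in winners.items():
    if "IIPC" not in best:
        print(subject, "->", best)
```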

**Llama 4 Maverick**

| Problem Type | PoT | CR | MACM | IIPC |
| :---------------- | :---- | :---- | :---- | :---- |
| Algebra | 95.81 | 97.21 | 98.14 | 98.60 |
| Count. & Prob. | 91.00 | 91.00 | 92.42 | 91.47 |
| Geometry | 79.52 | 80.00 | 75.24 | 80.48 |
| Inter. Algebra | 83.72 | 80.00 | 84.19 | 87.44 |
| Number Theory | 91.09 | 94.06 | 91.09 | 94.06 |
| Prealgebra | 94.42 | 95.35 | 94.42 | 96.74 |
| Precalculus | 86.98 | 85.12 | 85.12 | 89.77 |

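* **Trends:** Performance is high across all subjects, with no cell below 75.24%. Geometry has the lowest scores. IIPC is best or tied for best in six of the seven problem types; the exception is Counting & Probability, where MACM leads (92.42%).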

### Key Observations

* **Algebra Performance:** All models achieve high accuracy in Algebra; no Algebra score falls below 89.30% (GPT-4o-mini with MACM).

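* **Geometry Performance:** Geometry has the lowest mean accuracy for every model, although it is not the lowest in every individual cell: under MACM, Precalculus scores lower for both GPT-4o-mini (57.67%) and Mistral Small 3.2 24B (67.91%).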

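* **Problem-Solving Approach Impact:** IIPC is the highest-scoring (or tied-highest) approach in 22 of the 35 model and problem-type combinations, and PoT in 12; CR and MACM usually score lower. The spread between approaches can be large, especially for weaker models: GPT-4o-mini's Precalculus accuracy ranges from 57.67% (MACM) to 72.56% (PoT). This suggests that the choice of approach matters.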

* **Model Variation:** Gemini 2.0 Flash has the highest mean accuracy (about 91.7% over its 28 cells), while GPT-4o-mini has the lowest (about 77.9%).
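
The cross-model observations can be checked in one pass over all five tables. This sketch, with illustrative names and values transcribed from the tables, computes each model's overall mean, its weakest subject by mean accuracy, and how often each approach attains (or ties) the top score in a row:

```python
from statistics import mean

APPROACHES = ("PoT", "CR", "MACM", "IIPC")
SUBJECTS = ("Algebra", "Count. & Prob.", "Geometry", "Inter. Algebra",
            "Number Theory", "Prealgebra", "Precalculus")

# Accuracy (%) per model; rows follow SUBJECTS, columns follow APPROACHES.
RESULTS = {
    "GPT-4o-mini": [
        (94.88, 91.16, 89.30, 95.35), (82.46, 77.25, 75.83, 81.04),
        (68.57, 62.86, 63.81, 67.62), (73.95, 63.26, 60.00, 72.09),
        (85.15, 88.61, 74.75, 85.64), (90.70, 90.23, 87.38, 93.02),
        (72.56, 62.79, 57.67, 72.09),
    ],
    "Gemini 2.0 Flash": [
        (98.14, 97.21, 96.73, 99.53), (93.36, 88.15, 89.10, 92.89),
        (84.76, 79.52, 77.14, 84.29), (91.63, 89.30, 88.37, 91.16),
        (92.08, 96.04, 95.05, 98.51), (96.28, 94.42, 94.42, 97.67),
        (91.63, 86.05, 90.23, 94.88),
    ],
    "Mistral Small 3.2 24B": [
        (97.67, 95.35, 96.28, 96.28), (91.00, 80.57, 81.99, 91.00),
        (80.00, 71.90, 70.95, 82.38), (86.51, 78.14, 78.14, 88.84),
        (96.53, 92.08, 88.61, 94.55), (93.02, 90.70, 91.16, 94.88),
        (82.79, 76.74, 67.91, 87.91),
    ],
    "Gemma 3 27B": [
        (98.14, 97.21, 97.67, 98.60), (87.20, 82.94, 82.46, 86.26),
        (81.90, 78.10, 76.19, 82.38), (83.72, 82.79, 82.79, 88.37),
        (91.09, 90.59, 93.07, 97.03), (94.42, 94.88, 92.56, 96.28),
        (86.51, 82.79, 82.33, 85.12),
    ],
    "Llama 4 Maverick": [
        (95.81, 97.21, 98.14, 98.60), (91.00, 91.00, 92.42, 91.47),
        (79.52, 80.00, 75.24, 80.48), (83.72, 80.00, 84.19, 87.44),
        (91.09, 94.06, 91.09, 94.06), (94.42, 95.35, 94.42, 96.74),
        (86.98, 85.12, 85.12, 89.77),
    ],
}

# Overall mean over all 28 cells of each model's heatmap.
means = {model: mean(s for row in rows for s in row) for model, rows in RESULTS.items()}

# Subject with the lowest mean accuracy (averaged over the four approaches) per model.
weakest = {
    model: min(zip(SUBJECTS, rows), key=lambda pair: mean(pair[1]))[0]
    for model, rows in RESULTS.items()
}

# Count how often each approach attains the row maximum; ties credit every tied approach.
top_counts = dict.fromkeys(APPROACHES, 0)
for rows in RESULTS.values():
    for row in rows:
        top = max(row)
        for approach, score in zip(APPROACHES, row):
            if score == top:
                top_counts[approach] += 1

for model in RESULTS:
    print(f"{model:<22} mean {means[model]:5.2f}  weakest: {weakest[model]}")
print("top (or tied) per row:", top_counts)
```

Because ties credit every tied approach, the counts can sum to more than the 35 rows; here two rows are ties (Mistral Small 3.2 24B on Counting & Probability and Llama 4 Maverick on Number Theory).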

### Interpretation

The heatmaps compare how well different language models solve various types of math problems. The data suggest that while all models perform well in Algebra, Geometry poses a greater challenge. The choice of problem-solving approach (PoT, CR, MACM, IIPC) also affects accuracy, with IIPC most often giving the best result. Gemini 2.0 Flash appears to be the most robust model, with the highest mean accuracy and no score below 77% for any problem type or approach. These findings can inform the selection of models and problem-solving strategies for specific mathematical tasks. The lower Geometry performance across all models may indicate a need for further training or refinement in geometric reasoning.