## Chart: Math Problem Solving Accuracy by Model

### Overview

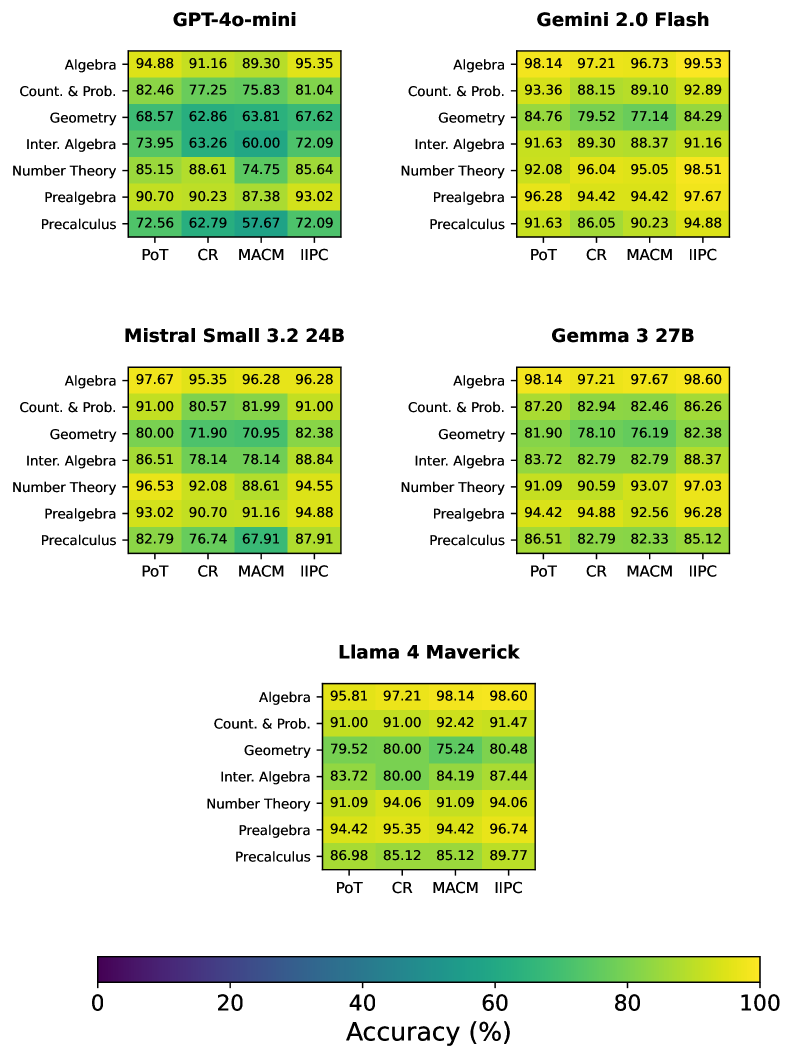

This chart compares the accuracy of five large language models (LLMs) on six math topics. The models are GPT-4o-mini, Gemini 2.0 Flash, Mistral Small 3.2 24B, Gemma 3 27B, and Llama 4 Maverick; the topics are Algebra, Count. & Prob., Geometry, Inter. Algebra, Number Theory, and Prealgebra. Accuracy is reported as a percentage. Each model has one score per topic for each of four datasets (PoT, CR, MACM, and IIPC), giving 120 cells in total.

### Components/Axes

* **Models:** GPT-4o-mini, Gemini 2.0 Flash, Mistral Small 3.2 24B, Gemma 3 27B, Llama 4 Maverick (arranged vertically).

* **Math Topics:** Algebra, Count. & Prob., Geometry, Inter. Algebra, Number Theory, Prealgebra (arranged horizontally).

* **Datasets:** PoT, CR, MACM, IIPC (represented as columns within each math topic).

* **Accuracy:** Percentage values displayed within each cell of the grid, representing the model's accuracy on a specific topic and dataset.

* **Color Coding:** The chart uses a color gradient to represent accuracy, with darker shades indicating higher accuracy. The legend at the bottom-right indicates the accuracy range corresponding to each color.

### Detailed Analysis

**GPT-4o-mini:**

* Algebra: PoT - 94.88%, CR - 91.16%, MACM - 89.30%, IIPC - 95.35% (Trend: Decreasing from PoT to MACM, then highest on IIPC)

* Count. & Prob.: PoT - 82.46%, CR - 77.25%, MACM - 75.83%, IIPC - 81.04% (Trend: Highest on PoT, decreasing to MACM, partial recovery on IIPC)

* Geometry: PoT - 68.57%, CR - 62.86%, MACM - 63.81%, IIPC - 67.62% (Trend: Highest on PoT, lower on CR and MACM, partial recovery on IIPC)

* Inter. Algebra: PoT - 73.95%, CR - 63.26%, MACM - 60.00%, IIPC - 72.09% (Trend: Highest on PoT, sharp drop on CR and MACM, partial recovery on IIPC)

* Number Theory: PoT - 85.15%, CR - 88.61%, MACM - 74.75%, IIPC - 85.64% (Trend: Fluctuating, highest on CR)

* Prealgebra: PoT - 90.70%, CR - 90.23%, MACM - 87.38%, IIPC - 93.02% (Trend: Relatively stable, increasing to IIPC)

**Gemini 2.0 Flash:**

* Algebra: PoT - 98.14%, CR - 97.21%, MACM - 97.69%, IIPC - 99.53% (Trend: Consistently high, increasing to IIPC)

* Count. & Prob.: PoT - 93.36%, CR - 88.15%, MACM - 89.10%, IIPC - 92.89% (Trend: Decreasing from PoT to CR, then increasing)

* Geometry: PoT - 84.76%, CR - 79.52%, MACM - 77.14%, IIPC - 84.29% (Trend: Decreasing from PoT to MACM, then increasing)

* Inter. Algebra: PoT - 91.63%, CR - 89.30%, MACM - 88.37%, IIPC - 91.16% (Trend: Relatively stable)

* Number Theory: PoT - 92.08%, CR - 96.04%, MACM - 95.05%, IIPC - 98.51% (Trend: Lowest on PoT, highest on IIPC)

* Prealgebra: PoT - 96.28%, CR - 94.42%, MACM - 94.42%, IIPC - 97.67% (Trend: Relatively stable, increasing to IIPC)

**Mistral Small 3.2 24B:**

* Algebra: PoT - 97.67%, CR - 95.35%, MACM - 96.28%, IIPC - 96.28% (Trend: Relatively stable)

* Count. & Prob.: PoT - 91.00%, CR - 80.57%, MACM - 81.99%, IIPC - 91.00% (Trend: Decreasing from PoT to CR, then increasing)

* Geometry: PoT - 80.00%, CR - 71.90%, MACM - 70.95%, IIPC - 82.38% (Trend: Decreasing from PoT to MACM, then highest on IIPC)

* Inter. Algebra: PoT - 86.51%, CR - 78.14%, MACM - 78.14%, IIPC - 88.84% (Trend: Lower on CR and MACM, highest on IIPC)

* Number Theory: PoT - 96.53%, CR - 92.08%, MACM - 88.61%, IIPC - 94.55% (Trend: Decreasing from PoT to MACM, then increasing)

* Prealgebra: PoT - 93.02%, CR - 90.70%, MACM - 91.16%, IIPC - 93.91% (Trend: Relatively stable)

**Gemma 3 27B:**

* Algebra: PoT - 98.14%, CR - 97.21%, MACM - 97.67%, IIPC - 98.60% (Trend: Relatively stable, increasing to IIPC)

* Count. & Prob.: PoT - 87.20%, CR - 82.94%, MACM - 82.46%, IIPC - 86.26% (Trend: Decreasing from PoT to MACM, then increasing)

* Geometry: PoT - 81.90%, CR - 78.10%, MACM - 76.19%, IIPC - 82.38% (Trend: Decreasing from PoT to MACM, then highest on IIPC)

* Inter. Algebra: PoT - 83.72%, CR - 82.79%, MACM - 82.79%, IIPC - 88.37% (Trend: Nearly flat from PoT to MACM, then a clear increase on IIPC)

* Number Theory: PoT - 91.09%, CR - 90.59%, MACM - 93.07%, IIPC - 97.03% (Trend: Increasing to IIPC)

* Prealgebra: PoT - 86.51%, CR - 82.79%, MACM - 83.33%, IIPC - 85.12% (Trend: Relatively stable)

**Llama 4 Maverick:**

* Algebra: PoT - 95.61%, CR - 91.92%, MACM - 91.48%, IIPC - 96.80% (Trend: Lower on CR and MACM, highest on IIPC)

* Count. & Prob.: PoT - 81.17%, CR - 74.20%, MACM - 74.60%, IIPC - 81.67% (Trend: Lower on CR and MACM, highest on IIPC)

* Geometry: PoT - 73.38%, CR - 65.86%, MACM - 65.44%, IIPC - 74.48% (Trend: Lower on CR and MACM, highest on IIPC)

* Inter. Algebra: PoT - 74.95%, CR - 66.22%, MACM - 62.96%, IIPC - 78.14% (Trend: Decreasing from PoT to MACM, then highest on IIPC)

* Number Theory: PoT - 88.02%, CR - 84.56%, MACM - 82.54%, IIPC - 90.70% (Trend: Decreasing from PoT to MACM, then highest on IIPC)

* Prealgebra: PoT - 92.26%, CR - 89.33%, MACM - 87.16%, IIPC - 94.00% (Trend: Decreasing from PoT to MACM, then highest on IIPC)
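The per-row trend notes can be checked mechanically rather than by eye. The following is a minimal sketch, assuming the GPT-4o-mini values exactly as listed above; `DATASETS`, `GPT_4O_MINI`, and `summarize` are hypothetical names introduced for illustration and are not part of the chart.

```python
# Column order used in every row of the lists above.
DATASETS = ("PoT", "CR", "MACM", "IIPC")

# GPT-4o-mini accuracy (%) per topic, transcribed from the list above.
GPT_4O_MINI = {
    "Algebra":        (94.88, 91.16, 89.30, 95.35),
    "Count. & Prob.": (82.46, 77.25, 75.83, 81.04),
    "Geometry":       (68.57, 62.86, 63.81, 67.62),
    "Inter. Algebra": (73.95, 63.26, 60.00, 72.09),
    "Number Theory":  (85.15, 88.61, 74.75, 85.64),
    "Prealgebra":     (90.70, 90.23, 87.38, 93.02),
}


def summarize(row):
    """Return (best dataset, worst dataset, spread in percentage points)."""
    best = max(range(len(row)), key=row.__getitem__)
    worst = min(range(len(row)), key=row.__getitem__)
    return DATASETS[best], DATASETS[worst], round(row[best] - row[worst], 2)


if __name__ == "__main__":
    for topic, row in GPT_4O_MINI.items():
        best, worst, spread = summarize(row)
        print(f"{topic}: best={best}, worst={worst}, spread={spread:.2f} pp")
```

Reporting the best column, the worst column, and the spread avoids implying an ordering among the four datasets, which the chart does not define.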

### Key Observations

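* Gemini 2.0 Flash has the highest or tied-highest accuracy in 23 of the 24 topic-dataset cells. The only exception is Number Theory on PoT, where Mistral Small 3.2 24B scores 96.53% against Gemini's 92.08%.

* Mistral Small 3.2 24B and Gemma 3 27B form a second tier. Gemma is ahead on Algebra and, apart from a tie on IIPC, on Geometry; Mistral is ahead on Prealgebra in every column; the remaining topics are mixed.

* GPT-4o-mini and Llama 4 Maverick have the lowest scores in most cells, particularly in Geometry and Inter. Algebra. The lowest value in the chart is GPT-4o-mini's 60.00% on Inter. Algebra (MACM). Prealgebra is the exception: there Gemma 3 27B is the weakest model.

* Averaged over the four datasets, Geometry and Inter. Algebra are the two lowest-scoring topics for every model, and Algebra is the highest.

* IIPC scores exceed CR scores in 29 of the 30 model-topic pairs and are at least equal to MACM scores in all 30. PoT is the closest competitor: it exceeds IIPC in 10 pairs and ties it in 1.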
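Aggregate statements like these can be recomputed from the listed values. The sketch below assumes the percentages are transcribed correctly from the Detailed Analysis lists and weights every cell equally, since the chart does not give per-topic problem counts. All names (`GRID`, `model_means`, `topic_means`, `iipc_record`) are hypothetical helpers introduced for illustration.

```python
from statistics import mean

DATASETS = ("PoT", "CR", "MACM", "IIPC")

# Accuracy (%): model -> topic -> scores in DATASETS order.
GRID = {
    "GPT-4o-mini": {
        "Algebra":        (94.88, 91.16, 89.30, 95.35),
        "Count. & Prob.": (82.46, 77.25, 75.83, 81.04),
        "Geometry":       (68.57, 62.86, 63.81, 67.62),
        "Inter. Algebra": (73.95, 63.26, 60.00, 72.09),
        "Number Theory":  (85.15, 88.61, 74.75, 85.64),
        "Prealgebra":     (90.70, 90.23, 87.38, 93.02),
    },
    "Gemini 2.0 Flash": {
        "Algebra":        (98.14, 97.21, 97.69, 99.53),
        "Count. & Prob.": (93.36, 88.15, 89.10, 92.89),
        "Geometry":       (84.76, 79.52, 77.14, 84.29),
        "Inter. Algebra": (91.63, 89.30, 88.37, 91.16),
        "Number Theory":  (92.08, 96.04, 95.05, 98.51),
        "Prealgebra":     (96.28, 94.42, 94.42, 97.67),
    },
    "Mistral Small 3.2 24B": {
        "Algebra":        (97.67, 95.35, 96.28, 96.28),
        "Count. & Prob.": (91.00, 80.57, 81.99, 91.00),
        "Geometry":       (80.00, 71.90, 70.95, 82.38),
        "Inter. Algebra": (86.51, 78.14, 78.14, 88.84),
        "Number Theory":  (96.53, 92.08, 88.61, 94.55),
        "Prealgebra":     (93.02, 90.70, 91.16, 93.91),
    },
    "Gemma 3 27B": {
        "Algebra":        (98.14, 97.21, 97.67, 98.60),
        "Count. & Prob.": (87.20, 82.94, 82.46, 86.26),
        "Geometry":       (81.90, 78.10, 76.19, 82.38),
        "Inter. Algebra": (83.72, 82.79, 82.79, 88.37),
        "Number Theory":  (91.09, 90.59, 93.07, 97.03),
        "Prealgebra":     (86.51, 82.79, 83.33, 85.12),
    },
    "Llama 4 Maverick": {
        "Algebra":        (95.61, 91.92, 91.48, 96.80),
        "Count. & Prob.": (81.17, 74.20, 74.60, 81.67),
        "Geometry":       (73.38, 65.86, 65.44, 74.48),
        "Inter. Algebra": (74.95, 66.22, 62.96, 78.14),
        "Number Theory":  (88.02, 84.56, 82.54, 90.70),
        "Prealgebra":     (92.26, 89.33, 87.16, 94.00),
    },
}


def model_means(grid):
    """Unweighted mean accuracy over all topics and datasets, per model."""
    return {m: mean(s for row in topics.values() for s in row)
            for m, topics in grid.items()}


def topic_means(grid):
    """Unweighted mean accuracy over all models and datasets, per topic."""
    topics = next(iter(grid.values())).keys()
    return {t: mean(s for rows in grid.values() for s in rows[t])
            for t in topics}


def iipc_record(grid, other):
    """Count cells where IIPC is (above, equal to, below) `other`."""
    i, j = DATASETS.index("IIPC"), DATASETS.index(other)
    wins = ties = losses = 0
    for topics in grid.values():
        for row in topics.values():
            if row[i] > row[j]:
                wins += 1
            elif row[i] == row[j]:
                ties += 1
            else:
                losses += 1
    return wins, ties, losses


if __name__ == "__main__":
    for name, avg in sorted(model_means(GRID).items(), key=lambda kv: -kv[1]):
        print(f"{name}: {avg:.2f}")
    for name, avg in sorted(topic_means(GRID).items(), key=lambda kv: kv[1]):
        print(f"{name}: {avg:.2f}")
    for other in ("PoT", "CR", "MACM"):
        print(f"IIPC vs {other}: {iipc_record(GRID, other)}")
```

An unweighted mean is the simplest choice here. If the topics contain different numbers of problems, a problem-weighted mean would shift the absolute values.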

### Interpretation

The data suggests that Gemini 2.0 Flash is the most accurate of the five models on the tested topics and datasets, with Mistral Small 3.2 24B and Gemma 3 27B close behind and GPT-4o-mini and Llama 4 Maverick trailing. The lower accuracy on Geometry and Inter. Algebra might indicate that these areas require more complex reasoning or specific knowledge that the models have not fully mastered. The higher scores on the IIPC dataset relative to CR and MACM could be due to its specific characteristics or to the models being better trained on similar data; the chart alone cannot distinguish between these explanations, and PoT scores remain close to IIPC scores throughout. The differences between models are consistent with differences in architecture, training data, and fine-tuning, although the chart does not isolate any of these factors. The color gradient provides a quick visual comparison of the models' strengths and weaknesses, making it easy to identify the best-performing model for a given math topic.