## Accuracy Heatmaps for AI Models on Math Topics

### Overview

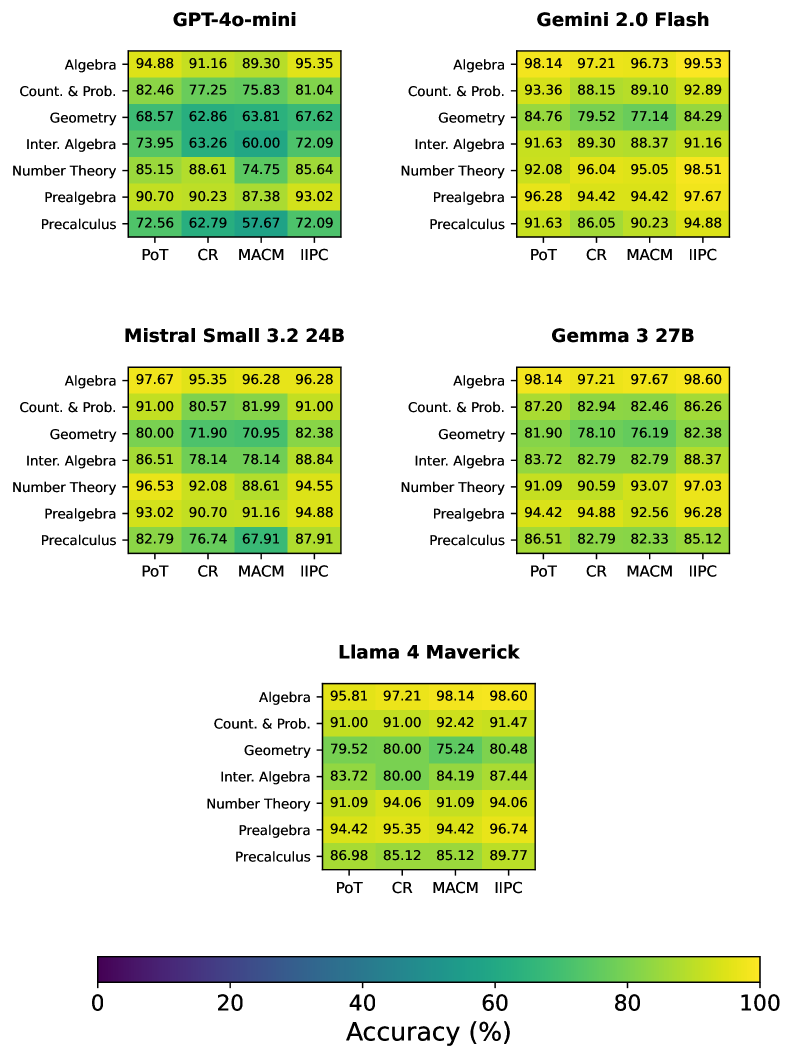

The image displays five heatmaps (color-coded accuracy tables), one per AI model, each showing performance on seven math topics under four methods. A single color scale covers 0–100% accuracy, running from purple (low) to yellow (high).

### Components/Axes

- **Models**: Five models, each with its own table:
  - GPT-4o-mini (top-left)
  - Gemini 2.0 Flash (top-right)
  - Mistral Small 3.2 24B (middle-left)
  - Gemma 3 27B (middle-right)
  - Llama 4 Maverick (bottom)

- **Rows (Math Topics)**: Algebra, Counting & Probability (Count. & Prob.), Geometry, Intermediate Algebra (Inter. Algebra), Number Theory, Prealgebra, Precalculus (vertical axis of each table).

- **Columns (Methods)**: PoT, CR, MACM, IIPC (horizontal axis for each table).

- **Color Scale**: Bottom of the image, gradient from purple (0%) to yellow (100%), labeled *“Accuracy (%)”*.
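To make the color encoding concrete, here is a minimal sketch that maps an accuracy value onto a two-stop purple-to-yellow scale and applies it to the GPT-4o-mini grid. The endpoint colors (`PURPLE`, `YELLOW`) are an assumption borrowed from the two ends of matplotlib's viridis colormap, and straight linear interpolation between them is a simplification: the figure's actual colormap is not stated, and viridis itself passes through blue and green. All helper names are hypothetical.

```python
# Hypothetical endpoint colours (R, G, B), borrowed from the two ends of
# matplotlib's viridis colormap; the original figure's colormap is unknown.
PURPLE = (0x44, 0x01, 0x54)  # 0% accuracy
YELLOW = (0xFD, 0xE7, 0x25)  # 100% accuracy

METHODS = ["PoT", "CR", "MACM", "IIPC"]
TOPICS = ["Algebra", "Count. & Prob.", "Geometry", "Inter. Algebra",
          "Number Theory", "Prealgebra", "Precalculus"]

# GPT-4o-mini accuracies (%) from its table: rows follow TOPICS,
# columns follow METHODS.
GPT_4O_MINI = [
    [94.88, 91.16, 89.30, 95.35],
    [82.46, 77.25, 75.83, 81.04],
    [68.57, 62.86, 63.81, 67.62],
    [73.95, 63.26, 60.00, 72.09],
    [85.15, 88.61, 74.75, 85.64],
    [90.70, 90.23, 87.38, 93.02],
    [72.56, 62.79, 57.67, 72.09],
]


def accuracy_to_rgb(acc):
    """Linearly interpolate between PURPLE (0%) and YELLOW (100%)."""
    if not 0.0 <= acc <= 100.0:
        raise ValueError(f"accuracy must be in [0, 100], got {acc}")
    t = acc / 100.0
    return tuple(round(p + t * (y - p)) for p, y in zip(PURPLE, YELLOW))


def to_hex(rgb):
    """Format an (R, G, B) tuple as a #RRGGBB string."""
    return "#{:02X}{:02X}{:02X}".format(*rgb)


def color_grid(rows):
    """Map every cell of a topic-by-method table to a hex colour."""
    return [[to_hex(accuracy_to_rgb(v)) for v in row] for row in rows]


if __name__ == "__main__":
    print(f"{'':<15}", " ".join(f"{m:<7}" for m in METHODS))
    for topic, colors in zip(TOPICS, color_grid(GPT_4O_MINI)):
        print(f"{topic:<15}", " ".join(colors))
```

Higher accuracy pushes the red and green channels up and the blue channel down, so brighter, yellower cells mark the stronger topic/method combinations.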

### Detailed Analysis (Per Model)

All values are accuracy (%).

#### GPT-4o-mini

| Topic          |   PoT |    CR |  MACM |  IIPC |
|----------------|------:|------:|------:|------:|
| Algebra        | 94.88 | 91.16 | 89.30 | 95.35 |
| Count. & Prob. | 82.46 | 77.25 | 75.83 | 81.04 |
| Geometry       | 68.57 | 62.86 | 63.81 | 67.62 |
| Inter. Algebra | 73.95 | 63.26 | 60.00 | 72.09 |
| Number Theory  | 85.15 | 88.61 | 74.75 | 85.64 |
| Prealgebra     | 90.70 | 90.23 | 87.38 | 93.02 |
| Precalculus    | 72.56 | 62.79 | 57.67 | 72.09 |

#### Gemini 2.0 Flash

| Topic          |   PoT |    CR |  MACM |  IIPC |
|----------------|------:|------:|------:|------:|
| Algebra        | 98.14 | 97.21 | 96.73 | 99.53 |
| Count. & Prob. | 93.36 | 88.15 | 89.10 | 92.89 |
| Geometry       | 84.76 | 79.52 | 77.14 | 84.29 |
| Inter. Algebra | 91.63 | 89.30 | 88.37 | 91.16 |
| Number Theory  | 92.08 | 96.04 | 95.05 | 98.51 |
| Prealgebra     | 96.28 | 94.42 | 94.42 | 97.67 |
| Precalculus    | 91.63 | 86.05 | 90.23 | 94.88 |

#### Mistral Small 3.2 24B

| Topic          |   PoT |    CR |  MACM |  IIPC |
|----------------|------:|------:|------:|------:|
| Algebra        | 97.67 | 95.35 | 96.28 | 96.28 |
| Count. & Prob. | 91.00 | 80.57 | 81.99 | 91.00 |
| Geometry       | 80.00 | 71.90 | 70.95 | 82.38 |
| Inter. Algebra | 86.51 | 78.14 | 78.14 | 88.84 |
| Number Theory  | 96.53 | 92.08 | 88.61 | 94.55 |
| Prealgebra     | 93.02 | 90.70 | 91.16 | 94.88 |
| Precalculus    | 82.79 | 76.74 | 67.91 | 87.91 |

#### Gemma 3 27B

| Topic          |   PoT |    CR |  MACM |  IIPC |
|----------------|------:|------:|------:|------:|
| Algebra        | 98.14 | 97.21 | 97.67 | 98.60 |
| Count. & Prob. | 87.20 | 82.94 | 82.46 | 86.26 |
| Geometry       | 81.90 | 78.10 | 76.19 | 82.38 |
| Inter. Algebra | 83.72 | 82.79 | 82.79 | 88.37 |
| Number Theory  | 91.09 | 90.59 | 93.07 | 97.03 |
| Prealgebra     | 94.42 | 94.88 | 92.56 | 96.28 |
| Precalculus    | 86.51 | 82.79 | 82.33 | 85.12 |

#### Llama 4 Maverick

| Topic          |   PoT |    CR |  MACM |  IIPC |
|----------------|------:|------:|------:|------:|
| Algebra        | 95.81 | 97.21 | 98.14 | 98.60 |
| Count. & Prob. | 91.00 | 91.00 | 92.42 | 91.47 |
| Geometry       | 79.52 | 80.00 | 75.24 | 80.48 |
| Inter. Algebra | 83.72 | 80.00 | 84.19 | 87.44 |
| Number Theory  | 91.09 | 94.06 | 91.09 | 94.06 |
| Prealgebra     | 94.42 | 95.35 | 94.42 | 96.74 |
| Precalculus    | 86.98 | 85.12 | 85.12 | 89.77 |

### Key Observations

- **High-Accuracy Topics**: Algebra has the highest mean accuracy for all five models, and Prealgebra is consistently high as well (e.g., Gemini 2.0 Flash’s Algebra IIPC=99.53, Llama 4 Maverick’s Prealgebra IIPC=96.74).

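- **Low-Accuracy Topics**: Geometry has the lowest mean accuracy for all five models; Intermediate Algebra and Precalculus are also comparatively weak, especially for GPT-4o-mini (e.g., GPT-4o-mini’s Geometry CR=62.86 and Inter. Algebra MACM=60.00, Mistral Small’s Precalculus MACM=67.91).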

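- **Method Performance**: IIPC has the highest (or tied-highest) accuracy in 22 of the 35 model/topic rows (e.g., Gemini 2.0 Flash’s Algebra IIPC=99.53), while MACM has the lowest (or tied-lowest) in 23 of them (e.g., GPT-4o-mini’s Precalculus MACM=57.67, the lowest value in any table). GPT-4o-mini is the main exception: PoT is its best method in 4 of 7 topics.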

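- **Model Comparison**: Averaged over all 28 cells of each table, Gemini 2.0 Flash scores highest (about 91.7%) and Llama 4 Maverick second (about 89.4%), while GPT-4o-mini trails the other four models (about 77.9%). Gemini 2.0 Flash’s Algebra IIPC=99.53 is the highest single value in the figure.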
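The observations above can be re-derived from the transcribed tables. The sketch below stores the five tables as nested lists (the `ACCURACY` layout and all helper names are hypothetical) and counts how many of the 35 model/topic rows each method wins or loses, crediting every method in a tie. It also finds each model's strongest and weakest topic by mean accuracy and ranks the models by their mean over all 28 cells.

```python
from statistics import mean

METHODS = ["PoT", "CR", "MACM", "IIPC"]
TOPICS = ["Algebra", "Count. & Prob.", "Geometry", "Inter. Algebra",
          "Number Theory", "Prealgebra", "Precalculus"]

# Accuracy (%) transcribed from the five tables; rows follow TOPICS,
# columns follow METHODS.
ACCURACY = {
    "GPT-4o-mini": [
        [94.88, 91.16, 89.30, 95.35], [82.46, 77.25, 75.83, 81.04],
        [68.57, 62.86, 63.81, 67.62], [73.95, 63.26, 60.00, 72.09],
        [85.15, 88.61, 74.75, 85.64], [90.70, 90.23, 87.38, 93.02],
        [72.56, 62.79, 57.67, 72.09]],
    "Gemini 2.0 Flash": [
        [98.14, 97.21, 96.73, 99.53], [93.36, 88.15, 89.10, 92.89],
        [84.76, 79.52, 77.14, 84.29], [91.63, 89.30, 88.37, 91.16],
        [92.08, 96.04, 95.05, 98.51], [96.28, 94.42, 94.42, 97.67],
        [91.63, 86.05, 90.23, 94.88]],
    "Mistral Small 3.2 24B": [
        [97.67, 95.35, 96.28, 96.28], [91.00, 80.57, 81.99, 91.00],
        [80.00, 71.90, 70.95, 82.38], [86.51, 78.14, 78.14, 88.84],
        [96.53, 92.08, 88.61, 94.55], [93.02, 90.70, 91.16, 94.88],
        [82.79, 76.74, 67.91, 87.91]],
    "Gemma 3 27B": [
        [98.14, 97.21, 97.67, 98.60], [87.20, 82.94, 82.46, 86.26],
        [81.90, 78.10, 76.19, 82.38], [83.72, 82.79, 82.79, 88.37],
        [91.09, 90.59, 93.07, 97.03], [94.42, 94.88, 92.56, 96.28],
        [86.51, 82.79, 82.33, 85.12]],
    "Llama 4 Maverick": [
        [95.81, 97.21, 98.14, 98.60], [91.00, 91.00, 92.42, 91.47],
        [79.52, 80.00, 75.24, 80.48], [83.72, 80.00, 84.19, 87.44],
        [91.09, 94.06, 91.09, 94.06], [94.42, 95.35, 94.42, 96.74],
        [86.98, 85.12, 85.12, 89.77]],
}


def methods_at(row, pick):
    """Methods whose score equals pick(row), e.g. max or min; ties included."""
    target = pick(row)
    return {m for m, v in zip(METHODS, row) if v == target}


def count_rows(method, pick):
    """Number of (model, topic) rows in which `method` attains pick(row)."""
    return sum(method in methods_at(row, pick)
               for rows in ACCURACY.values() for row in rows)


def topic_by_mean(model, pick):
    """Topic whose mean over the four methods is selected by pick (max/min)."""
    rows = ACCURACY[model]
    return pick(TOPICS, key=lambda t: mean(rows[TOPICS.index(t)]))


def rank_models():
    """Models sorted by mean accuracy over all 28 cells, best first."""
    return sorted(ACCURACY,
                  key=lambda m: mean(v for row in ACCURACY[m] for v in row),
                  reverse=True)


if __name__ == "__main__":
    total = sum(len(rows) for rows in ACCURACY.values())
    for m in METHODS:
        print(f"{m:<5} best in {count_rows(m, max):>2}/{total}, "
              f"worst in {count_rows(m, min):>2}/{total}")
    for model in ACCURACY:
        print(f"{model}: strongest {topic_by_mean(model, max)}, "
              f"weakest {topic_by_mean(model, min)}")
    print("Ranking by overall mean:", rank_models())
```

Counting ties for every tied method keeps the tallies honest for rows such as Llama 4 Maverick's Number Theory, where CR and IIPC both reach 94.06.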

### Interpretation

The heatmaps show how each model’s accuracy on the seven math topics varies with the method used. Consistently high scores on Algebra and Prealgebra suggest these topics are easier for all five models, while the lower scores on Geometry, Intermediate Algebra, and Precalculus suggest that problems requiring more complex reasoning remain harder. IIPC’s strong showing suggests it is an effective method for these tasks, though not uniformly: PoT matches or beats it on several topics, particularly for GPT-4o-mini. The purple-to-yellow gradient makes these differences easy to spot, and the per-topic breakdown can help in choosing a model and method for a given kind of math problem, for example in educational AI or problem-solving tools.
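As an illustration of the model-selection use mentioned above, the following sketch assumes IIPC is the method in use and, from the IIPC column of each table alone, picks the highest-scoring model per topic and ranks models by mean IIPC accuracy. The `IIPC` mapping and the function names are hypothetical.

```python
from statistics import mean

TOPICS = ["Algebra", "Count. & Prob.", "Geometry", "Inter. Algebra",
          "Number Theory", "Prealgebra", "Precalculus"]

# IIPC column of each table (accuracy %), in TOPICS order.
IIPC = {
    "GPT-4o-mini":           [95.35, 81.04, 67.62, 72.09, 85.64, 93.02, 72.09],
    "Gemini 2.0 Flash":      [99.53, 92.89, 84.29, 91.16, 98.51, 97.67, 94.88],
    "Mistral Small 3.2 24B": [96.28, 91.00, 82.38, 88.84, 94.55, 94.88, 87.91],
    "Gemma 3 27B":           [98.60, 86.26, 82.38, 88.37, 97.03, 96.28, 85.12],
    "Llama 4 Maverick":      [98.60, 91.47, 80.48, 87.44, 94.06, 96.74, 89.77],
}


def best_model(topic):
    """Model with the highest IIPC accuracy on `topic` (first listed wins ties)."""
    i = TOPICS.index(topic)
    return max(IIPC, key=lambda m: IIPC[m][i])


def rank_by_iipc():
    """Models sorted by mean IIPC accuracy across the seven topics, best first."""
    return sorted(IIPC, key=lambda m: mean(IIPC[m]), reverse=True)


if __name__ == "__main__":
    for topic in TOPICS:
        print(f"{topic:<15} -> {best_model(topic)}")
    for model in rank_by_iipc():
        print(f"{mean(IIPC[model]):6.2f}  {model}")
```

Under IIPC, Gemini 2.0 Flash is the top model on every topic and Mistral Small 3.2 24B narrowly ranks ahead of Gemma 3 27B; averaged over all four methods instead, Gemma 3 27B comes out ahead of Mistral Small 3.2 24B, so the choice of method can change the ordering.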