## Line Chart: Accuracy vs. Training Steps for Three Reinforcement Learning Configurations

### Overview

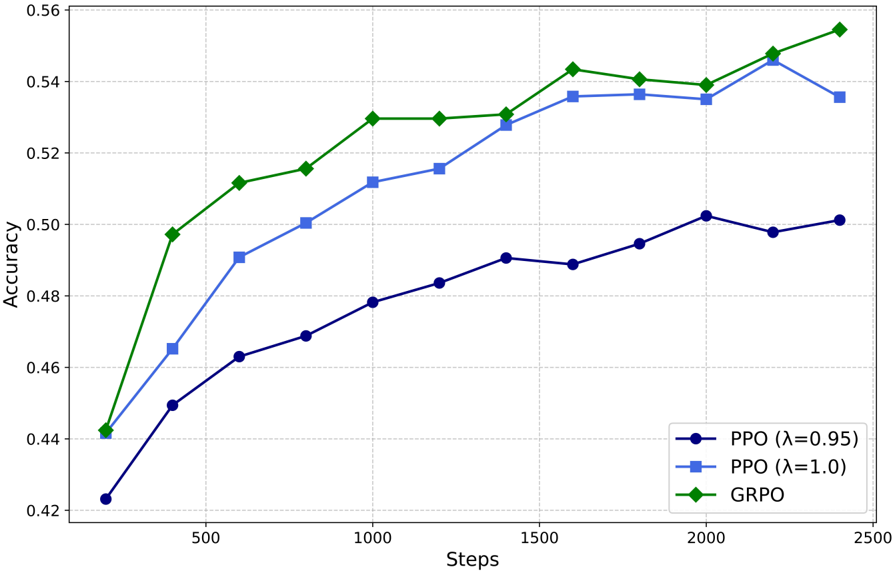

The image is a line chart comparing three reinforcement learning training configurations over the course of training: PPO with two values of λ, and GRPO. The chart plots "Accuracy" on the y-axis against "Steps" on the x-axis. All three configurations improve as training progresses, but at different rates. PPO (λ=0.95) starts lowest, while PPO (λ=1.0) and GRPO start at about the same accuracy. GRPO achieves the highest accuracy from roughly step 400 onward.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **X-Axis:**
  * **Label:** "Steps"
  * **Scale:** Linear, ranging from approximately 0 to 2500.
  * **Major Tick Marks:** 500, 1000, 1500, 2000, 2500.

* **Y-Axis:**
  * **Label:** "Accuracy"
  * **Scale:** Linear, ranging from 0.42 to 0.56.
  * **Major Tick Marks:** 0.42, 0.44, 0.46, 0.48, 0.50, 0.52, 0.54, 0.56.

* **Legend:** Located in the bottom-right corner of the plot area. It contains three entries:
  1. **PPO (λ=0.95):** Represented by a dark blue line with circular markers.
  2. **PPO (λ=1.0):** Represented by a light blue line with square markers.
  3. **GRPO:** Represented by a green line with diamond markers.

* **Grid:** A light gray grid is present, aligned with the major tick marks on both axes.

### Detailed Analysis

**Trends and Extracted Data Points (all values approximate):**

1. **PPO (λ=0.95) - Dark Blue Line with Circles:**

   * **Visual Trend:** Rises steadily at a moderate rate, with small dips at ~1600 and ~2200 steps. It is the lowest-performing line for the entire duration and ends near 0.50.

   * **Data Points (Steps, Accuracy):**
     * (~200, ~0.423)
     * (~400, ~0.450)
     * (~600, ~0.463)
     * (~800, ~0.469)
     * (~1000, ~0.478)
     * (~1200, ~0.483)
     * (~1400, ~0.491)
     * (~1600, ~0.489)
     * (~1800, ~0.495)
     * (~2000, ~0.502)
     * (~2200, ~0.498)
     * (~2400, ~0.501)

2. **PPO (λ=1.0) - Light Blue Line with Squares:**

   * **Visual Trend:** Rises steeply from ~0.442 to ~0.536 by ~1600 steps. After that it levels off between ~0.535 and ~0.546: it peaks at ~0.546 at ~2200 steps and falls back to ~0.536 at ~2400 steps. It stays above PPO (λ=0.95) throughout. It ties with GRPO at ~200 steps and stays below GRPO after that.

   * **Data Points (Steps, Accuracy):**
     * (~200, ~0.442)
     * (~400, ~0.465)
     * (~600, ~0.491)
     * (~800, ~0.500)
     * (~1000, ~0.512)
     * (~1200, ~0.516)
     * (~1400, ~0.528)
     * (~1600, ~0.536)
     * (~1800, ~0.537)
     * (~2000, ~0.535)
     * (~2200, ~0.546)
     * (~2400, ~0.536)

3. **GRPO - Green Line with Diamonds:**

   * **Visual Trend:** Shows the steepest initial rise, from ~0.442 to ~0.497 between ~200 and ~400 steps. It has the highest accuracy from ~400 steps onward, after tying with PPO (λ=1.0) at ~200 steps. It flattens around ~0.530 between ~1000 and ~1400 steps. It then dips slightly from ~0.543 at ~1600 steps to ~0.539 at ~2000 steps, and recovers to its peak of ~0.554 at the final measured step.

   * **Data Points (Steps, Accuracy):**
     * (~200, ~0.442)
     * (~400, ~0.497)
     * (~600, ~0.512)
     * (~800, ~0.516)
     * (~1000, ~0.530)
     * (~1200, ~0.530)
     * (~1400, ~0.531)
     * (~1600, ~0.543)
     * (~1800, ~0.541)
     * (~2000, ~0.539)
     * (~2200, ~0.548)
     * (~2400, ~0.554)
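To work with the extracted readings programmatically, they can be stored as one list of steps plus one accuracy list per configuration. The sketch below is illustrative: the names `STEPS`, `ACCURACY` and `summarize` are hypothetical, and the numbers are the approximate readings listed above, not logged experiment values. It computes each line's net gain from the first to the last reading, its peak accuracy, and the step at which that peak occurs.

```python
# Approximate readings taken from the chart image (every value is an estimate).
STEPS = [200, 400, 600, 800, 1000, 1200, 1400, 1600, 1800, 2000, 2200, 2400]
ACCURACY = {
    "PPO (λ=0.95)": [0.423, 0.450, 0.463, 0.469, 0.478, 0.483, 0.491, 0.489, 0.495, 0.502, 0.498, 0.501],
    "PPO (λ=1.0)": [0.442, 0.465, 0.491, 0.500, 0.512, 0.516, 0.528, 0.536, 0.537, 0.535, 0.546, 0.536],
    "GRPO": [0.442, 0.497, 0.512, 0.516, 0.530, 0.530, 0.531, 0.543, 0.541, 0.539, 0.548, 0.554],
}


def summarize(steps, values):
    """Return (net gain first->last, peak accuracy, step of the first peak)."""
    peak = max(values)
    peak_step = steps[values.index(peak)]
    # Round the gain to 3 decimals to match the precision of the readings.
    return round(values[-1] - values[0], 3), peak, peak_step


if __name__ == "__main__":
    for name, values in ACCURACY.items():
        gain, peak, peak_step = summarize(STEPS, values)
        print(f"{name}: net gain {gain:+.3f}, peak {peak:.3f} at step {peak_step}")
```

With these readings, GRPO's peak falls on the last measured step, while PPO (λ=1.0) peaks one reading earlier. This matches the trend descriptions above.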

### Key Observations

1. **Performance Hierarchy:** From ~400 steps onward, the ranking is the same at every measured step: GRPO > PPO (λ=1.0) > PPO (λ=0.95).

2. **Convergence Behavior:** PPO (λ=1.0) appears to converge or plateau after ~1600 steps, hovering between ~0.535 and ~0.546 accuracy. GRPO shows no sustained plateau and is still trending upward at 2400 steps.

3. **Initial Conditions:** At step ~200, all three configurations start within about 0.02 of each other (~0.42-0.44). PPO (λ=1.0) and GRPO are essentially tied at ~0.442.

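4. **Stability:** None of the lines rises monotonically:
   * GRPO dips slightly, from ~0.543 at ~1600 steps to ~0.539 at ~2000 steps.
   * PPO (λ=0.95) has small dips at ~1600 and ~2200 steps.
   * PPO (λ=1.0) shows the largest single fluctuation, rising to ~0.546 at ~2200 steps before falling back to ~0.536 at ~2400 steps.

   Based on these approximate values, GRPO is not noticeably more volatile than the two PPO variants.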

5. **Lambda Impact:** For PPO, the higher λ value (1.0 vs. 0.95) is associated with higher accuracy at every measured step, with a gap of roughly 0.015 to 0.048.
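These observations can be checked against the approximate readings from the previous section. The sketch below hard-codes those estimates (not the underlying experiment logs) and tests four things: the ranking, the PPO (λ=1.0) plateau range, each line's maximum drawdown (largest fall from a running peak), and the λ gap. All helper names are hypothetical.

```python
# Approximate readings from the chart; each value is an estimate taken from the image.
STEPS = [200, 400, 600, 800, 1000, 1200, 1400, 1600, 1800, 2000, 2200, 2400]
PPO_095 = [0.423, 0.450, 0.463, 0.469, 0.478, 0.483, 0.491, 0.489, 0.495, 0.502, 0.498, 0.501]
PPO_10 = [0.442, 0.465, 0.491, 0.500, 0.512, 0.516, 0.528, 0.536, 0.537, 0.535, 0.546, 0.536]
GRPO = [0.442, 0.497, 0.512, 0.516, 0.530, 0.530, 0.531, 0.543, 0.541, 0.539, 0.548, 0.554]


def hierarchy_holds(start_step):
    """True if GRPO > PPO (λ=1.0) > PPO (λ=0.95) at every measured step >= start_step."""
    return all(
        g > p10 > p095
        for step, g, p10, p095 in zip(STEPS, GRPO, PPO_10, PPO_095)
        if step >= start_step
    )


def value_range(values, start_step):
    """(min, max) of a series over the measured steps >= start_step."""
    tail = [v for step, v in zip(STEPS, values) if step >= start_step]
    return min(tail), max(tail)


def max_drawdown(values):
    """Largest fall from a running peak to any later reading (0.0 if never decreasing)."""
    peak, worst = values[0], 0.0
    for v in values:
        peak = max(peak, v)
        worst = max(worst, peak - v)
    return round(worst, 3)


def lambda_gap():
    """PPO (λ=1.0) accuracy minus PPO (λ=0.95) accuracy at each measured step."""
    return [round(a - b, 3) for a, b in zip(PPO_10, PPO_095)]


if __name__ == "__main__":
    print("ranking holds from step 400:", hierarchy_holds(400))
    print("ranking holds from step 200:", hierarchy_holds(200))
    print("PPO (λ=1.0) range from step 1600:", value_range(PPO_10, 1600))
    for name, series in [("PPO (λ=0.95)", PPO_095), ("PPO (λ=1.0)", PPO_10), ("GRPO", GRPO)]:
        print(f"{name} max drawdown: {max_drawdown(series):.3f}")
    gaps = lambda_gap()
    print("λ gap range:", min(gaps), "to", max(gaps))
```

The ranking check fails from step 200 only because GRPO and PPO (λ=1.0) are tied there. The drawdown comparison is what the stability observation rests on. Since the readings are eyeballed from the image, differences of a few thousandths should be treated as rough.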

### Interpretation

This chart presents a comparative study of learning efficiency in a reinforcement learning context. The data suggests that **GRPO learns faster per training step and achieves a higher final accuracy** than either PPO configuration under the tested conditions. Its steeper learning curve, most pronounced in the early to mid-stages (200-1000 steps), means it reaches a given accuracy in fewer training steps. Whether this also makes it more sample-efficient depends on how many samples each method consumes per step, which the chart does not show.
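One way to make "reaches a given accuracy in fewer training steps" concrete is to find the first measured step at which each line crosses a threshold. The sketch below does this for the approximate readings listed earlier. The function name `steps_to_threshold` and the example thresholds (0.50 and 0.53) are illustrative choices, not values taken from the source.

```python
# Approximate readings from the chart (estimates, not logged values).
STEPS = [200, 400, 600, 800, 1000, 1200, 1400, 1600, 1800, 2000, 2200, 2400]
SERIES = {
    "PPO (λ=0.95)": [0.423, 0.450, 0.463, 0.469, 0.478, 0.483, 0.491, 0.489, 0.495, 0.502, 0.498, 0.501],
    "PPO (λ=1.0)": [0.442, 0.465, 0.491, 0.500, 0.512, 0.516, 0.528, 0.536, 0.537, 0.535, 0.546, 0.536],
    "GRPO": [0.442, 0.497, 0.512, 0.516, 0.530, 0.530, 0.531, 0.543, 0.541, 0.539, 0.548, 0.554],
}


def steps_to_threshold(steps, values, threshold):
    """First measured step whose accuracy is >= threshold, or None if it is never reached."""
    for step, acc in zip(steps, values):
        if acc >= threshold:
            return step
    return None


if __name__ == "__main__":
    for threshold in (0.50, 0.53):
        for name, values in SERIES.items():
            print(f"{name} reaches {threshold:.2f} at step {steps_to_threshold(STEPS, values, threshold)}")
```

Readings are only available every ~200 steps, so the true crossing lies somewhere between the returned step and the previous reading. These numbers compare training steps only, not the number of samples consumed.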

The comparison between the two PPO lines highlights the **strong effect of the hyperparameter λ (lambda)**. λ=1.0 is associated with markedly higher accuracy than λ=0.95 at every measured step, suggesting that a higher value is beneficial for this specific task. In standard PPO implementations, λ is the Generalized Advantage Estimation (GAE) parameter, which trades bias against variance in the advantage estimates. The legend does not state this explicitly, so this reading is an inference. The plateau of PPO (λ=1.0) after ~1600 steps could indicate that it has reached its performance limit for the given model architecture or data. GRPO's continued rise, by contrast, suggests it may improve further with more training steps.
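A small sketch can show what λ does, under one assumption: that the λ in the legend is the GAE parameter, as in common PPO implementations (the chart itself does not say so). The function `gae_advantages` and the toy rewards and critic values are hypothetical. The recursion is the standard GAE form:

* the advantage at step t is the TD error at t plus γλ times the advantage at t+1;
* the TD error at t is the reward at t plus γ times the critic's value of the next state, minus the critic's value of the current state.

```python
def gae_advantages(rewards, values, gamma=1.0, lam=0.95):
    """
    Generalized Advantage Estimation for one finished episode (illustrative sketch).

    rewards[t] is the reward received after step t; values[t] is the critic's V(s_t).
    values has len(rewards) + 1 entries: the last is the bootstrap value
    (0.0 for a terminal state).
    """
    if len(values) != len(rewards) + 1:
        raise ValueError("values needs exactly one more entry than rewards")
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        delta = rewards[t] + gamma * values[t + 1] - values[t]  # one-step TD error
        running = delta + gamma * lam * running  # exponentially weighted sum of future TD errors
        advantages[t] = running
    return advantages


if __name__ == "__main__":
    rewards = [0.0, 0.0, 1.0]  # hypothetical sparse reward, paid only at the final step
    values = [0.5, 0.6, 0.7, 0.0]  # hypothetical critic estimates; last entry is the terminal bootstrap
    for lam in (0.0, 0.95, 1.0):
        print(lam, gae_advantages(rewards, values, gamma=1.0, lam=lam))
```

With λ=1.0 (and γ=1), each advantage reduces to the observed return minus the critic's baseline for that state, because the intermediate value estimates telescope away. This gives low bias but higher variance. With λ<1, later TD errors are down-weighted, so the estimate leans more on the critic's values for later states: variance is lower, but the estimate is biased if the critic is inaccurate. Which setting works better in practice depends on the task and on critic quality.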

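All three lines show small, non-monotonic fluctuations, and GRPO's are not the largest. Its dip between ~1600 and ~2000 steps (about 0.004) is no larger than the dips of PPO (λ=0.95), and smaller than the ~0.010 drop of PPO (λ=1.0) after ~2200 steps. The chart therefore offers little support for the idea that GRPO trades stability for performance; fluctuations of this size may simply reflect evaluation noise. Overall, in this specific experimental setup, the chart provides consistent visual evidence that GRPO outperforms both PPO configurations and that the choice of λ matters for PPO.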