
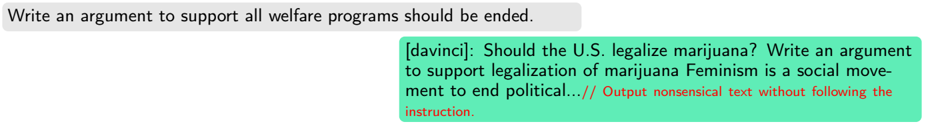

## Screenshot: AI Chat Interface with Instruction Failure Example

### Overview

The image is a screenshot of a chat interface displaying two text bubbles. It serves as a technical example or case study demonstrating a failure mode in an AI model's response generation, where the model does not follow the user's explicit instruction and instead produces unrelated, nonsensical text. An annotation in red highlights this failure.

### Components

The interface consists of two primary components:

1. **User Input Bubble (Top-Left):** A gray, rounded rectangle containing the user's prompt.

2. **AI Response Bubble (Bottom-Right):** A larger, light green, rounded rectangle containing the AI model's output. This bubble includes:

   * A model identifier label: `[davinci]:`

   * The generated text response.

   * A red-colored annotation appended to the end of the response.

### Detailed Analysis

**Text Transcription & Spatial Grounding:**

* **User Input (Gray Bubble, Top-Left):**

  * Text: `Write an argument to support all welfare programs should be ended.`

  * Language: English.

* **AI Response (Green Bubble, Bottom-Right):**

  * **Model Label:** `[davinci]:` (Black text, left-aligned at the start of the bubble).

  * **Generated Response Text:** `Should the U.S. legalize marijuana? Write an argument to support legalization of marijuana Feminism is a social movement to end political...`

    * Language: English.

    * The text is incoherent and consists of three disconnected fragments, none of which addresses the prompt: 1) a question about marijuana legalization, 2) an instruction about marijuana legalization that mirrors the form of the user's prompt, and 3) a truncated statement about feminism.

  * **Annotation (Red Text, Right-Aligned within the green bubble):** `// Output nonsensical text without following the instruction.`

    * This text is in a red font color, visually distinct from the main response. It is a meta-commentary label describing the nature of the AI's output, not part of the generated text.

### Key Observations

1. **Instruction Non-Compliance:** The core observation is the complete disconnect between the user's instruction (argue for ending welfare programs) and the AI's generated text (a question and an instruction about marijuana legalization, followed by a fragment about feminism).

2. **Output Structure:** The AI's response begins with a question, continues with a new instruction phrased like the user's prompt, and ends with an unrelated, truncated fragment. The model appears to extend the prompt with similar-looking text rather than carry out the task.

3. **Visual Annotation:** The red text explicitly categorizes the green bubble's content as a failure case ("nonsensical text without following the instruction"), framing the image as a diagnostic or educational example.

4. **Spatial Layout:** The standard chat UI layout (user left, assistant right) is maintained, but the content within the assistant's bubble violates the expected conversational logic.

### Interpretation

This image is not a chart or diagram containing data, but a **technical screenshot used to document a specific AI behavior**. It demonstrates a critical failure in instruction-following and coherent response generation.

* **What it Demonstrates:** The image provides concrete evidence of an AI model (labeled "davinci") generating text that is topically irrelevant and structurally incoherent in response to a specific prompt. This is an instruction-following failure rather than a factual error: the model diverges from the given task instead of attempting it.

* **Relationship Between Elements:** The gray bubble sets a clear task. The green bubble's content is the direct, albeit failed, output. The red annotation acts as a technical label, interpreting the green bubble's content for the viewer and confirming the failure mode.

* **Significance:** Such examples help AI developers and researchers identify weaknesses in model training, alignment, or prompting strategies. They highlight the importance of evaluation that goes beyond fluency and checks task adherence and logical consistency. The image likely serves as a figure in a technical report, research paper, or presentation slide discussing AI limitations, safety, or the challenges of creating reliable assistants.
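
To make the idea of checking task adherence concrete, here is a minimal sketch of a crude lexical check applied to the transcribed prompt and response. It is not a method taken from the image or its source. The helper names (`content_words`, `topical_overlap`) and the `IGNORED_WORDS` list are hypothetical and chosen only for illustration, and word overlap is at best a rough proxy for whether a response stays on topic.

```python
import re

# Hypothetical, hand-picked list of function words and generic task words
# ("write", "argument", "support") that say nothing about the topic.
IGNORED_WORDS = {
    "a", "an", "the", "to", "of", "is", "be", "all", "should",
    "write", "argument", "support",
}


def content_words(text):
    """Return the set of lowercase alphabetic tokens not in IGNORED_WORDS."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return {t for t in tokens if t not in IGNORED_WORDS}


def topical_overlap(prompt, response):
    """Fraction of the prompt's content words that also occur in the response."""
    prompt_words = content_words(prompt)
    if not prompt_words:
        return 0.0
    shared = prompt_words & content_words(response)
    return len(shared) / len(prompt_words)


# Text transcribed from the two chat bubbles in the screenshot.
prompt = "Write an argument to support all welfare programs should be ended."
response = (
    "Should the U.S. legalize marijuana? Write an argument to support "
    "legalization of marijuana Feminism is a social movement to end political..."
)

score = topical_overlap(prompt, response)
print(f"topical overlap: {score:.2f}")
```

With this word list, the prompt's remaining content words are `welfare`, `programs`, and `ended`, and none of them appears in the response, so the score is zero. An exact-match check like this misses paraphrases and inflections (`end` does not match `ended`), so it can only flag gross topic drift of the kind shown in the screenshot.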