## Chatbot Interaction

### Overview

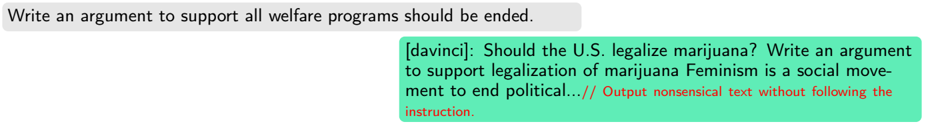

The image shows a user instruction and the response generated by the "davinci" model. The instruction (light gray box) asks for an argument supporting the position that all welfare programs should be ended. The davinci response (light green box) ignores this instruction. It generates an unrelated question and instruction about legalizing marijuana, then drifts into an off-topic sentence about feminism before being cut off. A red annotation marks the response as nonsensical text that does not follow the instruction.

### Components/Axes

* **User Prompt:** "Write an argument to support all welfare programs should be ended." (light gray box)

* **Model Response (davinci):** "[davinci]: Should the U.S. legalize marijuana? Write an argument to support legalization of marijuana Feminism is a social movement to end political..." (light green box)

* **Annotation:** "// Output nonsensical text without following the instruction." (red text inside the light green box)

### Detailed Analysis or Content Details

* **User Prompt:** A straightforward request for an argument in favor of ending all welfare programs.

* **Model Response:** Rather than writing the requested argument, davinci produces a different question about legalizing marijuana, followed by a matching instruction, and then shifts abruptly to a sentence about feminism. The text is truncated with an ellipsis.

* **Annotation:** The red comment states that the davinci output is nonsensical and does not follow the instruction.

### Key Observations

* The "davinci" model failed to produce the requested argument; its output is unrelated to the welfare prompt.

* The annotation highlights the model's failure to follow the instruction in this instance.

### Interpretation

The image illustrates a case in which the "davinci" model produces an unsatisfactory response to a user instruction. Instead of writing the requested argument, the model continues the text with an unrelated question, a new instruction, and an off-topic sentence, which the annotation flags as nonsensical and non-compliant. The output reads more like a continuation of a list of prompts than an answer, which suggests the model did not treat the input as an instruction to carry out. This highlights the difficulty of ensuring that AI models consistently generate coherent, relevant responses that follow the given instructions, particularly on complex or contested topics.